Stacking Explained: Advanced Ensemble Learning in Machine Learning

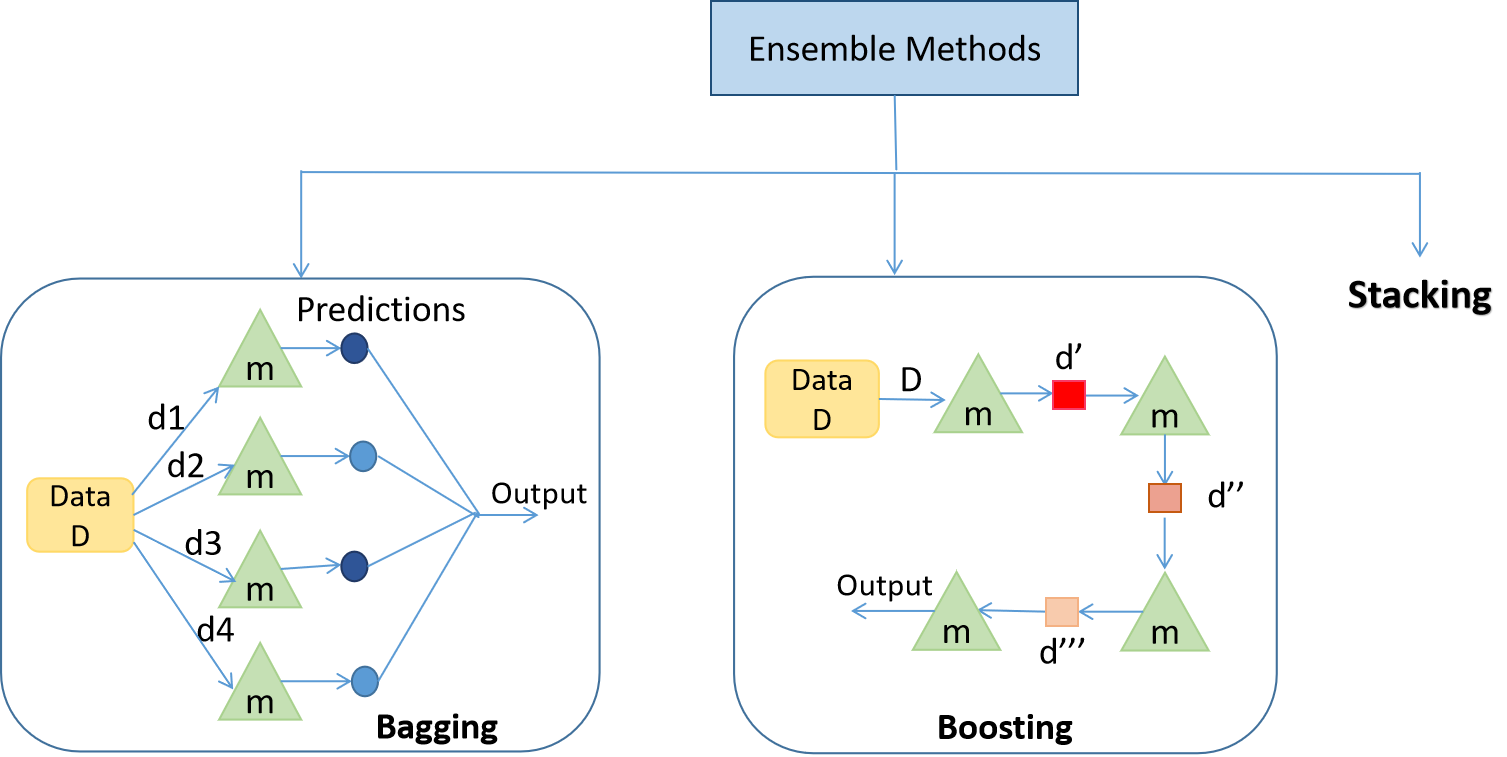

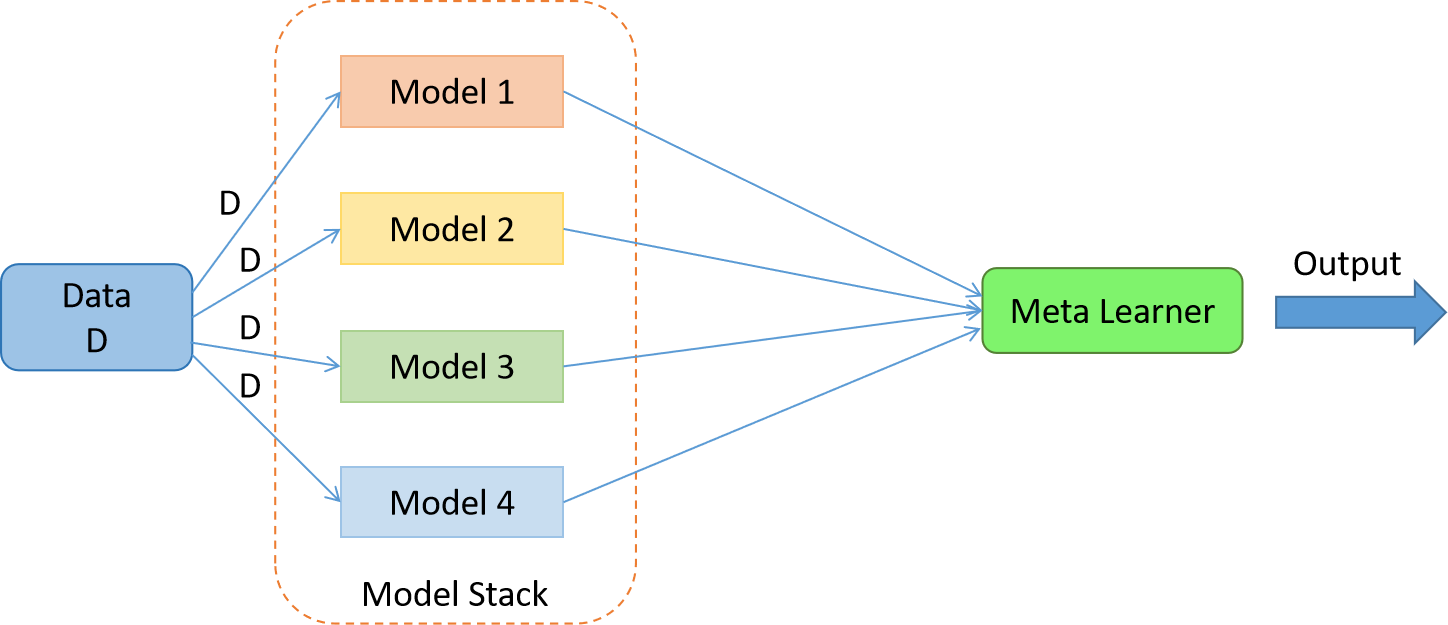

Stacking, or stacked generalization, is an ensemble learning technique designed to improve prediction accuracy by training a model to combine the predictions of multiple base models. Let’s explore the methodology, mathematical underpinnings, and practical considerations involved in stacking. In short, stacking takes the predictions of several models (for example, a decision tree, a k-nearest neighbors (k-NN) model, or a support vector machine (SVM)) and uses them as inputs to train a new model, which then makes the final predictions.
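One compact way to write this down is shown below. The notation is introduced here for illustration and is not taken from the article: M base models f_1, …, f_M, a meta-model g chosen from a family \mathcal{H}, a loss \ell, and n training examples split into cross-validation folds, where k(i) is the fold containing example i and f_m^{(-k)} is base model m trained on every fold except k.

```latex
\begin{aligned}
\hat{y}(x) &= g\bigl(f_1(x), \ldots, f_M(x)\bigr) \\
z_i &= \bigl(f_1^{(-k(i))}(x_i), \ldots, f_M^{(-k(i))}(x_i)\bigr), \quad i = 1, \ldots, n \\
g &= \arg\min_{h \in \mathcal{H}} \sum_{i=1}^{n} \ell\bigl(y_i, h(z_i)\bigr)
\end{aligned}
```

The meta-model is trained on the out-of-fold meta-features z_i rather than on predictions the base models made for their own training data; otherwise it would learn to trust base models that have simply memorized the training set. A common choice for \mathcal{H} is a linear model, h(z) = w_0 + \sum_{m=1}^{M} w_m z_m, and the base models are typically refit on all of the training data before computing f_m(x) at prediction time.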

Stacking is an ensemble learning technique in which the final model, known as the “stacked model” (or meta-model), combines the predictions of multiple base models. The goal is to create a stronger model by training different models and learning how to combine them. This article explores stacking from the basics to more advanced techniques, showing how it blends the strengths of diverse models into a single, more accurate predictor.

One paper introduces XStacking, an effective and inherently explainable framework that addresses the limited interpretability of stacked ensembles by integrating dynamic feature transformation with model-agnostic SHapley Additive exPlanations (SHAP).
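The base-model and meta-model workflow described above can be sketched in plain Python. This is a minimal, illustrative sketch under several assumptions: the toy data (y = 2x + noise) is synthetic, the two base models are a one-feature linear regression and a k-NN regressor (swapped in for brevity instead of a decision tree or SVM), the meta-model is a linear regression fit by least squares, and every function name (`make_data`, `fit_linear`, `fit_knn`, `out_of_fold_predictions`, `fit_meta`, `fit_stack`) is hypothetical rather than part of any library.

```python
import random

def make_data(n, seed=0):
    """Hypothetical toy regression data: y = 2x + Gaussian noise."""
    rng = random.Random(seed)
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2 * x + rng.gauss(0, 1) for x in xs]
    return xs, ys

def fit_linear(xs, ys):
    """Base model 1: one-feature ordinary least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx if sxx else 0.0
    intercept = my - slope * mx
    return lambda x: intercept + slope * x

def fit_knn(xs, ys, k=3):
    """Base model 2: k-NN regressor (mean target of the k closest points)."""
    points = list(zip(xs, ys))
    def predict(x):
        nearest = sorted(points, key=lambda p: abs(p[0] - x))[:k]
        return sum(y for _, y in nearest) / len(nearest)
    return predict

BASE_LEARNERS = [fit_linear, fit_knn]

def out_of_fold_predictions(xs, ys, n_folds=5):
    """Meta-features: row i holds predictions from base models that never saw row i."""
    n = len(xs)
    oof = [[0.0] * len(BASE_LEARNERS) for _ in range(n)]
    for fold in range(n_folds):
        held_out = [i for i in range(n) if i % n_folds == fold]
        train_x = [xs[i] for i in range(n) if i % n_folds != fold]
        train_y = [ys[i] for i in range(n) if i % n_folds != fold]
        for j, fit in enumerate(BASE_LEARNERS):
            model = fit(train_x, train_y)
            for i in held_out:
                oof[i][j] = model(xs[i])
    return oof

def solve(a, b):
    """Solve the square system a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_meta(rows, ys, ridge=1e-6):
    """Meta-model: linear regression (intercept + one weight per base model),
    fit via the normal equations with a tiny ridge term for numerical stability."""
    design = [[1.0] + list(r) for r in rows]
    p = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) + (ridge if i == j else 0.0)
            for j in range(p)] for i in range(p)]
    xty = [sum(d[i] * y for d, y in zip(design, ys)) for i in range(p)]
    return solve(xtx, xty)

def fit_stack(xs, ys, n_folds=5):
    """Fit the meta-model on out-of-fold predictions, then refit the base models."""
    weights = fit_meta(out_of_fold_predictions(xs, ys, n_folds), ys)
    # Refit every base model on all training data for use at prediction time.
    bases = [fit(xs, ys) for fit in BASE_LEARNERS]
    def predict(x):
        features = [1.0] + [model(x) for model in bases]
        return sum(w * f for w, f in zip(weights, features))
    return predict, weights

xs, ys = make_data(100)
model, weights = fit_stack(xs, ys)
print("meta weights [intercept, linear, knn]:", [round(w, 3) for w in weights])
print("prediction at x=5:", round(model(5.0), 3))
```

The key design choice is that `fit_meta` only ever sees out-of-fold predictions; fitting it on in-sample base predictions would reward the k-NN model for reproducing training targets it had already memorized.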

Is stacking a type of ensemble method, or are the two different approaches entirely? Stacking is one kind of ensemble method: like bagging and boosting, it combines several models, but instead of merging their outputs with a fixed rule such as averaging or voting, it trains a meta-model to learn how to combine them. This guide clarifies the relationship between stacking and other ensemble methods, explores their characteristics, and offers practical guidance on when to use each approach.

Stacking is a strong ensemble learning strategy that combines the predictions of numerous base models to produce a final prediction with better performance. It is also…

Ensemble machine learning (EML) techniques, especially stacking, have been shown to improve predictive performance by combining multiple base models. However, they are often criticized for their lack of interpretability.

Ensemble stacking is a versatile and effective approach for improving the accuracy of machine learning and deep learning models. Combining the predictions of different models through stacking can lead to noticeably better outcomes than using any individual model, especially when the base models make different kinds of errors.
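To illustrate how stacking differs from a fixed-rule ensemble such as simple averaging, here is a small sketch using simulated stand-ins for out-of-fold predictions. Everything in it is hypothetical and chosen for illustration: model A is unbiased with low noise, model B is systematically biased, and the meta-model is a two-input linear regression solved in closed form. Averaging gives both models equal say regardless of quality, while the stacked meta-model learns how much to trust each one and can partly correct B's bias.

```python
import random

def simulate(n=200, seed=0):
    """Simulated out-of-fold predictions from two hypothetical base models."""
    rng = random.Random(seed)
    preds, ys = [], []
    for _ in range(n):
        y = rng.uniform(0, 10)
        a = y + rng.gauss(0, 0.5)            # model A: unbiased, low noise
        b = 0.5 * y + 3 + rng.gauss(0, 0.5)  # model B: systematically biased
        preds.append((a, b))
        ys.append(y)
    return preds, ys

def mean(values):
    return sum(values) / len(values)

def fit_linear_meta(preds, ys):
    """Least-squares meta-model y ~ w0 + wa*a + wb*b, solved in closed form
    from the centered 2x2 normal equations."""
    a = [p[0] for p in preds]
    b = [p[1] for p in preds]
    ma, mb, my = mean(a), mean(b), mean(ys)
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((x - mb) ** 2 for x in b)
    sab = sum((x - ma) * (z - mb) for x, z in zip(a, b))
    say = sum((x - ma) * (y - my) for x, y in zip(a, ys))
    sby = sum((z - mb) * (y - my) for z, y in zip(b, ys))
    det = saa * sbb - sab * sab
    wa = (sbb * say - sab * sby) / det
    wb = (saa * sby - sab * say) / det
    w0 = my - wa * ma - wb * mb
    return w0, wa, wb

def mse(predictions, ys):
    return mean([(p - y) ** 2 for p, y in zip(predictions, ys)])

train_preds, train_y = simulate(seed=0)
test_preds, test_y = simulate(seed=1)

# Fixed-rule ensemble: plain averaging of the two base predictions.
avg_mse = mse([(a + b) / 2 for a, b in test_preds], test_y)

# Stacking: a meta-model learns how to weight (and correct) each base model.
w0, wa, wb = fit_linear_meta(train_preds, train_y)
stack_mse = mse([w0 + wa * a + wb * b for a, b in test_preds], test_y)

print(f"averaging MSE: {avg_mse:.3f}")
print(f"stacking MSE:  {stack_mse:.3f}")
print(f"meta weights: w0={w0:.2f}, wa={wa:.2f}, wb={wb:.2f}")
```

With a linear meta-model, the learned weights offer one narrow view into the ensemble: they show how heavily the final prediction relies on each base model. They say nothing about how the input features drive the base models themselves, which is the kind of gap feature-attribution methods such as SHAP are meant to address.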
