Stable Diffusion Video

Stable Video Diffusion is Stability AI's first open generative AI video model, built on the Stable Diffusion image model. It turns a static image into a short, high-resolution video clip; to start from a text prompt, first generate the image with a text-to-image model. Stability AI positions it as a way for marketers, educators and storytellers to create cinematic content in minutes.

Stable Video Diffusion (SVD) is an image-to-video generation model that can generate 2 to 4 second high-resolution (576x1024) videos conditioned on an input image. For research purposes, Stability AI recommends its generative-models GitHub repository (github.com/Stability-AI/generative-models), which implements the most popular diffusion frameworks for both training and inference. Stability AI's evaluation chart (not reproduced here) reports user preference for SVD image-to-video over GEN-2 and PikaLabs.

A separate notebook generates videos by interpolating the latent space of Stable Diffusion. You can either dream up different versions of the same prompt or morph between different prompts.
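The latent-space interpolation idea can be sketched in a few lines. The example below is a minimal illustration, not the notebook's actual code: it assumes spherical linear interpolation (slerp), a common choice for Gaussian latents, and uses plain Python lists as stand-ins for latent tensors. The names `slerp` and `interpolation_path` are hypothetical.

```python
import math
import random


def slerp(t, v0, v1, eps=1e-7):
    """Spherical linear interpolation between two flat latent vectors."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    # Cosine of the angle between the two vectors, clamped for acos.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    dot = max(-1.0, min(1.0, dot))
    theta = math.acos(dot)
    if math.sin(theta) < eps:
        # Vectors are (anti)parallel: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    w0 = math.sin((1 - t) * theta) / math.sin(theta)
    w1 = math.sin(t * theta) / math.sin(theta)
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


def interpolation_path(v0, v1, num_frames):
    """Return num_frames latents running from v0 to v1 inclusive."""
    if num_frames < 2:
        raise ValueError("num_frames must be at least 2")
    return [slerp(i / (num_frames - 1), v0, v1) for i in range(num_frames)]


# Toy example: two random 16-dimensional "latents" (real latents are far larger).
rng = random.Random(0)
dim = 16
start = [rng.gauss(0, 1) for _ in range(dim)]
end = [rng.gauss(0, 1) for _ in range(dim)]
path = interpolation_path(start, end, num_frames=5)
```

In a real pipeline, each latent in `path` would be passed through the diffusion model to produce one video frame. Using a different starting latent per seed with the same prompt gives the "different versions of the same prompt" effect; interpolating the prompt embeddings in the same way gives the morph between prompts.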

Stable Video Diffusion can be run on Google Colab, in ComfyUI, or locally on Windows, with parameters to customize the video output. Setting up an environment, installing the model, and running it is a good way to get familiar with generative AI models and how to tune them.

Other approaches build on Stable Diffusion itself. Three workflows animate videos using Stable Diffusion and ControlNet extensions: mov2mov, SD-CN-Animation, and Temporal Kit, with examples in photorealistic, anime, and tile-based styles. AnimateDiff turns a text prompt into a video using a Stable Diffusion model. You can think of it as a slight generalization of text-to-image: instead of generating an image, it generates a video.
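To make the "image versus video" generalization concrete, the sketch below compares the latent shape of a single image with that of a clip, and computes the clip length. It assumes the standard Stable Diffusion VAE (8x spatial downsampling, 4 latent channels). The frame counts (14 and 25), the frame rate (7 fps), and the frames-first axis order are illustrative assumptions, not values taken from this article; `ClipSpec` is a hypothetical helper.

```python
from dataclasses import dataclass

# Assumptions: standard Stable Diffusion VAE settings.
VAE_DOWNSAMPLE = 8
LATENT_CHANNELS = 4


@dataclass(frozen=True)
class ClipSpec:
    height: int
    width: int
    num_frames: int
    fps: int

    def __post_init__(self):
        # The VAE needs spatial sizes divisible by its downsampling factor.
        if self.height % VAE_DOWNSAMPLE or self.width % VAE_DOWNSAMPLE:
            raise ValueError("height and width must be multiples of 8")

    def image_latent_shape(self):
        """Latent shape of one frame: (channels, height/8, width/8)."""
        return (
            LATENT_CHANNELS,
            self.height // VAE_DOWNSAMPLE,
            self.width // VAE_DOWNSAMPLE,
        )

    def video_latent_shape(self):
        """A video adds a leading frame axis to the image latent shape."""
        return (self.num_frames, *self.image_latent_shape())

    def duration_seconds(self):
        return self.num_frames / self.fps


# 576x1024 matches the resolution quoted for SVD; frame counts are assumed.
short_clip = ClipSpec(height=576, width=1024, num_frames=14, fps=7)
long_clip = ClipSpec(height=576, width=1024, num_frames=25, fps=7)

print(short_clip.image_latent_shape())
print(long_clip.video_latent_shape())
print(short_clip.duration_seconds(), round(long_clip.duration_seconds(), 2))
```

Under these assumptions both clips fall inside the 2 to 4 second range quoted for SVD, and the only structural difference between the image and video cases is the extra frame axis that the model must keep temporally consistent.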

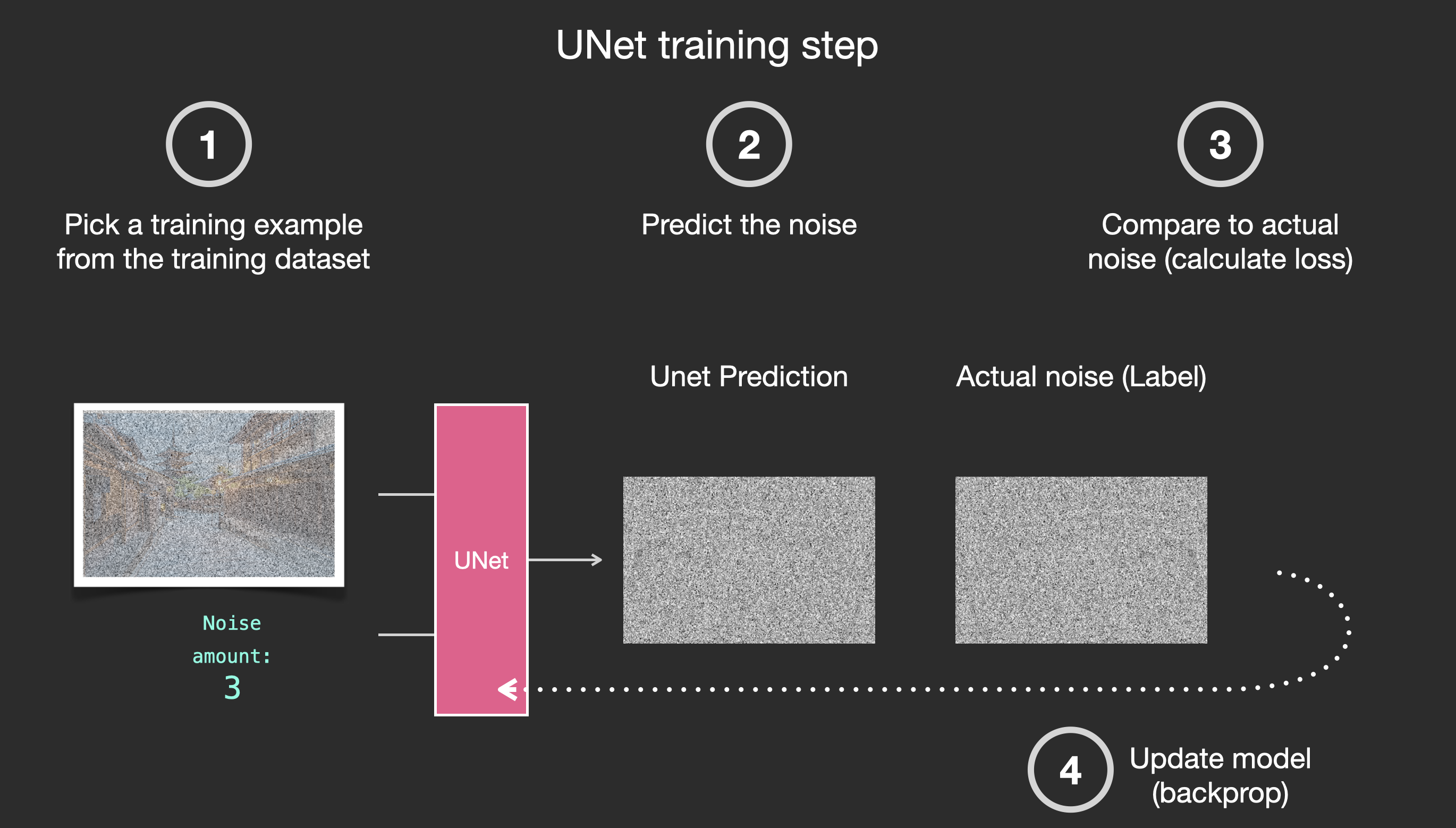

