Stable Diffusion ControlNet in Architecture

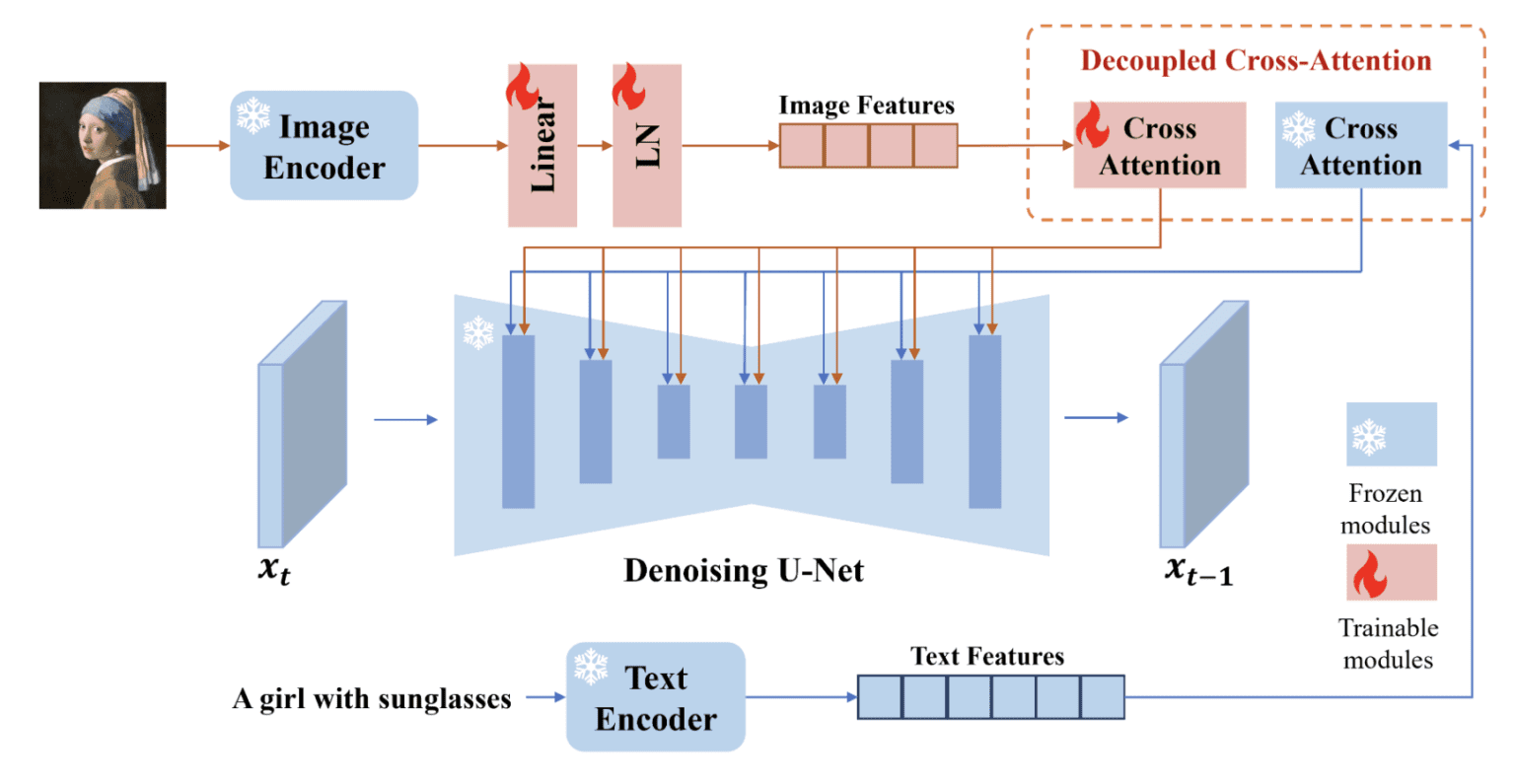

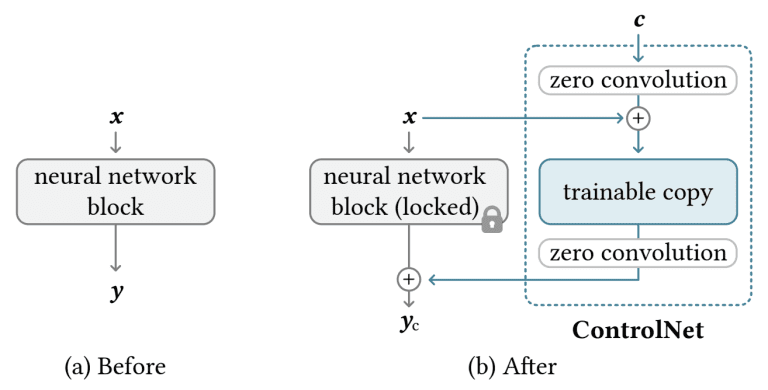

ControlNet works by attaching trainable network modules to various parts of the U-Net (the noise predictor) of the Stable Diffusion model. The weights of the Stable Diffusion model are locked so that they remain unchanged during training. The ControlNet authors summarize it as follows: "We present ControlNet, a neural network architecture to add spatial conditioning controls to large, pretrained text-to-image diffusion models."
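To make the "locked weights plus trainable modules" idea concrete, here is a toy sketch in plain Python. It is not the real U-Net: `ControlledBlock`, `linear`, and `add` are hypothetical names, a small dense layer stands in for a U-Net block, and only the output-side zero-initialized projection is modeled (the ControlNet paper calls these layers "zero convolutions"). The point it illustrates is that, before any training, the control branch contributes nothing, so the locked model's output is unchanged.

```python
import random


def linear(weights, x):
    """Apply a dense layer: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def add(a, b):
    """Element-wise sum of two equal-length vectors."""
    return [p + q for p, q in zip(a, b)]


class ControlledBlock:
    """Toy stand-in for one U-Net block with a ControlNet-style branch."""

    def __init__(self, dim, seed=0):
        rng = random.Random(seed)
        # Locked weights of the pretrained block: never updated in training.
        self.locked = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
        # Trainable copy, initialized from the locked weights.
        self.trainable = [row[:] for row in self.locked]
        # Zero-initialized projection that gates the control branch.
        self.zero_proj = [[0.0] * dim for _ in range(dim)]

    def forward(self, x, condition):
        base = linear(self.locked, x)                        # frozen path
        control = linear(self.trainable, add(x, condition))  # conditioned path
        return add(base, linear(self.zero_proj, control))    # gated sum
```

With `block = ControlledBlock(4)`, calling `block.forward(x, c)` returns exactly `linear(block.locked, x)` until `zero_proj` moves away from zero, which is what training the control branch would do.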

Stable Diffusion ControlNet for architects: this is the full 58-minute walkthrough (77k views) showing how I use ControlNet for controlled iterations in early-stage design.

A related work proposes a new controlling architecture, called ControlNet-XS, which its authors report avoids a problem they identify in ControlNet and can therefore focus on the task of learning to control. In contrast to ControlNet, their model needs only a fraction of the parameters and is about twice as fast during inference and training.

Turn architectural sketches into photorealistic renders using ControlNet with Stable Diffusion and the diffusers library in Python. ControlNets allow conditional inputs, such as edge maps, segmentation maps, and keypoints, to be fed into large diffusion models like Stable Diffusion.
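As a rough sketch of the sketch-to-render workflow with diffusers, something like the following could work. The model IDs (`lllyasviel/sd-controlnet-canny` and `stable-diffusion-v1-5/stable-diffusion-v1-5`), the step count, and the helper names `render_from_sketch` and `iteration_grid` are assumptions chosen for illustration, not settings taken from any of the guides mentioned here. The heavy imports sit inside the function so the module can be loaded without a GPU stack installed.

```python
from itertools import product

# Assumed Hugging Face model IDs; swap in whichever checkpoints you use.
BASE_ID = "stable-diffusion-v1-5/stable-diffusion-v1-5"
CONTROLNET_ID = "lllyasviel/sd-controlnet-canny"


def iteration_grid(seeds, conditioning_scales):
    """List one settings dict per (seed, scale) pair for controlled iterations."""
    return [
        {"seed": seed, "conditioning_scale": scale}
        for seed, scale in product(seeds, conditioning_scales)
    ]


def render_from_sketch(control_image, prompt, seed=0, conditioning_scale=1.0):
    """Generate one render whose structure follows `control_image` (a PIL image)."""
    # Imported lazily: torch and diffusers are only needed when rendering.
    import torch
    from diffusers import ControlNetModel, StableDiffusionControlNetPipeline

    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.float16 if device == "cuda" else torch.float32

    controlnet = ControlNetModel.from_pretrained(CONTROLNET_ID, torch_dtype=dtype)
    pipe = StableDiffusionControlNetPipeline.from_pretrained(
        BASE_ID, controlnet=controlnet, torch_dtype=dtype
    ).to(device)

    # A fixed seed keeps iterations comparable when only one setting changes.
    generator = torch.Generator(device=device).manual_seed(seed)
    result = pipe(
        prompt,
        image=control_image,
        num_inference_steps=30,
        controlnet_conditioning_scale=conditioning_scale,
        generator=generator,
    )
    return result.images[0]
```

For controlled iterations, you might loop over `iteration_grid([0, 1, 2], [0.6, 1.0])` and pass each dict as keyword arguments to `render_from_sketch(edge_image, "modern timber facade, photorealistic", **settings)`. In practice you would load the pipeline once and reuse it across iterations; it is rebuilt per call here only to keep the sketch short.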

This project demonstrates how to control Stable Diffusion with architectural sketches (edge-style line drawings), using ControlNet to generate building facade renderings. Discover how to use 3D model screenshots, txt2img, img2img, and inpainting to refine concepts and streamline early-stage design workflows.

ControlNet lets you guide Stable Diffusion's image generation with spatial conditioning inputs (hand-drawn sketches, Canny edge maps, depth images, or OpenPose skeletons) so the output follows your compositional intent rather than relying on prompt engineering alone. You feed a preprocessed control image alongside your text prompt, and the model generates an image that matches the structure.

Related research also investigates the size and architectural design of ControlNet [Zhang et al., 2023] for controlling the image generation process with Stable Diffusion-based models.
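To give a feel for what a "preprocessed control image" is, the sketch below turns a grayscale image into a binary edge map with white edges on a black background. It is a deliberately simplified stand-in for Canny: it only thresholds the Sobel gradient magnitude and skips the smoothing, non-maximum suppression, and hysteresis steps a real Canny detector performs. The function name `edge_map` and the default threshold are assumptions for illustration; in practice you would more likely use an image library's Canny implementation.

```python
def edge_map(gray, threshold=128):
    """Return a 0/255 edge map from a 2D list of grayscale values (0..255).

    Simplified Sobel-magnitude threshold, not a full Canny detector.
    Border pixels are left black because the 3x3 kernel does not fit there.
    """
    height, width = len(gray), len(gray[0])
    edges = [[0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Horizontal gradient: right column minus left column.
            gx = (gray[y - 1][x + 1] + 2 * gray[y][x + 1] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y][x - 1] - gray[y + 1][x - 1])
            # Vertical gradient: bottom row minus top row.
            gy = (gray[y + 1][x - 1] + 2 * gray[y + 1][x] + gray[y + 1][x + 1]
                  - gray[y - 1][x - 1] - 2 * gray[y - 1][x] - gray[y - 1][x + 1])
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                edges[y][x] = 255  # white edge on black background
    return edges
```

A sharp vertical boundary, such as a dark wall next to a bright window, shows up as a white vertical line in the output, while flat regions stay black.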

