Sparse Matrices Intro To Parallel Programming

In this chapter we will introduce different sparse matrix storage formats and their corresponding processing methods. These approaches employ a compaction technique but introduce a certain degree of irregularity.

Course description and objectives: sparse matrix systems in parallel environments are widely used in the scientific community and in industry. This course aims to introduce students from interdisciplinary engineering and science streams to the fundamentals of the sparse matrix ...
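As an illustration of what "compaction" and "irregularity" mean in practice, here is a minimal sketch of one common storage format, compressed sparse row (CSR), together with a matrix-vector product. The source does not say which formats the chapter covers, so the choice of CSR and the function names `coo_to_csr` and `csr_matvec` are assumptions made for this example.

```python
def coo_to_csr(n_rows, triples):
    """Convert (row, col, value) triples into CSR arrays.

    Returns (row_ptr, col_idx, values); row r occupies the slice
    row_ptr[r]:row_ptr[r + 1] of col_idx and values.
    """
    # Count the nonzeros in each row, then prefix-sum the counts.
    row_ptr = [0] * (n_rows + 1)
    for r, _, _ in triples:
        row_ptr[r + 1] += 1
    for r in range(n_rows):
        row_ptr[r + 1] += row_ptr[r]

    # Scatter each nonzero into the next free slot of its row.
    col_idx = [0] * len(triples)
    values = [0.0] * len(triples)
    next_slot = row_ptr[:-1]  # slicing copies, so row_ptr is untouched
    for r, c, v in triples:
        k = next_slot[r]
        col_idx[k] = c
        values[k] = v
        next_slot[r] += 1
    return row_ptr, col_idx, values


def csr_matvec(row_ptr, col_idx, values, x):
    """Compute y = A @ x for a CSR matrix A."""
    y = [0.0] * (len(row_ptr) - 1)
    for r in range(len(y)):
        # Irregularity: the trip count differs per row, and x is read
        # through the indirection col_idx[k].
        for k in range(row_ptr[r], row_ptr[r + 1]):
            y[r] += values[k] * x[col_idx[k]]
    return y


# The 3x3 matrix [[4, 0, 1], [0, 0, 2], [3, 5, 0]] as triples.
triples = [(0, 0, 4.0), (0, 2, 1.0), (1, 2, 2.0), (2, 0, 3.0), (2, 1, 5.0)]
row_ptr, col_idx, values = coo_to_csr(3, triples)
y = csr_matvec(row_ptr, col_idx, values, [1.0, 2.0, 3.0])
```

Only the five nonzeros are stored instead of nine entries. The price is the inner loop, whose length varies from row to row, which is the kind of irregularity that makes load balancing harder in a parallel setting.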

This video is part of an online course, Intro to Parallel Programming. Check out the course here: Udacity course CS344.

We first briefly review parallel processing concepts in this chapter. Two ways of achieving parallel processing are the shared-memory and distributed-memory approaches, which we describe.

The library includes routines for multiplying sparse matrices by dense matrices, solving block-diagonal systems with triangular diagonal entries, and preprocessing sparse matrices. It also contains additional routines for dense matrix operations.

The triple scheme is suitable for input to a parallel computer, since all information about a nonzero (its row index, column index, and value) is contained in its triple. The triples can be sent directly to the responsible processors.
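The point about triples being self-describing can be sketched as follows. This is a serial simulation only: the block-row ownership rule and the names `owner_of_row` and `distribute_triples` are assumptions for illustration, and a real distributed-memory code would send each bucket to its processor with a message-passing library rather than keep it in a list.

```python
def owner_of_row(row, n_rows, n_procs):
    """Hypothetical ownership rule: contiguous blocks of rows per processor."""
    block = -(-n_rows // n_procs)  # ceiling division
    return row // block


def distribute_triples(triples, n_rows, n_procs):
    """Route each (row, col, value) triple to the bucket of its owner.

    No other information is needed: the destination follows from the
    triple itself, so the triples can arrive in any order.
    """
    buckets = [[] for _ in range(n_procs)]
    for i, j, v in triples:
        buckets[owner_of_row(i, n_rows, n_procs)].append((i, j, v))
    return buckets


# Four nonzeros of a 4x4 matrix, in arbitrary order, split over 2 processors.
triples = [(0, 1, 2.0), (3, 0, 1.0), (2, 2, 5.0), (1, 1, 4.0)]
buckets = distribute_triples(triples, n_rows=4, n_procs=2)
```

Here processor 0 receives the nonzeros of rows 0 and 1, and processor 1 those of rows 2 and 3. Other ownership rules (by column, or by a 2D block) would only change `owner_of_row`.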

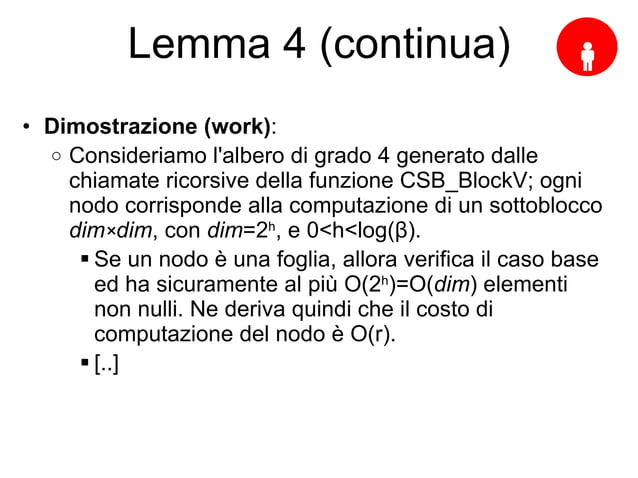

We used Sparse SUMMA as a building block to design and implement scalable parallel routines for sparse matrix indexing (SpRef) and assignment (SpAsgn). These operations are important in the context of graph operations.

Consider the process of triangular factorization (Gaussian elimination) for the case of sparse matrices. Note: in general, pivoting is needed during factorization to ensure numerical stability.

After studying matrices and their applications throughout the book, this chapter introduces different matrix designs for sparse matrices, which improve performance in terms of both time and memory.
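To see why a sparse matrix-matrix multiplication routine can serve as a building block for sparse indexing, note that extracting the submatrix `A[rows, cols]` can be written as a product `R * A * Q`, where `R` and `Q` are selection matrices containing only ones. The sketch below is a serial illustration under that assumption; it is not the parallel Sparse SUMMA implementation, and the dictionary-based storage and the names `spgemm` and `spref` are chosen for this example only.

```python
from collections import defaultdict


def spgemm(a, b):
    """Multiply two sparse matrices stored as {(row, col): value} dicts."""
    # Group the nonzeros of b by row so each a[i, k] finds its partners.
    b_rows = defaultdict(list)
    for (k, j), v in b.items():
        b_rows[k].append((j, v))

    c = defaultdict(float)
    for (i, k), av in a.items():
        for j, bv in b_rows.get(k, ()):
            c[(i, j)] += av * bv
    return dict(c)


def spref(a, rows, cols):
    """Return a[rows, cols] computed as R * a * Q."""
    r = {(p, i): 1.0 for p, i in enumerate(rows)}  # row p of R selects row i
    q = {(j, p): 1.0 for p, j in enumerate(cols)}  # column p of Q selects column j
    return spgemm(spgemm(r, a), q)


# The 3x3 matrix [[4, 0, 1], [0, 0, 2], [3, 5, 0]].
a = {(0, 0): 4.0, (0, 2): 1.0, (1, 2): 2.0, (2, 0): 3.0, (2, 1): 5.0}
sub = spref(a, rows=[2, 0], cols=[0, 2])
```

The result holds the entries of rows 2 and 0 restricted to columns 0 and 2, renumbered from zero. Entries that are zero in the original matrix simply do not appear in the output dictionary.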
