Sparse Gaussian Process Variational Autoencoders

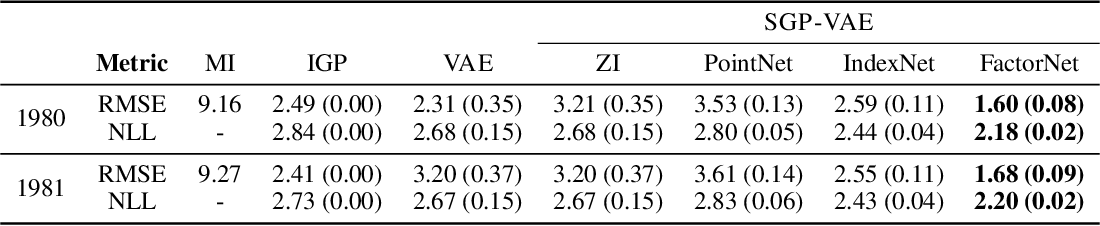

We address these shortcomings with the development of the sparse Gaussian process variational autoencoder (SGP-VAE), characterised by the use of partial inference networks for parameterising sparse GP approximations. We present a fully Bayesian autoencoder model that treats both local latent variables and global decoder parameters in a Bayesian fashion. This approach allows for flexible priors and posterior approximations while keeping the inference costs low.

We evaluate our model on a range of experiments focusing on dynamic representation learning and generative modeling, demonstrating the strong performance of our approach in comparison to existing methods that combine Gaussian processes and autoencoders. We evaluate the proposed method on both real and simulated data, demonstrating that the physics-informed GP-VAE (PIGPVAE) outperforms state-of-the-art methods in terms of diversity and accuracy of the generated samples, even under small-data conditions.

The SGP-VAE enables inference in multi-output sparse GPs on previously unobserved data with no additional training. We evaluate our approach qualitatively and quantitatively on a wide range of representation learning and generative modeling tasks and show that it consistently outperforms multiple alternatives relying on variational autoencoders. We propose a new scalable GP-VAE model that outperforms existing approaches in terms of runtime and memory footprint, is easy to implement, and allows for joint end-to-end optimization of all components.
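To make the phrase "sparse GP approximation" concrete, here is a minimal NumPy sketch of single-output sparse GP regression with inducing points. It is not the SGP-VAE: there is no encoder, decoder, or partial inference network, and the inducing locations are fixed rather than learned. It uses the standard variational predictive equations for a Gaussian likelihood. The function names (`rbf`, `sparse_gp_predict`, `exact_gp_mean`), the RBF kernel, and all hyperparameter values are assumptions chosen for illustration.

```python
import numpy as np


def rbf(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel between two 1-D input arrays."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / lengthscale**2)


def sparse_gp_predict(x, y, z, x_star, noise_var=0.01, jitter=1e-8):
    """Predictive mean and latent variance using M inducing inputs z.

    Only M x M systems are solved, so the cost is O(N M^2) instead of
    the O(N^3) of an exact GP on N training points.
    """
    Kuu = rbf(z, z) + jitter * np.eye(len(z))  # M x M
    Kuf = rbf(z, x)                            # M x N
    Ksu = rbf(x_star, z)                       # S x M

    # A = Kuu + Kuf Kfu / noise_var, the inverse of the usual Sigma matrix.
    A = Kuu + Kuf @ Kuf.T / noise_var
    mean = Ksu @ np.linalg.solve(A, Kuf @ y) / noise_var

    # var = k(x*, x*) - Ksu Kuu^{-1} Kus + Ksu A^{-1} Kus (diagonal only).
    prior_var = np.diag(rbf(x_star, x_star))
    nystrom = np.sum(Ksu * np.linalg.solve(Kuu, Ksu.T).T, axis=1)
    correction = np.sum(Ksu * np.linalg.solve(A, Ksu.T).T, axis=1)
    return mean, prior_var - nystrom + correction


def exact_gp_mean(x, y, x_star, noise_var=0.01):
    """Exact GP predictive mean, used here only as a reference."""
    K = rbf(x, x) + noise_var * np.eye(len(x))
    return rbf(x_star, x) @ np.linalg.solve(K, y)
```

When the inducing inputs `z` coincide with the training inputs `x`, the sparse predictive mean agrees with the exact GP mean up to the jitter term. With fewer inducing inputs than training points the result is an approximation whose quality depends on where `z` is placed.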


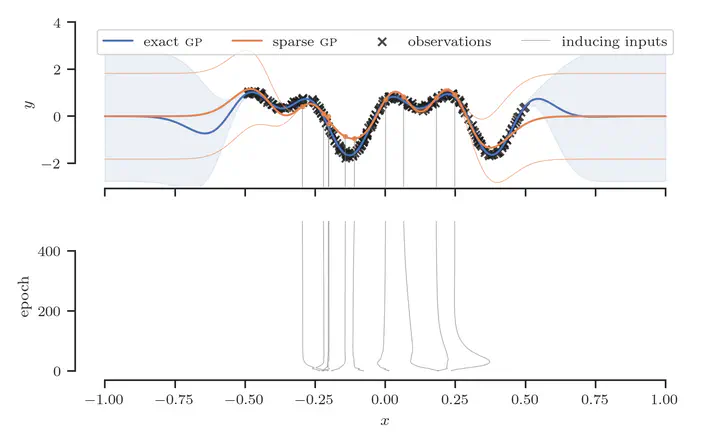


