Spark Shuffle Hash Join: A Spark SQL Interview Question

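Join us in this video to gain a comprehensive understanding of how shuffle hash join works and how to use it effectively in your Spark applications. Apache Spark offers several join methods, including broadcast joins, sort merge joins, and shuffle hash joins. Shuffle hash join (SHJ) stands out as a middle-ground approach. Like sort merge join, it shuffles both tables so that rows with the same join key end up in the same partition. Instead of sorting each partition, however, it builds an in-memory hash table from the smaller side and probes it with the other side. This is the same build-and-probe technique a broadcast hash join uses, but applied per partition rather than to a broadcast copy of the whole table.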
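The build-and-probe idea can be sketched in plain Python. This is a minimal single-process model, not Spark's implementation. The function names are hypothetical, Python's built-in `hash` stands in for Spark's hash partitioner, and only inner joins are handled. Both inputs are hash-partitioned on the join key (the shuffle). Each partition then builds a dictionary from the build side and probes it with the stream side.

```python
from collections import defaultdict


def hash_partition(rows, key, num_partitions):
    """Shuffle step (hypothetical helper): route each row to a partition
    chosen by hashing its join key. Python's hash() stands in for Spark's."""
    partitions = [[] for _ in range(num_partitions)]
    for row in rows:
        partitions[hash(row[key]) % num_partitions].append(row)
    return partitions


def shuffle_hash_join(build_rows, stream_rows, key, num_partitions=4):
    """Inner join sketch: shuffle both sides, then per partition build a hash
    table from the (smaller) build side and probe it with the stream side."""
    build_parts = hash_partition(build_rows, key, num_partitions)
    stream_parts = hash_partition(stream_rows, key, num_partitions)
    result = []
    # Matching keys always land in the same partition index on both sides.
    for build_part, stream_part in zip(build_parts, stream_parts):
        # Build phase: key -> list of build rows (keys may repeat).
        table = defaultdict(list)
        for b in build_part:
            table[b[key]].append(b)
        # Probe phase: one lookup per stream row; nothing is sorted.
        for s in stream_part:
            for b in table.get(s[key], []):
                result.append({**b, **s})  # stream columns win on name clashes
    return result


customers = [{"id": 1, "name": "Ann"}, {"id": 2, "name": "Bob"}]  # small build side
orders = [{"id": 1, "amount": 10}, {"id": 1, "amount": 5}, {"id": 3, "amount": 7}]
joined = shuffle_hash_join(customers, orders, "id")
print(joined)  # [{'id': 1, 'name': 'Ann', 'amount': 10}, {'id': 1, 'name': 'Ann', 'amount': 5}]
```

No partition is ever sorted. The price is that each partition's build side must fit in memory, which is why Spark only picks this strategy when one side is small enough.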

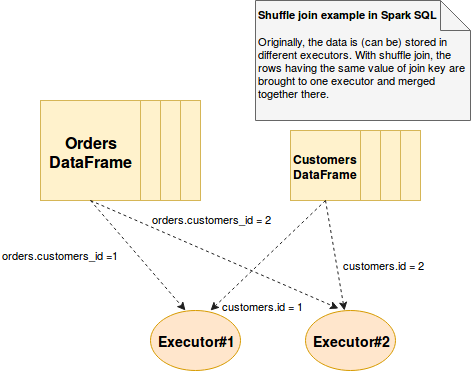

Interviewer (I) and candidate (C), a senior data engineer with 8 years of Spark experience, walk through a deceptively simple question that reveals deeper internals of the Spark shuffle. In a sort merge join, the shuffled data is sorted by join key and then merged with the rows of the other data set that share the same key. A shuffle hash join skips the sort and joins each partition through a hash table instead.

A question that often comes up runs: "I have failed to find an article that explains the inner workings of shuffle hash join and sort merge join. Can anyone please give the step-by-step algorithm for those two?" One answer points to good material on both shuffle hash join and sort merge join, and notes that since Spark 2.3 the default value of `spark.sql.join.preferSortMergeJoin` has been changed to `true`. Understanding how Spark's join strategies work is the key to using them to optimize join performance.
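For contrast, here is a similarly hypothetical sketch of the per-partition work in a sort merge join. It assumes both sides have already been shuffled by join key exactly as in a shuffle hash join, and it handles only inner joins. Each side is sorted by key, and two cursors advance through the sorted lists, pairing up runs of equal keys.

```python
def sort_merge_join(left_rows, right_rows, key):
    """Inner join of one already-shuffled partition (hypothetical helper):
    sort both sides by key, then merge them with two cursors."""
    left = sorted(left_rows, key=lambda r: r[key])
    right = sorted(right_rows, key=lambda r: r[key])
    result = []
    i = j = 0
    while i < len(left) and j < len(right):
        lk, rk = left[i][key], right[j][key]
        if lk < rk:
            i += 1  # left key has no match yet; advance left cursor
        elif lk > rk:
            j += 1  # right key has no match; advance right cursor
        else:
            # Find the run of rows on the right that share this key.
            j_end = j
            while j_end < len(right) and right[j_end][key] == lk:
                j_end += 1
            # Pair every left row with this key against that whole run.
            while i < len(left) and left[i][key] == lk:
                for r in right[j:j_end]:
                    result.append({**left[i], **r})
                i += 1
            j = j_end
    return result


customers = [{"id": 1, "name": "Ann"}, {"id": 2, "name": "Bob"}]
orders = [{"id": 1, "amount": 10}, {"id": 1, "amount": 5}, {"id": 3, "amount": 7}]
joined = sort_merge_join(customers, orders, "id")
print(joined)  # [{'id': 1, 'name': 'Ann', 'amount': 10}, {'id': 1, 'name': 'Ann', 'amount': 5}]
```

Sorting costs extra CPU, but Spark's sort can spill to disk, whereas a shuffle hash join's per-partition hash table has to fit in memory. That trade-off is one reason sort merge join is the safer default.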

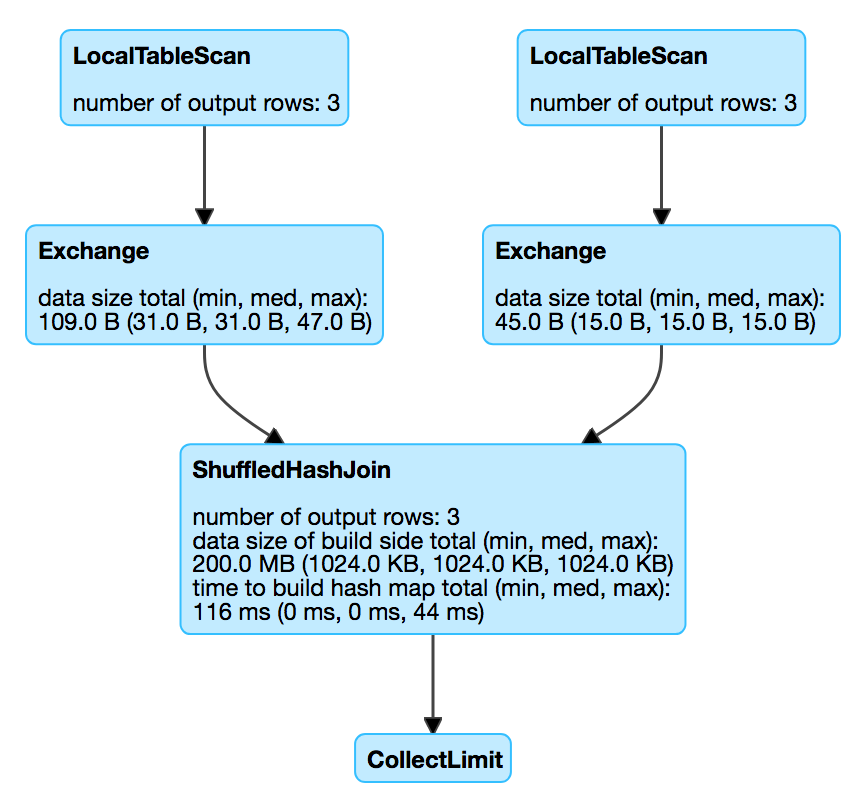

Shuffling is the process in which Spark redistributes data across partitions so that it can be joined:

1. Each row's join key is hashed.
2. Based on this hash, the row is sent to a specific shuffle partition, and therefore to the executor that processes it.

Why is this important? Without shuffling, matching rows that live on different nodes can't be compared and joined.

Common join strategies in Spark include sort merge join, broadcast join, and shuffle hash join; experiment with different strategies to find the most efficient one for your specific scenario. To qualify for a shuffle hash join, at least one of the join relations must be small enough to build a hash table from: its size must be smaller than the product of the broadcast threshold (`spark.sql.autoBroadcastJoinThreshold`) and the number of shuffle partitions (`spark.sql.shuffle.partitions`). The join strategy hints, namely `BROADCAST`, `MERGE`, `SHUFFLE_HASH`, and `SHUFFLE_REPLICATE_NL`, instruct Spark to use the hinted strategy on each specified relation when joining it with another relation.
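The size rule above is simple arithmetic. With the documented defaults (a 10 MB `spark.sql.autoBroadcastJoinThreshold` and 200 `spark.sql.shuffle.partitions`), the limit is 10,485,760 × 200 = 2,097,152,000 bytes, roughly 2 GB. The sketch below encodes that rule in a deliberately simplified strategy picker. The function names are hypothetical. The ordering (hint first, then broadcast, then shuffle hash only when `spark.sql.join.preferSortMergeJoin` is false, otherwise sort merge) is an assumed approximation of Spark's planner that ignores other checks such as join type and the relative size of the two sides. In a real job you would request the strategy with a hint instead, for example `df1.join(df2.hint("shuffle_hash"), "id")` in the DataFrame API or `/*+ SHUFFLE_HASH(t) */` in SQL.

```python
# Documented Spark SQL defaults (verify against your Spark version).
DEFAULT_AUTO_BROADCAST_THRESHOLD = 10 * 1024 * 1024  # spark.sql.autoBroadcastJoinThreshold
DEFAULT_SHUFFLE_PARTITIONS = 200                     # spark.sql.shuffle.partitions


def can_build_local_hash_map(size_in_bytes,
                             threshold=DEFAULT_AUTO_BROADCAST_THRESHOLD,
                             num_partitions=DEFAULT_SHUFFLE_PARTITIONS):
    """Size rule from the text: the build side must be smaller than
    broadcast threshold * number of shuffle partitions."""
    return size_in_bytes < threshold * num_partitions


def pick_strategy(build_size, hint=None, prefer_sort_merge_join=True,
                  threshold=DEFAULT_AUTO_BROADCAST_THRESHOLD):
    """Hypothetical, simplified planner model (an assumption, not Spark's code)."""
    if hint is not None:
        return hint  # a join strategy hint takes priority in this model
    if build_size <= threshold:
        return "broadcast"  # small enough to ship whole to every executor
    if not prefer_sort_merge_join and can_build_local_hash_map(build_size):
        return "shuffle_hash"
    return "merge"  # sort merge join as the fallback


print(pick_strategy(1_000_000_000))                                # merge
print(pick_strategy(1_000_000_000, prefer_sort_merge_join=False))  # shuffle_hash
```

Because `preferSortMergeJoin` defaults to `true`, a 1 GB build side falls through to sort merge join in this model unless the flag is switched off or a `shuffle_hash` hint is given.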
