Solution Expectation Maximization Machine Learning Studypool

The expectation maximization (EM) algorithm is an iterative optimization technique used to estimate unknown parameters in probabilistic models, particularly when the data are incomplete, noisy, or contain hidden (latent) variables.

The expectation maximization methodology was first presented in a general form by Dempster, Laird and Rubin in 1977. They define the EM algorithm as an iterative estimation algorithm that can derive maximum likelihood (ML) estimates in the presence of missing or hidden data (“incomplete data”).

Algorithms like EM may be used to recover the ground-truth regressors in the mixed linear regression problem. Recently, in \cite {pal2022learning,ghosh agnostic}, mixed linear regression has been studied in the agnostic setting, where no generative model on the data is assumed.

In statistics, an expectation–maximization (EM) algorithm is an iterative method to find (local) maximum likelihood or maximum a posteriori (MAP) estimates of parameters in statistical models, where the model depends on unobserved latent variables. [1]
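To make the "incomplete data" idea concrete, the quantities involved can be written out as follows. This is only a sketch, and the notation is assumed here rather than taken from the sources quoted above: y is the observed data, z the hidden data, x = (y, z) the complete data with density f(x|θ), and θ^(k) the current parameter estimate. For discrete hidden variables the integral becomes a sum.

```latex
\begin{aligned}
L(\theta) &= \log \int f(y, z \mid \theta)\, dz
  && \text{(observed-data log-likelihood)} \\
Q(\theta \mid \theta^{(k)}) &= \mathbb{E}_{z \sim p(z \mid y,\, \theta^{(k)})}\!\left[ \log f(y, z \mid \theta) \right]
  && \text{(E step)} \\
\theta^{(k+1)} &= \arg\max_{\theta}\; Q(\theta \mid \theta^{(k)})
  && \text{(M step)}
\end{aligned}
```

The integral inside the logarithm is what makes direct maximization of L(θ) awkward. Each M step instead maximizes an expected complete-data log-likelihood, and the resulting sequence satisfies L(θ^(k+1)) ≥ L(θ^(k)), which is why EM is guaranteed only a local maximum.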

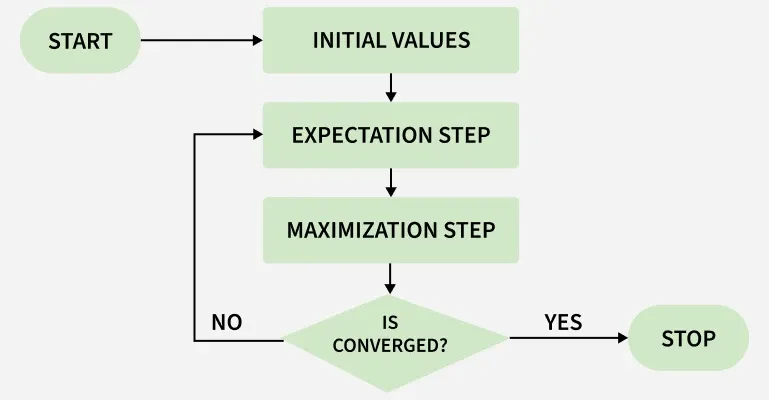

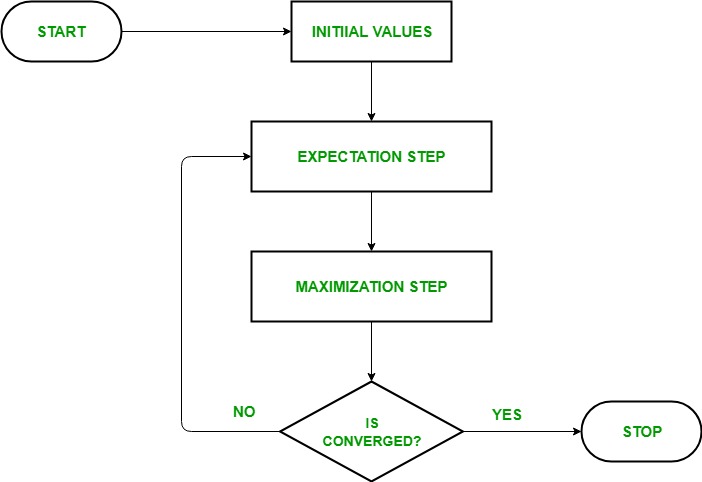

Ideally, if we had the distribution of the complete data x, the parameter θ could be found by maximizing f(x|θ). However, the complete data is only a virtual construct created to solve the problem: part of x is never observed, so f(x|θ) cannot be maximized directly.

EM consists of iterating between two steps (the "expectation step" and the "maximization step", or "E step" and "M step" for short) until convergence. Both steps can be viewed as maximizing a lower bound on the (log-)likelihood: the E step with respect to the distribution over the latent variables, the M step with respect to the parameters.

The EM algorithm is thus an approach for performing maximum likelihood estimation in the presence of latent variables. It first estimates the values of the latent variables, then optimizes the model, and repeats these two steps until convergence. The iteration starts from any initial value of θ, say θ^(1), and performs a sequence of steps, where the k-th step computes θ^(k+1) from θ^(k) by applying the expectation and the maximization step in sequence.
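The alternation between the two steps can be sketched on a small concrete model. The example below is an illustration under stated assumptions, not the method of any source quoted here: it assumes a two-component one-dimensional Gaussian mixture, where the hidden variable is the component each point came from, and the names `normal_pdf` and `em_two_gaussians` are hypothetical. The quartile-based starting point is an arbitrary choice of initial value.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of a 1-D Gaussian with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def em_two_gaussians(data, n_iter=200, tol=1e-9):
    """EM for a two-component 1-D Gaussian mixture (illustrative sketch).

    Returns (weights, means, variances, log_liks), where log_liks[k] is the
    observed-data log-likelihood at the parameters used in the k-th E step.
    """
    n = len(data)
    ordered = sorted(data)
    # Initial value theta^(1): lower/upper quartiles as means (an arbitrary
    # choice), the overall sample variance for both components, equal weights.
    means = [ordered[n // 4], ordered[(3 * n) // 4]]
    overall_mean = sum(data) / n
    overall_var = sum((x - overall_mean) ** 2 for x in data) / n
    variances = [overall_var, overall_var]
    weights = [0.5, 0.5]
    log_liks = []

    for _ in range(n_iter):
        # E step: responsibility resp[i][j] = P(z_i = j | x_i, theta^(k)).
        resp = []
        log_lik = 0.0
        for x in data:
            joint = [weights[j] * normal_pdf(x, means[j], variances[j]) for j in range(2)]
            total = joint[0] + joint[1]
            resp.append([joint[0] / total, joint[1] / total])
            log_lik += math.log(total)
        log_liks.append(log_lik)

        # M step: weighted maximum likelihood updates give theta^(k+1).
        for j in range(2):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            variances[j] = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj

        # Stop once the log-likelihood has effectively stopped increasing.
        if len(log_liks) > 1 and log_liks[-1] - log_liks[-2] < tol:
            break

    return weights, means, variances, log_liks


# Synthetic data: the component labels are the hidden variables EM never sees.
rng = random.Random(0)
data = [rng.gauss(-2.0, 0.5) for _ in range(300)] + [rng.gauss(3.0, 1.0) for _ in range(300)]
weights, means, variances, log_liks = em_two_gaussians(data)
print("weights:", weights)
print("means:", means)
print("variances:", variances)
```

The recorded `log_liks` should never decrease from one iteration to the next, which is a convenient sanity check when adapting the sketch. A different starting point can lead to a different local maximum, so in practice several initial values are often tried.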
