Shifted Window Transformers Random Walks

Introduction To Transformers Random Walks

To understand transformers theoretically, a number of recent works have investigated how well they learn from sequential data that follows certain classic statistical models. In vision architectures, the shifted window scheme enables efficient local self-attention and scalable propagation of global context.
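To make the efficiency claim concrete, the sketch below compares the approximate cost of global self-attention with window-based self-attention on an `h x w` feature map with `c` channels and window size `m`. The two formulas follow the complexity estimates commonly quoted for the Swin Transformer; the function names and the example sizes are illustrative assumptions, not taken from the text above.

```python
def global_msa_cost(h: int, w: int, c: int) -> int:
    """Approximate cost of global self-attention: 4*h*w*c^2 + 2*(h*w)^2*c.

    The second term is quadratic in the number of tokens h*w.
    """
    tokens = h * w
    return 4 * tokens * c * c + 2 * tokens * tokens * c


def window_msa_cost(h: int, w: int, c: int, m: int) -> int:
    """Approximate cost of attention restricted to m x m windows:
    4*h*w*c^2 + 2*m^2*h*w*c.

    For a fixed window size m, the cost is linear in the number of tokens.
    """
    tokens = h * w
    return 4 * tokens * c * c + 2 * m * m * tokens * c


if __name__ == "__main__":
    # Hypothetical sizes chosen only for illustration.
    h, w, c, m = 56, 56, 96, 7
    ratio = global_msa_cost(h, w, c) / window_msa_cost(h, w, c, m)
    print(f"global / windowed cost ratio: {ratio:.1f}")
```

When a single window covers the whole feature map (`m * m == h * w`), the two estimates coincide, which is a quick sanity check on the formulas.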

Github Naveenrajusg Image Classification Using Hierarchical Based

The Swin Transformer is a hierarchical transformer whose representation is computed with shifted windows. The shifted windowing scheme brings greater efficiency by limiting self-attention computation to non-overlapping local windows while also allowing for cross-window connections. Unlike standard vision transformers, which use global attention, the Swin Transformer changes the window partition between consecutive layers, so that tokens in neighboring windows can interact in the next layer. This captures both local and global image features efficiently.

A Swin Transformer block consists of a shifted-window-based multi-head self-attention (MSA) module, followed by a 2-layer MLP with a GELU nonlinearity in between. A LayerNorm (LN) layer is applied before each MSA module and each MLP, and a residual connection is applied after each module.
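The block layout described above (LN before each module, a residual connection after each) can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the reference implementation: the `attention` argument is a hypothetical placeholder for the window-based MSA module, the LayerNorm has no learned scale or shift, and all function names are invented for this example.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize one token vector to zero mean and unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def gelu(v):
    """Exact GELU, written with the Gaussian error function."""
    return 0.5 * v * (1.0 + math.erf(v / math.sqrt(2.0)))


def linear(x, weight, bias):
    """Compute W x + b for one token vector; `weight` is a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weight, bias)]


def mlp(x, w1, b1, w2, b2):
    """2-layer MLP with a GELU nonlinearity in between."""
    hidden = [gelu(v) for v in linear(x, w1, b1)]
    return linear(hidden, w2, b2)


def swin_block(tokens, attention, mlp_params):
    """Pre-norm residual block: x + attention(LN(x)), then x + MLP(LN(x)).

    `attention` is a stand-in callable (assumption) that maps a list of
    token vectors to a list of token vectors of the same shape.
    """
    attended = attention([layer_norm(t) for t in tokens])
    # First residual connection, around the attention module.
    tokens = [[a + b for a, b in zip(t, s)] for t, s in zip(tokens, attended)]
    out = []
    for t in tokens:
        update = mlp(layer_norm(t), *mlp_params)
        # Second residual connection, around the MLP.
        out.append([a + b for a, b in zip(t, update)])
    return out
```

Because both modules sit on residual branches, a block whose attention and MLP output zero leaves its input unchanged, which is a convenient property to check when wiring up a real implementation.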

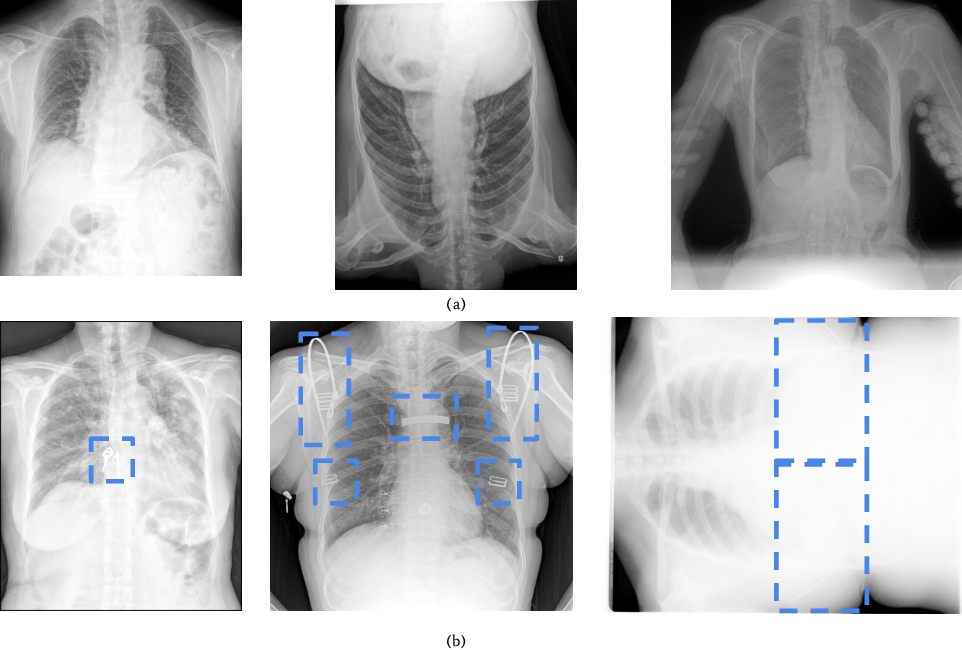

Shifted Windows Transformers For Medical Image Quality Assessment

One related paper proposes a multi-channel calibrated transformer with shifted windows (MCSwin-T), which computes self-attention within each non-overlapping window to model the relations between the sequences of split patches. The test batch contains exactly 1000 randomly selected images from each class. The training batches contain the remaining images in random order, but some training batches may contain more images from one class than another.

One of the experiments applies the proposed hierarchical design and the shifted window approach to the MLP-Mixer, referred to as Swin-Mixer. The resulting model is reported to have a better speed-accuracy trade-off.

The shifted window mechanism is the defining innovation of the Swin Transformer. It replaces global self-attention with local window attention while using a shifting strategy to maintain cross-window information flow.
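The effect of the shifting strategy can be illustrated with a small sketch that labels every position of a feature map with the index of the window it falls into, first for the regular partition and then for a partition displaced by a cyclic shift. The function name `window_ids` and the 8 x 8 grid are assumptions made for this example. Real implementations typically also mask attention between regions that become neighbors only because of the wrap-around; that mask is omitted here.

```python
def window_ids(height: int, width: int, window: int, shift: int = 0):
    """Return a height x width grid of window indices.

    shift=0 gives the regular non-overlapping partition. shift>0 cyclically
    moves the feature map up and to the left by `shift` positions before
    partitioning, so windows straddle the regular window borders.
    """
    if height % window or width % window:
        raise ValueError("height and width must be multiples of window")
    windows_per_row = width // window
    grid = []
    for r in range(height):
        rolled_r = (r - shift) % height  # row index after the cyclic shift
        row = []
        for c in range(width):
            rolled_c = (c - shift) % width  # column index after the shift
            row.append((rolled_r // window) * windows_per_row
                       + rolled_c // window)
        grid.append(row)
    return grid


if __name__ == "__main__":
    regular = window_ids(8, 8, window=4)
    shifted = window_ids(8, 8, window=4, shift=2)
    # Positions (3, 3) and (4, 4) sit on opposite sides of a window border.
    print(regular[3][3] == regular[4][4])  # different windows
    print(shifted[3][3] == shifted[4][4])  # same window after shifting
```

Alternating the two partitions between consecutive layers is what lets information cross window borders: two positions that cannot attend to each other under the regular partition share a window under the shifted one.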


