Share The Load With Distributed Copycat Training

Copycat can share the training load across multiple machines, with a familiar interface, so that distributing training feels just like distributing rendering with standard render farm applications such as OpenCue. PyTorch, a popular deep learning framework, provides robust support for distributed training. Alongside distributed training, the ability to save and load models in a distributed setting is crucial for checkpointing, model sharing, and resuming training.
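To make the checkpointing point concrete, here is a minimal, framework-free sketch of one common pattern: a single process (rank 0) writes the checkpoint, and every process reads the same file when resuming. It is not Copycat's or PyTorch's API. The names `save_checkpoint`, `load_checkpoint`, and `rank`, and the JSON file format, are assumptions chosen for illustration.

```python
import json
import os
import tempfile
from typing import Any, Dict


def save_checkpoint(state: Dict[str, Any], path: str, rank: int) -> bool:
    """Write the checkpoint from rank 0 only; all other ranks skip the write."""
    if rank != 0:
        return False
    directory = os.path.dirname(os.path.abspath(path))
    # Write to a temporary file first, then rename, so a crash mid-write
    # never leaves a half-written checkpoint at the final path.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)
    return True


def load_checkpoint(path: str) -> Dict[str, Any]:
    """Every rank loads the same file to resume from identical state."""
    with open(path) as f:
        return json.load(f)


# Hypothetical usage: four "ranks" simulated in a single process.
# A real multi-process job would also need a barrier between save and load.
ckpt_path = os.path.join(tempfile.mkdtemp(), "model.json")
state = {"step": 100, "weights": [0.5, -1.25]}
written = [save_checkpoint(state, ckpt_path, rank) for rank in range(4)]
restored = [load_checkpoint(ckpt_path) for _ in range(4)]
```

Writing from one rank avoids several processes racing to write the same file, and the rename step keeps the last good checkpoint intact if a write is interrupted.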

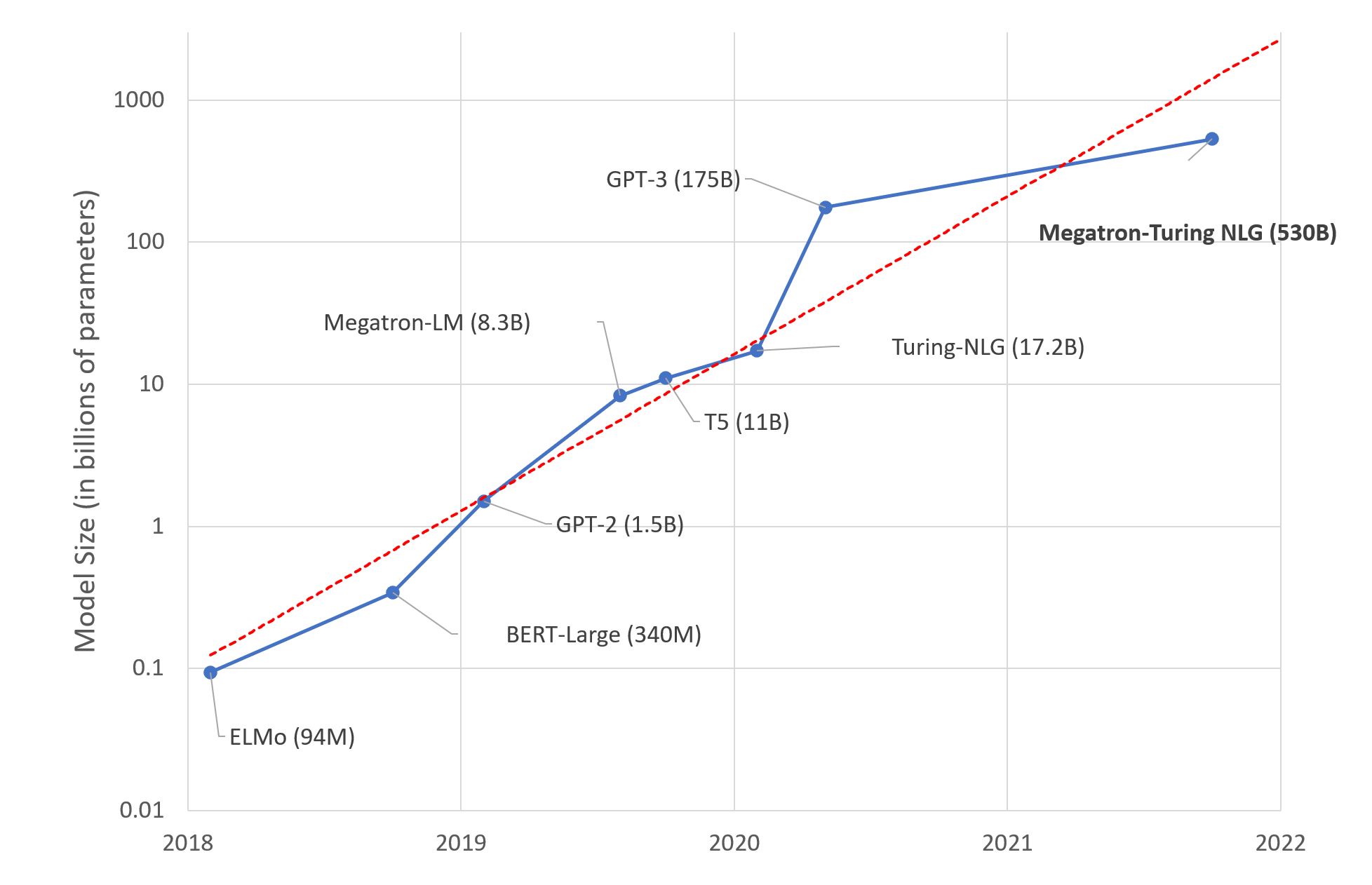

When doing distributed training, the efficiency with which you load data can often become critical, so it is worth making sure your tf.data pipelines run as fast as possible. Federated learning with transformers takes a different angle: it builds privacy-preserving distributed AI models without sharing raw data. An end-to-end example of distributed training with MultiWorkerMirroredStrategy in TensorFlow uses the MNIST dataset to train a simple CNN model. Distributing training jobs allows you to push past single-GPU memory and compute bottlenecks, speeding up the training of larger models (or even making it possible to train them in the first place) by training across many GPUs simultaneously.
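One widely used way to keep data loading from becoming the bottleneck is prefetching: batches are loaded in the background while the current one is being consumed. The sketch below illustrates the idea in plain Python with a bounded queue and a thread. It is not the tf.data implementation, and `prefetch`, `load_batches`, and `buffer_size` are hypothetical names used only here.

```python
import queue
import threading
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")
_DONE = object()  # sentinel marking the end of the stream


def prefetch(source: Iterable[T], buffer_size: int = 2) -> Iterator[T]:
    """Yield items from `source` while a background thread loads ahead.

    At most `buffer_size` items are held in memory at once.
    Error handling in the producer is omitted to keep the sketch short.
    """
    buf: queue.Queue = queue.Queue(maxsize=buffer_size)

    def producer() -> None:
        for item in source:
            buf.put(item)  # blocks when the buffer is full
        buf.put(_DONE)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buf.get()  # blocks until the next item is ready
        if item is _DONE:
            return
        yield item


def load_batches(n: int) -> Iterator[List[int]]:
    """Stand-in for an expensive loader (disk reads, decoding, augmentation)."""
    for i in range(n):
        yield [i, i + 1]


batches = list(prefetch(load_batches(5), buffer_size=2))
```

The consumer sees the batches in their original order; only the timing changes, because loading overlaps with whatever work the consumer does between batches.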

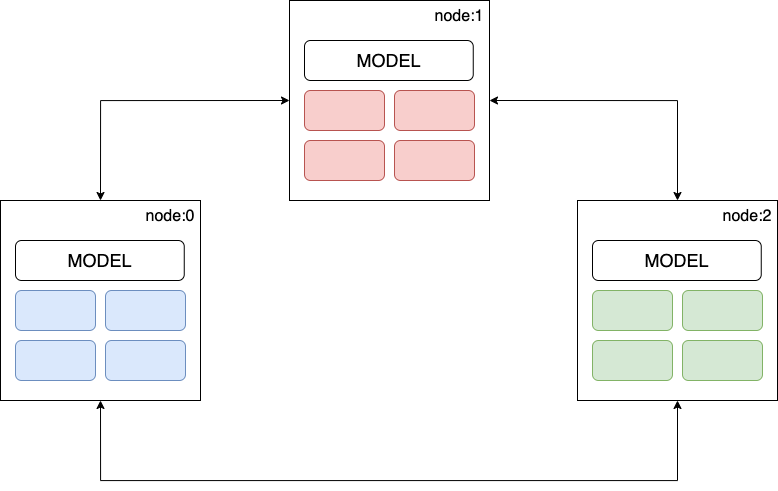

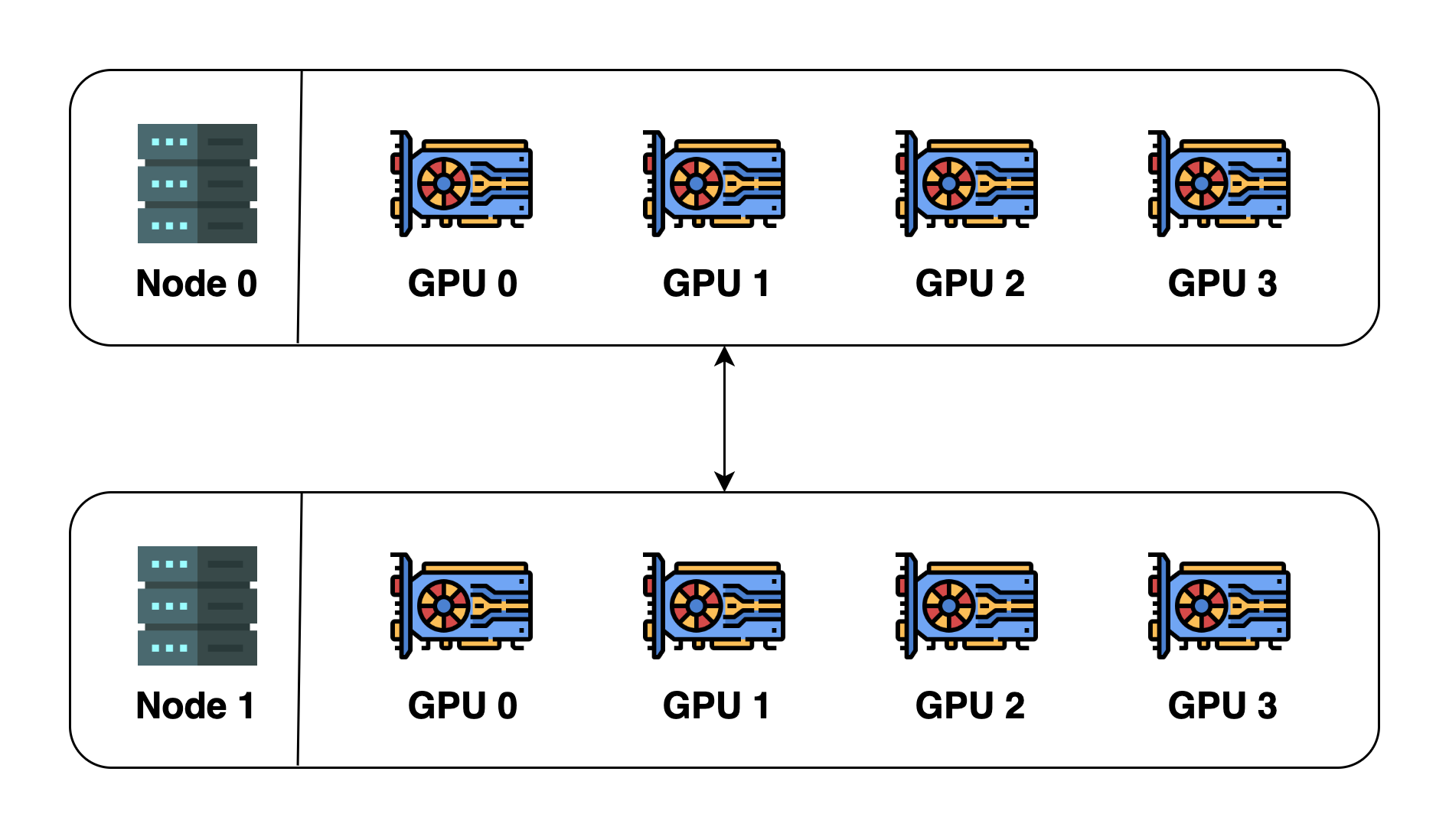

In this article, we will discuss distributed training with TensorFlow and how you can incorporate it into your AI workflows. To maximize performance on today's AI challenges, we'll cover best practices and practical tips for using TensorFlow's capabilities. Also covered are the setup process for training a transformer model across multiple GPUs on one or more compute nodes, the coordination between processes, and the data distribution strategy. Distributed training spreads AI model training across multiple GPUs or machines so you can train larger models faster; strategies include data parallelism, model parallelism, and pipeline parallelism. TensorFlow's tf.distribute API allows you to carry out distributed training using existing models and training code with minimal changes. For example, tf.distribute.MirroredStrategy performs in-graph replication with synchronous training on many GPUs on one machine.
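Synchronous data parallelism can be illustrated without any framework. In the sketch below, each simulated replica computes a gradient on its own shard of the data, the gradients are averaged (standing in for an all-reduce), and every replica applies the same update. This is a toy simulation under stated assumptions, not how TensorFlow implements MirroredStrategy. The model (`y_hat = w * x`), the data, and all function names are made up for illustration.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)


def shard(data: List[Sample], num_replicas: int) -> List[List[Sample]]:
    """Give replica r every num_replicas-th sample, starting at index r."""
    return [data[r::num_replicas] for r in range(num_replicas)]


def local_gradient(w: float, batch: List[Sample]) -> float:
    """Gradient of mean squared error for the model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def all_reduce_mean(values: List[float]) -> float:
    """Stand-in for all-reduce: every replica ends up with the mean gradient."""
    return sum(values) / len(values)


def train(data: List[Sample], num_replicas: int,
          lr: float = 0.01, steps: int = 200) -> float:
    w = 0.0  # all replicas start from, and keep, identical weights
    shards = shard(data, num_replicas)
    for _ in range(steps):
        grads = [local_gradient(w, s) for s in shards]  # parallel in practice
        w -= lr * all_reduce_mean(grads)  # same update on every replica
    return w


data = [(float(x), 3.0 * x) for x in range(1, 9)]  # samples of y = 3x
w_single = train(data, num_replicas=1)
w_multi = train(data, num_replicas=4)
```

Because the shards are equal in size, the averaged gradient matches the full-batch gradient, so the four-replica run learns the same weight as the single-replica run. The replicas only divide the work of computing it.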

