Serverless Machine Learning Inference Datachef

In our post on serverless architectures, "pause, think, and then redesign", we talked about the advantages of serverless architecture in general. Here we will focus on its use cases in machine learning inference. This post will review and compare the available options for building a serverless machine learning inference endpoint within AWS services. You can find the best one according to your…

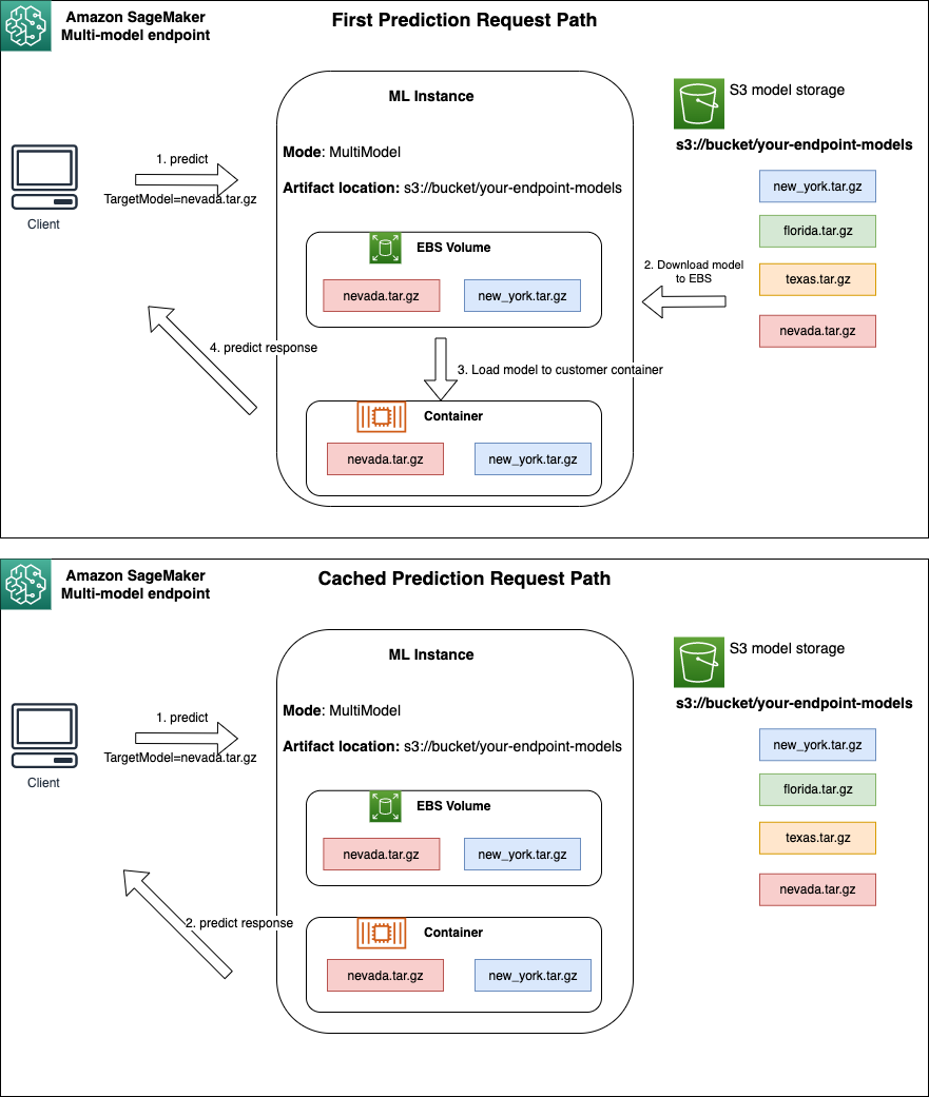

In this post, we will compare different options for serverless inference for your machine learning models within AWS.

Machine learning inference is the process of using a trained machine learning model to make predictions on new, unseen data. This process is critical for deploying machine learning models in real-world applications, where the model must provide timely and accurate predictions.

Serverless computing can be used for real-time machine learning (ML) prediction using serverless inference functions. Deploying an ML serverless inference function involves building a compute resource, deploying an ML model, and setting up the network infrastructure and the permissions needed to call the inference function. Serverless inference is an approach to using machine learning models that eliminates the need to provision or manage any underlying infrastructure while still enabling applications to access AI capabilities.
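To make the idea of an inference function concrete, here is a minimal sketch of a Lambda-style handler in Python. It assumes an API Gateway-like event whose `body` field holds a JSON string with a `features` list. The `LinearModel` class and its weights are hypothetical stand-ins for a real trained model, which would normally be loaded from a model artifact.

```python
import json


class LinearModel:
    """Hypothetical stand-in for a trained model: a fixed linear scorer."""

    def __init__(self, weights, bias):
        self.weights = weights
        self.bias = bias

    def predict(self, features):
        return sum(w * x for w, x in zip(self.weights, features)) + self.bias


# Created once at module scope so that warm invocations can reuse it
# instead of reloading the model on every request.
MODEL = LinearModel(weights=[0.5, -0.25], bias=1.0)


def handler(event, context):
    """Parse the request body, run the model, and return a JSON response."""
    try:
        payload = json.loads(event.get("body") or "{}")
        features = payload["features"]
        if len(features) != len(MODEL.weights):
            raise ValueError("unexpected number of features")
        prediction = MODEL.predict(features)
    except (KeyError, ValueError, TypeError) as exc:
        # Malformed JSON, a missing key, or a wrong input shape is a client error.
        return {"statusCode": 400, "body": json.dumps({"error": str(exc)})}
    return {"statusCode": 200, "body": json.dumps({"prediction": prediction})}
```

Keeping the model at module scope is a common choice because the platform may reuse the same execution environment across requests. The network setup and call permissions mentioned above live outside this code, in the deployment configuration.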

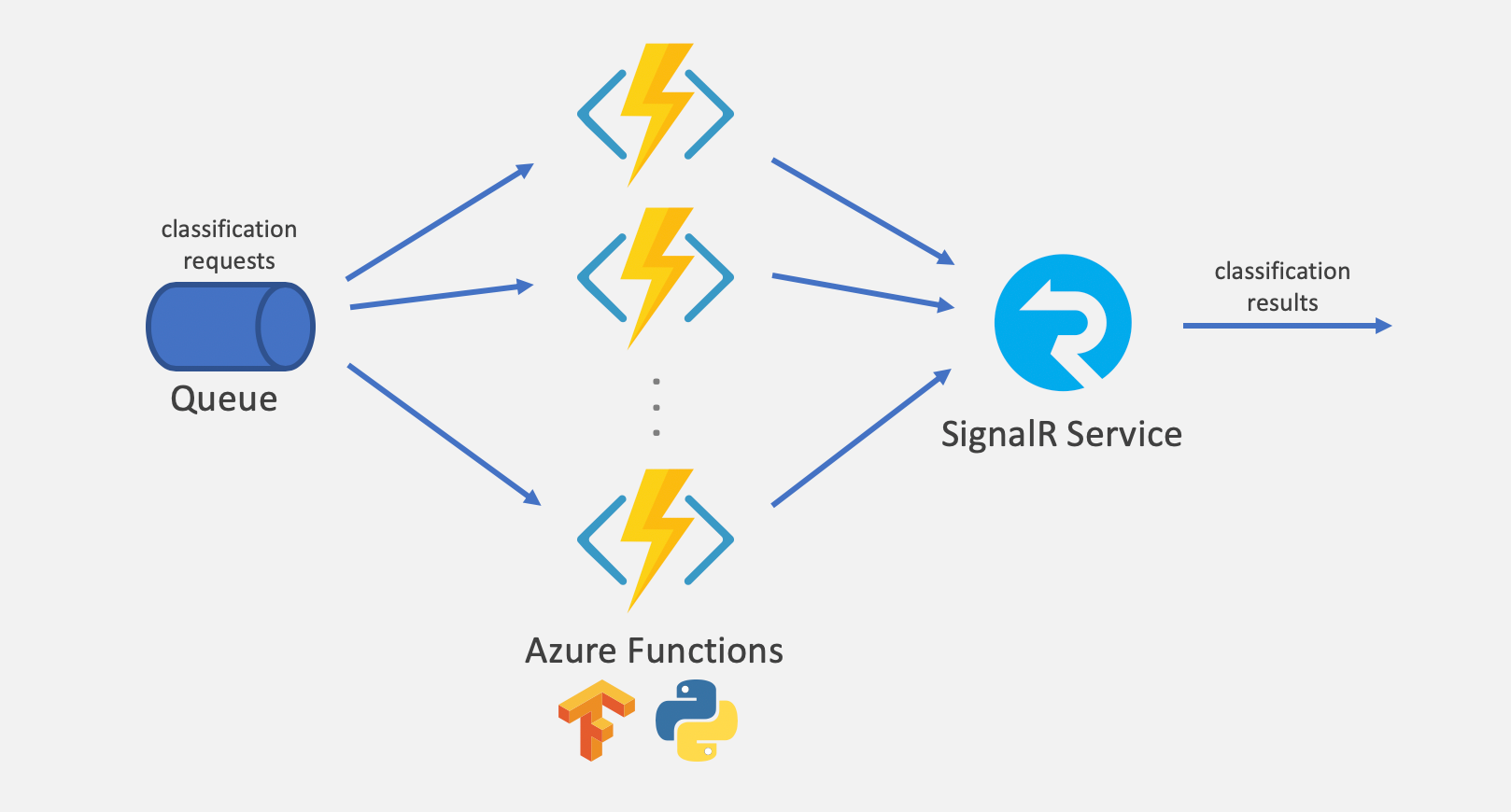

Due to the limitations of serverless function deployment and resource provisioning, combining ML and serverless is a complex undertaking. We tackle this problem by decomposing large ML models into smaller sub-models, referred to as slices.

Large machine learning models often demand GPU resources for efficient inference to meet SLOs. In the context of these trends, there is growing interest in hosting AI models in a serverless architecture while still providing GPU access for inference tasks. In our research, we focus on serverless computing as the preferred infrastructure for deploying these popular inference workloads.

Batching is critical for the latency performance and cost-effectiveness of machine learning inference, but unfortunately it is not supported by existing serverless platforms due to their stateless design.
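The tension between batching and stateless functions can be illustrated with a small sketch. The `MicroBatcher` class below is a hypothetical helper, not part of any serverless platform. It buffers individual requests in memory and calls the model once per full batch, and that in-memory buffer is exactly the kind of state a stateless function does not keep between invocations.

```python
from typing import Callable, List


class MicroBatcher:
    """Collects single inputs and runs the model once per full batch."""

    def __init__(self, predict_batch: Callable[[List[float]], List[float]],
                 max_batch_size: int):
        if max_batch_size < 1:
            raise ValueError("max_batch_size must be at least 1")
        self._predict_batch = predict_batch
        self._max_batch_size = max_batch_size
        self._pending: List[float] = []  # state that must survive between requests

    def submit(self, item: float) -> List[float]:
        """Queue one input; return predictions if the batch is now full, else []."""
        self._pending.append(item)
        if len(self._pending) >= self._max_batch_size:
            return self.flush()
        return []

    def flush(self) -> List[float]:
        """Run the model on whatever is queued and clear the buffer."""
        if not self._pending:
            return []
        batch, self._pending = self._pending, []
        return self._predict_batch(batch)


def double_all(batch: List[float]) -> List[float]:
    """Hypothetical batch model: doubles every input in one call."""
    return [2 * x for x in batch]


batcher = MicroBatcher(double_all, max_batch_size=3)
for value in (1, 2, 3, 4):
    batcher.submit(value)
leftover = batcher.flush()  # handles the partial batch left in the buffer
```

A production batcher would typically also flush on a timeout so that a partial batch does not wait indefinitely. That is omitted here to keep the sketch short.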
