Serverless Deployment With Google Gemma Using Beam Cloud

Deploy Google Gemma 2B on Beam Cloud using FastAPI for serverless inference. This guide covers model setup, Hugging Face token authentication, autoscaling, and seamless deployment.

This article is the redemption. Gemma 4 just launched. It's a bigger jump than Gemma 3 was. And this time, I'm deploying it on Cloud Run, which scales to zero when you're not using it. Forget to turn it off. I dare you. You won't pay a cent. This article is in two parts.
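The Hugging Face token authentication mentioned above usually comes down to passing an access token with each request for the gated Gemma weights. Here is a minimal sketch, assuming the token is stored in the `HF_TOKEN` environment variable; the helper name `hf_auth_headers` is hypothetical and not part of any library.

```python
import os


def hf_auth_headers(env_var: str = "HF_TOKEN") -> dict[str, str]:
    """Build HTTP headers for authenticated Hugging Face Hub requests.

    Assumption: the access token lives in an environment variable
    (HF_TOKEN by default) rather than being hard-coded in the source.
    """
    token = os.environ.get(env_var, "").strip()
    if not token:
        # Fail early so a missing secret is caught before model download starts.
        raise RuntimeError(f"Set the {env_var} environment variable first")
    # The Hub accepts the token as a standard Bearer credential.
    return {"Authorization": f"Bearer {token}"}
```

Reading the token from the environment keeps it out of the repository. On a serverless platform, it would typically be set as a secret instead of being written into the code.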

Step-by-step guide to deploying Gemma 4 (31B dense and 26B MoE with ~4B active params) on GPU cloud using vLLM. Hardware requirements, cost breakdown, and benchmarks included.

This codelab will guide you through deploying and serving Google's Gemma 3 model by leveraging vLLM on Google Cloud Run.

Gemma 4 eliminates that friction entirely. Google DeepMind's newest open model family ships under a standard Apache 2.0 license, the same permissive terms used by Qwen, Mistral, Arcee, and most.

Run sandboxes, inference, and training with ultrafast boot times, instant autoscaling, and a developer experience that just works.
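For the hardware requirements of the two Gemma 4 sizes mentioned above, a rough first estimate is parameter count times bytes per parameter. The sketch below covers weights only; it ignores KV cache, activations, and runtime overhead, so real requirements will be higher. The function name and the precision table are illustrative assumptions, not figures from the guide.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, dtype: str = "bf16") -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * BYTES_PER_PARAM[dtype]


# Total parameter counts taken from the text above.
for name, total_b in [("31B dense", 31), ("26B MoE", 26)]:
    for dtype in ("bf16", "int4"):
        print(f"{name} @ {dtype}: ~{weight_memory_gb(total_b, dtype):.1f} GB of weights")
```

Note that the MoE estimate uses all 26B parameters. In a mixture-of-experts model, the full set of expert weights typically has to be resident in memory, while the ~4B active parameters mainly reduce the compute per token.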

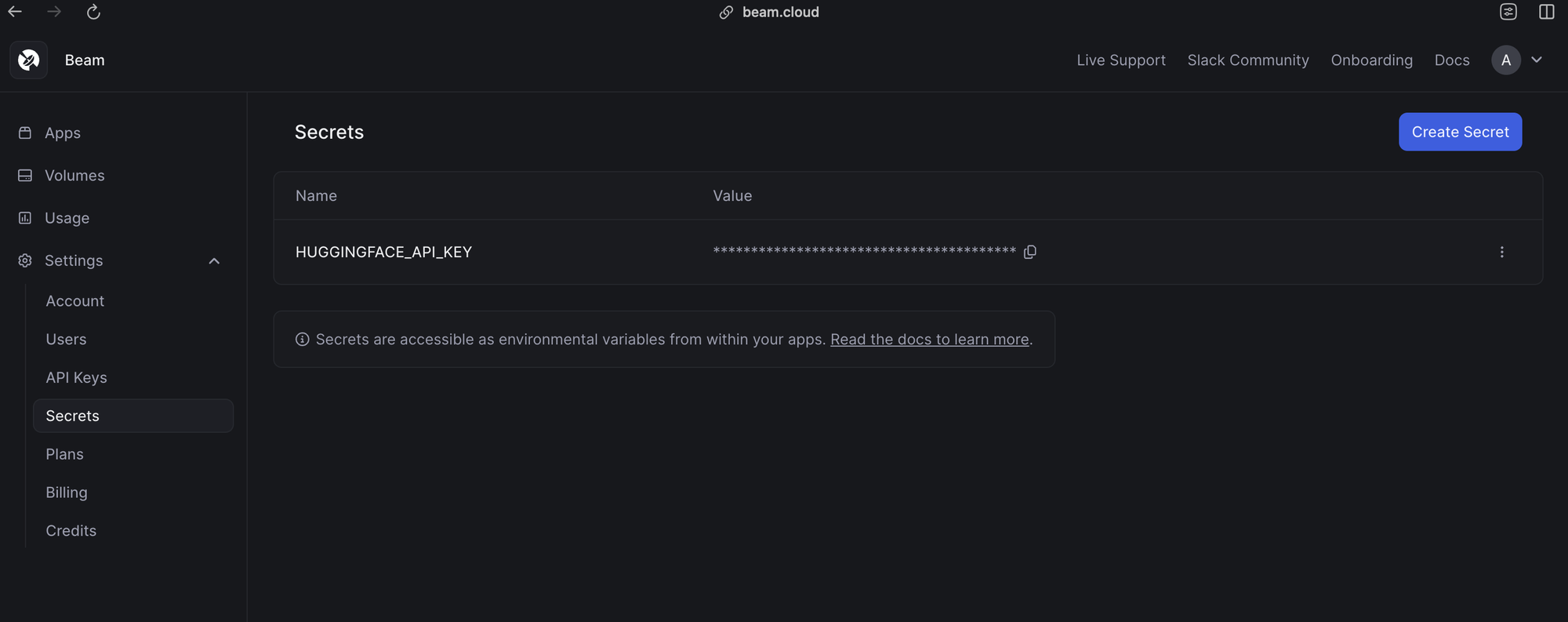

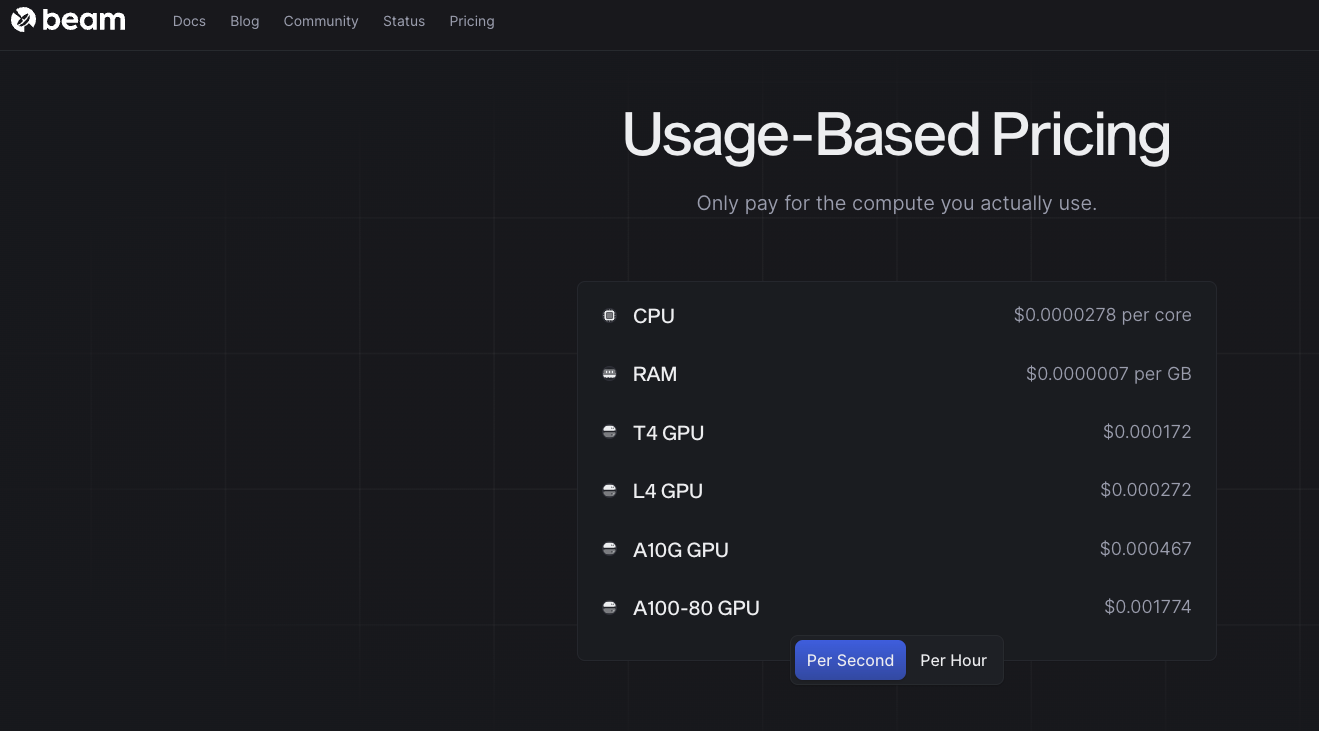

Gemma 4 is now available on Google Cloud with open-weight models you can fine-tune and deploy across Vertex AI, Cloud Run, GKE, GPUs, and TPUs while keeping enterprise-grade security and control. In this guide, I'm excited to show you how this new hardware release transforms complex fine-tuning into a scalable, serverless experience without the need to manage complex clusters or maintain.

Beam is a fast, open source runtime for serverless AI workloads. It gives you a Pythonic interface to deploy and scale AI applications with zero infrastructure overhead. Learn about running functions, deploying endpoints, and testing your code. You only pay for the compute you use, by the millisecond of usage.

Create an account on Beam. You'll get 15 hours of free credit when you sign up! Activate a Python virtualenv, which is where you'll install the Beam SDK.
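To make per-millisecond billing concrete, here is a small arithmetic sketch. The hourly rate is a made-up placeholder, not Beam's actual pricing, and both function names are hypothetical. Only the 15 hours of free credit comes from the text above.

```python
MS_PER_HOUR = 3_600_000


def usage_cost_usd(duration_ms: int, hourly_rate_usd: float) -> float:
    """Cost of a workload billed by the millisecond at a given hourly rate."""
    return duration_ms / MS_PER_HOUR * hourly_rate_usd


def free_hours_left(used_ms: int, free_hours: float = 15.0) -> float:
    """Remaining free credit in hours, never dropping below zero."""
    return max(0.0, free_hours - used_ms / MS_PER_HOUR)


# Example: 1,000 requests of 250 ms each at a hypothetical $1.00/hour.
total_ms = 1_000 * 250
print(f"cost: ${usage_cost_usd(total_ms, 1.00):.4f}")
print(f"free credit left: {free_hours_left(total_ms):.2f} hours")
```

Under this model, idle time between requests costs nothing, which is the same property the scale-to-zero approach relies on.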
