Sequential Preference Ranking For Efficient Reinforcement Learning From Human Feedback

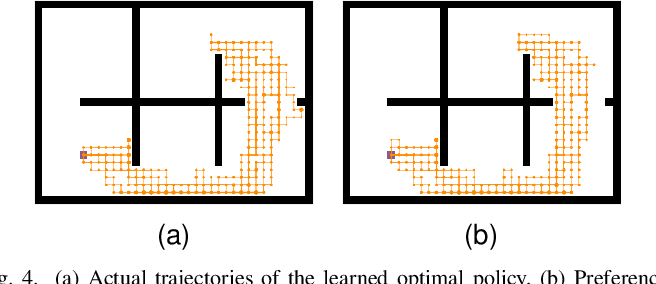

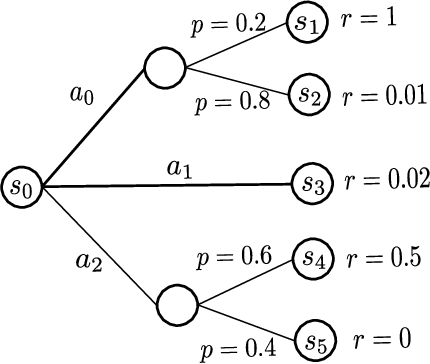

Efficient Preference Based Reinforcement Learning Using Learned

However, existing RLHF models are considered inefficient because they produce only a single preference datum from each piece of human feedback. To tackle this problem, we propose a novel RLHF framework called SeqRank, which uses sequential preference ranking to enhance feedback efficiency. Anonymous code for the NeurIPS 2023 submission.
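The snippet above gives no algorithmic detail, so the following is only a minimal sketch of why ranking segments sequentially can yield more than one preference pair per query. It assumes that each new segment is compared with the previous one and that human preferences are transitive. The names `sequential_feedback`, `transitive_closure`, `prefers`, and the hidden `score` table are hypothetical and are not taken from the SeqRank code.

```python
from itertools import product


def sequential_feedback(segments, prefers):
    """Ask one query per new segment: is it preferred over the previous one?

    Returns a list of (preferred, less_preferred) pairs.
    """
    pairs = []
    for prev, new in zip(segments, segments[1:]):
        if prefers(new, prev):
            pairs.append((new, prev))
        else:
            pairs.append((prev, new))
    return pairs


def transitive_closure(pairs):
    """Add every pair implied by transitivity: a > b and b > c gives a > c."""
    closure = set(pairs)
    changed = True
    while changed:
        changed = False
        # Iterate over a snapshot so the set can grow inside the loop.
        for (a, b), (c, d) in product(list(closure), repeat=2):
            if b == c and a != d and (a, d) not in closure:
                closure.add((a, d))
                changed = True
    return closure


# Hypothetical hidden scores standing in for a human's judgement.
score = {"s0": 1, "s1": 2, "s2": 3, "s3": 0}
segments = ["s0", "s1", "s2", "s3"]

pairs = sequential_feedback(segments, lambda a, b: score[a] > score[b])
closure = transitive_closure(pairs)

print(len(pairs), "queries ->", len(closure), "preference pairs")
```

In this toy run, three queries produce four preference pairs, because `s2 > s1` and `s1 > s0` together imply `s2 > s0`. How many extra pairs are gained depends on the order in which segments arrive and on how consistent the feedback is.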

Efficient Meta Reinforcement Learning For Preference Based Fast

Trust Region Based Safe Distributional Reinforcement Learning for Multiple Constraints

The paper introduces a new method called SeqRank that improves how machines learn from human preferences in reinforcement learning. Rather than asking humans to compare just two options at a time, it uses sequential preference ranking.

Leveraging randomized exploration for tractable and efficient preference query selection, we provide both online algorithms with regret guarantees and a preference-free algorithm with PAC-style guarantees under RL oracle assumptions.

Preference Guided Reinforcement Learning For Efficient Exploration

The illustration of the three multi-task learning frameworks for sequential recommendation, including joint learning, pre-training with fine-tuning, and ensemble learning.

Dive into the research topics of 'Sequential Preference Ranking for Efficient Reinforcement Learning from Human Feedback'. Together they form a unique fingerprint.

Bibliographic details on Sequential Preference Ranking for Efficient Reinforcement Learning from Human Feedback.

Preference Based Reinforcement Learning With Finite Time Guarantees

Efficient Reinforcement Learning Through Trajectory Generation (DeepAI)

Robust Reinforcement Learning Objectives For Sequential Recommender
