Selection Of Performance Measures For Binary And Multiclass Classifiers

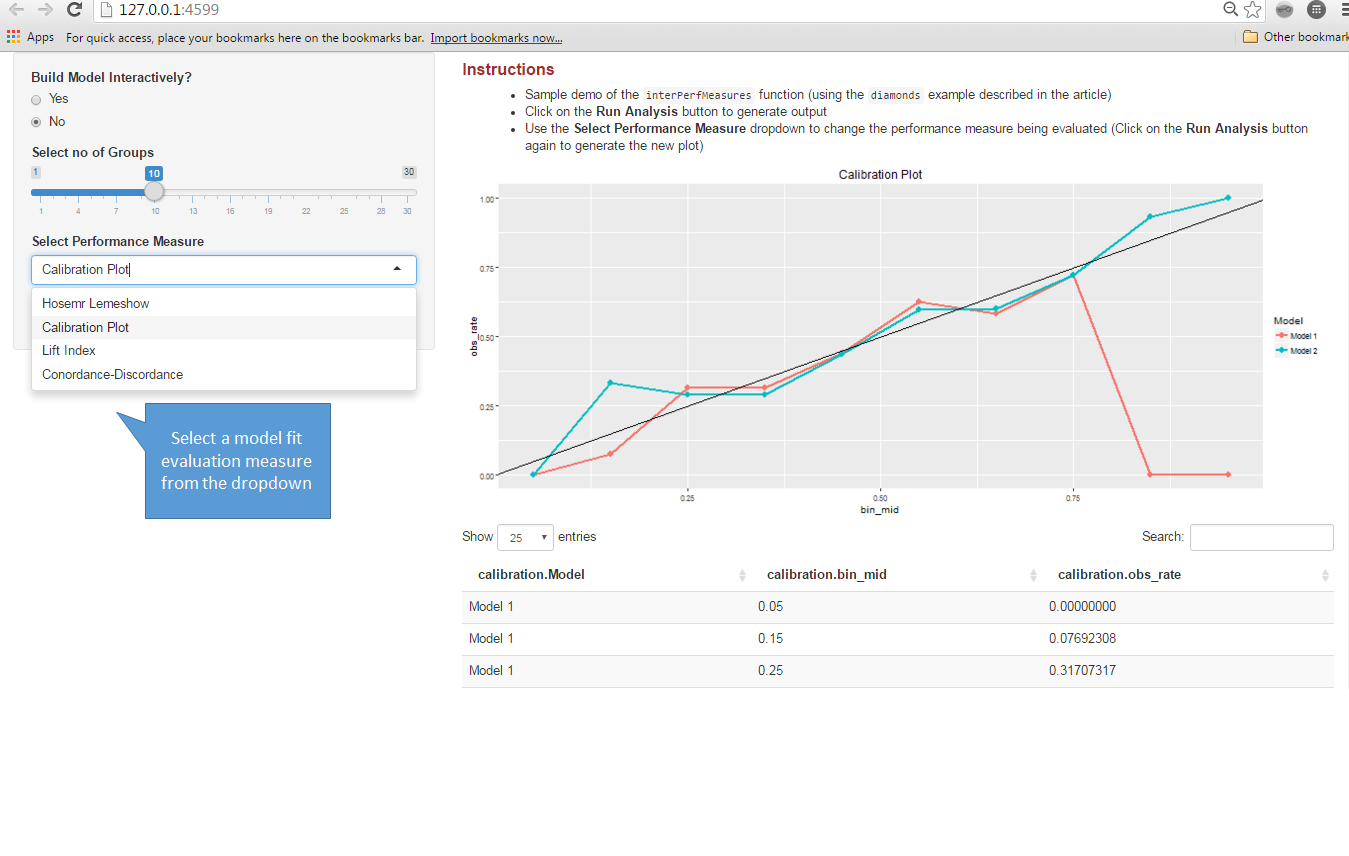

When the study design is unpaired, the performance measures obtained on two sets of binary classification data can be compared simply as two independent proportions. To choose the right model, it is important to gauge the performance of each classification algorithm. This tutorial looks at different evaluation metrics for checking a model's performance and explores which metrics to choose based on the situation.
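As a rough illustration of comparing two independent proportions, the sketch below applies a pooled two-proportion z-test to the accuracies of two classifiers evaluated on separate (unpaired) test sets. The function name `two_proportion_z_test` and the counts in the usage note are hypothetical, and the pooled z-test is only one common choice, not necessarily the procedure the original source had in mind.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided pooled z-test for the difference of two independent proportions.

    Illustrative assumption: `successes_*` are counts of correct predictions
    and `n_*` are the sizes of two unpaired test sets.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b

    # Pooled proportion under the null hypothesis that both rates are equal.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("standard error is zero; the test is undefined")

    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(90, 100, 80, 100)` compares 90% accuracy on one test set of 100 cases with 80% accuracy on another. The normal approximation is only reasonable when both samples are fairly large; for paired designs (the same cases scored by both classifiers) a paired test such as McNemar's would be the usual alternative.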

Progress is generally expressed in the form of improving the chosen metric on the dev set. A metric is useful to quantify the "gap" between desired performance and baseline (to estimate effort initially) and between desired performance and current performance, and to measure progress over time. It is also useful for lower-level tasks and debugging (e.g. diagnosing bias vs. variance).

This paper presents a systematic analysis of twenty-four performance measures used in the complete spectrum of machine learning classification tasks, i.e., binary, multi-class, multi-labelled, and hierarchical. The performance of the developed classifier is evaluated using datasets from binary, multi-class and multi-label problems, and the results obtained are compared with the state of the art.

How is performance calculated for multi-class problems? Micro- and macro-averaged F1 scores, as well as a generalization of the AUC, are the usual starting points.
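To make the difference between micro and macro averaging concrete, here is a minimal sketch in plain Python for single-label multi-class predictions. The helper names (`per_class_counts`, `f1_from_counts`, `macro_f1`, `micro_f1`) are illustrative and not taken from any of the sources quoted above.

```python
from collections import Counter


def per_class_counts(y_true, y_pred):
    """Count true positives, false positives and false negatives per class."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gets a false positive
            fn[truth] += 1  # true class gets a false negative
    return labels, tp, fp, fn


def f1_from_counts(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN); defined as 0.0 when the denominator is 0."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1: every class counts equally."""
    labels, tp, fp, fn = per_class_counts(y_true, y_pred)
    return sum(f1_from_counts(tp[c], fp[c], fn[c]) for c in labels) / len(labels)


def micro_f1(y_true, y_pred):
    """F1 of the pooled counts: every sample counts equally."""
    labels, tp, fp, fn = per_class_counts(y_true, y_pred)
    return f1_from_counts(sum(tp.values()), sum(fp.values()), sum(fn.values()))
```

With `y_true = ["a", "a", "a", "a", "b", "b", "c", "c"]` and `y_pred = ["a", "a", "a", "b", "b", "c", "c", "c"]`, the micro average equals plain accuracy (as it always does in the single-label case), while the macro average is pulled down by the weaker minority class `"b"`. That is why macro averaging is often preferred when rare classes matter.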

The general idea is to apply several classifiers to a selection of real-world data sets and to assess their accuracy through various measures. These measures are then compared in terms of correlation.

Our aim here is to introduce the most common metrics for binary and multi-class classification, regression, image segmentation, and object detection. We explain the basics of …

Abstract: … measures for the evaluation of binary classifiers. These measures are categorized into three broad families: measures based on a single classification threshold, measures based on a probabilistic interpretation of error, and ranking measures. Graphical methods, such as ROC curves, precision-recall curves, TPR/FPR plots, gai…

In this tutorial, we'll discuss how to measure the success of a classifier for both binary and multiclass classification problems. We'll cover some of the most widely used classification measures, namely accuracy, precision, recall, F1 score, ROC curve, and AUC.
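The measures listed above can be sketched in a few lines of plain Python for the binary case. This is an illustrative sketch only: labels are assumed to be encoded as 0 and 1, the function names are hypothetical, and the AUC is computed through its rank interpretation (the probability that a randomly chosen positive scores higher than a randomly chosen negative, with ties counted as one half) rather than by integrating an explicit ROC curve.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for labels encoded as 0 (negative) and 1 (positive)."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == 1 and pred == 1:
            tp += 1
        elif truth == 0 and pred == 1:
            fp += 1
        elif truth == 0 and pred == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def _safe_div(num, den):
    # Convention used here: an undefined ratio is reported as 0.0.
    return num / den if den else 0.0


def threshold_metrics(y_true, y_pred):
    """Single-threshold measures: accuracy, precision, recall and F1."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    precision = _safe_div(tp, tp + fp)
    recall = _safe_div(tp, tp + fn)
    return {
        "accuracy": _safe_div(tp + tn, tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "f1": _safe_div(2 * precision * recall, precision + recall),
    }


def roc_auc(y_true, scores):
    """Ranking measure: fraction of positive/negative pairs ordered correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

A typical use is to threshold the scores first, for instance `y_pred = [1 if s >= 0.5 else 0 for s in scores]` (the 0.5 cut-off is an arbitrary choice), pass the result to `threshold_metrics`, and pass the raw scores to `roc_auc`. The pairwise AUC loop is quadratic in the number of samples, which is fine for a demonstration but would normally be replaced by a sort-based computation on large data sets.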
