SDG Hub: An Open Source Toolkit for Synthetic Data Generation and LLM Customization

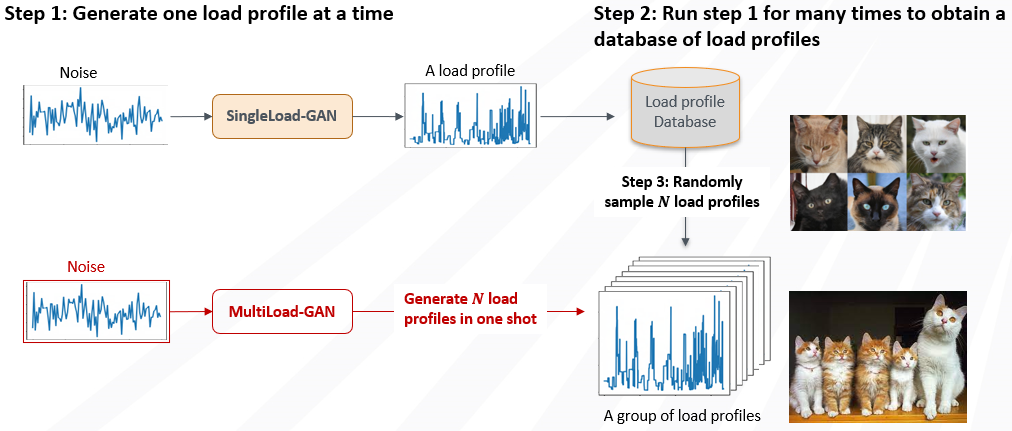

SDG Hub is a modular Python framework for building synthetic data generation pipelines from composable blocks and flows. Datasets are transformed through block composition: LLM-powered and traditional processing blocks can be mixed and matched to create sophisticated data generation workflows. In this talk, we will introduce SDG Hub, an open source toolkit developed at Red Hat for customizing language models using synthetic data. We will begin by unpacking what synthetic data means in the context of LLMs and how it enables model customization.
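To make the idea of composing blocks into a flow concrete, here is a minimal sketch in plain Python. It does not use the SDG Hub API: the `Block` and `Flow` classes, the `fake_llm` stand-in, and the column names `document` and `question` are all hypothetical and exist only to illustrate how an LLM-style step and a traditional filtering step might be chained.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, str]


@dataclass
class Block:
    """Hypothetical processing step: takes a list of rows, returns a list of rows."""
    name: str
    fn: Callable[[List[Row]], List[Row]]

    def __call__(self, rows: List[Row]) -> List[Row]:
        return self.fn(rows)


@dataclass
class Flow:
    """Hypothetical ordered chain of blocks, applied one after another."""
    blocks: List[Block]

    def run(self, rows: List[Row]) -> List[Row]:
        for block in self.blocks:
            rows = block(rows)
        return rows


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    first_word = prompt.split()[0]
    return f"Q: What does this passage say about '{first_word}'?"


def drop_short_documents(rows: List[Row]) -> List[Row]:
    """Traditional (non-LLM) step: keep documents with at least three words."""
    return [r for r in rows if len(r["document"].split()) >= 3]


def generate_questions(rows: List[Row]) -> List[Row]:
    """LLM-style step: add a generated question column to every row."""
    return [{**r, "question": fake_llm(r["document"])} for r in rows]


flow = Flow([
    Block("drop_short_documents", drop_short_documents),
    Block("generate_questions", generate_questions),
])

seed = [
    {"document": "Synthetic data can supplement scarce training sets."},
    {"document": "Too short"},
]
result = flow.run(seed)
print(result)
```

Because every block shares the same rows-in, rows-out shape, steps can be reordered or swapped without touching the others, which is the property the "mix and match" description relies on.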

With SDG Hub, users can mix and match LLM-based components with traditional data processing tools. It supports YAML-based orchestration, schema discovery, input validation, asynchronous execution, and monitoring. Most setups consist of makeshift solutions: scripts cobbled together, prone to breaking and notoriously difficult to scale. This is where SDG Hub steps in, aiming to provide a structured, efficient framework for managing synthetic data pipelines.
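Two of the features listed above, input validation and asynchronous execution, can be illustrated with the standard library alone. The sketch below is not SDG Hub code: `validate_columns`, `fake_llm_call`, `annotate`, and the `max_concurrency` parameter are hypothetical names, and the model request is simulated with a short sleep.

```python
import asyncio
from typing import Dict, List

Row = Dict[str, str]


def validate_columns(rows: List[Row], required: List[str]) -> None:
    """Fail fast if any row lacks a column the next step needs."""
    for i, row in enumerate(rows):
        missing = [c for c in required if c not in row]
        if missing:
            raise ValueError(f"row {i} is missing columns: {missing}")


async def fake_llm_call(text: str) -> str:
    """Stand-in for a network-bound model request (assumption: no real API)."""
    await asyncio.sleep(0.01)
    return text.upper()


async def annotate(rows: List[Row], max_concurrency: int = 4) -> List[Row]:
    """Validate the input, then process rows concurrently with a bounded fan-out."""
    validate_columns(rows, ["document"])
    semaphore = asyncio.Semaphore(max_concurrency)

    async def one(row: Row) -> Row:
        async with semaphore:
            summary = await fake_llm_call(row["document"])
            return {**row, "summary": summary}

    # asyncio.gather returns results in the same order as its inputs.
    return list(await asyncio.gather(*(one(r) for r in rows)))


rows = [{"document": "alpha"}, {"document": "beta"}]
annotated = asyncio.run(annotate(rows))
print(annotated)
```

Validating before any request is sent means a malformed dataset is rejected without spending model calls, and the semaphore keeps the number of in-flight requests bounded as the dataset grows.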

Gain practical insights into leveraging synthetic data for LLM customization and discover how this open source toolkit can streamline your machine learning workflows. This document provides an overview of SDG Hub: the system's purpose, high-level architecture, core components, and how they interact. If you want to train an LLM but don't have enough clean or shareable data, here's something useful: I just published a quick start guide (with Frank La Vigne!) on how to generate synthetic.
