Scaling And Renormalization In High Dimensional Regression Ai

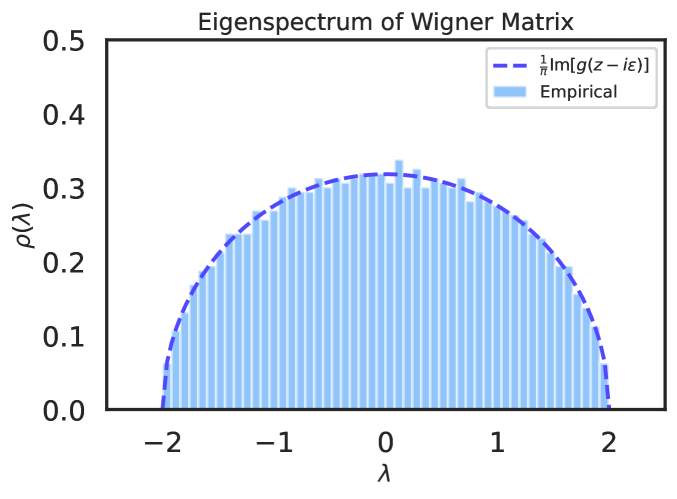

In this paper, we present a unifying perspective on recent results on ridge regression using the basic tools of random matrix theory and free probability, aimed at readers with backgrounds in physics and deep learning. The paper gives a succinct derivation of the training and generalization performance of a variety of high-dimensional ridge regression models.
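To make "training and generalization performance" concrete, here is a minimal sketch comparing a Monte Carlo ridge regression against the standard deterministic-equivalent formulas, written in the form we recall from the ridge-regression literature rather than copied from the paper. The assumptions: Gaussian features with diagonal covariance eigenvalues s_i, labels y = x·w̄ + noise of variance σ², and the objective (1/P)‖y − Xw‖² + λ‖w‖². Under those assumptions, κ solves κ = λ + (κ/P) Σᵢ sᵢ/(sᵢ + κ) (we take κ to be the "renormalized ridge" the title alludes to), γ = (1/P) Σᵢ sᵢ²/(sᵢ + κ)², the excess test risk is E_g = [κ² Σᵢ sᵢ w̄ᵢ²/(sᵢ + κ)² + σ²γ]/(1 − γ), and the training error is E_tr = (λ/κ)²(E_g + σ²). The spectrum, target weights, and all function names below are hypothetical choices for illustration.

```python
import numpy as np


def solve_kappa(spectrum, P, lam, iters=200):
    """Bisection for kappa > 0 solving kappa = lam + (kappa / P) * sum_i s_i / (s_i + kappa)."""
    lo, hi = 0.0, lam + spectrum.sum() / P  # residual is -lam at lo and positive at hi
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        resid = mid - lam - (mid / P) * np.sum(spectrum / (spectrum + mid))
        if resid < 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def theory(spectrum, w_bar, P, lam, sigma2):
    """Deterministic-equivalent excess test risk E_g and training error E_tr."""
    kappa = solve_kappa(spectrum, P, lam)
    gamma = np.sum(spectrum**2 / (spectrum + kappa) ** 2) / P
    bias = kappa**2 * np.sum(spectrum * w_bar**2 / (spectrum + kappa) ** 2) / (1 - gamma)
    variance = sigma2 * gamma / (1 - gamma)
    e_g = bias + variance
    e_tr = (lam / kappa) ** 2 * (e_g + sigma2)  # training error vs. total test error
    return e_g, e_tr


def simulate(spectrum, w_bar, P, lam, sigma2, trials=30, seed=0):
    """Monte Carlo estimate of the same two quantities with Gaussian features."""
    rng = np.random.default_rng(seed)
    D = spectrum.size
    e_g, e_tr = [], []
    for _ in range(trials):
        # Rows x ~ N(0, diag(spectrum)); labels y = x . w_bar + Gaussian noise.
        X = rng.standard_normal((P, D)) * np.sqrt(spectrum)
        y = X @ w_bar + np.sqrt(sigma2) * rng.standard_normal(P)
        # Ridge estimator minimizing (1/P)||y - Xw||^2 + lam ||w||^2.
        w_hat = np.linalg.solve(X.T @ X / P + lam * np.eye(D), X.T @ y / P)
        diff = w_hat - w_bar
        e_g.append(np.sum(spectrum * diff**2))  # (w_hat - w_bar)^T Sigma (w_hat - w_bar)
        e_tr.append(np.mean((y - X @ w_hat) ** 2))
    return np.mean(e_g), np.mean(e_tr)


D, P, lam, sigma2 = 300, 600, 0.05, 0.1
spectrum = 1.0 / np.arange(1, D + 1)  # hypothetical power-law covariance eigenvalues
w_bar = np.ones(D) / np.sqrt(D)        # hypothetical target weights
eg_th, etr_th = theory(spectrum, w_bar, P, lam, sigma2)
eg_sim, etr_sim = simulate(spectrum, w_bar, P, lam, sigma2)
print(f"E_g : theory {eg_th:.5f}  simulation {eg_sim:.5f}")
print(f"E_tr: theory {etr_th:.5f}  simulation {etr_sim:.5f}")
```

Bisection is used instead of plain fixed-point iteration because it is guaranteed to stay bracketed for any λ > 0; the two printed pairs should agree up to finite-size and Monte Carlo fluctuations.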

This work develops a tractable reduction that reveals a three-phase trajectory and provides the first rigorous characterization of scaling laws in nonlinear regression with anisotropic data, highlighting how anisotropy reshapes learning dynamics. The paper discusses challenges in high-dimensional regression, using tools from random matrix theory and free probability to analyze the behavior of estimators. It explores the scaling and renormalization of high-dimensional regression models, particularly in the context of neural networks, and builds on previous work on neural scaling laws and meta-learning for high-dimensional regression.
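As a small illustration of the random-matrix tools mentioned above, the sketch below checks the Marchenko–Pastur law, a basic random matrix theory result, against a single random draw. It assumes unit-variance isotropic Gaussian features and aspect ratio q = D/P; the Stieltjes transform m(z) = E[1/(x − z)] of the limiting spectrum then solves q z m² + (z − 1 + q) m + 1 = 0, which we evaluate at z = −λ < 0 where exactly one root is positive. The helper names are hypothetical, and the example is our own, not code from the paper.

```python
import numpy as np


def mp_stieltjes(q, lam):
    """Marchenko-Pastur Stieltjes transform m(z) = E[1 / (x - z)] at z = -lam < 0.

    For unit-variance features and aspect ratio q = D / P, m solves
    q z m^2 + (z - 1 + q) m + 1 = 0; at z = -lam exactly one root is positive.
    """
    b = q - 1.0 - lam  # the coefficient (z - 1 + q) evaluated at z = -lam
    return (b + np.sqrt(b**2 + 4.0 * q * lam)) / (2.0 * q * lam)


def empirical_stieltjes(D, P, lam, seed=0):
    """(1/D) Tr[(X^T X / P + lam I)^{-1}] for one Gaussian draw of X (P x D)."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((P, D))
    eigs = np.linalg.eigvalsh(X.T @ X / P)  # sample-covariance spectrum
    return np.mean(1.0 / (eigs + lam))


lam = 0.5
for D, P in [(400, 800), (800, 400)]:
    q = D / P
    print(f"q={q:.1f}: MP {mp_stieltjes(q, lam):.4f}  empirical {empirical_stieltjes(D, P, lam):.4f}")
```

The second case (q = 2) has more features than samples, so the sample covariance has P − D zero eigenvalues; the same quadratic still covers it because the Marchenko–Pastur law then carries a point mass at zero.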

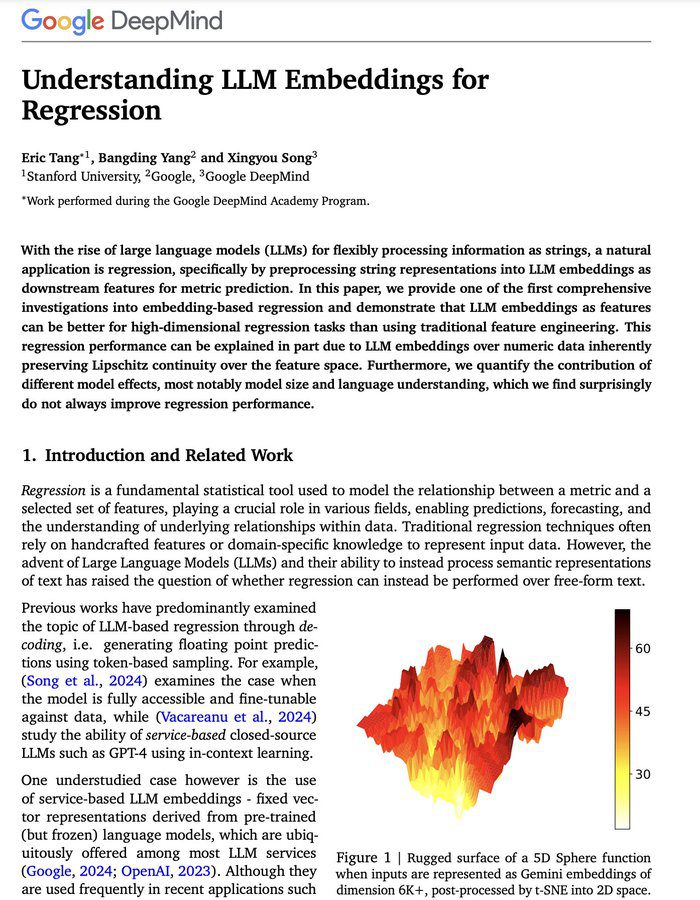

… structure determine scaling behavior. For a very recent review of the scaling-laws literature in the context of language models, see Anwar et al. (2024). Let L(N, T) be the performance of a model with N parameters trained on T tokens; we will be interested in characterizing the scaling p… We introduce the basic concepts of random matrix theory and free probability necessary to characterize the performance of high-dimensional regression across a variety of machine learning models.
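To see how data and task structure can set a scaling exponent, here is a minimal sketch that sweeps the sample size P through the noiseless, nearly ridgeless deterministic-equivalent risk and fits a log-log slope. It assumes a covariance spectrum s_k = k^(−α) and target power s_k w̄_k² = k^(−b), with hypothetical exponents α = 1.5 and b = 2, a spectrum truncated at 50,000 directions, and the same κ and γ definitions as standard ridge deterministic equivalents. A rough power-counting argument (ours, not the paper's) suggests E_g ∝ P^(−(b−1)) when b − 1 < 2α, so a slope near −1 is the expectation to check here, not a quoted result.

```python
import numpy as np


def solve_kappa(spectrum, P, lam, iters=200):
    """Bisection for kappa solving kappa = lam + (kappa / P) * sum_k s_k / (s_k + kappa)."""
    lo, hi = 0.0, lam + spectrum.sum() / P  # residual is -lam at lo and positive at hi
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        resid = mid - lam - (mid / P) * np.sum(spectrum / (spectrum + mid))
        lo, hi = (mid, hi) if resid < 0 else (lo, mid)
    return 0.5 * (lo + hi)


def noiseless_risk(spectrum, target_power, P, lam):
    """Deterministic-equivalent excess risk with no label noise (bias term only).

    target_power[k] = s_k * w_bar_k^2 is the target's power along eigendirection k.
    """
    kappa = solve_kappa(spectrum, P, lam)
    gamma = np.sum(spectrum**2 / (spectrum + kappa) ** 2) / P
    return kappa**2 * np.sum(target_power / (spectrum + kappa) ** 2) / (1 - gamma)


alpha, b = 1.5, 2.0                      # hypothetical spectral and target exponents
k = np.arange(1, 50_001, dtype=float)    # truncate the spectrum at 50,000 directions
spectrum = k**-alpha                     # covariance eigenvalues s_k = k^(-alpha)
target_power = k**-b                     # s_k * w_bar_k^2 = k^(-b)
Ps = np.logspace(2, 3, 6)                # sample sizes from 100 to 1000
risks = np.array([noiseless_risk(spectrum, target_power, P, lam=1e-8) for P in Ps])
slope = np.polyfit(np.log(Ps), np.log(risks), 1)[0]
print(f"fitted log-log slope of E_g versus P: {slope:.3f}")
```

Changing b (how the target's power is spread across eigendirections) or α (how anisotropic the inputs are) and re-running is a quick way to probe how the exponent depends on data and task structure under these assumptions.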
