Scaling AI With Google Cloud's TPUs

Cloud TPUs are designed to scale cost-efficiently across a wide range of AI workloads, spanning training, fine-tuning, and inference. Cloud TPUs provide the versatility to accelerate ... Explore the TPU cloud architecture, from individual chips to massive pods and multislice configurations, showcasing how Google builds scalable, purpose-built infrastructure to meet the ...

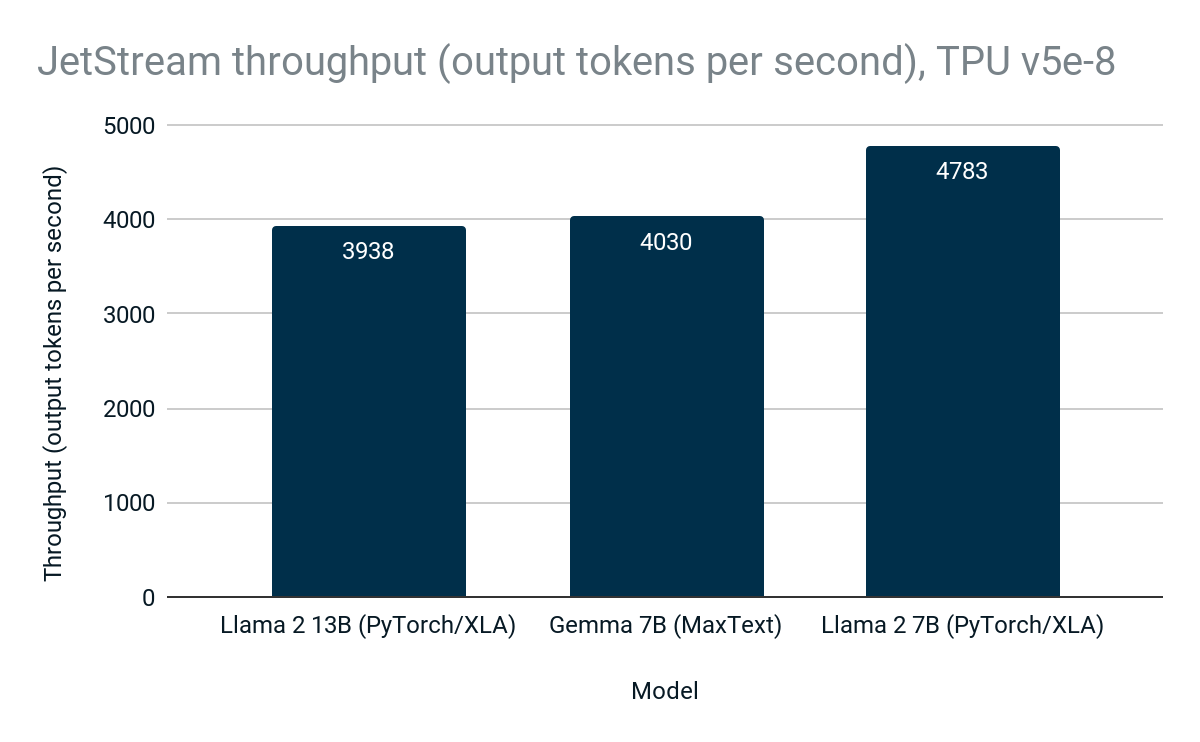

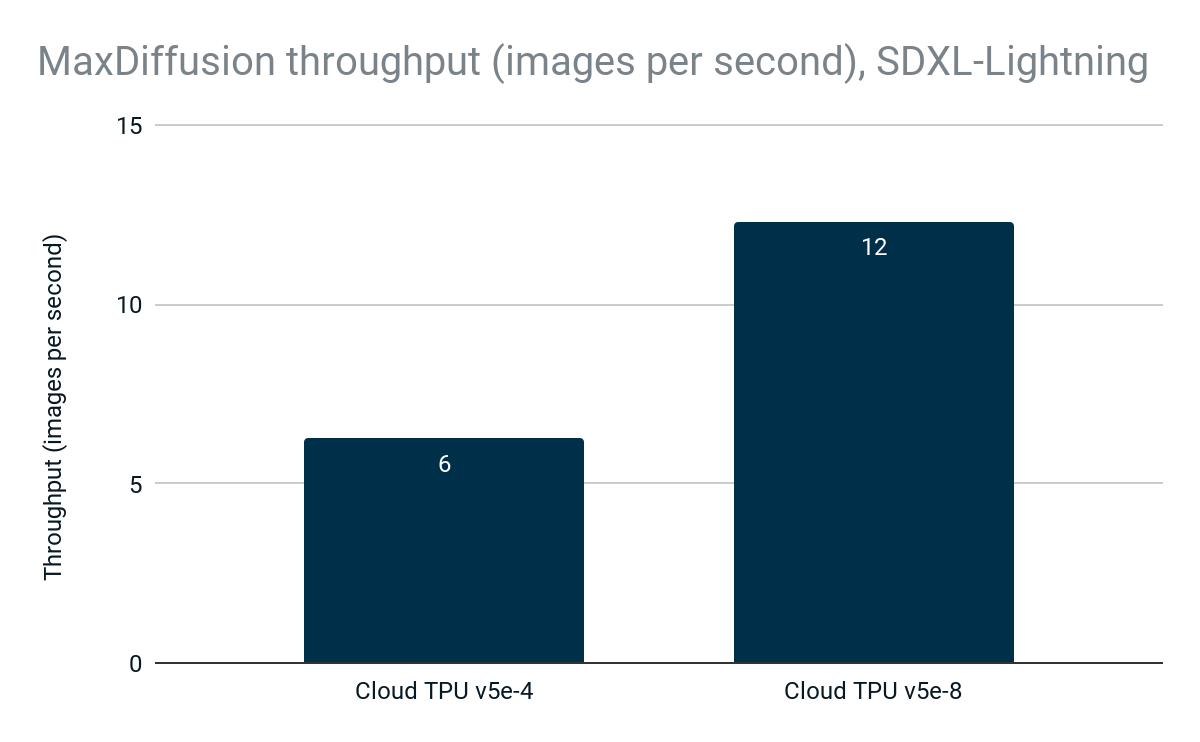

Accelerating AI Inference With Google Cloud TPUs and GPUs

Today at Google Cloud Next 25, we’re introducing Ironwood, our seventh-generation Tensor Processing Unit (TPU): our most performant and scalable custom AI accelerator to date, and the first designed specifically for inference.

For Google, TPUs offer a competitive edge at a time when all the hyperscalers are rushing to build mammoth data centers and AI processors can't be manufactured fast enough to meet demand.

Discover the unique TPU cloud architecture, including pods and the critical inter-chip interconnect, essential for massive, reliable distributed training. Understand how to select the right TPU version for your specific needs, balancing cost and performance for your AI infrastructure.

Revolutionize your AI/ML workloads with Google Cloud TPUs. This webinar shows you how to work with large clusters of TPUs, optimize inference at scale, and reduce costs while achieving your performance and scalability targets.
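To make the idea of spreading work across many TPU chips more concrete, here is a minimal JAX sketch of data-parallel sharding. It is an illustration under stated assumptions, not Google's own training or serving setup: the helper names (`make_data_mesh`, `shard_batch`, `mean_squared_activation`), the array shapes, and the toy computation are all hypothetical. The same code also runs on a single CPU device, where the mesh simply has one entry.

```python
import jax
import jax.numpy as jnp
import numpy as np
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P


def make_data_mesh():
    """Build a 1-D device mesh over every device JAX can see (hypothetical helper)."""
    devices = np.array(jax.devices())
    return Mesh(devices, axis_names=("data",))


def shard_batch(batch, mesh):
    """Split the leading (batch) axis of `batch` across the 'data' mesh axis."""
    return jax.device_put(batch, NamedSharding(mesh, P("data")))


@jax.jit
def mean_squared_activation(x, w):
    # x is sharded along its batch axis; w is small and left unsharded.
    # The compiler inserts whatever cross-device communication the mean needs.
    return jnp.mean(jnp.tanh(x @ w) ** 2)


mesh = make_data_mesh()
n_dev = mesh.devices.size

# Toy inputs (assumed shapes); the batch size is a multiple of the device count.
x = jnp.ones((8 * n_dev, 16))
w = jnp.full((16, 4), 0.1)

x_sharded = shard_batch(x, mesh)
result = mean_squared_activation(x_sharded, w)
print("devices:", n_dev, "result:", float(result))
```

On a multi-chip TPU slice, each chip would hold `8` rows of `x` in this sketch. Keeping the batch size a multiple of the device count avoids uneven shards.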

At Google, our Tensor Processing Units (TPUs) are foundational to our supercomputing infrastructure. These custom ASICs power training and serving for both Google’s own AI platforms, like Gemini and Veo, and the massive workloads of our cloud customers.

Content discussing different aspects of scaling ML workloads, guidance for addressing common issues, and code demonstrations for deploying with Google Cloud Platform.

This article aims to provide a comprehensive guide on how to use Cloud TPUs effectively for high-performance machine learning on GCP.

Getting started with Cloud TPUs. Seamless integration: TPUs are designed to work optimally with Google’s software frameworks, such as TensorFlow. This compatibility streamlines the process of deploying AI models and enhances ...

