Samurai Github

This repository is the official implementation of SAMURAI: Adapting Segment Anything Model for Zero-Shot Visual Tracking with Motion-Aware Memory. All rights to the accompanying clip are reserved to the copyright owners (TM & © Universal (2019)). The clip is not intended for commercial use and is solely for academic demonstration in a research paper. SAMURAI is an enhanced adaptation of Segment Anything Model 2 (SAM 2) for visual object tracking. It uses temporal motion cues and motion-aware memory selection to achieve robust, accurate tracking without fine-tuning.
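To make the idea of motion-aware memory selection more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the repository's code: the `FrameRecord` fields, the `select_memory` function, the three thresholds, and the memory size are all hypothetical names and values chosen for this example. The sketch assumes that each past frame carries a mask-quality score, an object-presence score, and a motion-agreement score, and that only frames passing all three thresholds are kept as memory.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FrameRecord:
    """Per-frame scores; all fields are hypothetical and assumed to lie in [0, 1]."""
    index: int
    mask_score: float    # how reliable the predicted mask is
    object_score: float  # how likely the target is visible in the frame
    motion_score: float  # how well the mask agrees with the predicted motion


def select_memory(
    history: List[FrameRecord],
    max_frames: int = 7,      # assumed memory size, not taken from the source
    tau_mask: float = 0.5,    # assumed thresholds, not taken from the source
    tau_obj: float = 0.5,
    tau_motion: float = 0.5,
) -> List[int]:
    """Return indices of the most recent frames that pass all three thresholds."""
    selected: List[int] = []
    # Walk backwards from the newest frame so recent reliable frames win.
    for rec in reversed(history):
        reliable = (
            rec.mask_score > tau_mask
            and rec.object_score > tau_obj
            and rec.motion_score > tau_motion
        )
        if reliable:
            selected.append(rec.index)
            if len(selected) == max_frames:
                break
    # Restore chronological order before returning.
    return selected[::-1]
```

The design choice illustrated here is that low-quality frames (for example, frames recorded during an occlusion) are skipped instead of being stored simply because they are recent, so the tracker conditions on fewer but cleaner memories.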

For researchers, developers, and enthusiasts, SAMURAI's open-source implementation on GitHub offers a way to explore and build upon the model. This article provides an in-depth exploration of SAMURAI's architecture, working procedure, and key innovations, incorporating insights from its official GitHub repository. SAMURAI operates in real time and demonstrates strong zero-shot performance across diverse benchmark datasets, showing that it generalizes without fine-tuning.

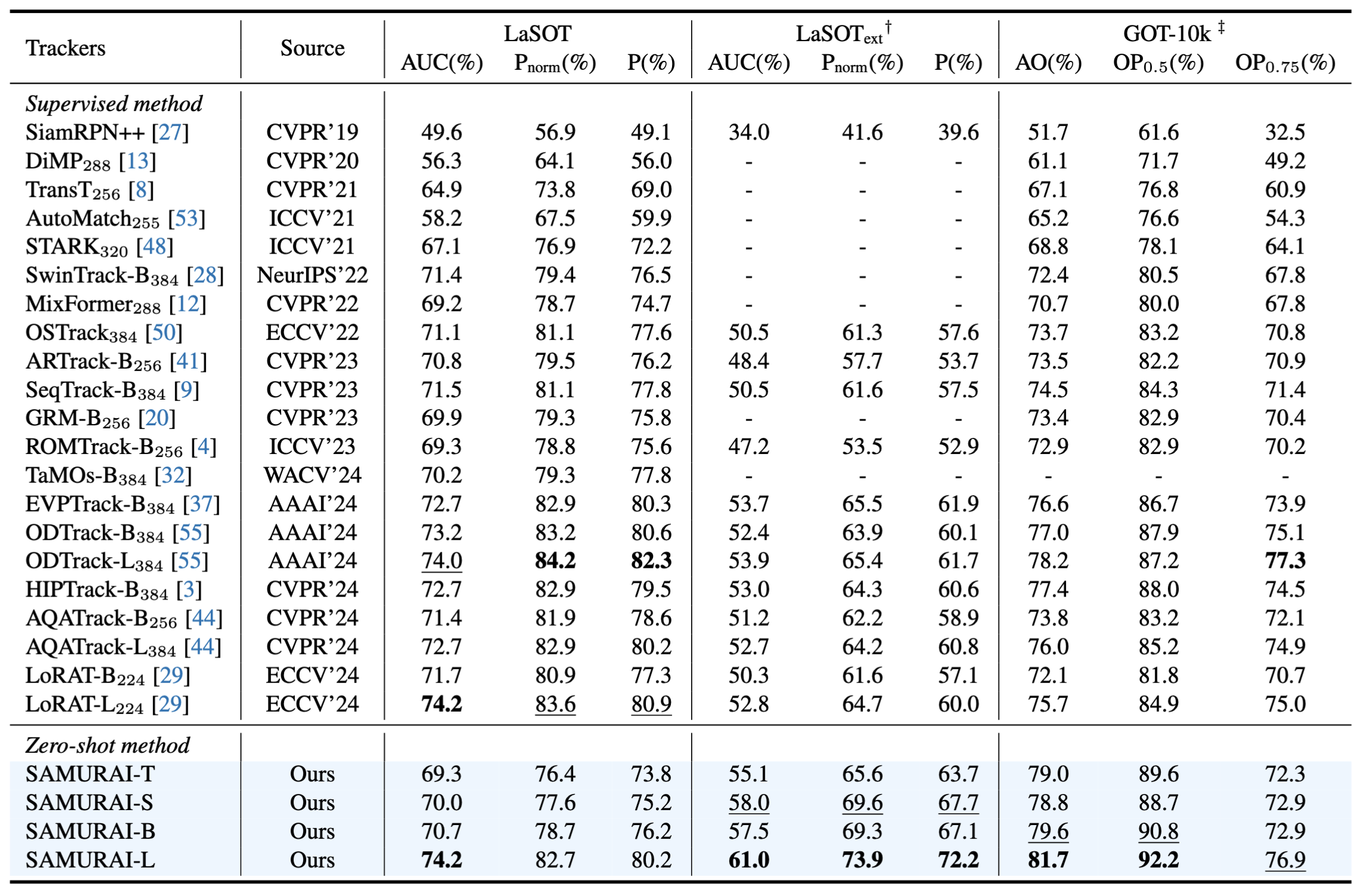

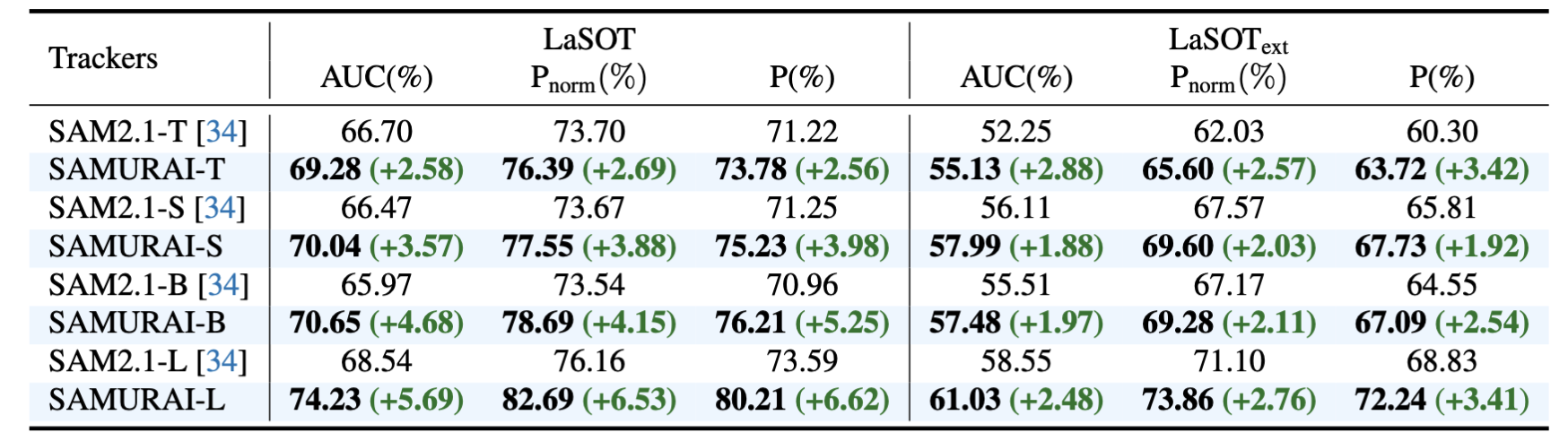

A step-by-step guide covers how to run SAMURAI, a zero-shot visual tracking model based on SAM (Segment Anything Model), on Google Colab: setting up a GPU runtime, installing dependencies, and running inference with the LaSOT dataset for motion tracking.

A separate project that shares the SAMURAI name is a method that decomposes multiple coarsely posed images into shape, BRDF, and illumination. Its implementation uses a conda environment for dependency management. For processing new datasets, it also provides a bash script to set up U2Net. To try it, download one of the test scenes and extract it to a folder, then run the command listed in that repository.

The tracking model SAMURAI enhances Segment Anything Model 2 for visual object tracking by integrating motion cues and a memory selection mechanism, improving performance and achieving real-time zero-shot tracking without retraining. Its authors present it as a visual object tracking framework built on top of the Segment Anything Model that introduces a motion-based score for better mask prediction and memory selection, in order to deal with self-occlusion and abrupt motion in crowded scenes.
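The motion-based score mentioned above can be illustrated with a small Python sketch. This is an assumption-laden example, not the authors' implementation: it swaps in a simple constant-velocity box prediction as the motion model, and the names `iou`, `predict_box`, `pick_candidate`, and the weight `alpha` are hypothetical. The idea shown is that each candidate mask (represented here by its bounding box and an affinity score from the segmentation model) is re-scored by how well it overlaps the box predicted from recent motion.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def predict_box(prev: Box, curr: Box) -> Box:
    """Constant-velocity guess: shift the current box by its last displacement."""
    x1, y1, x2, y2 = (c + (c - p) for p, c in zip(prev, curr))
    return (x1, y1, x2, y2)


def pick_candidate(
    candidates: Sequence[Tuple[Box, float]],  # (box, affinity score in [0, 1])
    predicted: Box,
    alpha: float = 0.25,  # assumed weight of the motion term, not from the source
) -> int:
    """Return the index of the candidate with the best combined score."""
    scores = [
        alpha * iou(box, predicted) + (1.0 - alpha) * affinity
        for box, affinity in candidates
    ]
    return max(range(len(scores)), key=scores.__getitem__)
```

As a usage example, if the target moved from `(0, 0, 10, 10)` to `(5, 0, 15, 10)`, `predict_box` places it at `(10, 0, 20, 10)` in the next frame. A candidate at that location with affinity 0.7 then outranks a distant candidate with affinity 0.8, which is the kind of correction that helps when similar-looking objects crowd the scene.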
