Run Open Source LLMs Locally With LM Studio Sid K

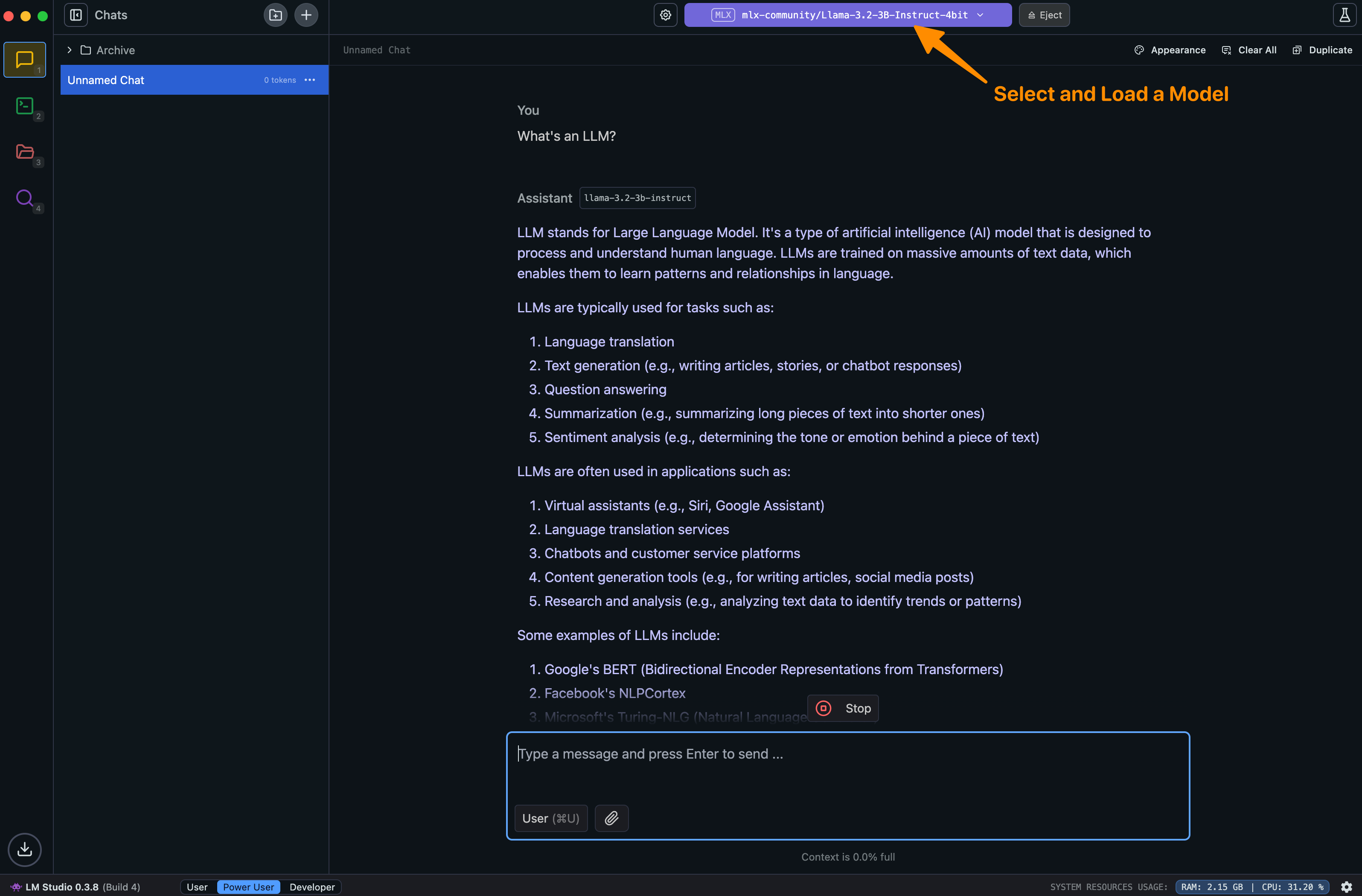

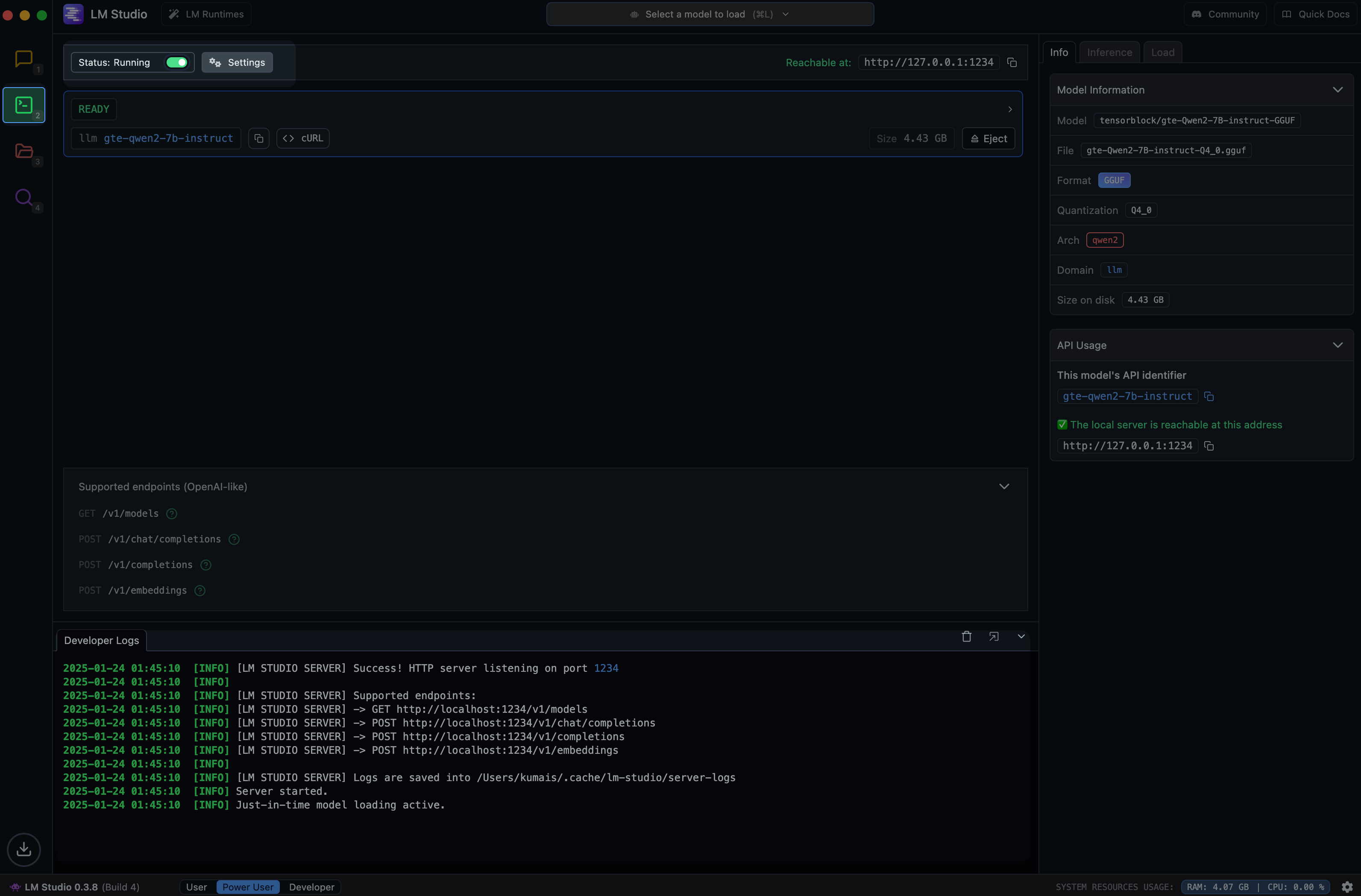

Running AI models on your own machine is easier than ever. In this guide, we will look at setting up LM Studio and running open source models locally. LM Studio provides a user-friendly GUI (plus CLI and API access) for downloading, loading, and interacting with open source LLMs on your own hardware.
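To make the API access mentioned above concrete, here is a minimal sketch of sending a chat prompt to a model loaded in LM Studio. It assumes the local server has been started and is listening at `http://localhost:1234/v1` with an OpenAI-style `/chat/completions` endpoint; the address, the `local-model` identifier, and the helper names `build_chat_request` and `chat` are illustrative assumptions, so substitute the values shown in your own installation.

```python
import json
import urllib.request

# Assumption: the LM Studio local server is running at this address.
# Change it if your server uses a different host or port.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt, model="local-model", temperature=0.7):
    """Build an OpenAI-style chat completion payload.

    "local-model" is a placeholder; use the identifier of the model
    you actually loaded.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
        "temperature": temperature,
    }


def chat(prompt, model="local-model", base_url=BASE_URL, timeout=120):
    """POST the prompt to the local server and return the reply text."""
    payload = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-style responses put the generated text in the first choice.
    return body["choices"][0]["message"]["content"]
```

With the server running and a model loaded, calling `chat("Explain GGUF in one sentence.")` should return the model's reply as a string. Only the standard library is used here, so nothing beyond Python itself needs to be installed.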

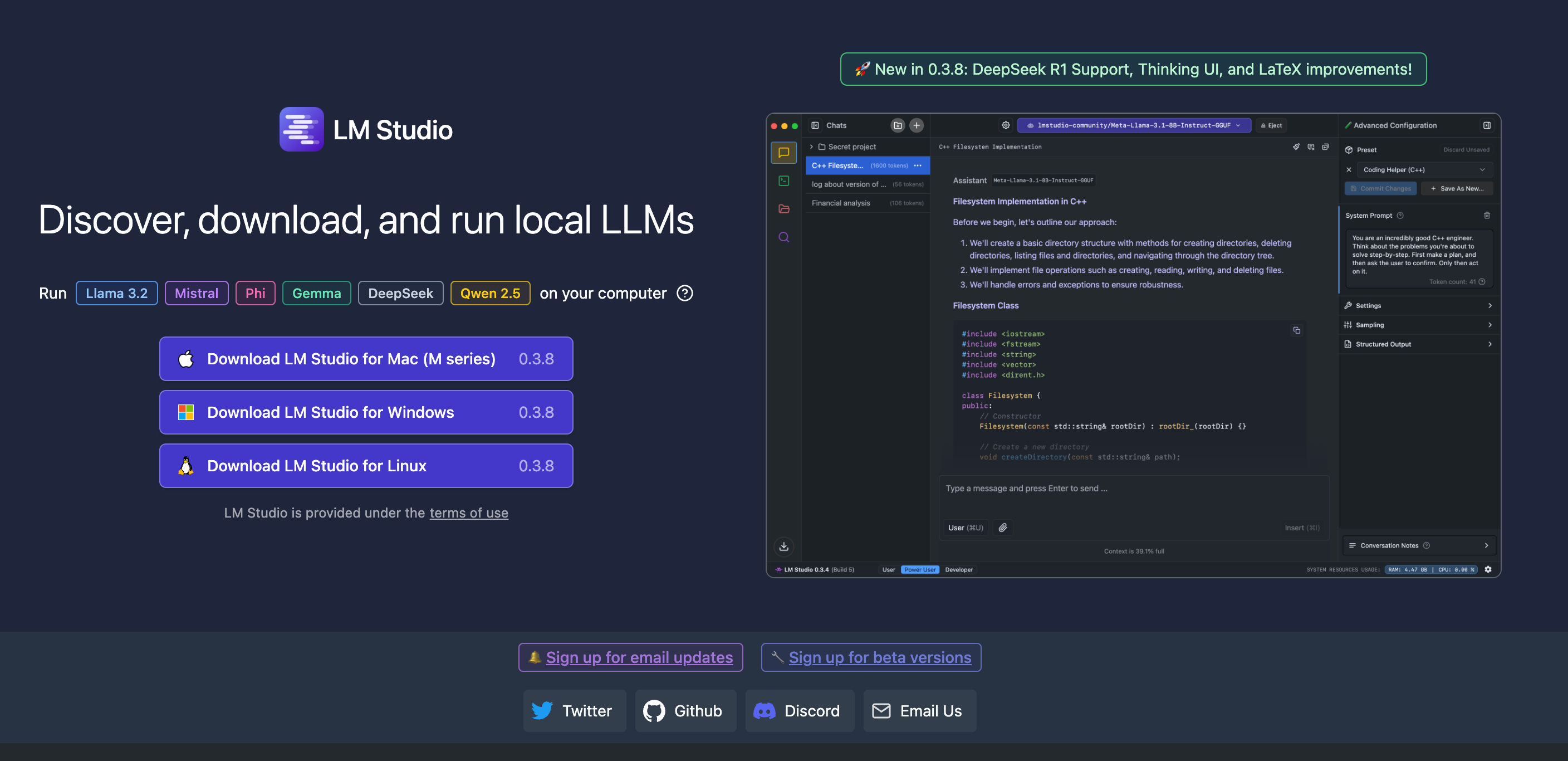

Connect to remote instances of LM Studio, load your models, and use them as if they were local.

Video tutorial: running LLMs locally with LM Studio. For a more hands-on understanding of LM Studio's capabilities, a relevant video tutorial walks you through the process of setting up and running LLMs locally.

In this guide, you'll learn how to install LM Studio and Ollama on Windows, macOS, and Linux, and how to set up your first model for local use.

1. What are LM Studio and Ollama? LM Studio is a user-friendly desktop application that allows you to download, run, and manage open source language models locally.

Learn how to install, configure, and master LM Studio, the free desktop app that lets you run open source large language models locally with full GPU acceleration and zero cloud dependency.

Running LLMs locally offers several advantages, including privacy, offline access, and cost efficiency. This repository provides step-by-step guides for setting up and running LLMs using various frameworks, each with its own strengths and optimization techniques.

LM Studio is a lightweight, free desktop application that lets users run and interact with open source LLMs on a local desktop. You can run any compatible large language model (LLM) from Hugging Face, in either the GGUF (llama.cpp) format or the MLX format (Mac only). You can also run GGUF text embedding models.

It is exactly what the average Windows, macOS, or Linux user would find comfortable for running LLMs locally: just download the app and off you go, with no messing about. Performance will vary depending on your computer, your GPU, and the model you choose, but it is much more lightweight than my Ollama example.
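Since text embedding models are also supported, the sketch below shows one way they might be used: request vectors for a few strings and compare them with cosine similarity. It assumes the local server exposes an OpenAI-style `/embeddings` endpoint at `http://localhost:1234/v1`; that address, the `model` identifier you pass in, and the helper names `embed` and `cosine_similarity` are assumptions for illustration rather than anything the original guide specifies.

```python
import json
import math
import urllib.request

# Assumption: the LM Studio local server is running at this address.
BASE_URL = "http://localhost:1234/v1"


def embed(texts, model, base_url=BASE_URL, timeout=60):
    """Return one embedding vector per input string.

    `model` must be the identifier of an embedding model loaded in
    your own installation.
    """
    payload = json.dumps({"model": model, "input": texts}).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/embeddings",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-style responses list the vectors under "data".
    return [item["embedding"] for item in body["data"]]


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Treat a zero vector as having no similarity.
    return dot / (norm_a * norm_b)
```

A typical use would be to call `embed` on a query and a handful of documents, then rank the documents by `cosine_similarity` against the query vector. Everything stays on your own machine, which fits the privacy and offline advantages described above.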
