RMSprop Optimizer Visually Explained Deep Learning 12

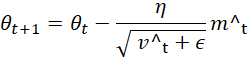

RMSprop

In this video, you’ll learn how RMSprop makes gradient descent faster and more stable by adjusting the step size for each parameter individually instead of treating all parameters the same. RMSprop (Root Mean Square Propagation) is an adaptive learning rate optimization algorithm designed to make training deep learning models faster and more stable.
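To make the per-parameter step size concrete, here is a minimal sketch of a single RMSprop update in plain Python. The function name `rmsprop_step` and the default values (`lr=0.01`, `decay=0.9`, `eps=1e-8`) are illustrative assumptions, not taken from the video or from any particular library.

```python
import math

def rmsprop_step(params, grads, avg_sq, lr=0.01, decay=0.9, eps=1e-8):
    """Apply one RMSprop update to lists of scalars (hypothetical helper).

    avg_sq holds the running average of squared gradients, one per parameter.
    Returns the updated parameters and the updated running averages.
    """
    new_params, new_avg_sq = [], []
    for p, g, v in zip(params, grads, avg_sq):
        # Exponential moving average of the squared gradient.
        v = decay * v + (1.0 - decay) * g * g
        # Divide the gradient by its root mean square, per parameter.
        p = p - lr * g / (math.sqrt(v) + eps)
        new_params.append(p)
        new_avg_sq.append(v)
    return new_params, new_avg_sq

# Two parameters whose gradients differ by four orders of magnitude.
params = [1.0, 1.0]
grads = [100.0, 0.01]
new_params, avg_sq = rmsprop_step(params, grads, [0.0, 0.0])

# Size of the step actually taken for each parameter.
steps = [old - new for old, new in zip(params, new_params)]
print(steps)  # both steps are close to 0.0316
```

With the same learning rate, plain gradient descent would move these two parameters by 1.0 and 0.0001 respectively. The normalization is what makes the two steps nearly equal here.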

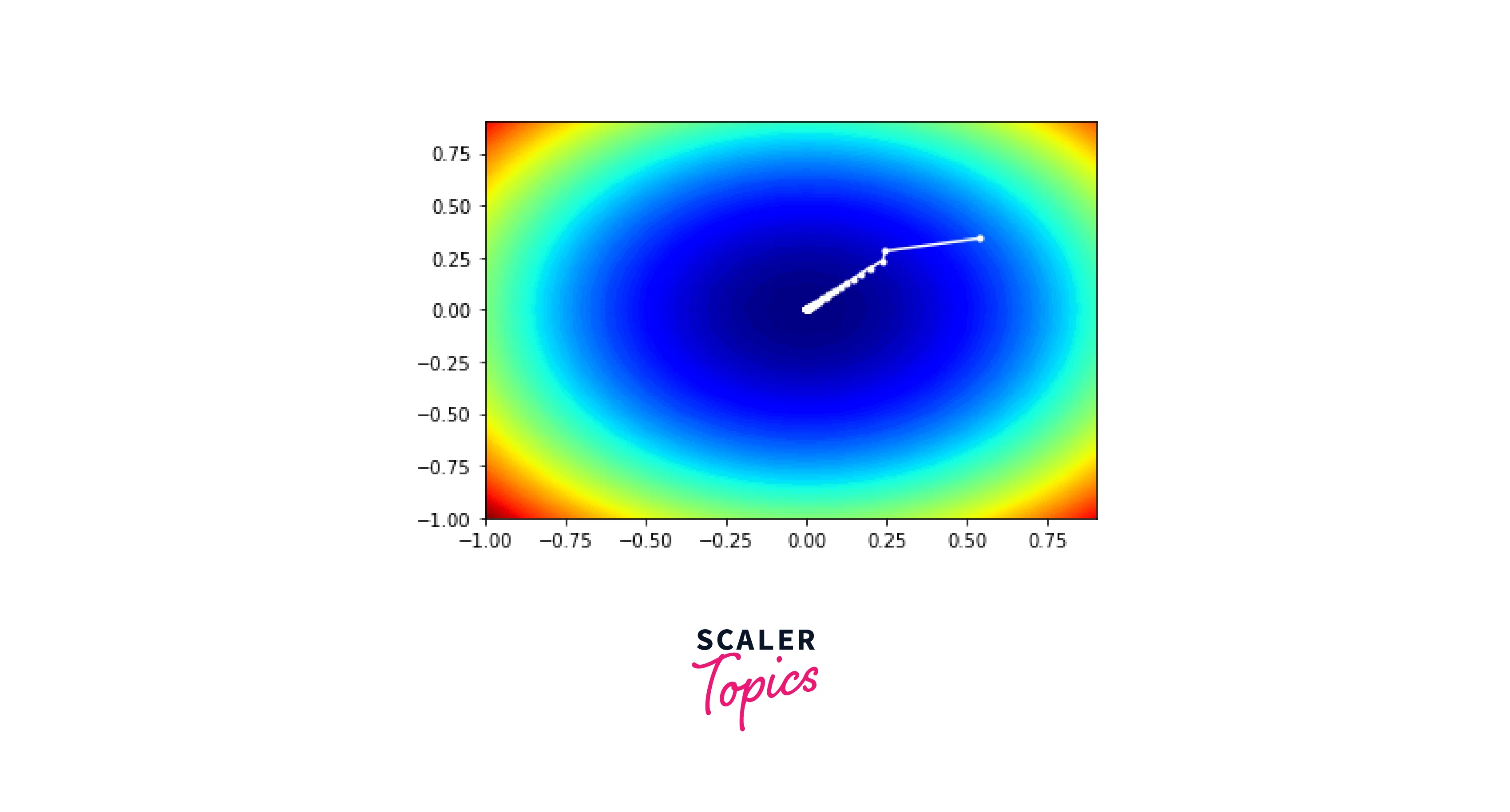

RMSprop Optimizer Explained: The Secret Behind Stable Learning

RMSprop, short for Root Mean Square Propagation, is a widely used optimization algorithm in deep learning. A single global learning rate can be too large for some parameters and too small for others. RMSprop addresses this by maintaining a per-parameter learning rate: it keeps track of a moving average of the squared gradients for each parameter and scales each update by the square root of that average.

Optimization on non-convex functions in high-dimensional spaces, like those encountered in deep learning, can be hard to visualize. However, we can learn a lot from visualizing optimization paths on simple 2D non-convex functions.

RMSprop was developed as an improvement over Adagrad (Adaptive Gradient Algorithm) that tackles the issue of learning rate decay. Adagrad accumulates the sum of all past squared gradients, so its effective learning rate keeps shrinking as training goes on. RMSprop replaces that sum with an exponentially decaying average. Like Adagrad, RMSprop uses a pair of equations, one for the accumulator and one for the weights, and the weight update itself is exactly the same. Only the accumulator differs.
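The difference between the two accumulators can be checked numerically. The sketch below feeds a constant gradient of 1.0 to both rules and compares the resulting effective learning rate. All names (`effective_lr`, `adagrad_acc`, `rmsprop_acc`) and the hyperparameter values are hypothetical and chosen only for illustration.

```python
import math

def adagrad_acc(acc, g):
    # Adagrad: running SUM of squared gradients (never decreases).
    return acc + g * g

def rmsprop_acc(acc, g, decay=0.9):
    # RMSprop: exponentially decaying AVERAGE of squared gradients.
    return decay * acc + (1.0 - decay) * g * g

def effective_lr(accumulate, steps=1000, lr=0.01, grad=1.0, eps=1e-8):
    """Effective step size lr / (sqrt(acc) + eps) after `steps` identical gradients."""
    acc = 0.0
    for _ in range(steps):
        acc = accumulate(acc, grad)
    # Both methods share this same form of weight update scaling.
    return lr / (math.sqrt(acc) + eps)

adagrad_lr = effective_lr(adagrad_acc)
rmsprop_lr = effective_lr(rmsprop_acc)

print(adagrad_lr)  # about 0.000316, i.e. lr / sqrt(1000)
print(rmsprop_lr)  # about 0.01, the accumulator settles near grad**2
```

Under these assumptions Adagrad's step has shrunk by a factor of roughly 30 after 1000 steps, while RMSprop's step stays at the configured learning rate because old gradients are forgotten.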

RMSprop (Scaler Topics)

Try out what happens to RMSprop on a real machine learning problem, such as training on Fashion-MNIST. Experiment with different choices for adjusting the learning rate.

Learn about the RMSprop optimization algorithm, its intuition, and how to implement it in Python. Discover how this adaptive learning rate method improves on traditional gradient descent for machine learning tasks.

From standard momentum to the "look ahead" of Nesterov and the adaptive learning rates of Adam: we break down the math and intuition behind the most popular deep learning optimizers to help you choose the right one.

This implementation of RMSprop uses plain momentum, not Nesterov momentum. The centered version additionally maintains a moving average of the gradients, and uses that average to estimate the variance.
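The centered variant with plain momentum mentioned above can be sketched for a single scalar parameter as follows. This is an illustration under stated assumptions, not the code of any specific library: the helper names `new_state` and `rmsprop_momentum_step`, the placement of `eps` inside the square root, and the default hyperparameters are all choices made here for clarity.

```python
import math

def new_state():
    # Per-parameter optimizer state (hypothetical layout).
    return {"sq": 0.0, "mean": 0.0, "buf": 0.0}

def rmsprop_momentum_step(p, g, state, lr=0.01, rho=0.9, momentum=0.9,
                          eps=1e-8, centered=False):
    """One RMSprop step with plain (non-Nesterov) momentum on a scalar."""
    # Moving average of squared gradients (uncentered second moment).
    state["sq"] = rho * state["sq"] + (1.0 - rho) * g * g
    denom_sq = state["sq"]
    if centered:
        # Moving average of the gradients themselves.
        state["mean"] = rho * state["mean"] + (1.0 - rho) * g
        # Variance estimate: E[g^2] - (E[g])^2, clamped against rounding error.
        denom_sq = max(denom_sq - state["mean"] ** 2, 0.0)
    # Plain momentum: accumulate the scaled gradient, then apply it.
    state["buf"] = momentum * state["buf"] + lr * g / math.sqrt(denom_sq + eps)
    return p - state["buf"]

plain = rmsprop_momentum_step(1.0, 2.0, new_state(), centered=False)
centered = rmsprop_momentum_step(1.0, 2.0, new_state(), centered=True)

print(plain)     # about 0.96838
print(centered)  # about 0.96667
```

Because the variance estimate is never larger than the uncentered second moment, the centered version divides by a smaller number and takes the larger step in this example.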
