RMSprop Optimizer Explained

RMSprop (root mean square propagation) is an adaptive learning rate optimization algorithm designed to improve the performance and speed of training deep learning models. It is widely used in deep learning and is an improvement over AdaGrad (adaptive gradient algorithm), addressing some of its limitations.

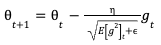

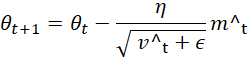

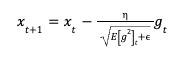

A Complete Guide to the RMSprop Optimizer (Built In)

RMSprop is primarily used to stabilize training in deep learning models. It is particularly effective for recurrent neural networks (RNNs) and for problems with non-stationary objectives, such as reinforcement learning. It does this by maintaining a per-parameter learning rate, which it derives from a moving average of the squared gradients for each parameter.

RMSprop was developed as an improvement over AdaGrad to tackle the issue of learning rate decay. Like AdaGrad, RMSprop is defined by a pair of equations, and the weight update equation is exactly the same in both; only the way the squared gradients are accumulated differs.
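The difference between the two accumulators can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function names, the constant gradient of 1.0, and the values `lr=0.01`, `rho=0.9`, and `eps=1e-8` are all assumptions chosen for the example. Both optimizers share `apply_update`; only the accumulation rule changes.

```python
import numpy as np


def adagrad_accumulate(state, grad):
    # AdaGrad: running SUM of squared gradients, which can only grow.
    return state + grad ** 2


def rmsprop_accumulate(state, grad, rho=0.9):
    # RMSprop: exponential moving AVERAGE of squared gradients.
    return rho * state + (1.0 - rho) * grad ** 2


def apply_update(w, grad, state, lr=0.01, eps=1e-8):
    # Shared weight update: each gradient is divided by the root of its state.
    return w - lr * grad / (np.sqrt(state) + eps)


def step_sizes(accumulate, steps):
    # Feed a constant gradient of 1.0 (an illustrative assumption) and
    # record how far the weight moves on every step.
    w = np.zeros(1)
    state = np.zeros(1)
    grad = np.ones(1)
    sizes = []
    for _ in range(steps):
        state = accumulate(state, grad)
        w_new = apply_update(w, grad, state)
        sizes.append(float(abs(w_new[0] - w[0])))
        w = w_new
    return sizes


adagrad_sizes = step_sizes(adagrad_accumulate, 1000)
rmsprop_sizes = step_sizes(rmsprop_accumulate, 1000)

print("AdaGrad first/last step:", adagrad_sizes[0], adagrad_sizes[-1])
print("RMSprop first/last step:", rmsprop_sizes[0], rmsprop_sizes[-1])
```

With a constant gradient, the AdaGrad step keeps shrinking because its sum grows without bound, while the RMSprop step settles near `lr` because the moving average levels off. That shrinking step is the learning rate decay RMSprop was designed to avoid.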

The gist of RMSprop is to maintain a moving (discounted) average of the square of gradients and to divide the gradient by the root of this average. The optimizer implementation described here uses plain momentum, not Nesterov momentum.

AdaGrad, the algorithm RMSprop improves on, works well with sparse data, meaning that some features contain many zero values. RMSprop trains artificial neural networks (ANNs) with adaptive learning rates and is derived from the concepts of gradient descent and Rprop.
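The two-step gist above, combined with plain (non-Nesterov) momentum, might look roughly like the following NumPy sketch. It is an illustration under stated assumptions rather than any library's actual code: the function name `rmsprop_momentum_step`, the placement of `eps` inside the square root, the default hyperparameters, and the toy quadratic loss are all hypothetical choices made for this example.

```python
import numpy as np


def rmsprop_momentum_step(w, grad, rms, mom,
                          lr=0.001, rho=0.9, momentum=0.0, eps=1e-7):
    # 1) Moving (discounted) average of the squared gradients.
    rms = rho * rms + (1.0 - rho) * grad ** 2
    # 2) Divide the gradient by the root of that average.
    increment = lr * grad / np.sqrt(rms + eps)
    # Plain momentum: accumulate the scaled increments, no look-ahead step.
    mom = momentum * mom + increment
    return w - mom, rms, mom


def loss(w):
    # Toy objective (an assumption for the demo): f(w) = 0.5 * ||w||^2.
    return 0.5 * float(np.sum(w ** 2))


w = np.array([5.0, -3.0])
rms = np.zeros_like(w)
mom = np.zeros_like(w)
initial_loss = loss(w)

for _ in range(500):
    grad = w  # gradient of 0.5 * ||w||^2 is w itself
    w, rms, mom = rmsprop_momentum_step(w, grad, rms, mom,
                                        lr=0.01, momentum=0.9)

final_loss = loss(w)
print("loss before:", initial_loss, "loss after:", final_loss)
```

Setting `momentum=0.0` reduces the step to the basic RMSprop update. Because the gradient is normalized by its own running magnitude, the step size stays on the order of `lr` even near the minimum, so a small residual oscillation around the optimum is expected unless the learning rate is decayed.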

