RMSprop

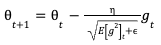

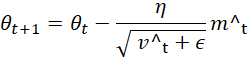

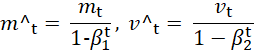

RMSprop (root mean square propagation) is an adaptive learning rate optimization algorithm for training neural networks. It adapts the learning rate using a moving average of squared gradients, with the aim of improving the performance and speed of training deep learning models.
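As a rough illustration of that idea, here is a minimal pure-Python sketch of one RMSprop update. The function name `rmsprop_step`, the list-based layout, and the default values for `lr`, `alpha` and `eps` are assumptions made for this example, not taken from any particular library.

```python
import math

def rmsprop_step(params, grads, sq_avg, lr=0.01, alpha=0.9, eps=1e-8):
    """Apply one RMSprop update to parallel lists of floats.

    Returns the updated parameters and the updated moving averages
    of squared gradients. Nothing is modified in place.
    """
    new_params, new_sq_avg = [], []
    for p, g, v in zip(params, grads, sq_avg):
        # Exponential moving average of the squared gradient.
        v = alpha * v + (1.0 - alpha) * g * g
        # Divide the step by the root of that average (the "RMS").
        p = p - lr * g / (math.sqrt(v) + eps)
        new_params.append(p)
        new_sq_avg.append(v)
    return new_params, new_sq_avg

# Two parameters whose gradients differ by a factor of 100.
params, sq_avg = rmsprop_step([1.0, 1.0], [0.1, 10.0], [0.0, 0.0])
print(params)
```

In this sketch both parameters move by almost the same amount on the first step, even though one gradient is 100 times larger than the other, because each gradient is divided by its own running magnitude.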

A Complete Guide to the RMSprop Optimizer (Built In)

Library implementations expose a few optional settings. In PyTorch's RMSprop optimizer, centered (bool, optional) computes the centered RMSprop if true, in which the gradient is normalized by an estimate of its variance. capturable (bool, optional) indicates whether the instance is safe to capture in a graph, whether for CUDA graphs or for torch.compile support. Keras also provides an RMSprop class with its own parameters and options.

RMSprop, short for root mean square propagation, is a widely used optimization algorithm in deep learning. It maintains a per-parameter learning rate by keeping track of a moving average of the squared gradients for each parameter, so the step size follows the recent magnitude of each parameter's gradient.
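To make the centered option more concrete, the following is a small pure-Python sketch of a centered RMSprop step for a single scalar parameter. It is not a library implementation: the function name `centered_rmsprop_step` and its defaults are assumptions for illustration. The idea is to track a moving average of the gradient alongside the moving average of its square, and to normalize by the resulting variance estimate.

```python
import math

def centered_rmsprop_step(p, g, sq_avg, g_avg, lr=0.01, alpha=0.99, eps=1e-8):
    """One centered RMSprop step for a scalar parameter p with gradient g."""
    # Moving average of the squared gradient (second moment).
    sq_avg = alpha * sq_avg + (1.0 - alpha) * g * g
    # Moving average of the gradient itself (first moment).
    g_avg = alpha * g_avg + (1.0 - alpha) * g
    # Variance estimate; clamp at zero to guard against rounding error.
    var = max(sq_avg - g_avg * g_avg, 0.0)
    p = p - lr * g / (math.sqrt(var) + eps)
    return p, sq_avg, g_avg

p, sq_avg, g_avg = centered_rmsprop_step(p=1.0, g=2.0, sq_avg=0.0, g_avg=0.0)
print(p, sq_avg, g_avg)
```

Because the variance estimate is never larger than the plain squared-gradient average, the centered step in this sketch is at least as large as the uncentered one for the same inputs.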

Because it adapts the learning rate for each parameter, RMSprop is effective for training deep neural networks and for solving non-convex optimization problems. Its applications include reinforcement learning (RL) and generative adversarial networks (GANs).

RMSprop was proposed by Geoffrey Hinton. It is designed to address the problems of non-stationary objectives and oscillations in steep dimensions during training, which often occur with standard gradient descent.
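The effect of a steep dimension can be sketched with a toy comparison. The objective, starting point, learning rate and step count below are all assumptions chosen for illustration: f(x, y) = 0.5 * (x^2 + 100 * y^2), where y is the steep direction. With this learning rate, plain gradient descent overshoots in y on every step, while the RMSprop sketch normalizes each coordinate separately.

```python
import math

def grad(x, y):
    # Gradient of f(x, y) = 0.5 * (x**2 + 100 * y**2); y is the steep dimension.
    return x, 100.0 * y

def run_gd(steps, lr):
    """Plain gradient descent from (1, 1)."""
    x, y = 1.0, 1.0
    for _ in range(steps):
        gx, gy = grad(x, y)
        x, y = x - lr * gx, y - lr * gy
    return x, y

def run_rmsprop(steps, lr, alpha=0.9, eps=1e-8):
    """RMSprop from (1, 1) with one squared-gradient average per coordinate."""
    x, y = 1.0, 1.0
    vx, vy = 0.0, 0.0
    for _ in range(steps):
        gx, gy = grad(x, y)
        vx = alpha * vx + (1.0 - alpha) * gx * gx
        vy = alpha * vy + (1.0 - alpha) * gy * gy
        x -= lr * gx / (math.sqrt(vx) + eps)
        y -= lr * gy / (math.sqrt(vy) + eps)
    return x, y

gd_x, gd_y = run_gd(steps=200, lr=0.021)
rms_x, rms_y = run_rmsprop(steps=200, lr=0.021)
print("gradient descent:", gd_x, gd_y)
print("RMSprop:         ", rms_x, rms_y)
```

In this setup the gradient descent iterate grows without bound along y, since each step multiplies y by 1 - 0.021 * 100 = -1.1, whereas the RMSprop iterate stays close to the minimum in both coordinates. This is a toy case, and behavior on real training losses may differ.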
