Revolutionizing AI Evaluation: The Agent-as-a-Judge Approach | Fusion Chat

Discover the Agent-as-a-Judge framework for evaluating AI systems, which aims to improve feedback and advance AI development. In this review, we define the Agent-as-a-Judge concept, trace its evolution from single-model judges to dynamic multi-agent debate frameworks, and critically examine their strengths and shortcomings.

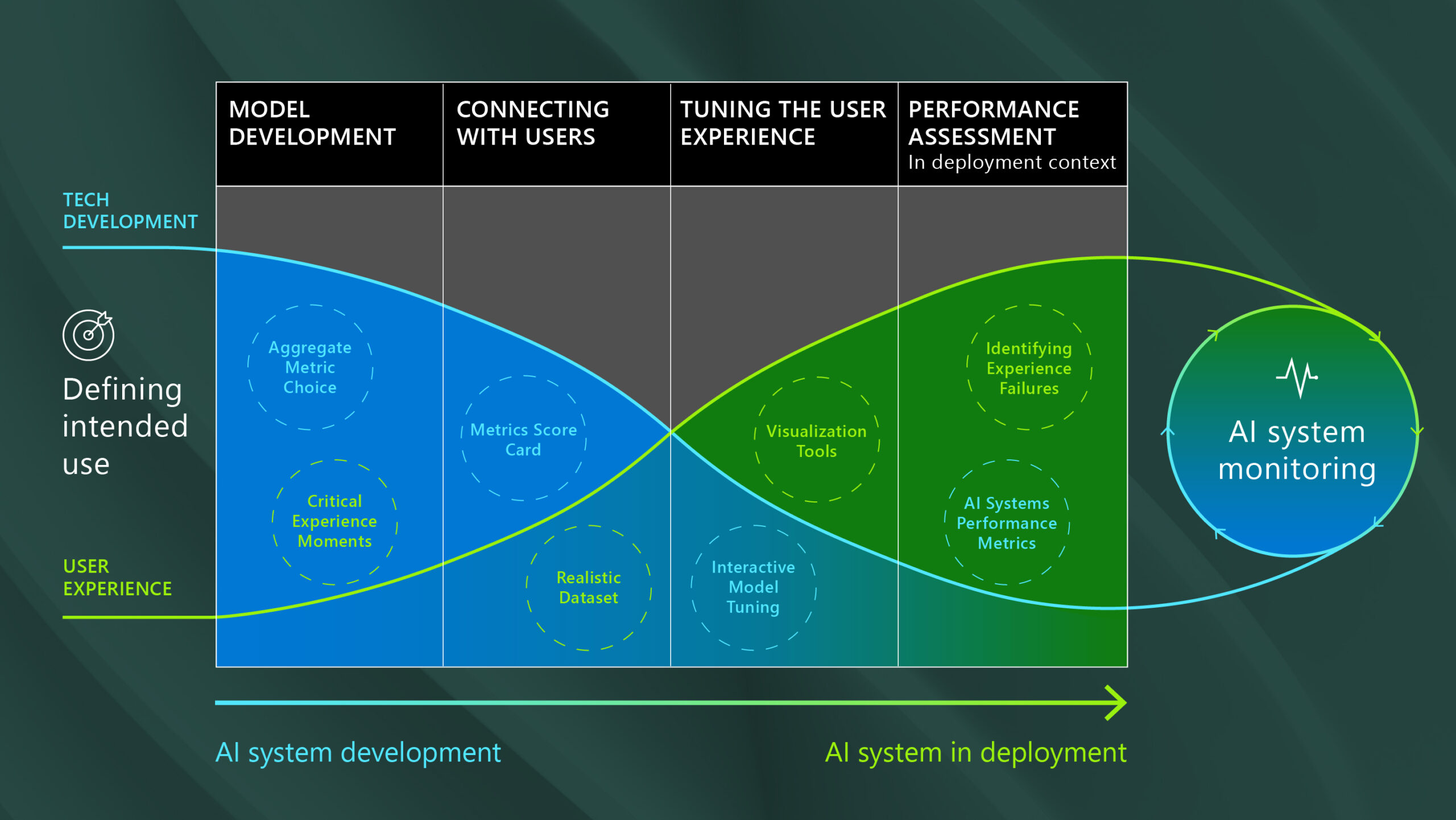

What is an "AI agent as a judge"? At its core, the approach uses one or more AI agents to evaluate the outputs and behaviors of another AI. The paper that introduced the framework proposes using agentic systems to evaluate other AI agents, addressing the limitations of current evaluation methods, which either ignore intermediate steps or are too labor-intensive. Agent-as-a-Judge evaluates AI systems by decomposing tasks with dynamic planning and multi-agent coordination. It aims to make evaluation more reliable by countering parametric bias and shallow reasoning through tool-augmented verification and persistent memory. Below, we outline key insights from the discussion and highlight the limitations of traditional methods.
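To make the idea of judging intermediate steps concrete, here is a minimal sketch in Python. It is not the framework's actual implementation: the `Step` and `Requirement` types, the `judge_trajectory` function, and the example trajectory are all hypothetical names chosen for illustration, and the checks are simple rule-based stand-ins for what would in practice be an agent using tools or an LLM.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    """One recorded step of the agent under evaluation (hypothetical schema)."""
    action: str
    output: str


@dataclass
class Requirement:
    """A task requirement plus a check run over the whole trajectory."""
    name: str
    check: Callable[[List[Step]], bool]


def judge_trajectory(steps: List[Step], requirements: List[Requirement]) -> Dict[str, bool]:
    """Return a per-requirement verdict instead of a single final score."""
    return {req.name: req.check(steps) for req in requirements}


# Illustrative trajectory: the agent wrote a script and ran it, but saved no model.
trajectory = [
    Step(action="write_file", output="train.py"),
    Step(action="run_command", output="accuracy=0.91"),
]

requirements = [
    Requirement(
        "creates training script",
        lambda steps: any(s.action == "write_file" and s.output.endswith(".py") for s in steps),
    ),
    Requirement(
        "reports accuracy",
        lambda steps: any("accuracy=" in s.output for s in steps),
    ),
    Requirement(
        "saves model",
        lambda steps: any(s.output.endswith(".pt") for s in steps),
    ),
]

verdicts = judge_trajectory(trajectory, requirements)
for name, satisfied in verdicts.items():
    print(f"{name}: {'satisfied' if satisfied else 'not satisfied'}")
```

The point of the sketch is the shape of the result: a verdict per requirement, derived from the recorded steps, rather than one pass/fail judgment on the final output.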

Traditional evaluation methods have limitations: human evaluation is slow and subjective, automated scoring lacks depth, and benchmark-based testing often fails to capture real-world performance. Agent-as-a-Judge is a newer paradigm aimed at these gaps. In the paper [1], the authors introduce the Agent-as-a-Judge framework, in which agentic systems are used to evaluate agentic systems; it is a natural extension of LLM-as-a-Judge. Moving beyond traditional human and LLM-based evaluation, the approach adds real-time, step-by-step analysis to measure reasoning, execution, and outcomes, and it is presented as a way to optimize performance and reduce costs in AI app development.

