Revisiting Min-Max Optimization Problem in Adversarial Training

Recent works demonstrate that convolutional neural networks are susceptible to adversarial examples, where the input images look similar to natural images but are classified incorrectly by the model. Our proposed method offers significant resistance and a concrete security guarantee against multiple adversaries. The goal of this paper is to act as a stepping stone for a new variation of deep.

Related work studies the adversarial robustness of neural networks through the lens of robust optimization and suggests security against a first-order adversary as a natural and broad security guarantee. This paper revisits the min-max optimization problem that is central to adversarial training of deep neural networks. The authors propose several novel methods, including targeted min-max optimization, stochastic batch norm, and gradient smoothing, to improve the effectiveness of this optimization.
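
For reference, the min-max problem behind the robust optimization view is usually written as below. The notation is an assumption chosen for illustration and does not come from the text above: $\theta$ are the model parameters, $\mathcal{D}$ the data distribution, $L$ the training loss, $f_\theta$ the network, $\delta$ the perturbation, and $\epsilon$ the perturbation budget under an $\ell_p$ norm.

$$
\min_{\theta} \; \mathbb{E}_{(x, y) \sim \mathcal{D}} \left[ \max_{\|\delta\|_p \le \epsilon} L\big(f_\theta(x + \delta),\, y\big) \right]
$$

The inner maximization plays the role of the attacker, who searches for the worst-case perturbation of each input; the outer minimization plays the role of the defender, who fits the parameters against that worst case.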

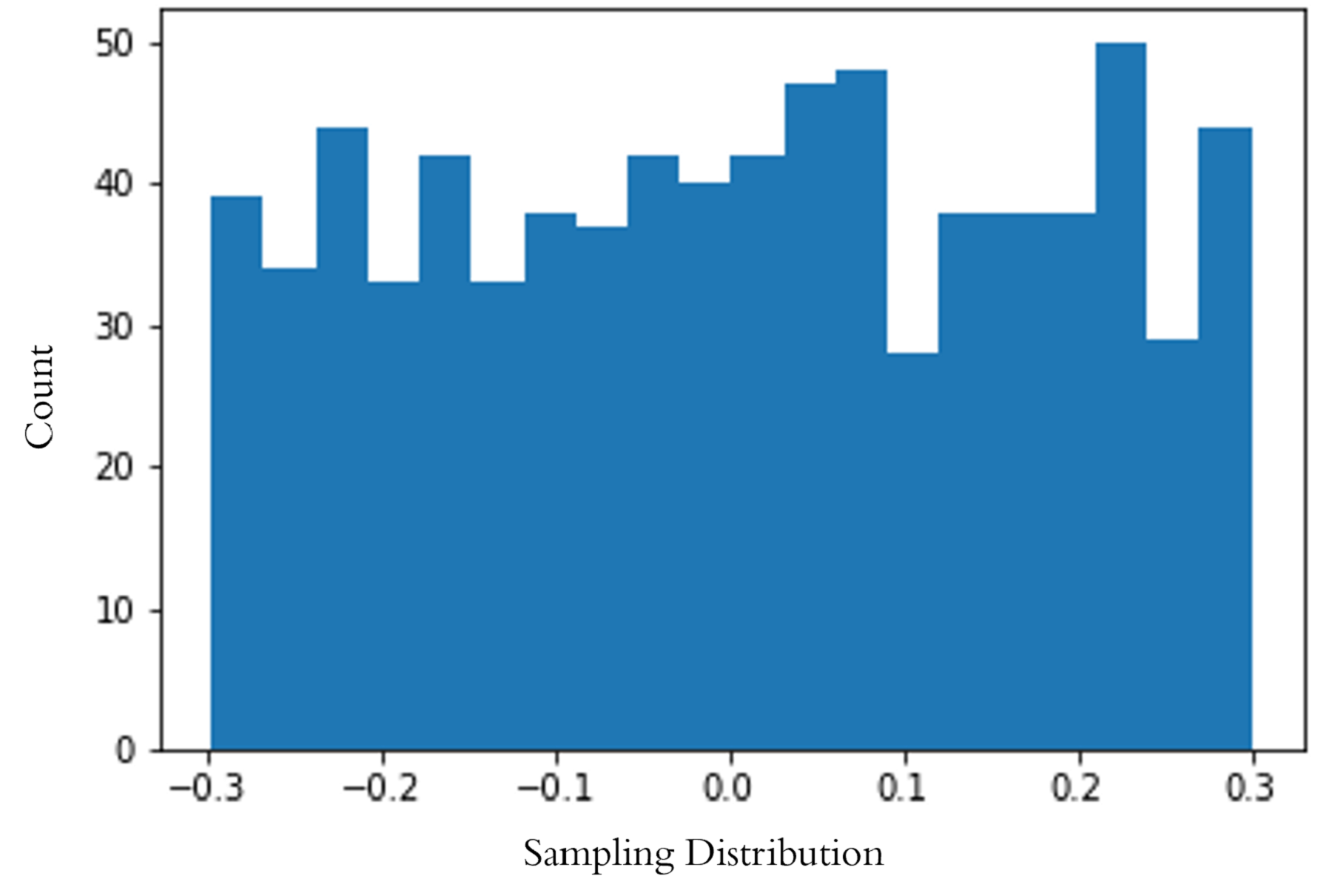

Adversarial training is built on min-max optimization (MMO), where the minimizer (i.e., the defender) seeks a robust model that minimizes the worst-case training loss in the presence of adversarial examples crafted by the maximizer (i.e., the attacker). Of course, the question arises as to which adversarial examples we should train on. Supposing we want to optimize the min-max objective using gradient descent, how do we do so?
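
One common way to approach the min-max objective with gradient descent is to approximate the attacker's maximization with a few steps of projected gradient ascent on the input, then take an ordinary descent step on the loss at the perturbed inputs. The sketch below illustrates that loop on a toy logistic-regression model in plain Python. It is not the method proposed in the paper; the function names (`make_toy_data`, `pgd_attack`, `adversarial_train`), the L-infinity budget `epsilon`, the step size, and the toy data are all assumptions made for illustration.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def loss(w, b, xs, ys):
    """Mean logistic (cross-entropy) loss over a dataset."""
    tiny = 1e-12
    total = 0.0
    for x, y in zip(xs, ys):
        p = predict(w, b, x)
        total -= y * math.log(p + tiny) + (1 - y) * math.log(1 - p + tiny)
    return total / len(xs)

def sign(v):
    return (v > 0) - (v < 0)

def pgd_attack(w, b, x, y, epsilon, step, n_steps):
    """Inner maximization (attacker): signed-gradient ascent on the loss,
    projected back onto the L-infinity ball of radius epsilon around x."""
    delta = [0.0] * len(x)
    for _ in range(n_steps):
        x_adv = [xi + di for xi, di in zip(x, delta)]
        err = predict(w, b, x_adv) - y        # dLoss/dlogit
        grad_x = [err * wi for wi in w]       # dLoss/dx for a linear model
        delta = [max(-epsilon, min(epsilon, di + step * sign(g)))
                 for di, g in zip(delta, grad_x)]
    return [xi + di for xi, di in zip(x, delta)]

def adversarial_train(xs, ys, epsilon=0.1, lr=0.5, epochs=100):
    """Outer minimization (defender): gradient descent on the loss
    evaluated at the attacked inputs."""
    w, b = [0.0] * len(xs[0]), 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for x, y in zip(xs, ys):
            x_adv = pgd_attack(w, b, x, y, epsilon, epsilon / 4, 5)
            err = predict(w, b, x_adv) - y
            grad_w = [g + err * xi / n for g, xi in zip(grad_w, x_adv)]
            grad_b += err / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b

def make_toy_data(n_per_class, seed=0):
    """Two Gaussian blobs in 2D, labelled 0 and 1 (hypothetical data)."""
    rng = random.Random(seed)
    xs = [[rng.gauss(-1, 0.5), rng.gauss(-1, 0.5)] for _ in range(n_per_class)]
    xs += [[rng.gauss(1, 0.5), rng.gauss(1, 0.5)] for _ in range(n_per_class)]
    ys = [0] * n_per_class + [1] * n_per_class
    return xs, ys

if __name__ == "__main__":
    xs, ys = make_toy_data(40)
    w, b = adversarial_train(xs, ys)
    adv = [pgd_attack(w, b, x, y, 0.1, 0.025, 5) for x, y in zip(xs, ys)]
    print("clean loss:", loss(w, b, xs, ys))
    print("adversarial loss:", loss(w, b, adv, ys))
```

The gradient of the outer loss is taken at the perturbed inputs while the perturbation itself is treated as fixed, which is the usual simplification in this style of training. For a deep network the hand-written gradients would be replaced by automatic differentiation, and the inner loop would only find an approximate maximizer.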
