Residual Connections and Layer Normalization: Layer Normalization vs Batch Normalization in the Transformer

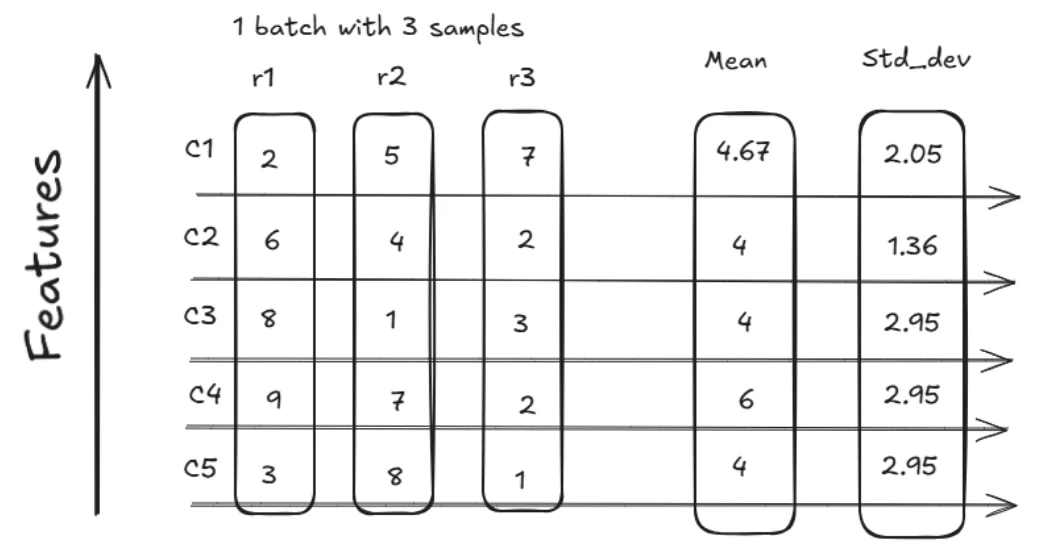

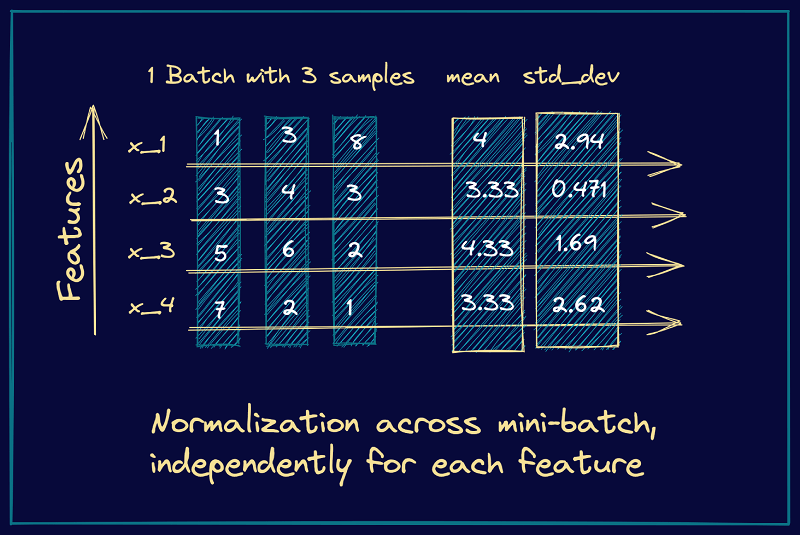

Layer Normalization vs Batch Normalization

The following details four common normalization methods (batch normalization, layer normalization, weight normalization, and RMS normalization) and analyzes their advantages, disadvantages, and applicable scenarios. It also covers the key differences between batch normalization and layer normalization in deep learning, with use cases, pros, and guidance on when to apply each.
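To make the four methods concrete, here is a minimal NumPy sketch of which quantities each one normalizes. It is an illustration under simplifying assumptions, not a reference implementation: the learnable scale and shift parameters are omitted, batch normalization uses only the current batch (no running statistics), and the function names and tensor shapes are chosen for this example.

```python
import numpy as np

def batch_norm(x, eps=1e-5):
    # Normalize each feature (column) with statistics taken over the batch (rows).
    mean = x.mean(axis=0, keepdims=True)
    var = x.var(axis=0, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def layer_norm(x, eps=1e-5):
    # Normalize each sample (row) with statistics taken over its own features.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def rms_norm(x, eps=1e-5):
    # Rescale each sample by its root mean square; the mean is not subtracted.
    rms = np.sqrt((x ** 2).mean(axis=-1, keepdims=True) + eps)
    return x / rms

def weight_norm(v, g):
    # Reparameterize a weight matrix of shape (out, in): each row keeps the
    # direction of v and gets its length from the separate parameter g.
    return g[:, None] * v / np.linalg.norm(v, axis=1, keepdims=True)

rng = np.random.default_rng(0)
x = rng.normal(loc=3.0, scale=2.0, size=(4, 8))  # (batch, features), hypothetical sizes

bn_out = batch_norm(x)    # per-feature mean ~0 across the batch
ln_out = layer_norm(x)    # per-sample mean ~0 across features
rms_out = rms_norm(x)     # per-sample mean square ~1

v = rng.normal(size=(3, 8))
g = np.array([1.0, 2.0, 0.5])
w = weight_norm(v, g)     # row norms equal g
```

The first three functions act on activations and differ only in the axis the statistics are computed over (and, for RMS normalization, in skipping the mean). Weight normalization instead acts on the parameters, so it does not depend on the batch at all.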

Layer Normalization vs Batch Normalization: What's the Difference?

Here's how you can implement batch normalization (BN) and layer normalization (LN) using PyTorch. We'll cover a simple feedforward network with BN and an RNN with LN. This week, I dove into something that seemed simple at first but turned into one of the most "aha!"-inducing topics yet: layer normalization vs batch normalization. Explore the differences between layer normalization and batch normalization, how these methods improve the speed and efficiency of artificial neural networks, and how you can start learning more about using them. In this chapter, we will look at the role of layer normalization and residual connections, how they work, their benefits, and some practical considerations when implementing them in a transformer model.
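As a rough sketch of the two cases mentioned above, the PyTorch code below defines a feedforward network with `nn.BatchNorm1d` and a small recurrent model that applies `nn.LayerNorm` to the hidden state at every time step. The class names `FeedForwardBN` and `RNNWithLN`, the layer sizes, and the choice to normalize the hidden state after the cell update are assumptions made for this example; other placements of LN inside a recurrent cell are possible.

```python
import torch
import torch.nn as nn

class FeedForwardBN(nn.Module):
    """Feedforward network with batch normalization after the first linear layer."""

    def __init__(self, in_dim=16, hidden_dim=32, out_dim=4):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(in_dim, hidden_dim),
            nn.BatchNorm1d(hidden_dim),  # statistics over the batch dimension
            nn.ReLU(),
            nn.Linear(hidden_dim, out_dim),
        )

    def forward(self, x):  # x: (batch, in_dim)
        return self.net(x)

class RNNWithLN(nn.Module):
    """Simple RNN that layer-normalizes the hidden state at each time step."""

    def __init__(self, in_dim=16, hidden_dim=32):
        super().__init__()
        self.cell = nn.RNNCell(in_dim, hidden_dim)
        self.norm = nn.LayerNorm(hidden_dim)  # statistics over the feature dimension

    def forward(self, x):  # x: (batch, seq_len, in_dim)
        h = x.new_zeros(x.size(0), self.cell.hidden_size)
        outputs = []
        for t in range(x.size(1)):
            # Assumption for this sketch: normalize after the cell update.
            h = self.norm(self.cell(x[:, t], h))
            outputs.append(h)
        return torch.stack(outputs, dim=1)  # (batch, seq_len, hidden_dim)

torch.manual_seed(0)
ff_out = FeedForwardBN()(torch.randn(8, 16))
rnn_out = RNNWithLN()(torch.randn(8, 5, 16))
```

BN fits the feedforward case because every batch has the same fixed set of features. LN fits the recurrent case because its statistics are computed per sample and per time step, so they do not depend on batch size or sequence length.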

Batch Layer Normalization: A New Normalization Layer for CNNs and RNN

I'm Aman, a data scientist and AI mentor. 🚀 Topics for the video: residual connections and layer normalization, and layer normalization vs batch normalization.

Residual connections and layer normalization are essential for training deep transformer networks. They solve two critical problems: vanishing gradients and training instability. Residual connections give gradients an identity path around each sub-layer, while layer normalization keeps the scale of activations stable from layer to layer. This "post-LN" configuration (normalization after the residual addition) is the original design from the original Transformer paper. Alternative orderings such as "pre-LN" (normalize before the sub-layers) have been explored in subsequent research but are not used in this implementation.

Understanding batch normalization and layer normalization is the difference between models that struggle and models that soar. This guide will show you exactly what normalization does, why it works, and how to use it effectively in your neural networks.
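The two orderings can be written side by side in a few lines. The NumPy sketch below is illustrative only: `layer_norm` omits the learnable scale and shift, and `sublayer` is a hypothetical stand-in (a single linear map with ReLU) for the attention or feed-forward sub-layer of a real transformer block.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize over the last (feature) axis; learnable gain and bias omitted.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def post_ln_block(x, sublayer):
    # Post-LN: add the residual first, then normalize the sum.
    return layer_norm(x + sublayer(x))

def pre_ln_block(x, sublayer):
    # Pre-LN: normalize the sub-layer input, then add the untouched residual.
    return x + sublayer(layer_norm(x))

rng = np.random.default_rng(0)
d_model = 8  # hypothetical model width
W = rng.normal(scale=0.1, size=(d_model, d_model))

def sublayer(h):
    # Stand-in for attention or a feed-forward layer.
    return np.maximum(h @ W, 0.0)

x = rng.normal(size=(2, 5, d_model))  # (batch, seq_len, d_model)
post_out = post_ln_block(x, sublayer)
pre_out = pre_ln_block(x, sublayer)
```

In the post-LN block the residual path itself passes through the normalization, so the output is always normalized. In the pre-LN block the input `x` reaches the output unchanged, which keeps a pure identity path through the stack.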
