Regularization: What, Why, and How (Part 1)

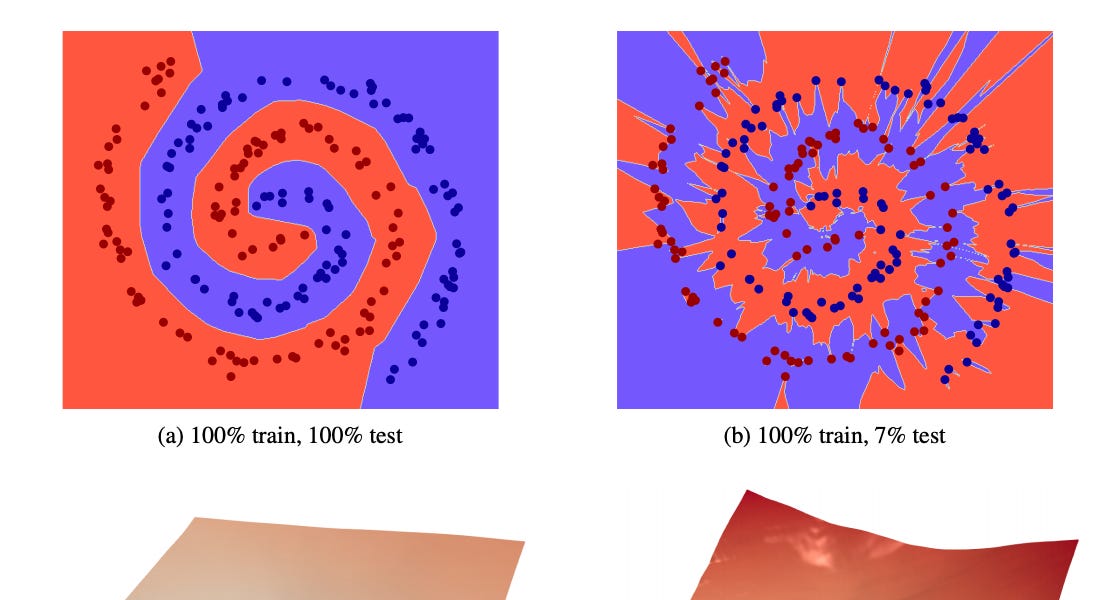

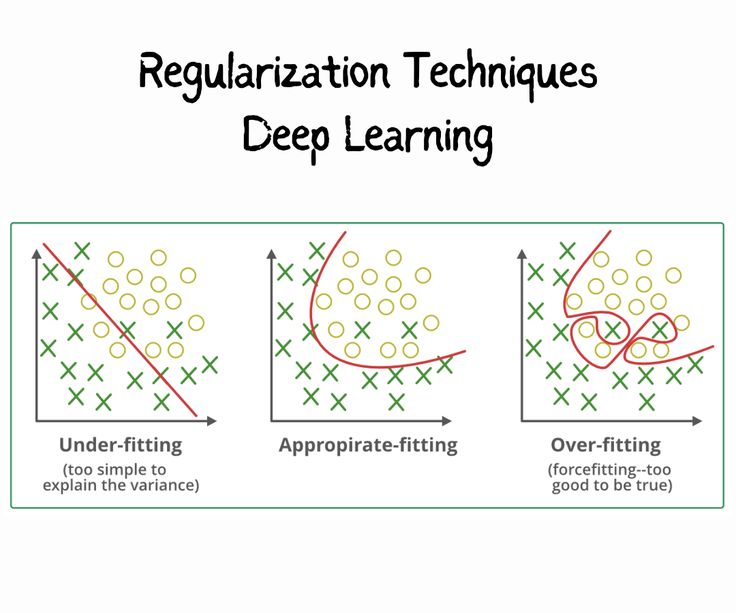

Rapid progress came only after constraints, regularization, and a few other techniques were introduced. But why were earlier models failing in general, and how did regularization fix some of those issues? Let's answer this by first understanding overfitting. Regularization is a technique used in machine learning to prevent overfitting, which otherwise causes models to perform poorly on unseen data. By adding a penalty for complexity, regularization encourages simpler, more generalizable models.
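One common way to write "adding a penalty for complexity" is sketched below. The notation is assumed here for illustration and does not come from the original text: $w$ denotes the model parameters, $L(w)$ the loss on the training data, $\Omega(w)$ a measure of model complexity, and $\lambda \ge 0$ the strength of the penalty.

$$
J(w) = L(w) + \lambda \, \Omega(w)
$$

With $\lambda = 0$ this reduces to the plain training loss. Larger values of $\lambda$ trade some training fit for a simpler model.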

Regularization is a way to constrain the model so that it fits the data accurately without overfitting. It can also be thought of as penalizing unnecessary complexity in the model. Explicit regularization can be accomplished by adding an extra regularization term to, say, a least squares objective function. Typical types of regularization include ℓ2 penalties and ℓ1 penalties. Regularization provides one method for combating overfitting in the data-poor regime, by specifying (either implicitly or explicitly) a set of “preferences” over the hypotheses.
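To make the idea of an extra term on a least squares objective concrete, here is a minimal sketch of an ℓ2 penalty (often called ridge regression) solved in closed form with NumPy. The function name `ridge_fit`, the synthetic data, and the value `lam=10.0` are all assumptions chosen for illustration, not something the original text specifies.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize ||X w - y||^2 + lam * ||w||^2 in closed form."""
    n_features = X.shape[1]
    # Normal equations with the l2 penalty added to the diagonal.
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)

# Hypothetical data-poor setting: 20 samples, 10 features,
# only the first two features actually matter.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 10))
true_w = np.zeros(10)
true_w[:2] = [3.0, -2.0]
y = X @ true_w + rng.normal(scale=0.5, size=20)

w_unreg = ridge_fit(X, y, lam=0.0)   # ordinary least squares
w_ridge = ridge_fit(X, y, lam=10.0)  # l2-penalized fit

# The penalized weight vector has a smaller norm than the unpenalized one.
print(np.linalg.norm(w_unreg), np.linalg.norm(w_ridge))
```

The penalty expresses a preference for small weights: among parameter vectors that fit the data about equally well, the one with the smaller norm wins.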

Regularization is crucial for addressing overfitting, where a model learns the details of the training data too well, in effect memorizing them, and then fails to generalize to new, unseen data. This holds in statistical modelling as much as in machine learning. The goal of regularization is to encourage models to learn the broader patterns within the data rather than memorizing it. Related topics include model generalizability, the bias-variance trade-off, and ℓ2 and ℓ1 penalties, the latter of which can perform automatic feature selection. Optimization finds parameters that minimize the (potentially regularized) loss function, while regularization guides the optimization process toward parameter values that not only fit the training data well but also generalize effectively to new data.
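The automatic feature selection associated with ℓ1 penalties can be illustrated with a short sketch. The code below minimizes an ℓ1-penalized least squares objective with proximal gradient descent (ISTA), which is one of several possible solvers and is swapped in here purely for illustration. The function names, the synthetic data, and `lam=0.5` are assumptions, not part of the original text.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink each entry of v toward zero by t, setting small entries to 0."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def lasso_ista(X, y, lam, n_iter=500):
    """Approximately minimize (1/(2n)) * ||X w - y||^2 + lam * ||w||_1."""
    n, d = X.shape
    w = np.zeros(d)
    # Step size 1/L, where L = sigma_max(X)^2 / n bounds the curvature
    # of the smooth least squares term.
    step = n / np.linalg.norm(X, ord=2) ** 2
    for _ in range(n_iter):
        grad = X.T @ (X @ w - y) / n          # gradient of the smooth part
        w = soft_threshold(w - step * grad, step * lam)
    return w

# Hypothetical data: 100 samples, 10 features, only two are relevant.
rng = np.random.default_rng(1)
X = rng.normal(size=(100, 10))
true_w = np.zeros(10)
true_w[:2] = [3.0, -2.0]
y = X @ true_w + rng.normal(scale=0.5, size=100)

w_l1 = lasso_ista(X, y, lam=0.5)
print("nonzero weights:", np.count_nonzero(w_l1), "of", w_l1.size)
```

The soft-thresholding step sets small coefficients to exactly zero, which is why an ℓ1 penalty tends to drop irrelevant features, whereas an ℓ2 penalty only shrinks them.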
