Regularization Techniques in Deep Learning: Dropout, L-Norm, and Batch Normalization

In this article, we’ll delve into three popular regularization methods: dropout, L-norm (L1 and L2) regularization, and batch normalization, and explore the intuition behind each. We’ll also look at how to combine batch normalization and dropout effectively as regularizers in neural networks, covering the challenges, best practices, and relevant scenarios.
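For reference, the three methods can be written compactly. The notation is ours rather than the article’s: $\mathcal{L}$ is the unregularized loss, $\lambda$ the penalty strength, $q$ the drop probability, $h_i$ a unit’s activation, $m_i$ its random keep mask, and $\mu_B$, $\sigma_B^2$ the mean and variance over a mini-batch $B$, with learnable scale $\gamma$ and shift $\beta$.

$$
\begin{aligned}
\text{L-norm penalty:}\quad & \tilde{\mathcal{L}}(\mathbf{w}) = \mathcal{L}(\mathbf{w}) + \lambda \lVert \mathbf{w} \rVert_p^p, \qquad p \in \{1, 2\} \\
\text{Inverted dropout (training):}\quad & \tilde{h}_i = \frac{m_i}{1 - q}\, h_i, \qquad m_i \sim \operatorname{Bernoulli}(1 - q) \\
\text{Batch normalization:}\quad & \hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}, \qquad y_i = \gamma\, \hat{x}_i + \beta
\end{aligned}
$$

With $p = 1$ the penalty tends to drive weights to exactly zero (sparsity); with $p = 2$ it shrinks all weights smoothly. The $1/(1-q)$ factor keeps $\mathbb{E}[\tilde{h}_i] = h_i$, so no rescaling is needed at inference.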

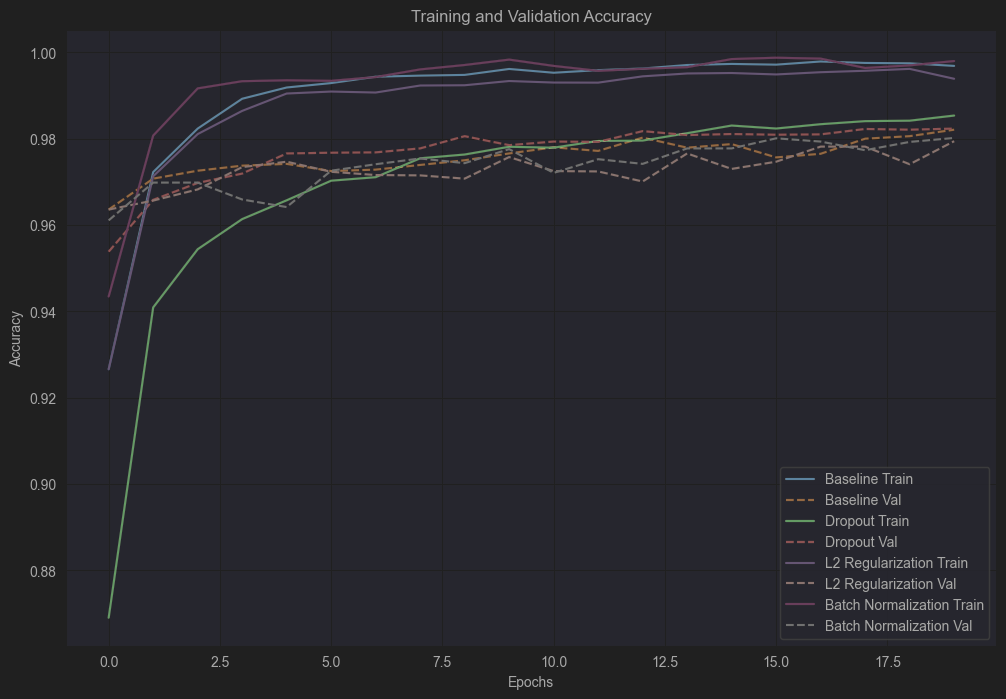

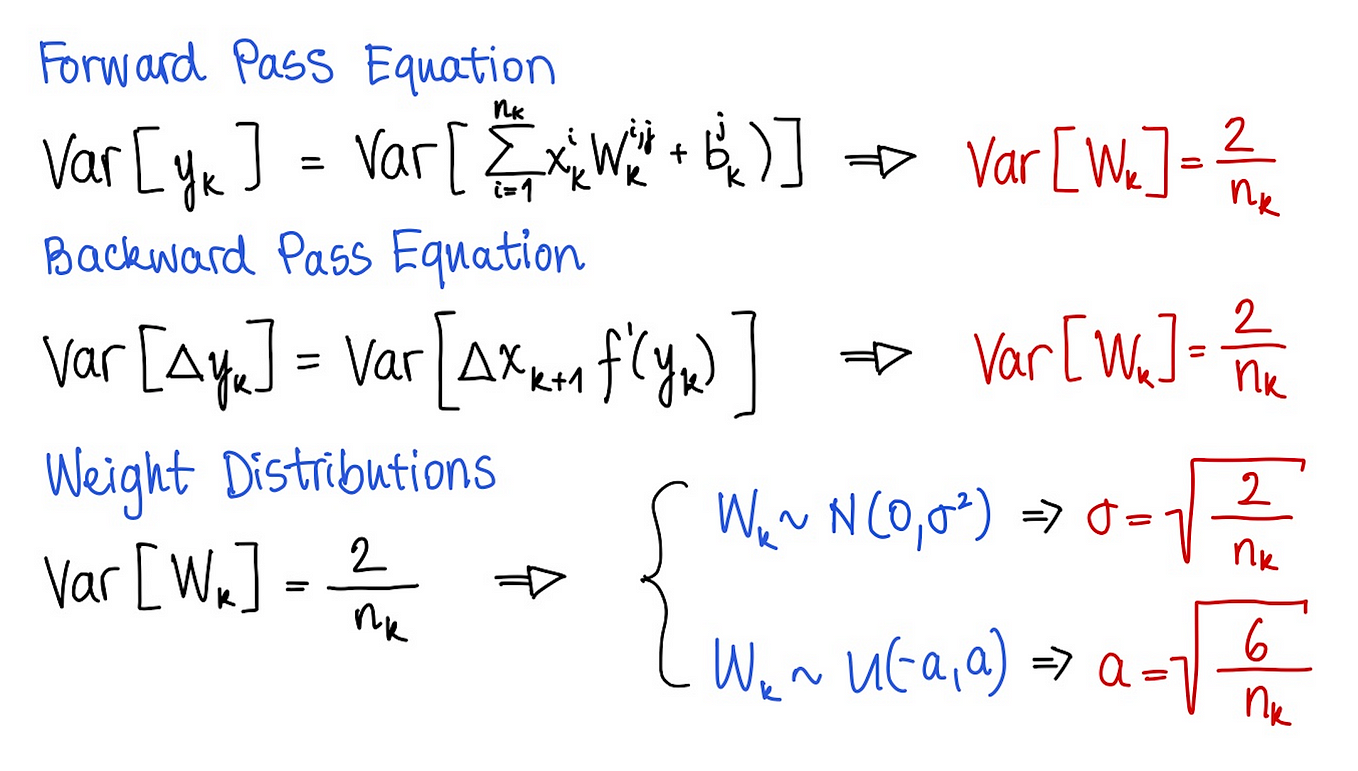

This document covers regularization techniques used in deep learning to prevent overfitting and improve generalization. We focus on dropout, batch normalization, and weight decay, describing their principles, their implementations in PyTorch, and best practices.

Regularization reduces overfitting by penalizing or otherwise constraining overly complex models. In TensorFlow, regularization can be added to neural networks through techniques such as L1 and L2 regularization, dropout, and early stopping.

L1 and L2 regularization, dropout, batch normalization, and early stopping are key strategies for improving performance and avoiding overfitting in neural networks, including multilayer perceptrons (MLPs). Choosing among them involves the bias-variance tradeoff: a model that is too simple underfits (high bias), while a model that is too flexible overfits (high variance), so the goal is a balance between the two.
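To make the penalty-based methods and early stopping concrete, here is a minimal sketch in plain Python. Using only the standard library is an assumption made so it runs without PyTorch or TensorFlow; the function and class names are hypothetical. It shows L1 and L2 penalty terms, an SGD step with coupled weight decay, and a patience-based early-stopping check. In the frameworks themselves, PyTorch exposes weight decay through the `weight_decay` argument of optimizers such as `torch.optim.SGD`, and Keras provides `tf.keras.regularizers.l1` / `l2` and the `tf.keras.callbacks.EarlyStopping` callback.

```python
import math


def l1_penalty(weights, lam):
    """L1 term: lam * sum(|w|). Tends to push weights to exactly zero."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 term: lam * sum(w^2). Shrinks all weights toward zero."""
    return lam * sum(w * w for w in weights)


def sgd_step(weights, grads, lr, weight_decay=0.0):
    """One plain SGD step with coupled weight decay (hypothetical helper).

    Adding weight_decay * w to each gradient equals the gradient of the
    penalty (weight_decay / 2) * sum(w^2), so this is L2 regularization
    folded directly into the update rule.
    """
    return [w - lr * (g + weight_decay * w) for w, g in zip(weights, grads)]


class EarlyStopping:
    """Signal a stop when validation loss fails to improve for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = math.inf
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss      # new best: reset the patience counter
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1      # no meaningful improvement this epoch
        return self.bad_epochs >= self.patience
```

Even when the data gradient is zero, `sgd_step` shrinks each weight by `lr * weight_decay * w`, which is the shrinkage effect of the L2 penalty. For adaptive optimizers such as Adam, this coupled form is not equivalent to decoupled weight decay (as in `torch.optim.AdamW`), so the two should not be treated as interchangeable there.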

Three common tools against overfitting are dropout, batch normalization, and early stopping. When dropout and batch normalization are used together, their interaction deserves attention: dropout behaves differently during training and inference, while batch normalization relies on activation statistics estimated during training, so combining them without care can hurt performance.

Regularization matters because it improves the robustness and generalization of machine learning models. Dropout in particular is valued because it mimics ensemble learning, effectively training many thinned subnetworks, and reduces the network’s dependence on any specific neuron, which helps reduce overfitting.
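The train/inference asymmetry behind both dropout and batch normalization, which is why their combination needs care, shows up clearly in a short plain-Python sketch. It is stdlib-only by assumption, and all names are hypothetical. It implements inverted dropout and single-feature batch normalization with running statistics. The PyTorch counterparts are `torch.nn.Dropout` and `torch.nn.BatchNorm1d`, whose behavior switches with `model.train()` and `model.eval()`. As a simplification, this sketch uses the biased batch variance everywhere, whereas PyTorch’s running variance is updated with the unbiased estimate.

```python
import math
import random


def dropout(x, p, training, rng=random):
    """Inverted dropout on a list of activations.

    In training, each unit is zeroed with probability p and survivors are
    scaled by 1 / (1 - p), keeping the expected activation unchanged.
    At inference the input passes through untouched.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(x)
    keep = 1.0 - p
    return [xi / keep if rng.random() < keep else 0.0 for xi in x]


class BatchNorm1D:
    """Batch normalization for one feature over a batch of scalars (hypothetical)."""

    def __init__(self, momentum=0.1, eps=1e-5):
        self.gamma, self.beta = 1.0, 0.0          # learnable scale and shift
        self.momentum, self.eps = momentum, eps
        self.running_mean, self.running_var = 0.0, 1.0

    def __call__(self, batch, training):
        if training:
            # Normalize with the current mini-batch statistics...
            mean = sum(batch) / len(batch)
            var = sum((b - mean) ** 2 for b in batch) / len(batch)
            # ...and update the running estimates used later at inference.
            m = self.momentum
            self.running_mean = (1 - m) * self.running_mean + m * mean
            self.running_var = (1 - m) * self.running_var + m * var
        else:
            mean, var = self.running_mean, self.running_var
        inv_std = 1.0 / math.sqrt(var + self.eps)
        return [self.gamma * (b - mean) * inv_std + self.beta for b in batch]
```

Because dropout is active only in training, a batch-normalization layer placed after it accumulates running statistics on dropout-perturbed activations and then sees unperturbed ones at inference. One commonly suggested mitigation is to apply dropout only after the last batch-normalization layer.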
