Regularization Part I

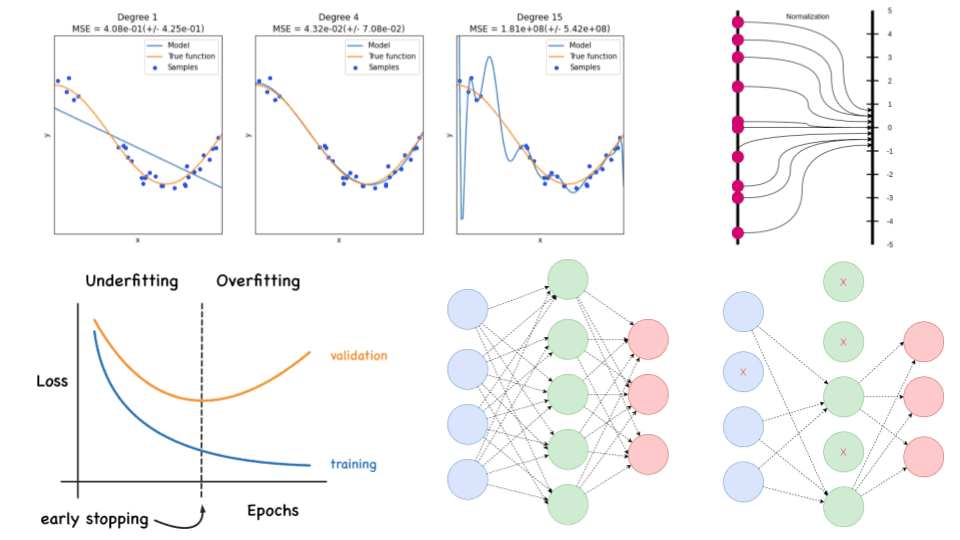

Regularization is a technique used in machine learning to prevent overfitting, which otherwise causes models to perform poorly on unseen data. By adding a penalty for complexity, regularization encourages simpler and more generalizable models. It provides one method for combating overfitting in the data-poor regime by specifying, either implicitly or explicitly, a set of "preferences" over the hypotheses.

In this exploration of regularization in machine learning, using linear regression as our framework, we delve into critical concepts such as overfitting, underfitting, and the bias-variance trade-off, which provide a foundational understanding of model performance.

A larger data set helps by throwing away useless hypotheses, and regularization helps as well. Classical regularization covers some principal ways to constrain hypotheses; other types of regularization include data augmentation and early stopping.

Machine learning can generally be distilled to an optimization problem: choose a classifier (a function, or hypothesis) from a set of functions that minimizes an objective function. Clearly we want part of this function to measure performance on the training set, but this is insufficient. Explicit regularization can be accomplished by adding an extra regularization term to, say, a least-squares objective function. Typical types of regularization include ℓ2 penalties and ℓ1 penalties.
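As a minimal sketch of explicit regularization, the snippet below adds an ℓ2 penalty to a least-squares objective and solves it in closed form (commonly called ridge regression). The function name `ridge_fit`, the synthetic data, and the penalty strength `lam=5.0` are illustrative assumptions, not taken from the source. An ℓ1 penalty generally has no closed-form solution of this kind and is usually handled with an iterative solver, so it is left out here.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize ||X w - y||^2 + lam * ||w||^2 in closed form."""
    n_features = X.shape[1]
    # Normal equations with the penalty added to the diagonal.
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)

# Synthetic, data-poor setting: 20 samples, 10 features (assumed for illustration).
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 10))
true_w = rng.normal(size=10)
y = X @ true_w + 0.5 * rng.normal(size=20)

w_plain = ridge_fit(X, y, lam=0.0)  # ordinary least squares
w_ridge = ridge_fit(X, y, lam=5.0)  # l2-penalized least squares

norm_plain = np.linalg.norm(w_plain)
norm_ridge = np.linalg.norm(w_ridge)
print("weight norm without penalty:", norm_plain)
print("weight norm with penalty:   ", norm_ridge)
```

The penalized solution has a smaller weight norm than the unpenalized one, which is the "preference" for simpler hypotheses expressed as a term in the objective.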

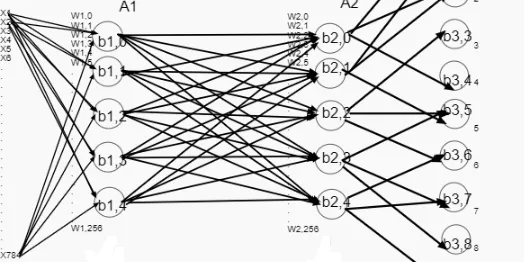

This article explores five popular regularization techniques: L1 regularization, L2 regularization, dropout, data augmentation, and early stopping. We start with a short introduction to regularization and the general problem of overfitting, which occurs when a model is too complex and fits the training data too closely but fails to generalize to new, unseen data.

Making the case for regularization concrete is deceptively difficult, involving a foray into statistical learning theory. However, it turns out that we can also justify regularization from an optimization point of view: adding regularization makes the function easier to optimize.
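One way to see the optimization argument, sketched here for a least-squares problem with an ℓ2 penalty: the penalty adds `lam` to every eigenvalue of the Hessian, which lowers its condition number, and gradient-based methods on quadratics tend to converge faster when the condition number is smaller. The nearly collinear data and the value `lam = 1.0` are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Two nearly collinear columns make the least-squares Hessian ill-conditioned.
base = rng.normal(size=(50, 1))
X = np.hstack([
    base,
    base + 1e-3 * rng.normal(size=(50, 1)),
    rng.normal(size=(50, 1)),
])

lam = 1.0
H_plain = X.T @ X                   # Hessian of 0.5 * ||X w - y||^2
H_reg = H_plain + lam * np.eye(3)   # Hessian after adding 0.5 * lam * ||w||^2

cond_plain = np.linalg.cond(H_plain)
cond_reg = np.linalg.cond(H_reg)
print("condition number without penalty:", cond_plain)
print("condition number with penalty:   ", cond_reg)
```

This covers only the quadratic case; for neural networks the same intuition is commonly invoked, but the loss surface is not quadratic and the effect is less clear-cut.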

