Regularization Part 2: Lasso (L1) Regression

Regularization Part 2: Lasso (L1) Regression (YouTube)

A regression model that uses the L1 regularization technique is called lasso (Least Absolute Shrinkage and Selection Operator) regression. It adds the absolute values of the coefficients as a penalty term to the loss function (L). In this video, I start by talking about all of the similarities between lasso and ridge regression, and then show you the cool thing that lasso regression can do that ridge regression can't: shrink some coefficients all the way to zero.
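For a linear model fit by least squares, the penalized loss described above can be written as follows. The symbols (m observations, n features, intercept β₀, coefficients βⱼ, and penalty weight λ ≥ 0) are illustrative notation, not taken from the video:

$$
L_{\text{lasso}}(\beta) = \sum_{i=1}^{m} \Big( y_i - \beta_0 - \sum_{j=1}^{n} x_{ij}\,\beta_j \Big)^2 + \lambda \sum_{j=1}^{n} \lvert \beta_j \rvert
$$

Setting λ = 0 recovers ordinary least squares. Ridge regression uses the same form with λ Σⱼ βⱼ² in place of λ Σⱼ |βⱼ|. The intercept β₀ is conventionally left out of the penalty.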

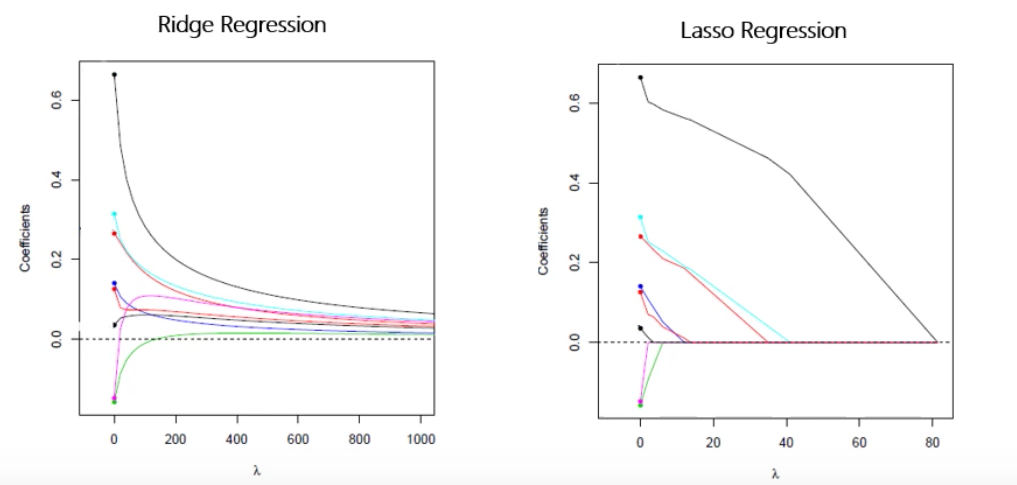

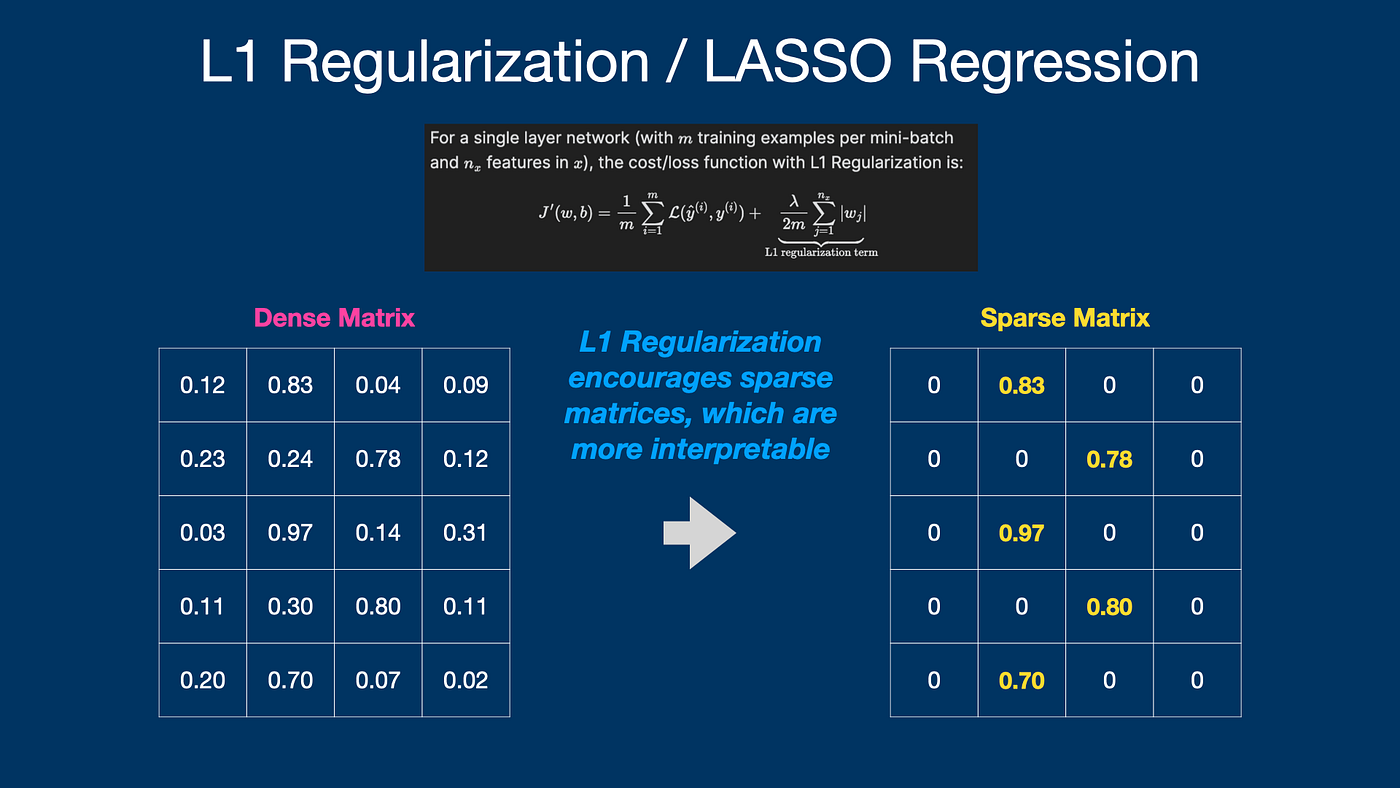

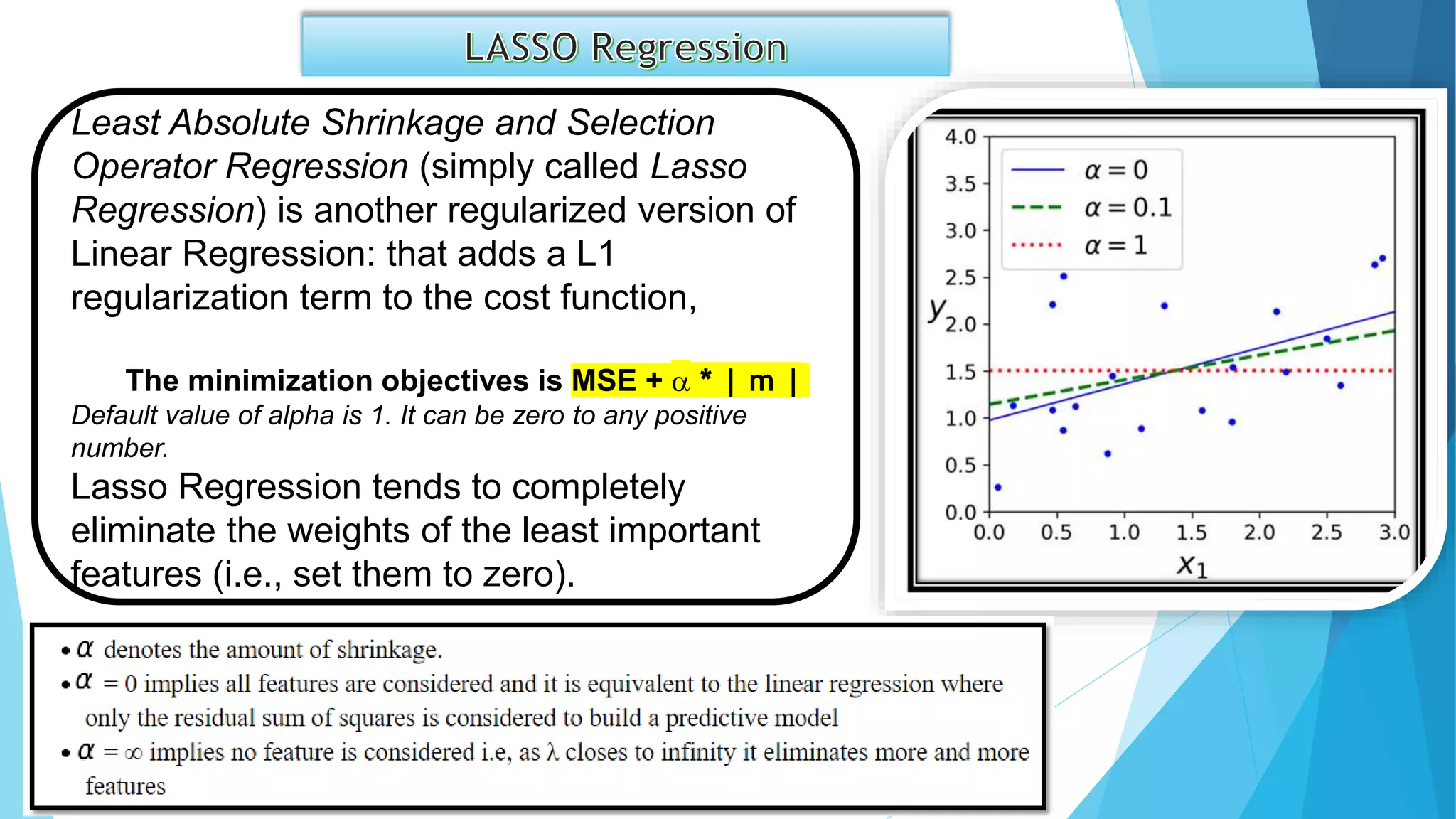

Neural Network L1 and L2 Regularization

These techniques are often applied when a model's data set has a large number of features and a less complex model is needed. A regression model that uses the L1 regularization technique is called lasso regression, and a model that uses L2 is called ridge regression. Lasso (L1) regularization builds simpler, more interpretable regression models by encouraging sparsity: it shrinks some coefficients exactly to zero and thus performs feature selection.

Technically, the scikit-learn `Lasso` model optimizes the same objective function as `ElasticNet` with `l1_ratio=1.0` (no L2 penalty). Its main parameter is `alpha` (float, default=1.0), the constant that multiplies the L1 term and controls regularization strength. `alpha` must be a non-negative float, i.e. in [0, inf). When `alpha = 0`, the objective is equivalent to ordinary least squares. Scikit-learn provides classes such as `Lasso` for L1 regularization and `Ridge` for L2 regularization, which integrate easily into a model training pipeline.
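A short scikit-learn sketch of the points above, assuming a synthetic data set from `make_regression` in which only 5 of 20 features are informative (the data and the `alpha` value are illustrative choices, not from the source). For reference, scikit-learn's `Lasso` minimizes `(1 / (2 * n_samples)) * ||y - Xw||^2_2 + alpha * ||w||_1`. The features are standardized inside a pipeline because the penalty treats all coefficients as if they were on the same scale.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Illustrative synthetic data: 20 features, only 5 of which influence y.
X, y = make_regression(n_samples=200, n_features=20, n_informative=5,
                       noise=5.0, random_state=0)

# alpha sets the regularization strength in both models; 1.0 is an arbitrary choice here.
lasso = make_pipeline(StandardScaler(), Lasso(alpha=1.0)).fit(X, y)
ridge = make_pipeline(StandardScaler(), Ridge(alpha=1.0)).fit(X, y)

# The last pipeline step is the fitted linear model.
lasso_coef = lasso[-1].coef_
ridge_coef = ridge[-1].coef_
print("lasso coefficients equal to zero:", np.sum(lasso_coef == 0))
print("ridge coefficients equal to zero:", np.sum(ridge_coef == 0))

# Lasso is ElasticNet with l1_ratio=1.0 (pure L1 penalty), so the two fits should agree.
enet = make_pipeline(StandardScaler(), ElasticNet(alpha=1.0, l1_ratio=1.0)).fit(X, y)
print("max |lasso - elastic net| coefficient difference:",
      np.max(np.abs(lasso_coef - enet[-1].coef_)))
```

With this setup the lasso typically sets most of the uninformative coefficients exactly to zero, while ridge shrinks them but leaves them nonzero; the exact counts depend on the data and on `alpha`.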

L1 Regularization: One-Minute Summary, by Jeffrey Boschman

```python
import numpy as np

def lasso_regression_sgd(x, y, verbose=True, theta_precision=0.001, batch_size=30,
                         alpha=0.01, iterations=10000, penalty=1.0):
    x = add_axis_for_bias(x)  # presumably prepends a bias (intercept) column
    m, n = x.shape
    theta_history = []
    cost_history = []
    theta = np.random.rand(1, n) * theta_precision  # small random initial weights
    for iteration in range(iterations):
        theta_history.append(theta[0])
        indices = np.random.
        # ... (the rest of the function is cut off in the source)
```

Learn how L1 (lasso) and L2 (ridge) regularization prevent overfitting, improve model generalization, and, in the case of L1, enable feature selection. A hands-on tutorial to understand L1 (lasso) and L2 (ridge) regularization using Python and scikit-learn, with visual and performance comparisons: this repository provides a detailed, practical demonstration of how L1 and L2 regularization work in various machine learning models.

L1 and L2 regularization are two powerful techniques for controlling model complexity and preventing overfitting, especially in linear models. Both add a penalty term to the loss function, but their different mathematical forms lead to distinct behaviors: the L1 penalty (the sum of absolute coefficient values) can drive some coefficients exactly to zero, while the L2 penalty (the sum of squared coefficients) shrinks coefficients toward zero but usually leaves all of them nonzero.
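To make the distinct behaviors concrete, consider the textbook special case of an orthonormal design (XᵀX = I), where both penalized problems have closed-form solutions in terms of the ordinary least-squares estimate. The sketch below assumes the objectives ½‖y − Xβ‖² + λ Σ|βⱼ| for lasso and ½‖y − Xβ‖² + (λ/2) Σβⱼ² for ridge; other scalings of λ change the constants but not the qualitative picture. The function names are hypothetical.

```python
import numpy as np

def lasso_shrink(beta_ols, lam):
    """Lasso solution under an orthonormal design: soft-thresholding.
    Coefficients with |beta| <= lam become exactly zero; the rest move toward zero by lam."""
    beta_ols = np.asarray(beta_ols, dtype=float)
    return np.sign(beta_ols) * np.maximum(np.abs(beta_ols) - lam, 0.0)

def ridge_shrink(beta_ols, lam):
    """Ridge solution under an orthonormal design: proportional shrinkage.
    Every coefficient is scaled by 1 / (1 + lam), so none becomes exactly zero."""
    beta_ols = np.asarray(beta_ols, dtype=float)
    return beta_ols / (1.0 + lam)

beta_ols = np.array([3.0, 0.5, -2.0])
print(lasso_shrink(beta_ols, 1.0))  # values: 2.0, 0.0, -1.0
print(ridge_shrink(beta_ols, 1.0))  # values: 1.5, 0.25, -1.0
```

The small coefficient (0.5) is eliminated by the L1 penalty but only scaled down by the L2 penalty, which is exactly the feature-selection difference between lasso and ridge.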

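The `lasso_regression_sgd` snippet in this section stops mid-loop at `indices = np.random.`. Below is one way the function could be completed as mini-batch subgradient descent; everything after that line is an assumption, not the original author's code. The sketch further assumes that `add_axis_for_bias` prepends a column of ones (its body is not shown), that `alpha` is the learning rate and `penalty` the L1 weight, and it stores `theta` as a 1-D array for simplicity.

```python
import numpy as np

def add_axis_for_bias(x):
    # Assumed behavior (not shown in the source): prepend a column of ones
    # so that theta[0] plays the role of the intercept.
    return np.hstack([np.ones((x.shape[0], 1)), x])

def lasso_regression_sgd(x, y, verbose=True, theta_precision=0.001, batch_size=30,
                         alpha=0.01, iterations=10000, penalty=1.0):
    # Assumed reading of the parameters: alpha = learning rate, penalty = L1 weight.
    x = add_axis_for_bias(x)
    m, n = x.shape
    theta_history = []
    cost_history = []
    theta = np.random.rand(n) * theta_precision  # small random start (1-D, not shape (1, n))
    for iteration in range(iterations):
        theta_history.append(theta.copy())
        # Assumed completion from here on: draw a random mini-batch of rows.
        indices = np.random.choice(m, size=min(batch_size, m), replace=False)
        xb, yb = x[indices], y[indices]
        residual = xb @ theta - yb
        # Batch cost: half mean squared error plus the L1 penalty (intercept excluded).
        cost = 0.5 * np.mean(residual ** 2) + penalty * np.sum(np.abs(theta[1:]))
        cost_history.append(cost)
        # Gradient of the squared-error term plus the L1 subgradient penalty * sign(theta).
        grad = xb.T @ residual / len(indices)
        l1_subgrad = penalty * np.sign(theta)
        l1_subgrad[0] = 0.0  # do not penalize the intercept
        theta = theta - alpha * (grad + l1_subgrad)
        if verbose and iteration % 1000 == 0:
            print(f"iteration {iteration}: cost {cost:.4f}")
    return theta, theta_history, cost_history

# Usage on illustrative synthetic data where the second feature is irrelevant.
np.random.seed(0)
X = np.random.randn(200, 2)
y = 2.0 + 3.0 * X[:, 0] + 0.1 * np.random.randn(200)
theta, _, costs = lasso_regression_sgd(X, y, verbose=False, iterations=3000, penalty=0.1)
print(theta)  # roughly: intercept near 2, slope shrunk to about 2.9, last weight near 0
```

Because the update uses the subgradient `sign(theta)`, a coefficient whose lasso solution is zero ends up hovering in a small band around zero (on the order of `alpha * penalty`) instead of landing on exactly 0. Coordinate descent, as used by scikit-learn's `Lasso`, or a proximal step that applies soft-thresholding after each gradient step, produces exact zeros.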
