Reduction Using Global and Shared Memory: Intro to Parallel Programming

Lecture #9 covers parallel reduction algorithms for GPUs. It focuses on optimizing their implementation in CUDA by addressing control divergence and memory divergence, minimizing global memory accesses, and applying thread coarsening, and it ultimately shows how these techniques are employed in machine learning frameworks like PyTorch and Triton. Reduction is a major primitive among parallel programming patterns. It is a good place to start, because working through it step by step shows how to arrive at a more optimal solution to the problem.
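As a baseline for the optimizations listed above, a tree reduction can be done entirely in global memory. The following is a minimal sketch, not the lecture's own code: the names `global_reduce_kernel` and `reduce_global` are hypothetical, the block size is assumed to be a power of two, the input length is assumed to be an exact multiple of the block size, and the number of blocks is assumed to be a power of two no larger than 1024 so that a second launch with a single block can finish the job. Error checking is omitted for brevity.

```cuda
#include <vector>
#include <cuda_runtime.h>

// Tree reduction carried out entirely in global memory (modifies d_in).
// Assumes blockDim.x is a power of two and the input length is an exact
// multiple of blockDim.x, so no bounds checks are needed.
__global__ void global_reduce_kernel(float *d_out, float *d_in)
{
    int tid  = threadIdx.x;
    int myId = blockIdx.x * blockDim.x + threadIdx.x;

    // Halve the number of active threads at every step.
    for (unsigned int s = blockDim.x / 2; s > 0; s >>= 1) {
        if (tid < s) {
            d_in[myId] += d_in[myId + s];  // global read and global write
        }
        __syncthreads();  // finish all adds at this stride before the next
    }

    // Thread 0 of each block holds that block's partial sum.
    if (tid == 0) {
        d_out[blockIdx.x] = d_in[myId];
    }
}

// Hypothetical host helper: two launches, the second reduces the partials.
// Assumes h_in.size() / threads is a power of two and at most 1024.
float reduce_global(const std::vector<float> &h_in, int threads = 256)
{
    int n = static_cast<int>(h_in.size());
    int blocks = n / threads;

    float *d_in = nullptr, *d_partial = nullptr, *d_out = nullptr;
    cudaMalloc(&d_in, n * sizeof(float));
    cudaMalloc(&d_partial, blocks * sizeof(float));
    cudaMalloc(&d_out, sizeof(float));
    cudaMemcpy(d_in, h_in.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    global_reduce_kernel<<<blocks, threads>>>(d_partial, d_in);
    global_reduce_kernel<<<1, blocks>>>(d_out, d_partial);

    float result = 0.0f;
    cudaMemcpy(&result, d_out, sizeof(float), cudaMemcpyDeviceToHost);

    cudaFree(d_in);
    cudaFree(d_partial);
    cudaFree(d_out);
    return result;
}
```

Because `tid < s` keeps the active threads contiguous, whole warps drop out together as the stride shrinks, which limits control divergence. Every partial sum still makes a round trip through global memory, though.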

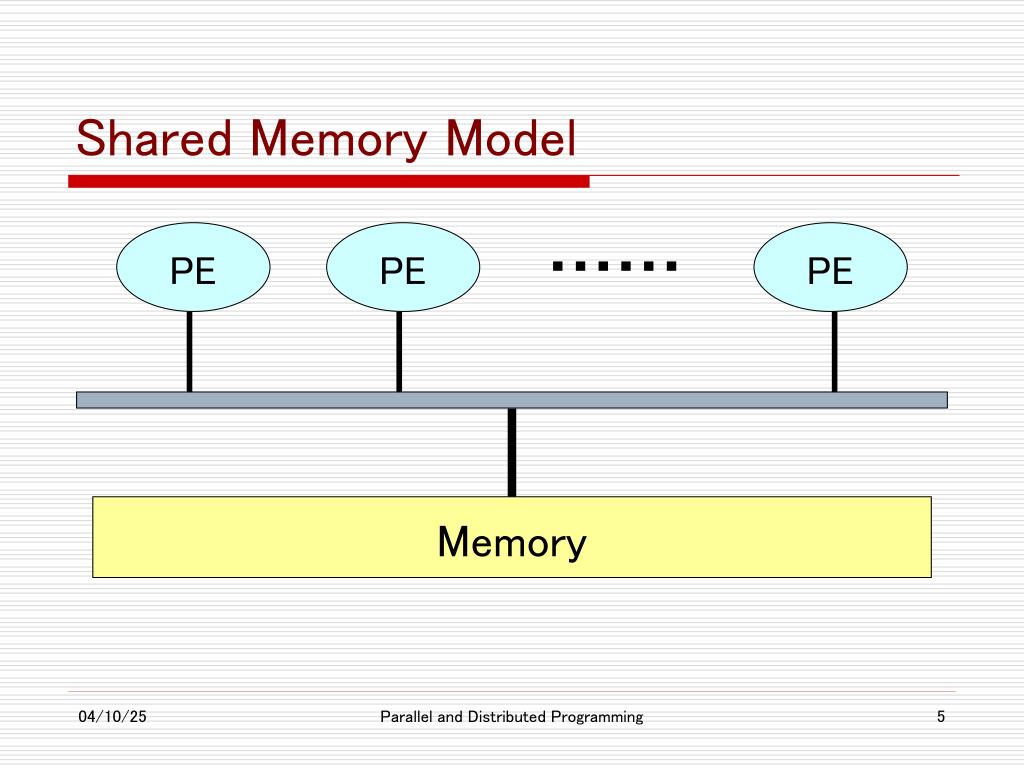

Shared memory is a fast, on-chip memory accessible by threads within the same block. It is used to reduce global memory accesses and improve performance in parallel algorithms. Shared memory can be allocated in two ways: statically (size fixed at compile time) and dynamically (size supplied at kernel launch). The implementation progressively applies advanced CUDA optimization techniques, including global memory reduction, shared memory optimization, multi-stage reduction, thread coarsening, warp-level operations, and bank conflict avoidance. In the global memory version, threads write their results to global memory, which is read again in the next iteration. By keeping the intermediate results in shared memory, we can reduce the number of global memory requests. This video is part of an online course, Intro to Parallel Programming. Check out the course here: Udacity course CS344.
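The shared memory idea can be sketched as follows. This is an illustrative version under stated assumptions rather than the course's exact code: the names `shmem_reduce_kernel` and `reduce_shared` are hypothetical, the block size is assumed to be a power of two, the input length an exact multiple of it, and the number of blocks a power of two no larger than 1024. The kernel uses dynamic shared memory (`extern __shared__`, sized by the third launch parameter); the static alternative is noted in a comment.

```cuda
#include <vector>
#include <cuda_runtime.h>

// Each block copies its slice into shared memory once, then reduces there.
// Assumes blockDim.x is a power of two and the input length is an exact
// multiple of blockDim.x.
__global__ void shmem_reduce_kernel(float *d_out, const float *d_in)
{
    // Dynamic shared memory: size comes from the kernel launch.
    // A static version would instead declare: __shared__ float sdata[256];
    extern __shared__ float sdata[];

    int tid  = threadIdx.x;
    int myId = blockIdx.x * blockDim.x + threadIdx.x;

    sdata[tid] = d_in[myId];  // the only global read per thread
    __syncthreads();          // make the whole slice visible to the block

    for (unsigned int s = blockDim.x / 2; s > 0; s >>= 1) {
        if (tid < s) {
            sdata[tid] += sdata[tid + s];  // shared memory traffic only
        }
        __syncthreads();
    }

    if (tid == 0) {
        d_out[blockIdx.x] = sdata[0];  // the only global write per block
    }
}

// Hypothetical host helper: two launches, the second reduces the partials.
// Assumes h_in.size() / threads is a power of two and at most 1024.
float reduce_shared(const std::vector<float> &h_in, int threads = 256)
{
    int n = static_cast<int>(h_in.size());
    int blocks = n / threads;

    float *d_in = nullptr, *d_partial = nullptr, *d_out = nullptr;
    cudaMalloc(&d_in, n * sizeof(float));
    cudaMalloc(&d_partial, blocks * sizeof(float));
    cudaMalloc(&d_out, sizeof(float));
    cudaMemcpy(d_in, h_in.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    // Third launch parameter: bytes of dynamic shared memory per block.
    shmem_reduce_kernel<<<blocks, threads, threads * sizeof(float)>>>(d_partial, d_in);
    shmem_reduce_kernel<<<1, blocks, blocks * sizeof(float)>>>(d_out, d_partial);

    float result = 0.0f;
    cudaMemcpy(&result, d_out, sizeof(float), cudaMemcpyDeviceToHost);

    cudaFree(d_in);
    cudaFree(d_partial);
    cudaFree(d_out);
    return result;
}
```

Each thread now touches global memory once on the way in, and each block once on the way out. All intermediate sums stay on chip.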

This article will walk you through a C and CUDA program that demonstrates a powerful technique known as parallel reduction. We will focus on how to use a fast, on-chip memory space called “shared memory” to have threads cooperate efficiently.

Another post takes a simple parallel reduction and optimizes it in 7 steps: "In this post, I aim to take a simple yet popular algorithm, parallel reduction, and optimize its performance as much as possible."

Combining partial sums across thread blocks would call for a global synchronization, but CUDA has no global synchronization inside a kernel. The usual workaround is to split the reduction into multiple kernel launches, with each launch boundary acting as a synchronization point. Because a reduction does very little arithmetic per element loaded, the optimization goal is typically to approach peak memory bandwidth. In the basic shared memory version, half of the threads are idle on the first loop iteration, which is wasteful; a common fix is to have each thread add two elements while loading. When the last warp is unrolled without `__syncthreads()`, the shared memory pointer must be declared `volatile` for the result to be correct. Note that this unrolling saves useless work in all warps, not just the last one.

Parallel reduction is foundational in many data processing applications, enabling high-performance computation for large inputs through techniques such as thread index assignment, use of shared memory, thread coarsening, and segmented reduction.
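The idle-thread remark above can be addressed by having each thread add two elements while loading, which can be seen as a mild form of thread coarsening. The sketch below combines that with a warp-level finish. It deliberately swaps in `__shfl_down_sync` for the `volatile` shared memory idiom, since that idiom relies on implicit warp-synchronous execution, which is not safe to assume on GPUs with independent thread scheduling. The names `reduce_add_on_load_kernel` and `reduce_add_on_load` are hypothetical. The block size is assumed to be a power of two of at least 64, the input length an exact multiple of twice the block size, and the number of first-stage blocks a power of two between 128 and 2048. Error checking is omitted.

```cuda
#include <vector>
#include <cuda_runtime.h>

// Each block reduces 2 * blockDim.x input elements.
// Assumes blockDim.x is a power of two and at least 64.
__global__ void reduce_add_on_load_kernel(float *d_out, const float *d_in)
{
    extern __shared__ float sdata[];

    unsigned int tid = threadIdx.x;
    unsigned int i   = blockIdx.x * (blockDim.x * 2) + threadIdx.x;

    // First add during load: no thread is idle in the first step.
    sdata[tid] = d_in[i] + d_in[i + blockDim.x];
    __syncthreads();

    // Shared memory tree reduction down to 32 partial sums.
    for (unsigned int s = blockDim.x / 2; s >= 32; s >>= 1) {
        if (tid < s) {
            sdata[tid] += sdata[tid + s];
        }
        __syncthreads();
    }

    // The first warp finishes in registers; no __syncthreads() is needed.
    if (tid < 32) {
        float v = sdata[tid];
        for (int offset = 16; offset > 0; offset >>= 1) {
            v += __shfl_down_sync(0xffffffffu, v, offset);
        }
        if (tid == 0) {
            d_out[blockIdx.x] = v;
        }
    }
}

// Hypothetical host helper. Assumes h_in.size() / (2 * threads) is a power
// of two between 128 and 2048, so one block can reduce the partial sums.
float reduce_add_on_load(const std::vector<float> &h_in, int threads = 256)
{
    int n = static_cast<int>(h_in.size());
    int blocks = n / (2 * threads);

    float *d_in = nullptr, *d_partial = nullptr, *d_out = nullptr;
    cudaMalloc(&d_in, n * sizeof(float));
    cudaMalloc(&d_partial, blocks * sizeof(float));
    cudaMalloc(&d_out, sizeof(float));
    cudaMemcpy(d_in, h_in.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    reduce_add_on_load_kernel<<<blocks, threads, threads * sizeof(float)>>>(d_partial, d_in);
    // Second stage: blocks / 2 threads, each adding two partial sums on load.
    int t2 = blocks / 2;
    reduce_add_on_load_kernel<<<1, t2, t2 * sizeof(float)>>>(d_out, d_partial);

    float result = 0.0f;
    cudaMemcpy(&result, d_out, sizeof(float), cudaMemcpyDeviceToHost);

    cudaFree(d_in);
    cudaFree(d_partial);
    cudaFree(d_out);
    return result;
}
```

The second launch is the multi-kernel workaround for the missing global synchronization: the partial sums written by the first launch are guaranteed to be complete before the second one reads them.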

