Reducing Hardware Experiments For Model Learning And Policy Optimization

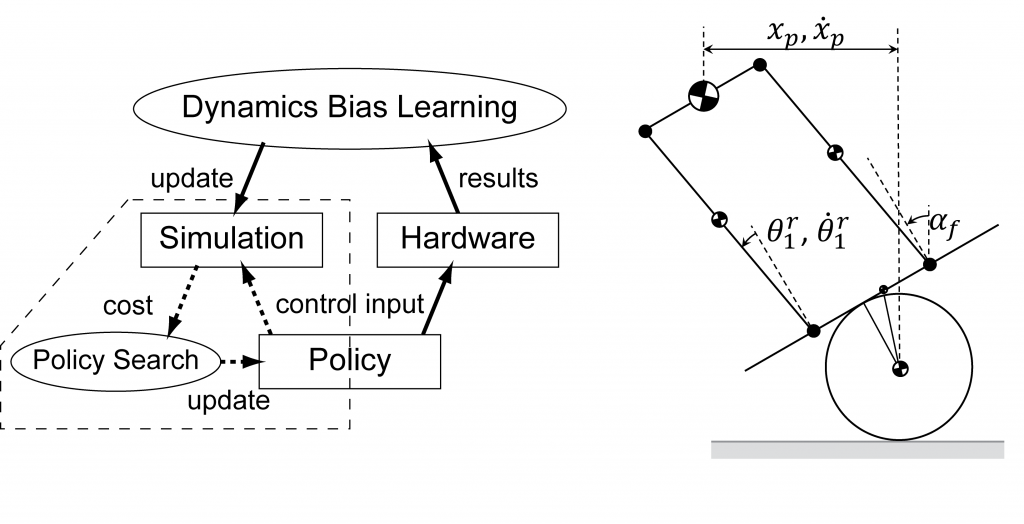

Conducting hardware experiments is often expensive in various aspects, such as potential damage to the robot and the number of people required to operate it. This paper presents an iterative approach for model learning and policy optimization using as few experiments as possible. Instead of learning the hardware model from scratch, our method reduces the number of experiments by learning only the difference from a simulation model.
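The idea of learning only the difference from a simulation model, then re-optimizing the policy after each short experiment, can be illustrated with a toy sketch. Everything here is an assumption made for illustration and is not the authors' method: the 1-D linear dynamics, the linear least-squares residual, the grid search over a feedback gain, and all function names (`sim_model`, `hardware`, `fit_residual`, `optimize_gain`, `run`) are hypothetical.

```python
import numpy as np

def sim_model(x, u):
    """Hypothetical simulation model (assumed, slightly wrong dynamics)."""
    return 0.9 * x + 0.5 * u

def hardware(x, u):
    """Stand-in for the real robot; the learner never inspects this directly."""
    return 0.85 * x + 0.4 * u

def fit_residual(X, U, X_next):
    """Least-squares fit of the difference (hardware - simulation)."""
    features = np.column_stack([X, U])
    targets = X_next - sim_model(X, U)
    coeffs, *_ = np.linalg.lstsq(features, targets, rcond=None)
    return coeffs

def corrected_model(x, u, coeffs):
    """Simulation prediction plus the learned linear correction."""
    return sim_model(x, u) + coeffs[0] * x + coeffs[1] * u

def optimize_gain(coeffs, horizon=20):
    """Grid-search a gain k for u = -k * x on the corrected model."""
    best_k, best_cost = 0.0, np.inf
    for k in np.linspace(0.0, 2.0, 201):
        x, cost = 1.0, 0.0
        for _ in range(horizon):
            u = -k * x
            cost += x**2 + 0.1 * u**2
            x = corrected_model(x, u, coeffs)
        if cost < best_cost:
            best_k, best_cost = k, cost
    return best_k

def run(iterations=3, steps=5, seed=0):
    """Alternate short hardware experiments, residual fitting, and policy updates."""
    rng = np.random.default_rng(seed)
    coeffs = np.zeros(2)            # start from the pure simulation model
    k = optimize_gain(coeffs)
    X, U, X_next = [], [], []
    for _ in range(iterations):
        x = 1.0
        for _ in range(steps):      # one short hardware experiment
            u = -k * x + 0.1 * rng.standard_normal()  # small exploration noise
            x_new = hardware(x, u)
            X.append(x); U.append(u); X_next.append(x_new)
            x = x_new
        coeffs = fit_residual(np.array(X), np.array(U), np.array(X_next))
        k = optimize_gain(coeffs)   # re-optimize on the corrected model
    return coeffs, k
```

Calling `run()` returns the fitted residual coefficients and the final gain. Because the simulation already captures most of the dynamics in this toy setup, the residual has only two parameters to identify, which is why a handful of hardware samples suffices here.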

This paper presents an iterative approach for learning hardware models and optimizing policies with as few hardware experiments as possible. Instead of learning the model from scratch, our method learns the difference between a simulation model and hardware.

Bibliographic details: S. Ha and K. Yamane, "Reducing Hardware Experiments for Model Learning and Policy Optimization," in IEEE International Conference on Robotics and Automation (ICRA), 2015.

Related work: one paper proposes GoSafeOpt, a model-free learning algorithm that can search for globally optimal policies while guaranteeing safe exploration with high probability. Another provides a comprehensive assessment of state-of-the-art work and selected results on hardware-aware modeling and optimization for ML applications.


