Reduce Overfitting By Calibrating Machine Learning Models

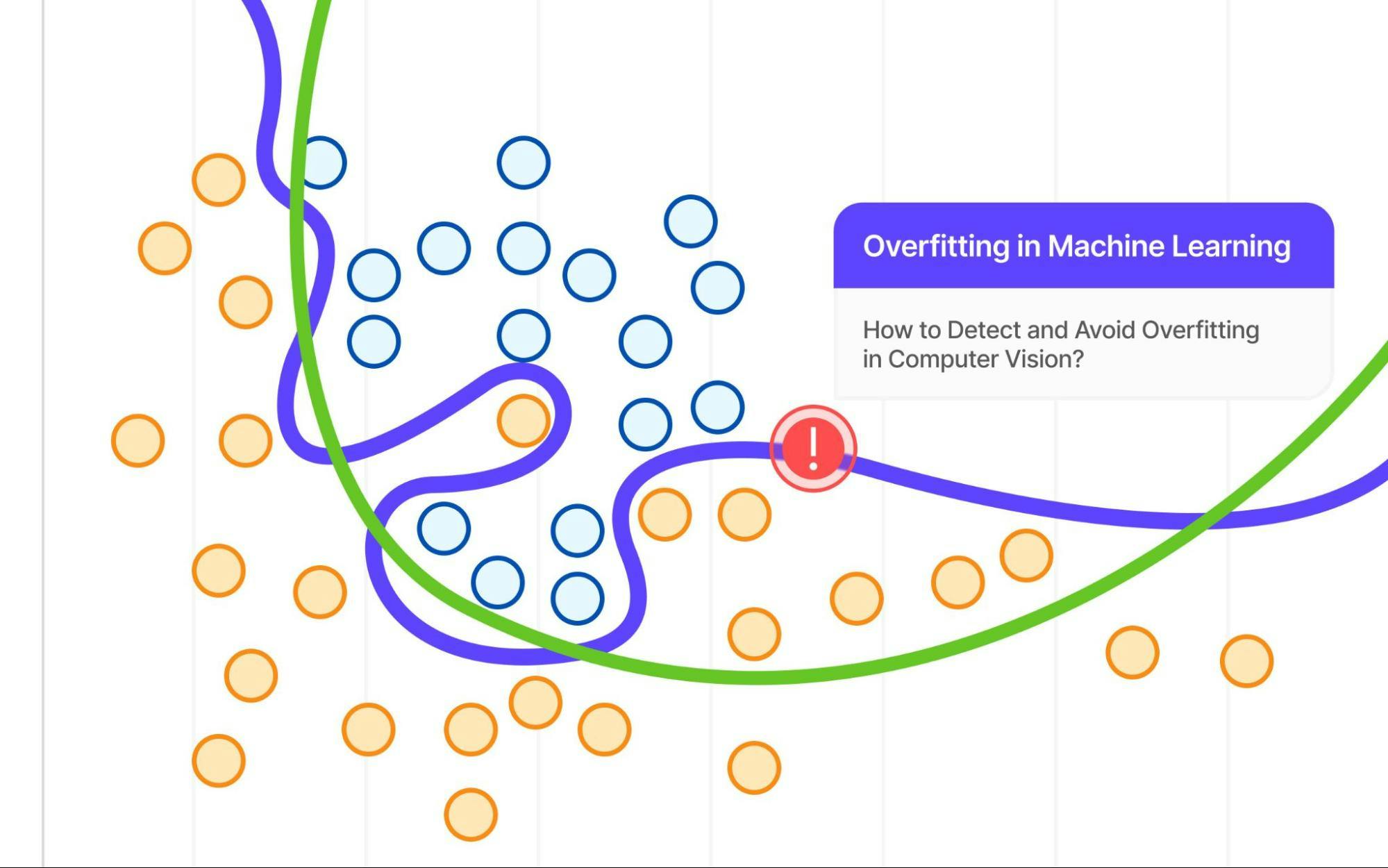

Overfitting In Machine Learning Explained (Encord)

Two types of data sets can be used to measure overfitting: the validation set and the test set. The validation set is a subset of the data held out from fitting and used to choose hyperparameters and compare candidate models. The test set is held out until the very end and used once to estimate performance on unseen data. Bagging (bootstrap aggregating) is a technique that trains multiple models on random subsamples of the training data and then combines their predictions, by averaging for regression or majority vote for classification, to reduce variance.
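The split-and-compare workflow described above can be sketched with scikit-learn. This is a minimal illustration under stated assumptions, not the Encord article's code: the synthetic data from `make_classification`, the 60/20/20 train/validation/test split, and the 50-tree ensemble are all choices made for the example. A fully grown decision tree typically scores perfectly on its training data but noticeably worse on the validation set; `BaggingClassifier` fits each tree on a bootstrap sample and averages their predictions, which usually narrows that gap.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (assumption): flip_y=0.1 randomly reassigns the class of ~10% of samples
X, y = make_classification(n_samples=1000, n_features=20, n_informative=5,
                           flip_y=0.1, random_state=0)

# 60% train / 20% validation / 20% test (illustrative proportions)
X_train, X_rest, y_train, y_rest = train_test_split(
    X, y, test_size=0.4, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(
    X_rest, y_rest, test_size=0.5, random_state=0)

models = {
    # A fully grown tree can memorise the training set
    "single tree": DecisionTreeClassifier(random_state=0),
    # Bagging: 50 trees, each fit on a bootstrap sample of the training data;
    # their predicted class probabilities are averaged at prediction time
    "bagged trees": BaggingClassifier(DecisionTreeClassifier(random_state=0),
                                      n_estimators=50, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    train_acc = model.score(X_train, y_train)
    val_acc = model.score(X_val, y_val)
    scores[name] = (train_acc, val_acc)
    # A large train/validation gap is the usual symptom of overfitting
    print(f"{name}: train={train_acc:.3f} val={val_acc:.3f} "
          f"gap={train_acc - val_acc:.3f}")

# Select by validation accuracy; the test set is used only once, at the end
best = max(scores, key=lambda name: scores[name][1])
print(f"selected {best}: test={models[best].score(X_test, y_test):.3f}")
```

Keeping the test set out of model selection is what makes its score an honest estimate; if it were used to choose between the tree and the ensemble, it would effectively become a second validation set.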

To prevent overfitting, the main objective is to make the model generalize well to new data instead of memorizing the training set. This can be achieved by controlling model complexity and making better use of the available data. This article, presented in a tutorial style, illustrates how to diagnose and fix overfitting in Python. Fortunately, there are several effective strategies to combat overfitting and ensure your model performs consistently. From simplifying the model to using techniques like cross-validation and regularization, these methods can help strike the right balance between bias and variance. Learn how to diagnose overfitting with nine practical tools, including learning curves, bias-variance decomposition, regularization, and data leakage detection, in Python.
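Controlling complexity through regularization, checked with cross-validation, can be sketched with scikit-learn's `validation_curve`. This assumes a ridge regression (an L2-regularized linear model) on synthetic data with nearly as many features as training samples; the dataset sizes and the grid of `alpha` values are illustrative choices, not taken from the article. A small `alpha` lets the model nearly memorize each training fold, so training R² is high while cross-validated R² is poor; increasing `alpha` trades some training fit for better generalization, up to a point.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import validation_curve

# Small, high-dimensional data (assumption): 80 samples, 60 features, so an
# almost unregularized linear model can nearly interpolate each training fold
X, y = make_regression(n_samples=80, n_features=60, n_informative=10,
                       noise=20.0, random_state=0)

alphas = np.logspace(-3, 3, 7)  # regularization strengths to try
train_scores, val_scores = validation_curve(
    Ridge(), X, y, param_name="alpha", param_range=alphas,
    cv=5)  # 5-fold cross-validation; scoring defaults to R^2 for regressors

train_mean = train_scores.mean(axis=1)  # average over folds, one value per alpha
val_mean = val_scores.mean(axis=1)
for a, tr, va in zip(alphas, train_mean, val_mean):
    print(f"alpha={a:g}: train R2={tr:.3f}  cv R2={va:.3f}")

best_alpha = alphas[np.argmax(val_mean)]
print("alpha with best cross-validated R2:", best_alpha)
```

Training R² can only stay the same or fall as `alpha` grows, because a stronger penalty never lets the fitted weights reduce the training error further; the cross-validated score is the one to maximize.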

3 Methods To Reduce Overfitting Of Machine Learning Models By Soner

Overfitting is one of the most common pitfalls in machine learning, and it can significantly impair the performance of models. In this article, we take a deep dive into seven effective remedies designed to combat overfitting. In this comprehensive guide, we’ll explore proven techniques to reduce overfitting in scikit-learn, complete with practical examples and actionable strategies you can implement immediately.

We need to reduce or eliminate overfitting before deploying a machine learning model. There are several techniques to reduce overfitting; in this article, we will go over 3 commonly used methods. The first is cross-validation, which evaluates the model on several held-out folds to get a more reliable estimate of how well it generalizes. The most robust method to reduce overfitting is to collect more data.

Trying to reduce overfitting (we informally call this "solving" it) is a large part of many machine learning courses, which show students how to use regularization techniques, reduce model complexity by removing selected layers or decreasing width (number of neurons), or build on one of a diverse set of standard model architectures.
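Whether collecting more data or reducing model complexity is the better fix can be checked with a learning curve, which uses cross-validation to score the model on held-out folds at increasing training-set sizes. The sketch below assumes scikit-learn, synthetic data, and a decision tree whose `max_depth` stands in for model complexity; the article's neural-network example of removing layers or neurons is not reproduced here.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (assumption): flip_y=0.1 randomly reassigns the class of ~10% of samples
X, y = make_classification(n_samples=1000, n_features=20, n_informative=5,
                           flip_y=0.1, random_state=0)

curves = {}
for depth in (None, 4):  # unrestricted tree vs. a simpler, depth-limited tree
    sizes, train_scores, val_scores = learning_curve(
        DecisionTreeClassifier(max_depth=depth, random_state=0), X, y,
        train_sizes=np.linspace(0.1, 1.0, 5),  # 10%..100% of each training fold
        cv=5)  # 5-fold (stratified, since this is a classifier) cross-validation
    curves[depth] = (sizes, train_scores, val_scores)
    print(f"max_depth={depth}")
    for n, tr, va in zip(sizes, train_scores.mean(axis=1), val_scores.mean(axis=1)):
        # A gap that narrows as n grows suggests more data would help;
        # a gap that stays wide suggests the model is too complex
        print(f"  n={int(n):4d}  train acc={tr:.3f}  cv acc={va:.3f}")
```

The unrestricted tree scores perfectly on every training subset, so its gap reflects generalization alone; comparing how its cross-validated accuracy changes with `n` against the depth-limited tree indicates whether more data or a simpler model helps more on a given dataset.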
