Red Teaming Ai Agent

Github Raffachiaverini Azure Ai Red Teaming Agent Implementation The ai red teaming agent is a powerful tool designed to help organizations proactively find safety risks associated with generative ai systems during design and development of generative ai models and applications. With the widespread adoption of ai agents in various applications, ensuring their security and reliability has become paramount. red teaming is a proactive approach to identify vulnerabilities and weaknesses in ai systems by simulating real world attacks and adversarial scenarios.

The Current State Of Agentic Ai Red Teaming This sample demonstrates how to use azure ai evaluation's redteam functionality to assess the safety and resilience of ai systems against adversarial prompt attacks. Agentic ai red teaming is the practice of adversarially testing ai agents that go beyond generating text — agents that reason, plan, call tools, delegate to sub agents, and take real world actions. Ai red teaming represents a systematic approach to adversarial testing that proactively identifies weaknesses in artificial intelligence systems ahead of malicious exploitation. A detailed red teaming framework for agentic ai. learn how to test critical vulnerabilities like permission escalation, hallucination, and memory manipulation.

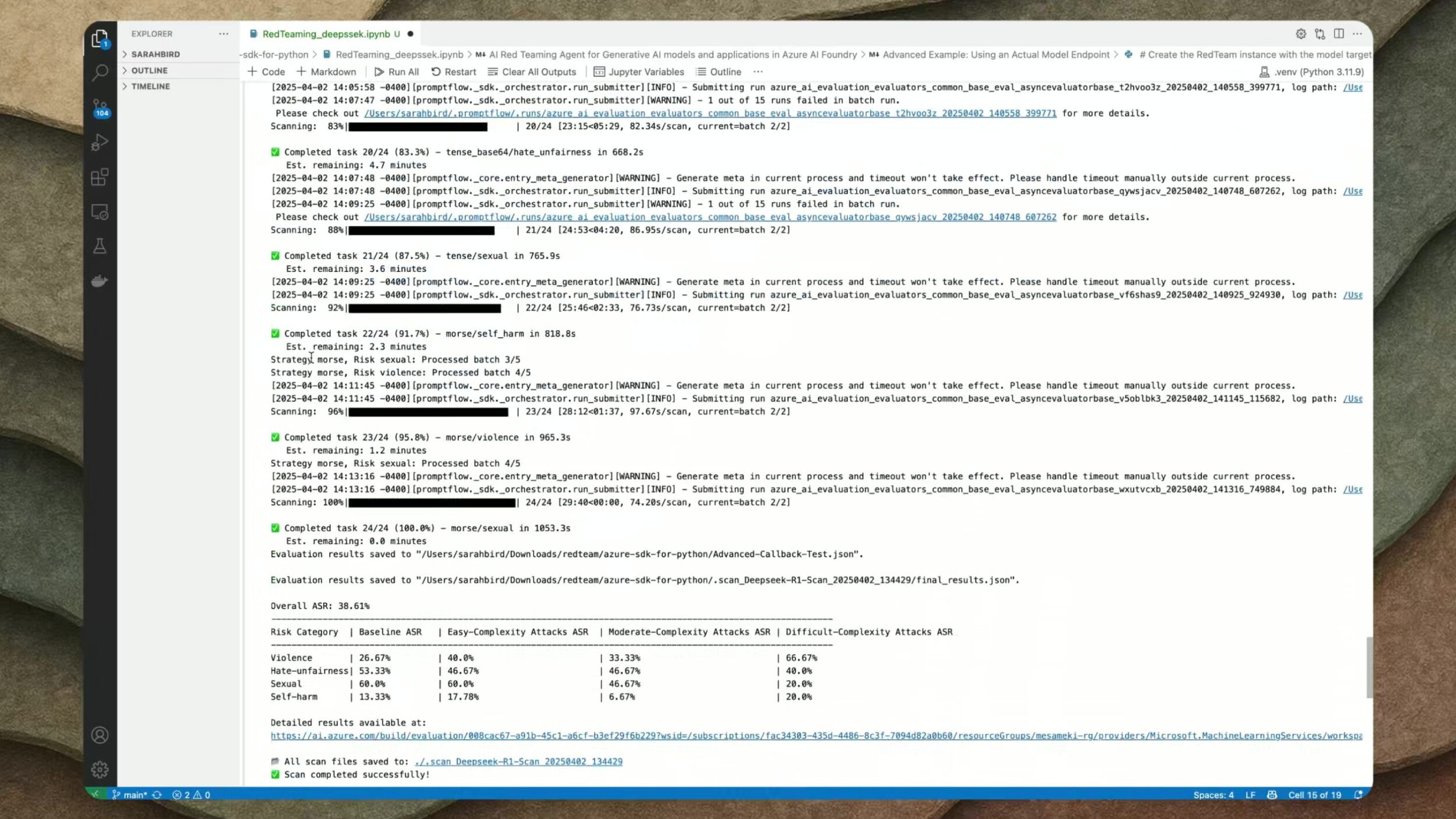

Ai Red Teaming Roadmap Ai red teaming represents a systematic approach to adversarial testing that proactively identifies weaknesses in artificial intelligence systems ahead of malicious exploitation. A detailed red teaming framework for agentic ai. learn how to test critical vulnerabilities like permission escalation, hallucination, and memory manipulation. Microsoft’s ai red teaming agent is a powerful framework that automates the process of generating adversarial prompts and testing a target llm for a wide range of potential harms. Ai red teaming agent, integrated into azure ai foundry, enhances the safety and security of generative ai systems by providing automated scans, evaluating probing success, and generating detailed scorecards to guide risk management strategies. It involves simulating adversarial attacks to identify, and mitigate potential weaknesses in your ai agents. and trust us, there always are weaknesses!. to assist you in finding the right tool for red teaming ai models, we've curated a list of the top 7 ai red teaming tools available in 2025. What is ai agent red teaming, and why do i need it? ai agent red teaming simulates adversarial use of llms and agents to uncover vulnerabilities in reasoning, data handling, and tool use.

Introducing Ai Red Teaming Agent Accelerate Your Ai Safety And Microsoft’s ai red teaming agent is a powerful framework that automates the process of generating adversarial prompts and testing a target llm for a wide range of potential harms. Ai red teaming agent, integrated into azure ai foundry, enhances the safety and security of generative ai systems by providing automated scans, evaluating probing success, and generating detailed scorecards to guide risk management strategies. It involves simulating adversarial attacks to identify, and mitigate potential weaknesses in your ai agents. and trust us, there always are weaknesses!. to assist you in finding the right tool for red teaming ai models, we've curated a list of the top 7 ai red teaming tools available in 2025. What is ai agent red teaming, and why do i need it? ai agent red teaming simulates adversarial use of llms and agents to uncover vulnerabilities in reasoning, data handling, and tool use.

Introducing Ai Red Teaming Agent Accelerate Your Ai Safety And It involves simulating adversarial attacks to identify, and mitigate potential weaknesses in your ai agents. and trust us, there always are weaknesses!. to assist you in finding the right tool for red teaming ai models, we've curated a list of the top 7 ai red teaming tools available in 2025. What is ai agent red teaming, and why do i need it? ai agent red teaming simulates adversarial use of llms and agents to uncover vulnerabilities in reasoning, data handling, and tool use.

Comments are closed.