Real Time Streaming With Kafka And Flink Lab 2 Write Data To Kafka

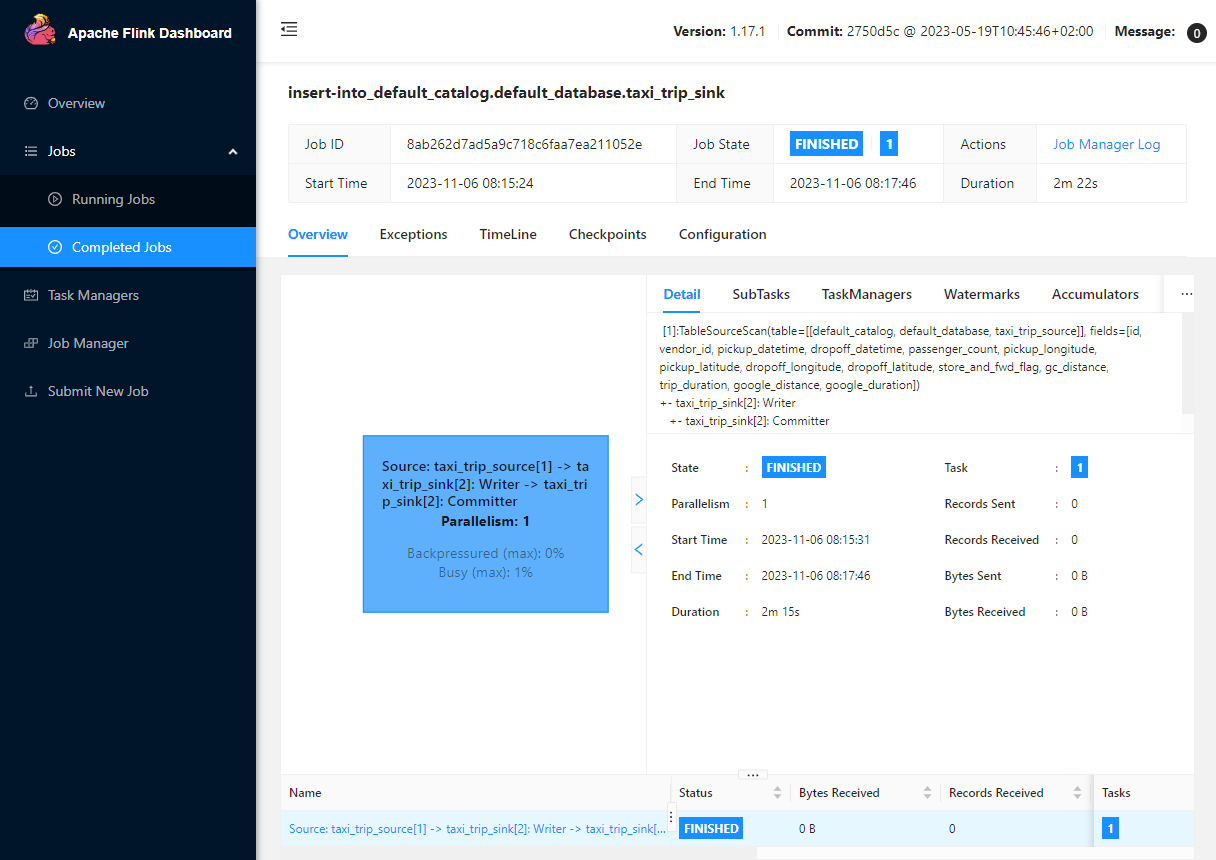

Real Time Streaming With Kafka and Flink: Introduction (Cevo)

Because the Kafka cluster is authenticated by IAM, a custom pipeline JAR file is created. The lab demonstrates how to run the app both in a Flink cluster deployed on Docker and locally as a typical Python app. Once the consumer and producer are fully working, we can process data from Kafka and save the results back to Kafka. The full list of functions that can be used for stream processing can be found here.
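The read-process-write loop described above can be sketched without a broker. This is a minimal illustration, not the lab's actual code: the `process_stream` and `enrich` helpers, the `amount` field, and the in-memory lists standing in for Kafka topics are all assumptions made for the example.

```python
import json
from typing import Callable, Iterable


def enrich(record: dict) -> dict:
    """Hypothetical processing step: flag records with a large amount."""
    return {**record, "is_large": record.get("amount", 0) >= 100}


def process_stream(
    messages: Iterable[bytes],
    send: Callable[[bytes], None],
) -> int:
    """Decode each JSON message, process it, and hand the result to `send`.

    `messages` stands in for a consumer iterating over an input topic and
    `send` stands in for a producer writing to an output topic.
    """
    count = 0
    for raw in messages:
        record = json.loads(raw.decode("utf-8"))
        send(json.dumps(enrich(record)).encode("utf-8"))
        count += 1
    return count


# In-memory stand-ins for the input and output topics (assumption).
input_topic = [b'{"id": 1, "amount": 250}', b'{"id": 2, "amount": 40}']
output_topic: list = []
processed = process_stream(input_topic, output_topic.append)
```

Keeping the processing step a pure function of one record makes it easy to test before it is wired to a real consumer and producer.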

Real Time Streaming With Kafka and Flink Lab 1: Produce Data to Kafka

Learn how to build scalable real-time data pipelines using Apache Kafka 4.0 and Apache Flink 2.0, with practical examples and performance optimization tips. This post walks through building a complete real-time analytics system from scratch, including the mistakes, debugging sessions, and "aha moments" that make up real-world development.

Flink provides an Apache Kafka connector for reading data from and writing data to Kafka topics with exactly-once guarantees. Apache Flink ships with a universal Kafka connector that attempts to track the latest version of the Kafka client; the client version it uses may change between Flink releases.

This project sets up a real-time streaming data pipeline using Apache Flink (PyFlink), Apache Kafka, ZooKeeper, and a custom JSON data producer. It ingests data from a mocked external API, sends it to Kafka, and processes it using PyFlink.
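As a rough sketch of how the connector's exactly-once write path might be configured, the snippet below renders a Flink SQL `CREATE TABLE` statement for a Kafka sink. The `kafka_table_ddl` helper, the table name, the topic, the columns, and the broker address are assumptions for illustration; the option keys follow the Flink SQL Kafka connector.

```python
def kafka_table_ddl(table: str, columns: dict, options: dict) -> str:
    """Render a Flink SQL CREATE TABLE statement (hypothetical helper)."""
    cols = ",\n  ".join(f"`{name}` {sql_type}" for name, sql_type in columns.items())
    opts = ",\n  ".join(f"'{key}' = '{value}'" for key, value in options.items())
    return f"CREATE TABLE {table} (\n  {cols}\n) WITH (\n  {opts}\n)"


# Topic, broker address, and prefix are placeholders, not values from the lab.
sink_options = {
    "connector": "kafka",
    "topic": "results",
    "properties.bootstrap.servers": "localhost:9092",
    "format": "json",
    # Transactional writes; a transactional id prefix is needed in this mode.
    "sink.delivery-guarantee": "exactly-once",
    "sink.transactional-id-prefix": "lab-sink",
}

sink_ddl = kafka_table_ddl(
    "results_sink",
    {"id": "BIGINT", "amount": "DOUBLE"},
    sink_options,
)
```

In a PyFlink job, a string like `sink_ddl` could be passed to `TableEnvironment.execute_sql`. Exactly-once delivery also depends on checkpointing being enabled, since the sink commits its Kafka transactions when checkpoints complete.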

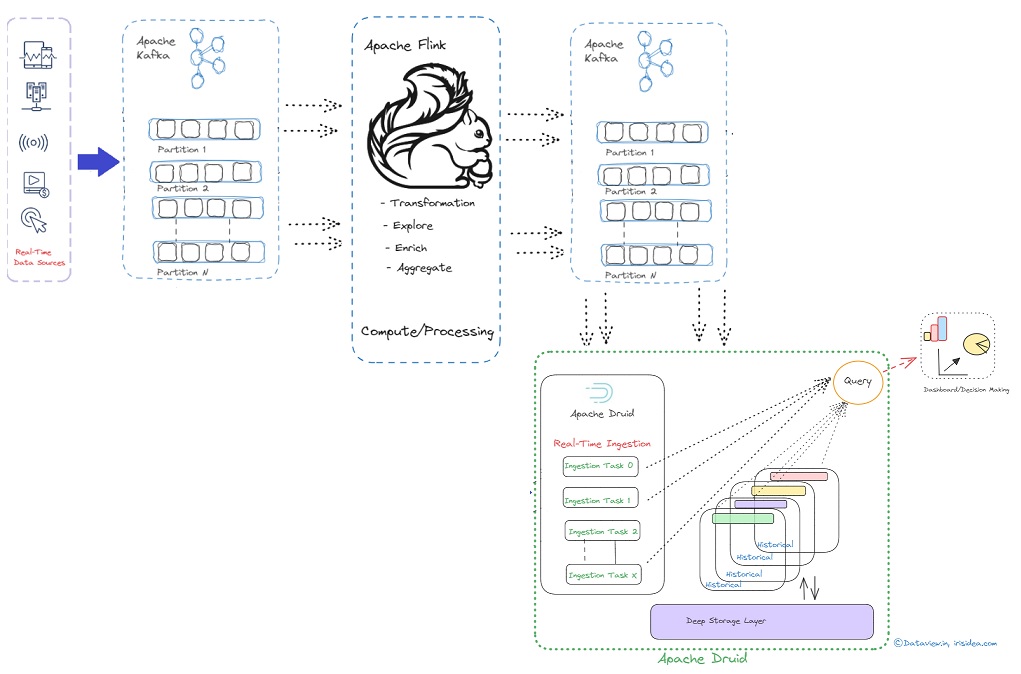

Real Time Data Transfer Analysis Decision Making

This blog post explores how to build a streaming data pipeline using Apache Kafka and Flink, covering core concepts, typical usage examples, and common and best practices. It also serves as a tutorial on building a real-time data processing system using Apache Flink, Kafka, and Python.

Imagine autonomous vehicles relying on instant sensor data to avoid collisions, or financial systems detecting fraud in milliseconds. Apache Kafka and Flink are a widely used pairing for event-driven architectures of this kind.

A Python-based producer script sends test data into Kafka (with Redpanda, a Kafka API-compatible broker, standing in for Kafka). Data is serialized into JSON for interoperability among different languages and systems.
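The producer side described above might look roughly like the following. This is a sketch under stated assumptions: the `sensor_id` and `temperature` fields, the `mock_api_reading` function, and the `send` callable are hypothetical stand-ins for the mocked API and the Kafka client, so the example runs without a broker.

```python
import json
import random
from typing import Callable, Tuple


def mock_api_reading(rng: random.Random, sensor_id: int) -> dict:
    """Stand-in for a call to the mocked external API (assumption)."""
    return {
        "sensor_id": sensor_id,
        "temperature": round(rng.uniform(15.0, 30.0), 2),
    }


def to_kafka_message(record: dict) -> Tuple[bytes, bytes]:
    """Serialize a record into a (key, value) pair of UTF-8 encoded bytes."""
    key = str(record["sensor_id"]).encode("utf-8")
    value = json.dumps(record, separators=(",", ":")).encode("utf-8")
    return key, value


def produce(n: int, send: Callable[[bytes, bytes], None], seed: int = 0) -> None:
    """Generate `n` test records and pass each serialized pair to `send`."""
    rng = random.Random(seed)
    for i in range(n):
        key, value = to_kafka_message(mock_api_reading(rng, sensor_id=i % 3))
        send(key, value)


# Collect messages in a list instead of sending them to a broker.
sent = []
produce(5, lambda key, value: sent.append((key, value)))
```

With a real client, `send` could wrap something like kafka-python's `KafkaProducer.send(topic, key=key, value=value)`. Using the sensor id as the message key keeps readings from one sensor in the same partition, which preserves their order.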

