Random Forests Data Science Concepts

Random forest is a machine learning algorithm that uses many decision trees to make better predictions. Each tree looks at a different random part of the data, and the trees' results are combined by voting for classification or averaging for regression, which makes it an ensemble learning technique. In this article, we will walk through the concepts, working principles, pseudocode, Python usage, and pros and cons of random forests.
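The combining step can be sketched in a few lines of plain Python. This is only an illustration of voting and averaging: the function names and the example predictions are made up for this sketch and do not come from any library.

```python
from collections import Counter
from statistics import mean


def majority_vote(tree_predictions):
    """Classification: return the label predicted by the most trees.

    Ties go to the label that appears first in the list, because
    Counter.most_common keeps insertion order among equal counts.
    """
    return Counter(tree_predictions).most_common(1)[0][0]


def average_prediction(tree_predictions):
    """Regression: return the mean of the trees' numeric predictions."""
    return mean(tree_predictions)


# Hypothetical outputs of five classification trees for one sample.
votes = ["play", "no play", "play", "play", "no play"]
print(majority_vote(votes))

# Hypothetical outputs of three regression trees for one sample.
estimates = [2.0, 3.0, 4.0]
print(average_prediction(estimates))
```

Library implementations may combine trees differently, for example by averaging predicted class probabilities instead of counting hard votes.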

Random forest builds on bagging (bootstrap aggregating): it trains each tree on a different random sample of the data and combines the trees' answers. Throughout this article, we'll focus on the classic golf dataset as an example for classification. The name "random forest" comes from the combination of two key concepts: randomness and forests. The "random" part refers to the random selection of data samples and features during the construction of each tree, while the "forest" part refers to the ensemble of decision trees. Random forests, or random decision forests, are an ensemble learning method for classification, regression and other tasks that works by creating a multitude of decision trees during training. For classification tasks, the output of the random forest is the class selected by most trees; for regression tasks, it is the average of the trees' predictions. Random forest is a supervised learning algorithm for classification and regression. As the name suggests, it builds a forest of several randomized trees. Generally, the more trees in the forest, the more stable its predictions become, though the gains level off while training cost keeps growing.
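The two sources of randomness can be sketched with the standard library alone. This is a minimal illustration, not a full tree learner: the helper names are hypothetical, the row indices stand in for real records, and the feature names are assumed column names for a golf-style dataset.

```python
import random


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows with replacement (the 'bagging' step)."""
    return [rng.choice(rows) for _ in rows]


def random_feature_subset(feature_names, k, rng):
    """Pick k distinct features to consider (the feature-randomness step)."""
    return rng.sample(feature_names, k)


rng = random.Random(0)  # fixed seed so the sketch is repeatable
rows = list(range(10))  # stand-in for 10 training records
features = ["outlook", "temperature", "humidity", "wind"]  # assumed names

for tree_id in range(3):
    sample = bootstrap_sample(rows, rng)
    subset = random_feature_subset(features, 2, rng)
    # A real implementation would now grow a decision tree on `sample`.
    print(tree_id, sorted(sample), subset)
```

Because sampling is done with replacement, some rows appear several times in a tree's sample and others not at all, which is why the trees differ from one another. In the usual formulation of the algorithm, the feature subset is redrawn at every split rather than once per tree; the sketch draws it once per tree only to keep the output short.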

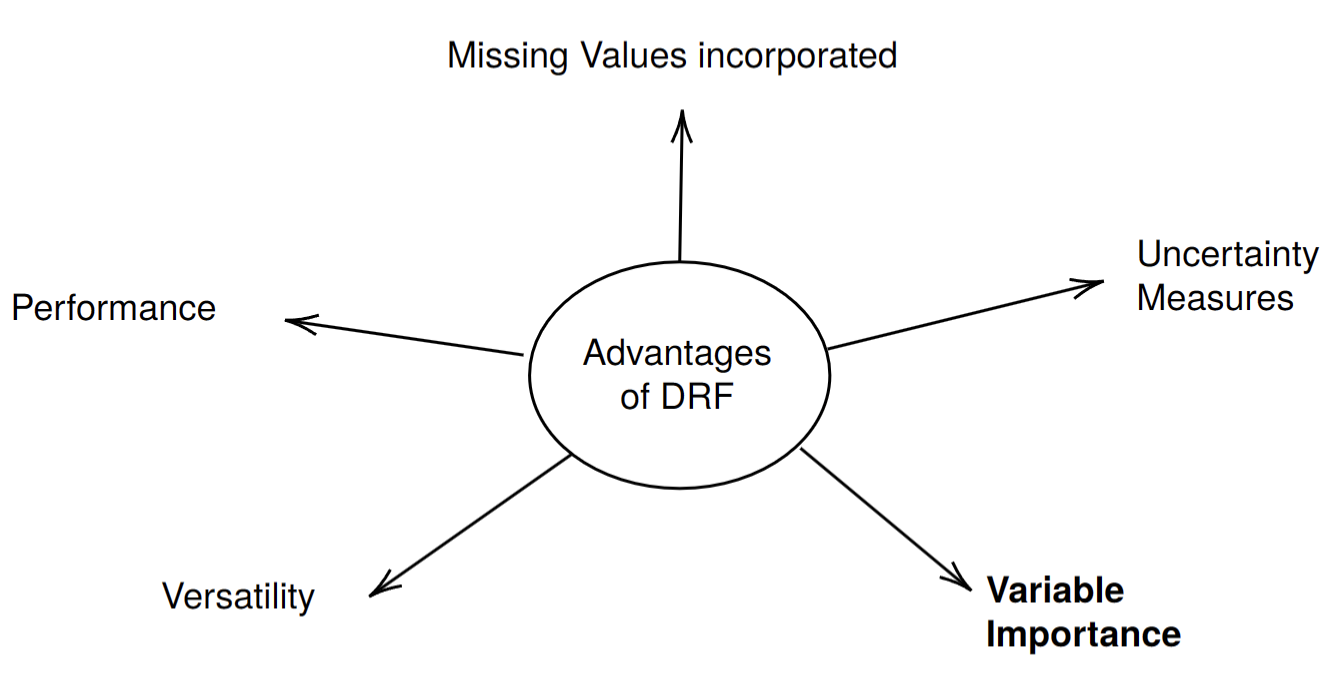

Random forest is a commonly used machine learning algorithm, trademarked by Leo Breiman and Adele Cutler, that combines the output of multiple decision trees to reach a single result. Its ease of use and flexibility have fueled its adoption, as it handles both classification and regression problems. Bagging and feature randomness reduce the variance of the individual trees, which is what makes the combined model accurate.
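As one possible illustration of handling both problem types, the sketch below uses scikit-learn, which is an assumption of this example rather than something the text prescribes. The data is synthetic (generated with `make_classification` and `make_regression`), and the parameter values are arbitrary choices for the demonstration.

```python
from sklearn.datasets import make_classification, make_regression
from sklearn.ensemble import RandomForestClassifier, RandomForestRegressor
from sklearn.model_selection import train_test_split

# Classification on synthetic data: 200 samples, 4 features, 2 classes.
X, y = make_classification(
    n_samples=200, n_features=4, n_informative=2, n_redundant=0, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)
clf = RandomForestClassifier(n_estimators=50, random_state=0)
clf.fit(X_train, y_train)
class_pred = clf.predict(X_test)
print("classification accuracy:", clf.score(X_test, y_test))

# Regression on synthetic data: same workflow, different estimator.
Xr, yr = make_regression(n_samples=200, n_features=4, noise=10.0, random_state=0)
Xr_train, Xr_test, yr_train, yr_test = train_test_split(
    Xr, yr, test_size=0.25, random_state=0
)
reg = RandomForestRegressor(n_estimators=50, random_state=0)
reg.fit(Xr_train, yr_train)
reg_pred = reg.predict(Xr_test)
print("regression R^2:", reg.score(Xr_test, yr_test))
```

Here `n_estimators` sets the number of trees in the forest, and `random_state` fixes the random sampling so that repeated runs give the same model.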
