RAG Pipeline Evaluation Using DeepEval and Haystack

DeepEval is a framework for evaluating retrieval-augmented generation (RAG) pipelines. It supports metrics such as context relevance, answer correctness, faithfulness, and more. Refer to this link for a detailed tutorial on how to create RAG pipelines. In this notebook, we use the SQuAD v2 dataset to obtain the contexts, questions, and ground-truth answers.
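As a rough illustration of what "contexts, questions, and ground-truth answers" look like in practice, here is a minimal sketch that turns SQuAD v2-style rows into evaluation records. It assumes the field layout of the Hugging Face `squad_v2` dataset (`context`, `question`, and `answers` holding `text` and `answer_start` lists, where an empty list marks an unanswerable question). The two rows are hand-written examples, and `EvalRecord` and `records_from_squad_v2` are hypothetical names, not part of DeepEval or Haystack.

```python
from dataclasses import dataclass, field


@dataclass
class EvalRecord:
    """One evaluation example: a question, its source context, and gold answers (hypothetical type)."""
    question: str
    context: str
    ground_truths: list[str] = field(default_factory=list)

    @property
    def answerable(self) -> bool:
        # SQuAD v2 marks unanswerable questions with an empty answer list.
        return len(self.ground_truths) > 0


def records_from_squad_v2(rows: list[dict]) -> list[EvalRecord]:
    """Convert rows laid out like the Hugging Face `squad_v2` dataset into EvalRecords."""
    return [
        EvalRecord(
            question=row["question"],
            context=row["context"],
            ground_truths=list(row["answers"]["text"]),
        )
        for row in rows
    ]


# Two hand-written rows in the SQuAD v2 schema (illustrative, not real dataset entries).
sample_rows = [
    {
        "id": "q1",
        "title": "Haystack",
        "context": "Haystack is an open-source framework for building LLM applications.",
        "question": "What is Haystack?",
        "answers": {"text": ["an open-source framework"], "answer_start": [12]},
    },
    {
        "id": "q2",
        "title": "Haystack",
        "context": "Haystack is an open-source framework for building LLM applications.",
        "question": "Who founded Haystack?",
        "answers": {"text": [], "answer_start": []},
    },
]

records = records_from_squad_v2(sample_rows)
for r in records:
    print(r.question, "->", r.ground_truths or "<unanswerable>")
```

With the `datasets` library installed, real rows could come from something like `load_dataset("squad_v2", split="validation")` instead of the hand-written list. Keeping the unanswerable questions lets you check whether the pipeline correctly declines to answer when the context does not contain the answer.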

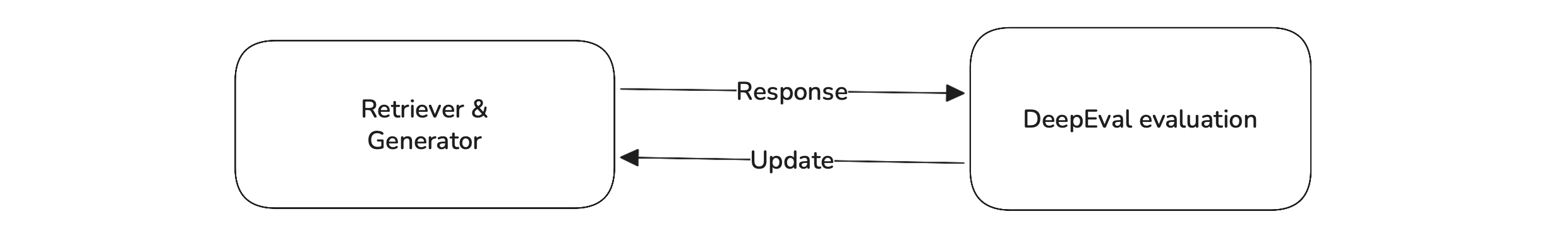

Since a satisfactory LLM output depends entirely on the quality of the retriever and the generator, RAG evaluation focuses on evaluating the retriever and the generator in your pipeline separately. This also makes debugging easier and helps pinpoint issues at the component level.

In my previous post on evaluating RAG pipelines, I introduced two approaches to evaluating RAG pipelines; in this post, I will show you how to implement both approaches in detail. Incorporating these evaluation techniques and metrics allows for a comprehensive assessment of RAG pipelines, ensuring that both the retrieval and the generation components function optimally. A complete evaluation framework for RAG pipelines built with Haystack runs your pipeline against evaluation questions with known answers, then scores the results across seven metrics covering retrieval accuracy, answer quality, and context relevance.
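To make the retriever/generator split concrete, the sketch below scores each component on its own. It assumes you have logged, per question, the ranked document ids the retriever returned, the id of the document that actually contains the answer, and the generator's final answer. The metrics are common stand-ins chosen for this sketch (hit rate@k and mean reciprocal rank for retrieval, SQuAD-style token F1 for answers); they are not the seven metrics mentioned above and not DeepEval's LLM-judge metrics. All ids and answers are made up.

```python
import re
import string
from collections import Counter


def hit_rate(retrieved: list[list[str]], relevant: list[str], k: int) -> float:
    """Fraction of queries whose relevant document id appears in the top-k results."""
    hits = sum(1 for docs, gold in zip(retrieved, relevant) if gold in docs[:k])
    return hits / len(relevant)


def mean_reciprocal_rank(retrieved: list[list[str]], relevant: list[str]) -> float:
    """Average of 1/rank of the relevant document (0 when it was not retrieved)."""
    total = 0.0
    for docs, gold in zip(retrieved, relevant):
        if gold in docs:
            total += 1.0 / (docs.index(gold) + 1)
    return total / len(relevant)


def normalize(text: str) -> list[str]:
    """SQuAD-style normalization: lowercase, drop punctuation and articles, split on whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()


def token_f1(prediction: str, ground_truth: str) -> float:
    """Token-overlap F1 between a generated answer and one gold answer."""
    pred, gold = normalize(prediction), normalize(ground_truth)
    overlap = sum((Counter(pred) & Counter(gold)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(gold)
    return 2 * precision * recall / (precision + recall)


# Hypothetical logs for three questions: ranked document ids from the retriever,
retrieved = [["d1", "d7", "d3"], ["d4", "d2", "d9"], ["d5", "d6", "d8"]]
# the id of the document holding each gold answer,
relevant = ["d1", "d2", "d0"]
# and the generator's answers next to the gold answers.
answers = ["42", "the red fox", "blue"]
gold_answers = ["42", "red fox", "green"]

retriever_report = {
    "hit_rate@2": hit_rate(retrieved, relevant, k=2),
    "mrr": mean_reciprocal_rank(retrieved, relevant),
}
generator_report = {
    "mean_f1": sum(token_f1(p, g) for p, g in zip(answers, gold_answers)) / len(answers),
}
print(retriever_report)
print(generator_report)
```

Keeping the two reports separate is what enables component-level debugging: if retrieval scores are high but answer F1 is low, the generator is the more likely culprit, and vice versa. The official SQuAD v2 scoring also treats an empty prediction against an empty gold answer as a match; that case is omitted here.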

RAG Evaluation with DeepEval, the Open-Source LLM Evaluation Framework

In this tutorial, we'll walk through how to set up a full testing suite for RAG applications using DeepEval. This article will show you how to generate realistic test cases using DeepEval, an open-source framework that simplifies LLM evaluation, so that you can benchmark your RAG pipeline before it goes live. I just published a detailed guide on evaluating retrieval-augmented generation (RAG) pipelines using DeepEval and Haystack metrics! 🎯 If you're working with RAG pipelines or … Explore how DeepEval's evaluation framework uses LLM-judge metrics and golden datasets to improve the reliability of RAG pipelines.
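The testing-suite idea can be sketched without any external service: a golden dataset of inputs with expected outputs, a pipeline under test, and a judge that scores each output against a pass threshold. In DeepEval, this role is played by test cases (`LLMTestCase`, with fields such as `input`, `actual_output`, `expected_output`, and `retrieval_context`) scored by LLM-judge metrics that each take a `threshold`; the plain-Python version below only mimics that shape. `GoldenExample`, `run_suite`, `keyword_judge`, and `toy_pipeline` are hypothetical names, and the keyword judge is a deterministic stand-in for an LLM judge, not a substitute for one.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldenExample:
    """A golden example: an input plus the answer we expect (hypothetical type)."""
    input: str
    expected_output: str


@dataclass
class Result:
    input: str
    actual_output: str
    score: float
    passed: bool


def run_suite(
    goldens: list[GoldenExample],
    pipeline: Callable[[str], str],
    judge: Callable[[str, str, str], float],
    threshold: float = 0.5,
) -> list[Result]:
    """Run every golden input through the pipeline and score the output with the judge."""
    results = []
    for g in goldens:
        output = pipeline(g.input)
        score = judge(g.input, output, g.expected_output)
        results.append(Result(g.input, output, score, score >= threshold))
    return results


def keyword_judge(question: str, actual: str, expected: str) -> float:
    """Stand-in for an LLM judge: share of expected words that appear in the output."""
    expected_words = set(expected.lower().split())
    actual_words = set(actual.lower().split())
    if not expected_words:
        return 0.0
    return len(expected_words & actual_words) / len(expected_words)


def toy_pipeline(question: str) -> str:
    """Placeholder for a call into a real Haystack RAG pipeline."""
    canned = {"What does RAG stand for?": "retrieval augmented generation"}
    return canned.get(question, "I don't know")


goldens = [
    GoldenExample("What does RAG stand for?", "retrieval augmented generation"),
    GoldenExample("Which dataset supplies the questions?", "SQuAD v2"),
]
results = run_suite(goldens, toy_pipeline, keyword_judge, threshold=0.7)
for r in results:
    print(f"{'PASS' if r.passed else 'FAIL'} {r.score:.2f} {r.input}")
```

Because the judge is passed in as a function, swapping `toy_pipeline` for a real Haystack pipeline call and `keyword_judge` for an LLM-based scorer leaves the harness itself unchanged.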