Quick Introduction: DeepEval, the Open-Source LLM Evaluation Framework

Introduction to LLM Evals: DeepEval, the Open-Source LLM Evaluation Framework

DeepEval is a simple-to-use, open-source framework for evaluating large language model (LLM) systems. It is similar to pytest but specialized for unit testing LLM applications, and it is designed to make building and iterating on those applications easy.
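To make the pytest comparison concrete, here is a minimal sketch of what unit testing an LLM output can look like. It does not use DeepEval's API: `ToyTestCase`, `keyword_coverage`, and the 0.7 threshold are hypothetical names and values chosen only for illustration, and the keyword check stands in for a real evaluation metric.

```python
from dataclasses import dataclass


@dataclass
class ToyTestCase:
    """Hypothetical container for one LLM interaction (not a DeepEval class)."""
    input: str
    actual_output: str
    expected_keywords: list[str]


def keyword_coverage(case: ToyTestCase) -> float:
    """Fraction of expected keywords found in the output (case-insensitive)."""
    if not case.expected_keywords:
        return 1.0
    text = case.actual_output.lower()
    hits = sum(1 for kw in case.expected_keywords if kw.lower() in text)
    return hits / len(case.expected_keywords)


def test_refund_answer_mentions_policy():
    # In a real test, actual_output would come from your LLM application.
    case = ToyTestCase(
        input="What is your refund policy?",
        actual_output="You can request a full refund within 30 days of purchase.",
        expected_keywords=["refund", "30 days"],
    )
    # Pass or fail is decided by comparing a score against a threshold.
    assert keyword_coverage(case) >= 0.7
```

Saved in a file such as `test_llm.py` (an assumed name), this can be collected and run by pytest like any other unit test. The difference from ordinary unit tests is that the assertion is on a score rather than on exact equality.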

DeepEval is designed specifically for LLMs, enabling developers to build, improve, test, and monitor LLM-based applications. It provides a suite of metrics and features, including synthetic dataset generation and real-time evaluations, and it integrates with testing frameworks such as pytest.

Confident AI, by the authors of DeepEval, is a cloud LLM evaluation platform. It allows you to use DeepEval for team-wide, collaborative AI testing. These tutorials show how you can use DeepEval to improve your LLM application one step at a time.

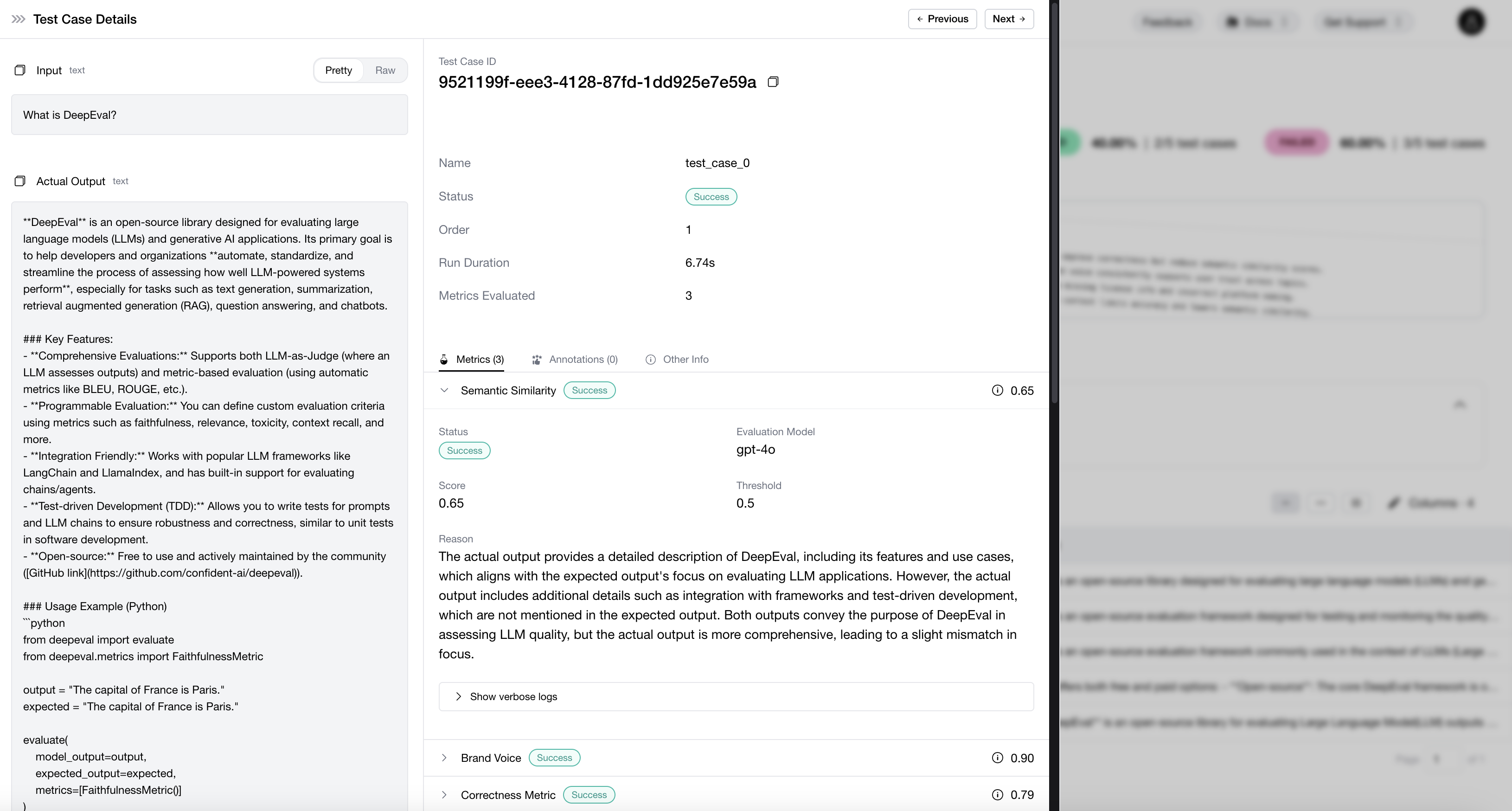

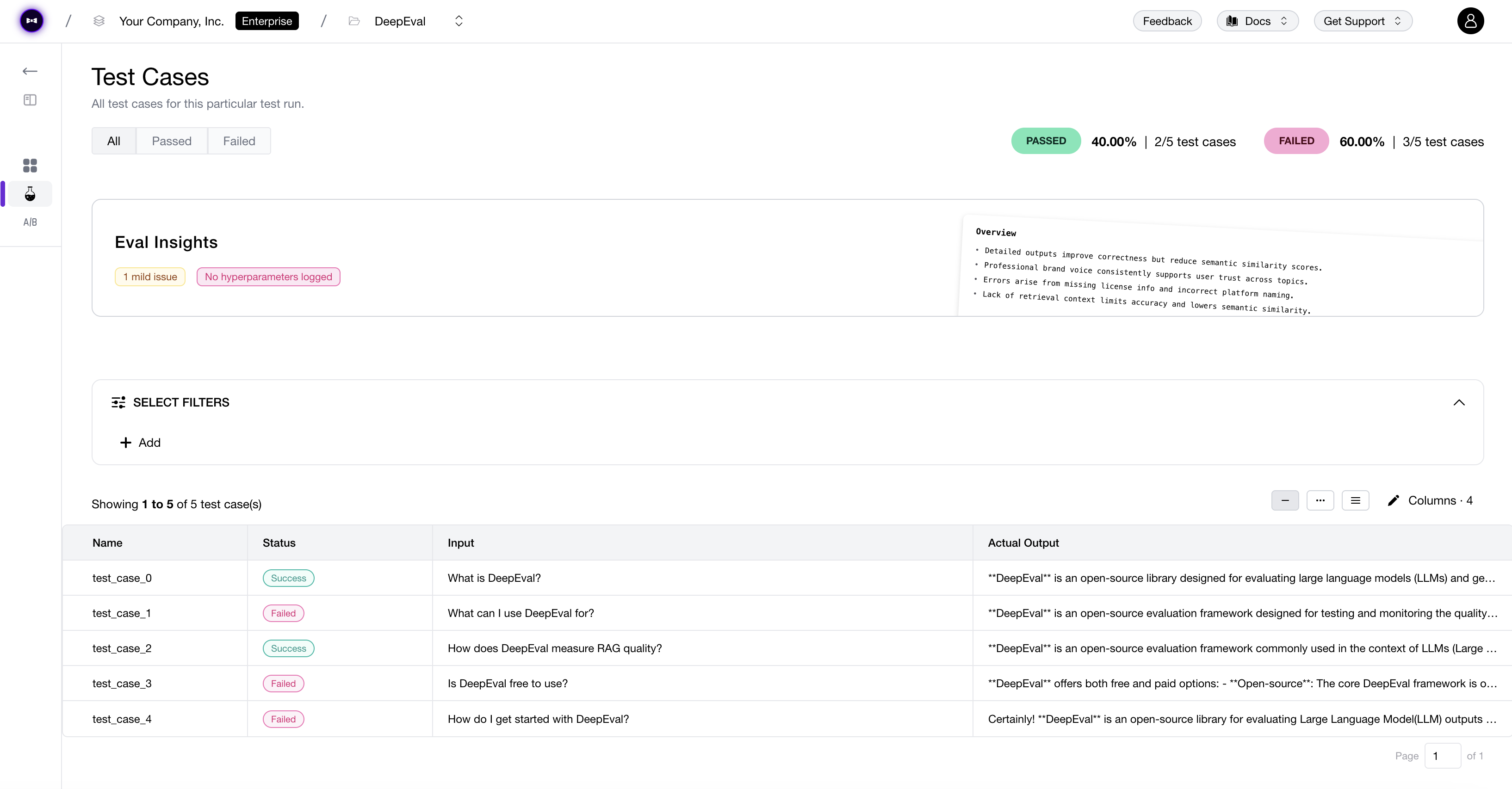

How an LLM Evaluation Framework Can Help You Improve Your Product

Running an LLM evaluation creates a test run: a collection of test cases that benchmarks your LLM application at a specific point in time. If you're logged into Confident AI, you'll also receive a fully sharable LLM testing report on the cloud.

If you're looking to learn how LLM evaluation works, building your own LLM evaluation framework is a great choice. However, if you want something robust and working, use DeepEval; we've done all the hard work for you already. Unlike traditional unit testing frameworks, DeepEval is tailored specifically to LLM outputs, which makes it easier to test AI responses. It incorporates recent research and offers 40 evaluation metrics to assess LLM performance across various dimensions.
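For readers who want to try building their own framework as a learning exercise, the idea of a test run can be sketched in a few lines. This is an illustration under stated assumptions, not DeepEval's implementation: `EvalRun`, `CaseResult`, `run_evaluation`, and the exact-match metric are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CaseResult:
    name: str
    score: float
    passed: bool


@dataclass
class EvalRun:
    """Hypothetical test run: a set of results plus the time they were produced."""
    results: list[CaseResult]
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    @property
    def pass_rate(self) -> float:
        if not self.results:
            return 0.0
        return sum(r.passed for r in self.results) / len(self.results)


def exact_match(actual: str, expected: str) -> float:
    """Toy metric: 1.0 if the strings match ignoring case and outer whitespace."""
    return 1.0 if actual.strip().lower() == expected.strip().lower() else 0.0


def run_evaluation(cases, metric=exact_match, threshold=1.0) -> EvalRun:
    """Score each (name, actual, expected) case and bundle the results into a run."""
    results = []
    for name, actual, expected in cases:
        score = metric(actual, expected)
        results.append(CaseResult(name, score, score >= threshold))
    return EvalRun(results)


run = run_evaluation([("capital", "Paris", "paris"), ("math", "5", "4")])
print(run.created_at.isoformat(), f"pass rate: {run.pass_rate:.0%}")
```

Because each run carries its own timestamp, runs taken before and after a prompt or model change can be compared side by side, which is the benchmarking role described above.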


