Questions About Delta Model · Issue #226 · lm-sys/FastChat · GitHub

Questions About Delta Model (Issue #226, lm-sys/FastChat on GitHub): What is the delta model, and how is it trained? The delta model appears to be the same size as LLaMA 13B.

A related document covers the model compression techniques implemented in FastChat. Model compression is essential for reducing the memory footprint and improving the inference efficiency of large language models (LLMs).
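One way to see why a delta checkpoint would be as large as the base model: if the delta stores one difference value per parameter (fine-tuned weight minus base weight), it has exactly as many entries as the base model. The sketch below illustrates that idea with tiny Python lists standing in for weight tensors. The function names `make_delta` and `apply_delta` and the parameter name `layer.weight` are hypothetical and chosen for illustration only; this is not FastChat's actual code.

```python
# Illustrative sketch only: short lists stand in for real weight tensors.
# Assumption: a delta holds (target - base) for every parameter.

def make_delta(base, target):
    """Compute per-parameter differences between target and base weights."""
    return {
        name: [t - b for t, b in zip(target[name], base[name])]
        for name in target
    }

def apply_delta(base, delta):
    """Recover the target weights by adding the delta back onto the base."""
    return {
        name: [b + d for b, d in zip(base[name], delta[name])]
        for name in delta
    }

# Hypothetical toy weights (values chosen to be exactly representable).
base = {"layer.weight": [0.5, -1.0, 2.0]}
target = {"layer.weight": [0.75, -1.5, 2.0]}

delta = make_delta(base, target)
recovered = apply_delta(base, delta)

# The delta has one entry per base parameter, so it is the same size.
same_size = len(delta["layer.weight"]) == len(base["layer.weight"])
```

Under this assumption, the delta alone is not a usable model: the target weights exist only after the delta is added to the matching base weights.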

Qwen Training (Issue #2351, lm-sys/FastChat on GitHub): We discussed the various models supported by FastChat, such as LMSYS models and other popular models, highlighting features like quantization and backend support. We use MT-bench, a set of challenging multi-turn, open-ended questions, to evaluate models. To automate the evaluation process, we prompt strong LLMs like GPT-4 to act as judges and assess the quality of the models' responses.

[09/2025] Invited talk on scalable sim-to-real learning for general-purpose humanoid skills at the GRASP SFI Seminar. [08/2025] Passed my PhD thesis proposal! [12/2024] Received the NVIDIA Graduate Fellowship. Thanks, NVIDIA! [11/2024] Received the CMU RI Presidential Fellowship. Thanks, CMU! [07/2024] ABS was selected as an Outstanding Student Paper Award finalist at RSS 2024! [04/2024] Invited talk on.

Motivated by these observations and by the economic mismatch between open and closed model ecosystems, we present Squeeze Evolve, a multi-model orchestration framework that routes each evolutionary operation to the most cost-effective model based on confidence-derived fitness signals, reserving expensive models for only the highest marginal.
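The judge-based evaluation described above (benchmark questions answered by a model, then scored by a strong LLM) can be sketched roughly as follows. This is a minimal illustration, not the MT-bench implementation: the prompt wording, the `[[rating]]` output convention, and the names `build_judge_prompt`, `parse_rating`, `stub_judge`, and `average_score` are all assumptions, and `stub_judge` is a canned stand-in for a real call to a judge model such as GPT-4.

```python
import re

def build_judge_prompt(question, answer):
    """Assemble a hypothetical judging prompt for one question/answer pair."""
    return (
        "Please act as an impartial judge and rate the response below "
        "on a scale of 1 to 10. Give the rating in the form [[rating]].\n\n"
        f"[Question]\n{question}\n\n[Answer]\n{answer}\n"
    )

def parse_rating(judgment):
    """Extract the number inside [[...]]; return None if no rating is found."""
    match = re.search(r"\[\[(\d+(?:\.\d+)?)\]\]", judgment)
    return float(match.group(1)) if match else None

def stub_judge(prompt):
    """Stand-in for a real judge model call; always returns the same verdict."""
    return "The answer is accurate and clear. Rating: [[8]]"

def average_score(pairs, judge):
    """Score each (question, answer) pair with the judge and average the results."""
    scores = []
    for question, answer in pairs:
        rating = parse_rating(judge(build_judge_prompt(question, answer)))
        if rating is not None:  # skip judgments with no parseable rating
            scores.append(rating)
    return sum(scores) / len(scores) if scores else None

# Hypothetical example data.
pairs = [("What is 2 + 2?", "4"), ("Name a prime number.", "7")]
mean_rating = average_score(pairs, stub_judge)
```

Passing the judge in as a function keeps the scoring loop independent of any particular API client, so the stub can be replaced by a real model call without changing the rest of the sketch.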

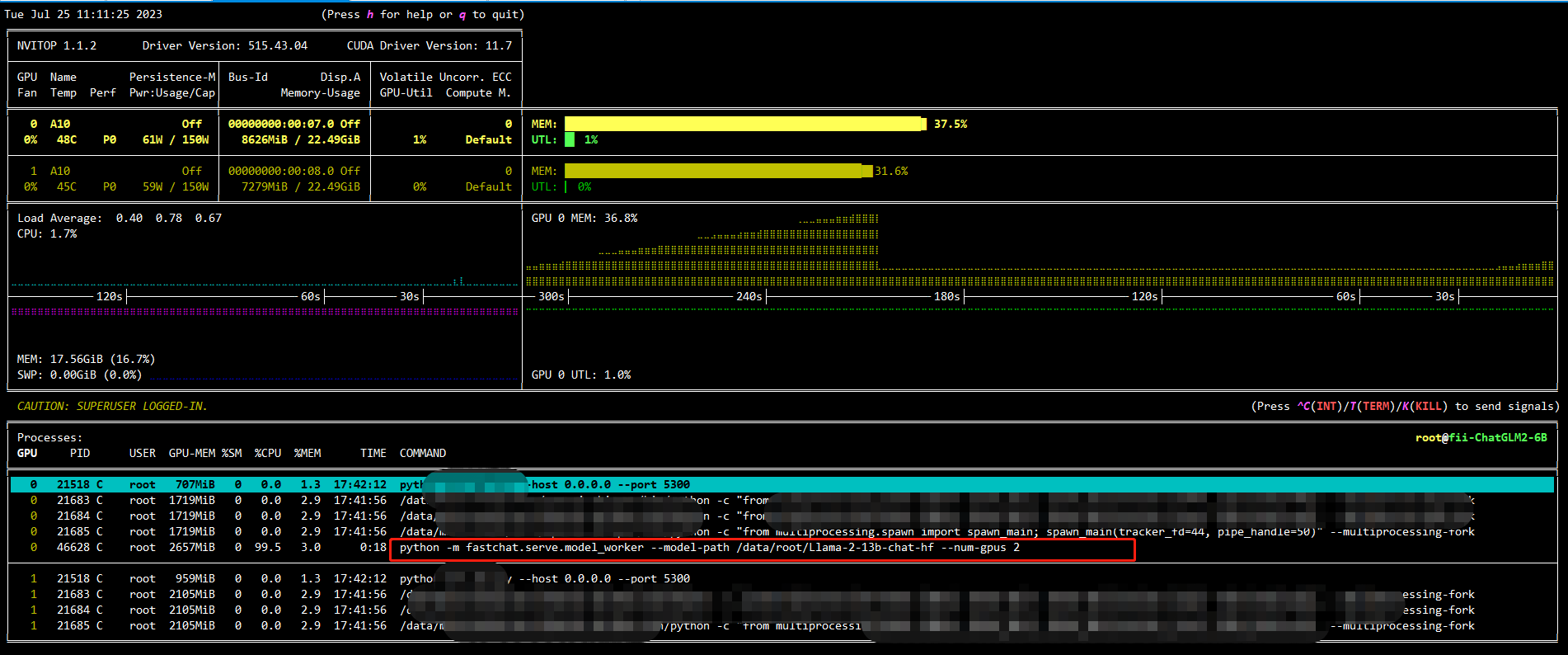

Why Can't FastChat Use Multiple GPUs (Issue #2071, lm-sys/FastChat on GitHub).

How to contribute (AI Commander): once released on GitHub, developers can contribute by improving core functionalities, adding support for new AI models, creating plugins for specific use cases or technologies, enhancing the user interface and experience, writing documentation and tutorials, and reporting issues and suggesting new features.

A preliminary evaluation of the model quality is conducted by creating a set of 80 diverse questions and utilizing GPT-4 to judge the model outputs. See vicuna.lmsys.org for more details.

Starting from scratch: a professional evaluation of your large language model with MT-bench. The first time you train your own large language model, the sense of accomplishment is unmatched. Once the excitement fades, though, a practical question remains: how strong is this model really, and where does it stand relative to the mainstream models on the market? At that point you need an objective, professional evaluation tool, and MT-bench was built for exactly that purpose.

Interactive architecture diagram for lm-sys/FastChat.
