Question Unable To Install Mlc Llm Error Module Tvm Script Parser

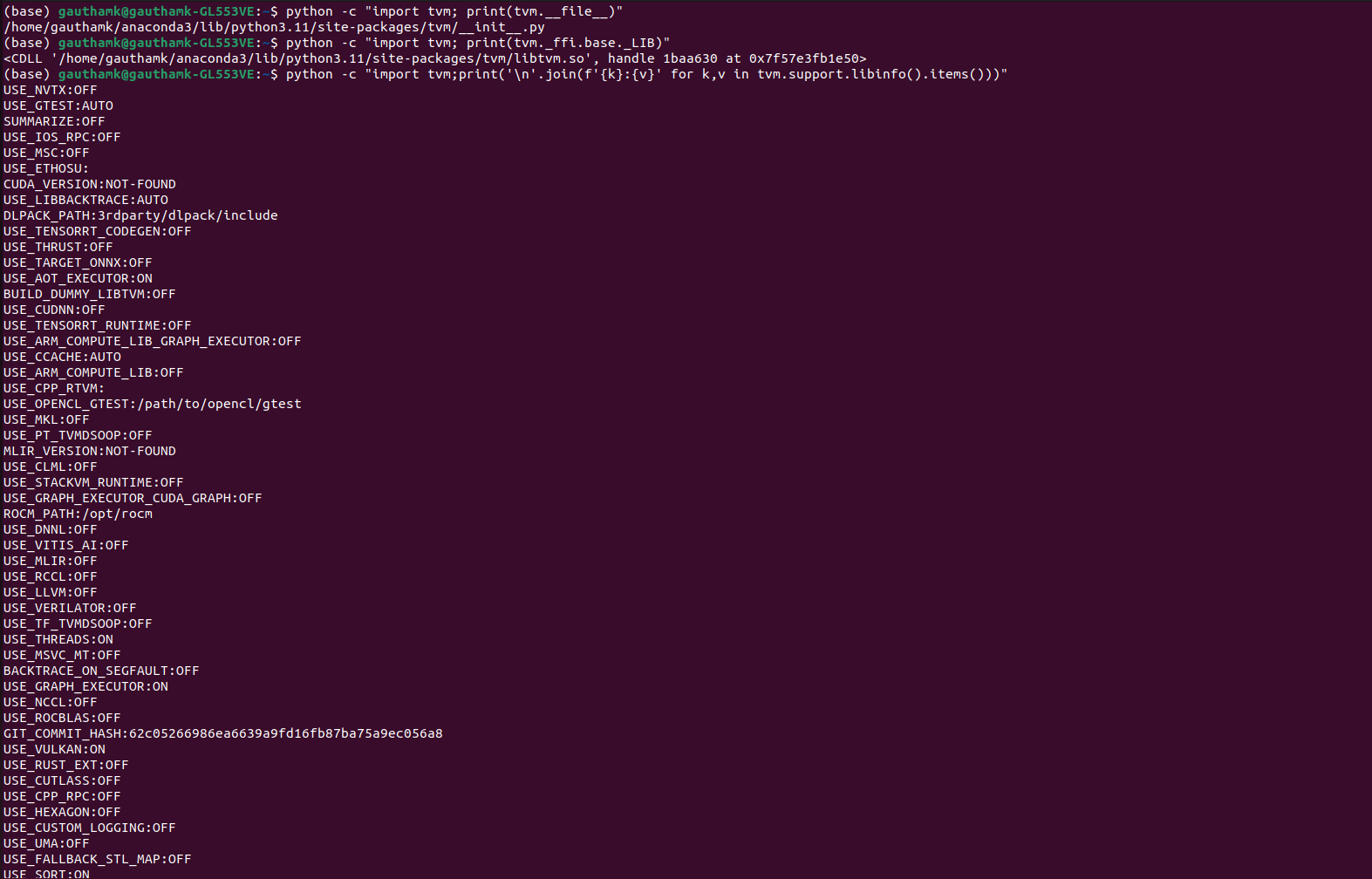

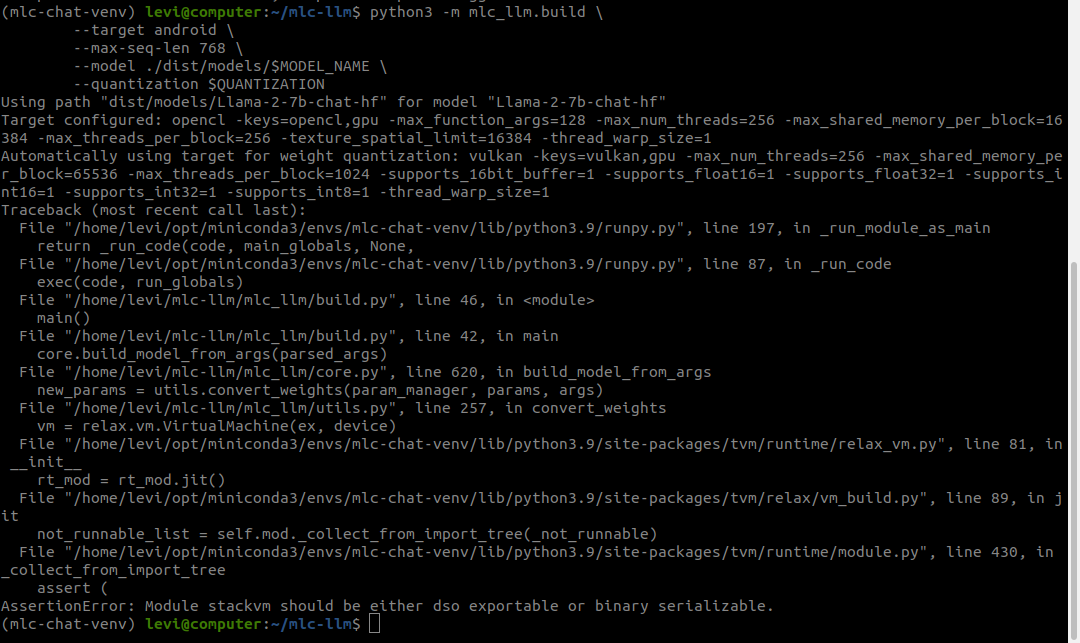

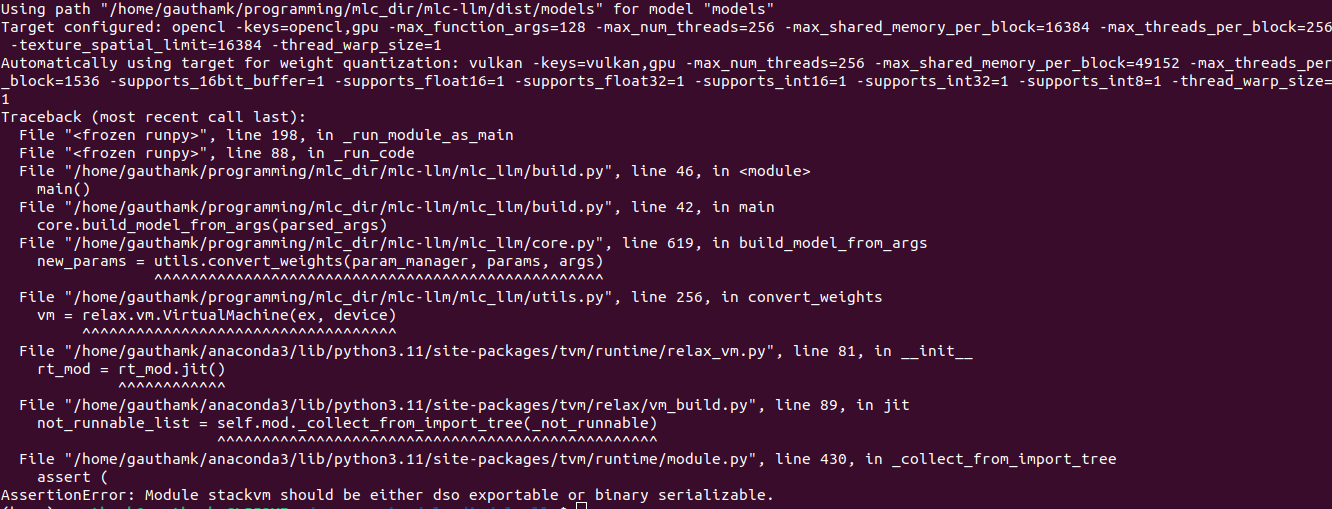

Error With Relax While Build Vicuna 7b With Mlc Llm Build Py Module

We provide x86_64 Linux wheels, and we do not know why installation fails on some devices. We are more than happy to help build new wheels if you can provide the instructions.

As a concrete example, MLC LLM compilation is implemented in pure Python using TVM's API. TVM can be installed directly from a prebuilt developer package, or built from source.
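Because the prebuilt wheels mentioned above target x86_64 Linux, it can help to check what the local interpreter reports before assuming the wheel itself is broken. The following is a minimal sketch using only the standard library. The function names `wheel_platform_summary` and `looks_like_x86_64_linux` are illustrative assumptions and are not part of MLC LLM or TVM.

```python
import platform
import sys


def wheel_platform_summary():
    """Collect basic host facts that affect which prebuilt wheel is compatible."""
    return {
        "system": platform.system(),    # e.g. "Linux", "Windows", "Darwin"
        "machine": platform.machine(),  # e.g. "x86_64", "AMD64", "arm64"
        "python": "{}.{}".format(*sys.version_info[:2]),
    }


def looks_like_x86_64_linux(summary):
    """Hypothetical helper: True when the host matches an x86_64 Linux wheel."""
    return summary["system"] == "Linux" and summary["machine"] in ("x86_64", "AMD64")


if __name__ == "__main__":
    info = wheel_platform_summary()
    print(info)
    print("x86_64 Linux host:", looks_like_x86_64_linux(info))
```

If the second line prints `False`, the x86_64 Linux wheels described above are probably not the right ones for the machine, and building from source may be the more realistic option.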

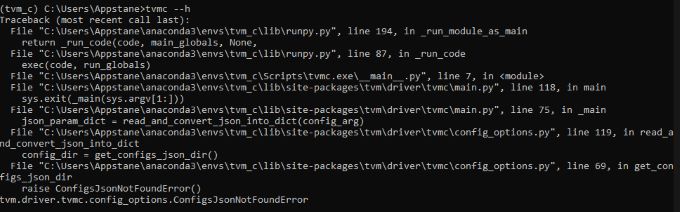

This page covers the installation of the Apache TVM Unity compiler infrastructure and the configuration of the GPU drivers and SDKs required for model compilation in MLC LLM.

Originally, this was the main cause of the error, and I believe many Windows users installing TVM encountered the same issue for the same reason. After completing all the setup steps, `tvmc -h` executed successfully.

We'll also want to install TVM Unity to be able to build new models. This is a two-step process. In Python, chat templates don't seem to be supported. Note that if you run without the library path, it will not JIT-compile the module! Alternatively, you can run with mlc chat. MLC's benchmarking is a bit unfortunate.

Hi, we tried reproducing it on a Windows 11 machine with CUDA 12.6 (RTX 3050 Ti) and we couldn't reproduce the bug. We don't yet support CUDA on Windows. MLC works through Vulkan on Windows for now, so it's likely not a CUDA issue here. It might be some other issue causing the library load failure.
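To tell a missing Python module (presumably the `tvm.script.parser` error named in the page title) apart from a native library load failure, one option is to inspect the installed package on disk without importing it, since importing `tvm` is what triggers the library load. This is a minimal sketch under that assumption. The helper names `package_dir` and `has_submodule` are hypothetical and not part of TVM.

```python
import importlib.util
from pathlib import Path


def package_dir(name):
    """Return the install directory of a top-level package without importing it."""
    spec = importlib.util.find_spec(name)
    if spec is None or not spec.submodule_search_locations:
        return None  # not installed, or a single-file module rather than a package
    return Path(next(iter(spec.submodule_search_locations)))


def has_submodule(pkg_dir, dotted):
    """Check on disk whether a submodule such as 'script.parser' exists."""
    target = Path(pkg_dir).joinpath(*dotted.split("."))
    return (target / "__init__.py").is_file() or target.with_suffix(".py").is_file()


if __name__ == "__main__":
    tvm_dir = package_dir("tvm")
    if tvm_dir is None:
        print("tvm is not installed in this environment")
    else:
        print("tvm found at:", tvm_dir)
        print("tvm.script.parser present:", has_submodule(tvm_dir, "script.parser"))
```

If the package is found but the submodule is absent, the installed TVM build is probably not the one MLC LLM expects. If both are present, the problem is more likely the library load failure discussed above.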

The issue here was that, if I follow the MLC LLM build-from-source instructions, at least on my setup, the FFI is created without awareness of any specifics regarding TVM.

The MLC LLM Python package can be installed directly from a prebuilt developer package, or built from source. Option 1: prebuilt package. We provide nightly built pip wheels for MLC LLM. Select your operating system and compute platform, then run the corresponding command in your terminal.

The reported error is:

`FileNotFoundError: Could not find module 'path\to\site-packages\tvm\tvm.dll' (or one of its dependencies). Try using the full path with constructor syntax.`

The file is there, so something else is missing. By following the instructions alone, there is no way to figure out what is missing.

Could you remove tvm and try to install it using `pip install -I mlc_ai_nightly -f https://mlc.ai/wheels`?

Same error here. Same issue when building mlc-ai from source. Solved by building TVM. (I have a Vulkan problem when using the prebuilt package.)
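The `FileNotFoundError` above is ambiguous because it covers both a missing file and a missing dependency of that file. A small check with `ctypes` can at least separate the two cases. This is a sketch, not a fix: `try_load` is a hypothetical helper, and the path in the example is the placeholder from the error message, to be replaced with the real location.

```python
import ctypes
from pathlib import Path


def try_load(lib_path):
    """Attempt to load a shared library by full path; return (ok, message)."""
    lib_path = Path(lib_path)
    if not lib_path.is_file():
        return False, f"file does not exist: {lib_path}"
    try:
        ctypes.CDLL(str(lib_path))
    except OSError as exc:
        # The file exists, so the failure points at its contents or dependencies.
        return False, f"file exists but failed to load: {exc}"
    return True, "loaded"


if __name__ == "__main__":
    # Placeholder path copied from the error message; substitute your own.
    ok, message = try_load(r"path\to\site-packages\tvm\tvm.dll")
    print(ok, message)
```

When the result is "file exists but failed to load", a dependent DLL is the likely culprit. On Windows with Python 3.8 or later, dependent DLLs are only resolved from a restricted set of directories, and `os.add_dll_directory` can add another directory to that search.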


