Quantization Quantizemodelstest Testmodelendtoend Function Does Not

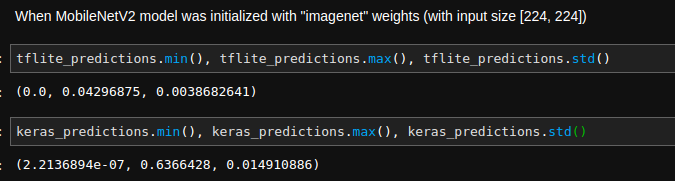

Once you apply quantization to a model during training (quantization-aware training), accuracy drops sharply at first, but it recovers quite quickly if you start from pre-trained weights. The quantization API reference documents the quantization APIs, such as quantization passes, quantized tensor operations, and supported quantized modules and functions.
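A minimal sketch of the quantization-aware training flow described above, assuming PyTorch's eager-mode API in `torch.ao.quantization` (the paragraph itself does not name a library). `TinyNet`, `quantization_aware_finetune`, the layer sizes, and the random data are hypothetical. A real run would load pre-trained weights before calling `prepare_qat`, which is what lets accuracy recover quickly once fake quantization is switched on.

```python
import torch
import torch.nn as nn
import torch.ao.quantization as tq


class TinyNet(nn.Module):
    """Hypothetical toy model. QuantStub/DeQuantStub mark where tensors enter
    and leave the quantized region in eager-mode quantization."""

    def __init__(self):
        super().__init__()
        self.quant = tq.QuantStub()
        self.fc1 = nn.Linear(16, 32)
        self.relu = nn.ReLU()
        self.fc2 = nn.Linear(32, 4)
        self.dequant = tq.DeQuantStub()

    def forward(self, x):
        x = self.quant(x)
        x = self.relu(self.fc1(x))
        x = self.fc2(x)
        return self.dequant(x)


def quantization_aware_finetune(model, data, targets, steps=20):
    # Pick a backend this build supports ("fbgemm" on x86, "qnnpack" on ARM).
    engine = "fbgemm" if "fbgemm" in torch.backends.quantized.supported_engines else "qnnpack"
    torch.backends.quantized.engine = engine

    model.train()  # prepare_qat requires training mode
    model.qconfig = tq.get_default_qat_qconfig(engine)
    # Inserts fake-quantize modules; the weights are still float32 at this point.
    tq.prepare_qat(model, inplace=True)

    # Fine-tune with fake quantization active so the weights adapt to int8 rounding.
    opt = torch.optim.SGD(model.parameters(), lr=0.01)
    loss_fn = nn.CrossEntropyLoss()
    for _ in range(steps):
        opt.zero_grad()
        loss = loss_fn(model(data), targets)
        loss.backward()
        opt.step()

    # Converting to real int8 modules is a separate step after training.
    model.eval()
    return tq.convert(model)
```

Keeping `convert` outside the training loop mirrors the point that a quantization-aware model is not itself quantized until that final step.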

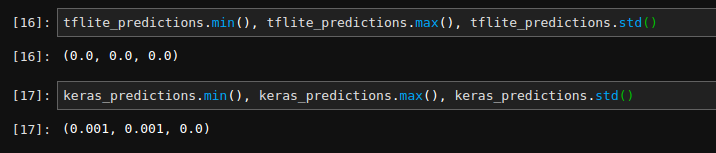

Even for quantization demos, decent weights are needed. The code will work even if you skip training (the quantization part is independent), but accuracy will be poor. Post-training dynamic quantization (PTQ dynamic) typically quantizes the weights of linear layers in advance, while activations are quantized dynamically during inference. This differs from static quantization, where activation scales and zero points are computed ahead of time from calibration data.

The resulting model consists entirely of quantized layers, except for my custom layer, which was not quantized. I'll note that when I replace the custom layer `myconv` with the implementation from the comprehensive guide, the quantization works.

Quantization provides several advantages when deploying models on device compared to their floating-point counterparts: faster inference, reduced memory usage, and lower power consumption. These benefits, however, come with a tradeoff in model accuracy, specifically the task-specific accuracy the model was originally designed to achieve.
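The post does not show its code, but an analogous effect is easy to reproduce with PyTorch's dynamic quantization. The sketch below assumes `torch.ao.quantization.quantize_dynamic` (the current home of `torch.quantization.quantize_dynamic`); `MyCustomLayer` is a hypothetical stand-in for a user-defined layer like `myconv`. Only module types listed in the `qconfig_spec` argument are swapped for quantized versions, so the `nn.Linear` gets int8 weights while the custom layer silently stays float32.

```python
import torch
import torch.nn as nn
from torch.ao.quantization import quantize_dynamic


class MyCustomLayer(nn.Module):
    """Hypothetical stand-in for a user-defined layer such as the post's `myconv`.

    quantize_dynamic only swaps module types listed in qconfig_spec, so a
    custom class like this one keeps its float32 weights.
    """

    def __init__(self, in_features: int, out_features: int):
        super().__init__()
        self.weight = nn.Parameter(torch.randn(out_features, in_features) * 0.1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x @ self.weight.t()


float_model = nn.Sequential(
    nn.Linear(16, 32),     # standard layer: eligible for dynamic quantization
    nn.ReLU(),
    MyCustomLayer(32, 8),  # custom layer: not in qconfig_spec, stays float32
).eval()

# nn.Linear weights are converted to int8 now; activations are quantized
# on the fly at inference time.
quantized_model = quantize_dynamic(float_model, {nn.Linear}, dtype=torch.qint8)

# Print the fully qualified class of each child to see what was swapped.
for name, module in quantized_model.named_children():
    print(name, f"{type(module).__module__}.{type(module).__name__}")
```

Rewriting the custom layer in terms of standard modules, as the comprehensive-guide replacement did, is one way to make it eligible for the swap.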

Post Training Quantization

Note: a quantization-aware model is not actually quantized; creating a quantized model is a separate step. Subclassed models are not supported. Tip for better model accuracy: try "quantize some layers" to skip quantizing the layers that reduce accuracy the most.

To apply dynamic quantization, which converts the weights of supported layers (such as linear layers) from 32-bit floating-point numbers to 8-bit integers but does not convert the activations to int8 until just before the computation on them is performed, call `torch.quantization.quantize_dynamic`.

Quantization is a technique to reduce the computational and memory costs of running inference by representing the weights and activations with low-precision data types like 8-bit integer (int8) instead of the usual 32-bit floating point (float32).

What are the recommended approaches for performing quantization on the Jetson AGX Orin with PyTorch? I would appreciate any insights or solutions, especially on how to enable or use quantization on the Jetson AGX Orin.
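To make the float32-to-int8 representation concrete, here is a small sketch using PyTorch's `torch.quantize_per_tensor`. The random weight tensor is hypothetical, and the scale and zero point are chosen by a simple min/max rule as an illustrative assumption; PyTorch's observers may pick them differently (for example with a reduced range). It shows the 4x storage reduction and that the round-trip error stays within half a quantization step.

```python
import torch

# Hypothetical float32 weights standing in for one layer of a model.
torch.manual_seed(0)
w = torch.randn(256, 256)

# Affine per-tensor quantization: q = round(x / scale) + zero_point,
# clamped to the int8 range [-128, 127]. Scale and zero point come from
# a simple min/max rule (an assumption for illustration).
qmin, qmax = -128, 127
scale = (w.max() - w.min()).item() / (qmax - qmin)
zero_point = int(qmin - round(w.min().item() / scale))
qw = torch.quantize_per_tensor(w, scale=scale, zero_point=zero_point, dtype=torch.qint8)

# Storage: 4 bytes per float32 element vs. 1 byte per int8 element.
int_values = qw.int_repr()
float_bytes = w.numel() * w.element_size()
int8_bytes = int_values.numel() * int_values.element_size()
print(f"float32: {float_bytes} bytes, int8: {int8_bytes} bytes")
# prints "float32: 262144 bytes, int8: 65536 bytes"

# Dequantizing recovers an approximation; rounding error is about scale / 2 at most.
max_err = (qw.dequantize() - w).abs().max().item()
print(f"scale={scale:.5f}, max abs error={max_err:.5f}")
```

The same arithmetic underlies both dynamic and static quantization; they differ only in when the activation scale and zero point are determined.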

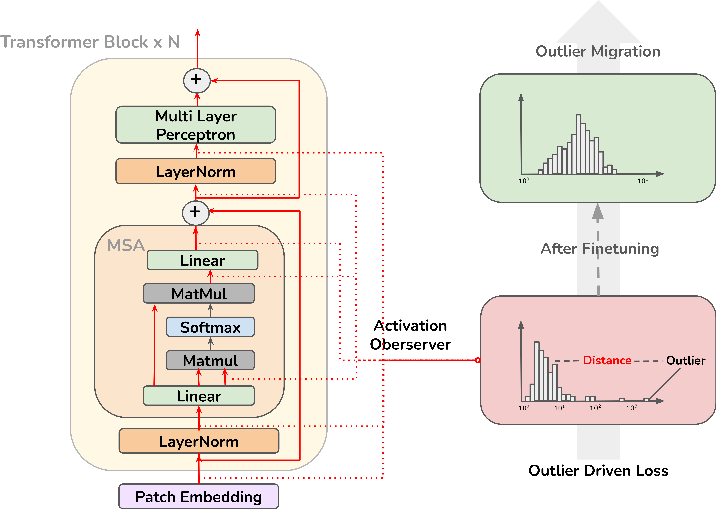

QuantTune: Optimizing Model Quantization With Adaptive Outlier Driven

Quantization Fails For Custom Backend - Quantization - PyTorch Forums
