Python for LLM Workflows: Tools and Best Practices

Intro to LLM Workflows with Python Course

Construct and manage large language model (LLM) workflows using Python. This course covers essential libraries such as LangChain and LlamaIndex, API interactions, prompt engineering, retrieval-augmented generation (RAG), testing strategies, and deployment practices.

Having the right tools makes it easier to work with large models, handle complex tasks, and improve performance. The 10 libraries in this list help with tasks like text generation, data processing, and AI automation.
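To make the retrieval-augmented generation (RAG) idea mentioned above more concrete, here is a minimal sketch of the retrieval and prompt-assembly steps only. It assumes a toy bag-of-words similarity in place of a real embedding model and does not call any LLM; the function names (tokenize, retrieve, build_prompt) and the sample documents are made up for illustration and are not taken from LangChain or LlamaIndex.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (toy retriever)."""
    query_vec = Counter(tokenize(query))
    scored = [(cosine_similarity(query_vec, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_prompt(query: str, context: list[str]) -> str:
    """Place the retrieved context ahead of the question in a single prompt."""
    context_block = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


# Hypothetical sample corpus, for illustration only.
documents = [
    "Prompt engineering is about wording instructions for a model.",
    "Retrieval augmented generation adds retrieved documents to the prompt.",
    "Deployment covers packaging and serving an application.",
]
question = "How does retrieval augmented generation use documents?"
top_docs = retrieve(question, documents, k=1)
prompt = build_prompt(question, top_docs)
print(prompt)
```

In a real workflow the word-count vectors would typically be replaced by embeddings and a vector index, and the assembled prompt would be sent to a model; the overall shape (retrieve, then build the prompt) stays the same.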

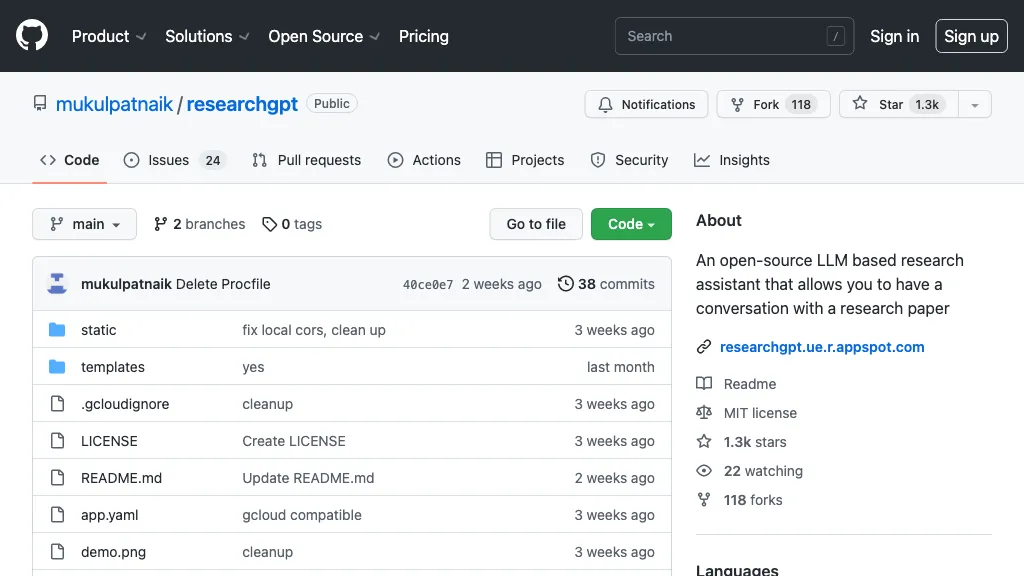

What Are LLM Workflows? Python LLM Course

Working with LLMs in Python offers a wide range of opportunities for natural language processing tasks. By understanding the fundamental concepts, learning the usage methods, and following common and best practices, you can use LLMs effectively in your projects.

In 2025, Python continues to be the go-to language for integrating LLMs, thanks to its robust ecosystem of libraries designed for AI and machine learning workflows. This guide explores the best Python libraries for LLM integration, complete with code examples, use cases, and insights into when to use each option.

Deploy and scale machine learning models on Kubernetes. Built for LLMs, embeddings, and speech-to-text. A Kubernetes operator that simplifies serving and tuning large AI models (e.g. Falcon or Phi-3) using container images and GPU auto-provisioning.

After building dozens of LLM applications (and making every mistake possible 😅), I’ve stumbled upon some libraries and tools that have become my secret weapons.

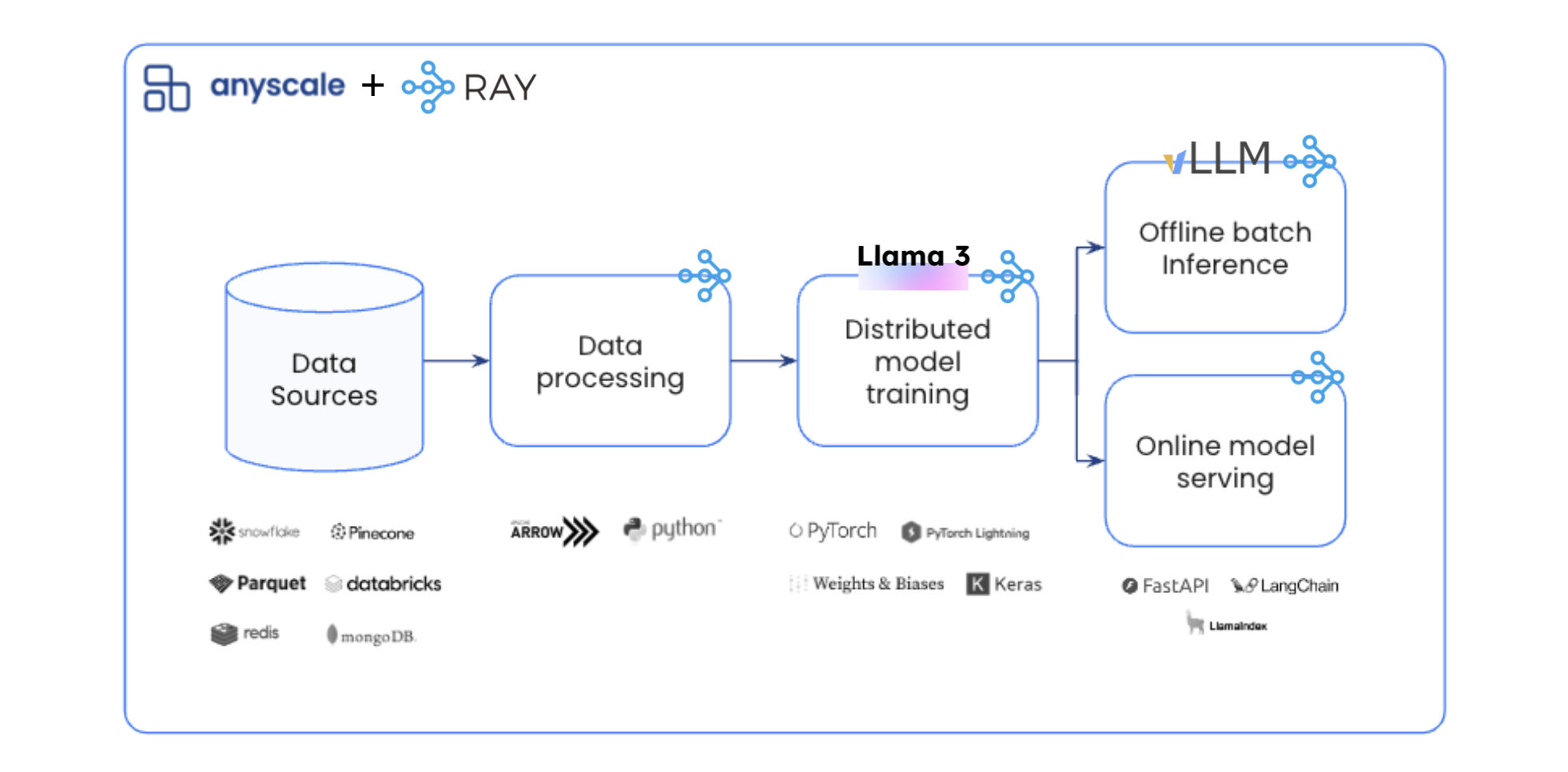

GitHub: onlyphantom/llm-python, Large Language Models (LLMs) Tutorials

First, a workflow reduces the need for users to write repetitive boilerplate code. Second, by establishing a standardized interface for tasks (e.g. specifying how a task tracks history), a workflow can serve as a means of aggregating information from all tasks, such as token usage, costs, and more.

Most LLM workflows are chains of API calls glued together with Python. They break when models change, cost 10x what they should, and nobody can debug them. This guide covers the patterns that work in production, the tools that run them, and a concrete three-step workflow that cuts coding-agent token usage by 60%.

I would like to introduce Fluidity (GitHub: asht python fluidity; install with pip install fluidityai), a set of Python classes that makes AI workflow development particularly easy and intuitive.

In this guide, we'll learn how to execute end-to-end LLM workflows to develop and productionize LLMs at scale. Data preprocessing: prepare our dataset for fine-tuning with batch data processing. Fine-tuning: tune our LLM (LoRA or full-parameter) with key optimizations and distributed training.
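The idea of a standardized task interface that lets a workflow aggregate history, token usage, and cost could look roughly like the sketch below. Everything here is hypothetical: the Usage, Task, and Workflow classes, the fake_model stub, and the per-token price are invented for illustration and do not reflect Fluidity or any other library's API. The stub stands in for a real model call so the example runs without network access.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Usage:
    """Token counts and cost for one model call (values are hypothetical)."""
    prompt_tokens: int = 0
    completion_tokens: int = 0
    cost_usd: float = 0.0


@dataclass
class Task:
    """One workflow step: wraps a model-calling function and records its own history."""
    name: str
    run_model: Callable[[str], tuple[str, Usage]]
    history: list[tuple[str, str]] = field(default_factory=list)
    usage: list[Usage] = field(default_factory=list)

    def run(self, text: str) -> str:
        output, used = self.run_model(text)
        self.history.append((text, output))  # every task tracks history the same way
        self.usage.append(used)
        return output


@dataclass
class Workflow:
    """Runs tasks in order, feeding each output into the next task."""
    tasks: list[Task]

    def run(self, text: str) -> str:
        for task in self.tasks:
            text = task.run(text)
        return text

    def total_usage(self) -> Usage:
        """Sum usage across all tasks, which the shared interface makes possible."""
        total = Usage()
        for task in self.tasks:
            for used in task.usage:
                total.prompt_tokens += used.prompt_tokens
                total.completion_tokens += used.completion_tokens
                total.cost_usd += used.cost_usd
        return total


def fake_model(prefix: str, price_per_token: float = 0.001):
    """Stub standing in for a real API call; counts whitespace-separated words as tokens."""
    def call(text: str) -> tuple[str, Usage]:
        output = f"{prefix}: {text}"
        prompt_tokens = len(text.split())
        completion_tokens = len(output.split())
        cost = (prompt_tokens + completion_tokens) * price_per_token
        return output, Usage(prompt_tokens, completion_tokens, cost)
    return call


workflow = Workflow([
    Task("summarize", fake_model("summary")),
    Task("translate", fake_model("translation")),
])
result = workflow.run("large language model workflows")
totals = workflow.total_usage()
print(result)
print(totals)
```

Because every task records usage in the same shape, the workflow can report totals without knowing what each step does, and swapping the stub for a real client only changes the function passed as run_model.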
