Python Feature Scaling in scikit-learn: Normalization vs. Standardization

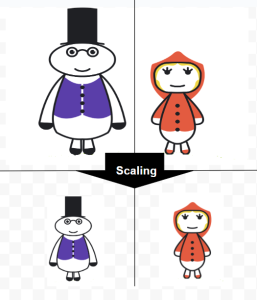

Learn the difference between normalization and standardization in scikit-learn with practical code examples, and understand when to use each. Scaling ensures that regularization treats every feature fairly, because penalties such as L1 and L2 act on coefficient magnitudes, which depend on the scale of each feature. Min-max scaling (often called "normalization" in the scikit-learn ecosystem) linearly maps each feature to a bounded interval, typically [0, 1]. It preserves the shape of the original distribution while compressing all values into a fixed range.
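As a minimal sketch of min-max scaling, the snippet below applies MinMaxScaler to a small array and checks the result against the formula (x - min) / (max - min) computed per column. The toy data is hypothetical and chosen only for illustration.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical toy data: two features on very different scales.
X = np.array([[1.0, 100.0],
              [2.0, 300.0],
              [3.0, 500.0]])

# The default feature_range is (0, 1).
scaler = MinMaxScaler()
X_scaled = scaler.fit_transform(X)

# The same mapping written out by hand, column by column.
col_min = X.min(axis=0)
col_max = X.max(axis=0)
X_manual = (X - col_min) / (col_max - col_min)

print(X_scaled)
print(np.allclose(X_scaled, X_manual))
```

Because the mapping is linear, the relative spacing of values within each column is unchanged; only the range differs.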

scikit-learn provides several transformers for feature scaling, including MinMaxScaler, StandardScaler, and RobustScaler. Feature scaling transforms numerical features to a common range or distribution. Without scaling, features with large magnitudes dominate features with small magnitudes in algorithms that compute distances or gradients. Normalization and standardization are the two techniques most commonly used during data preprocessing to bring features onto a common scale. A quick way to see the difference is to generate a small dataset, scale it with StandardScaler and MinMaxScaler, and compare the results.
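The comparison described above might look like the following sketch. It runs the three transformers on a single hypothetical feature that contains one deliberate outlier, so that the different behaviors are easy to see; the data and the dictionary name `scaled` are assumptions made for this example.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

# Hypothetical single feature with one large outlier (100.0).
X = np.array([[1.0], [2.0], [3.0], [4.0], [100.0]])

scaled = {
    # Maps the column onto [0, 1] using its min and max.
    "MinMaxScaler": MinMaxScaler().fit_transform(X),
    # Subtracts the column mean and divides by the standard deviation.
    "StandardScaler": StandardScaler().fit_transform(X),
    # Subtracts the median and divides by the interquartile range.
    "RobustScaler": RobustScaler().fit_transform(X),
}

for name, values in scaled.items():
    print(f"{name:>15}: {np.round(values.ravel(), 3)}")
```

With an outlier present, MinMaxScaler squeezes the ordinary values into a narrow band near 0, while RobustScaler keeps them spread out because the median and interquartile range are less sensitive to extreme values.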

Feature scaling and normalization bring all features onto a similar scale so that each one contributes fairly. Two common scaling techniques available in scikit-learn are standardization and min-max scaling. To illustrate the effect of scaling, compare the principal components that PCA finds on unscaled data with those obtained when StandardScaler is applied first. Scaling can also change the accuracy of a model trained on the PCA-reduced data.
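A rough sketch of the PCA comparison, using synthetic data rather than any particular dataset: two hypothetical features are generated, one with a much larger spread than the other, and PCA is fitted once on the raw values and once after StandardScaler. The random seed, sample size, and spreads are arbitrary assumptions.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Hypothetical data: feature 0 has unit spread, feature 1 is ~1000x larger.
X = np.column_stack([
    rng.normal(loc=0.0, scale=1.0, size=200),
    rng.normal(loc=0.0, scale=1000.0, size=200),
])

# PCA on the raw data: the large-magnitude feature drives the result.
pca_raw = PCA(n_components=1).fit(X)

# PCA after standardization: both features have unit variance.
X_std = StandardScaler().fit_transform(X)
pca_scaled = PCA(n_components=1).fit(X_std)

print("unscaled loadings:", np.round(pca_raw.components_[0], 3))
print("scaled loadings:  ", np.round(pca_scaled.components_[0], 3))
print("unscaled explained variance ratio:", pca_raw.explained_variance_ratio_)
```

On the unscaled data the first component points almost entirely along the large-magnitude feature. After standardization both features carry equal weight in the loadings, so the component reflects their relationship rather than their units.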

The main difference between Normalizer and StandardScaler is the axis they work on: sklearn.preprocessing.Normalizer scales each sample (row) to unit norm (vector length), while sklearn.preprocessing.StandardScaler scales each feature (column) to unit variance after subtracting the mean.
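The row-wise versus column-wise distinction can be checked directly. The sketch below uses a small hypothetical array and verifies that Normalizer produces rows of length 1, while StandardScaler produces columns with mean 0 and standard deviation 1.

```python
import numpy as np
from sklearn.preprocessing import Normalizer, StandardScaler

# Hypothetical data: three samples (rows), two features (columns).
X = np.array([[3.0, 4.0],
              [6.0, 8.0],
              [1.0, 0.0]])

# Normalizer works per sample: each row is divided by its own L2 norm.
X_norm = Normalizer(norm="l2").fit_transform(X)

# StandardScaler works per feature: each column is centered and scaled.
X_std = StandardScaler().fit_transform(X)

print("row norms after Normalizer:      ", np.linalg.norm(X_norm, axis=1))
print("column means after StandardScaler:", X_std.mean(axis=0))
print("column stds after StandardScaler: ", X_std.std(axis=0))
```

Note that the first two rows, which point in the same direction but differ in magnitude, become identical after Normalizer. That is the intended effect when only the direction of a sample vector matters.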
