PySpark Tutorial 1: Introduction to Apache Spark, Spark Architecture, and Databricks

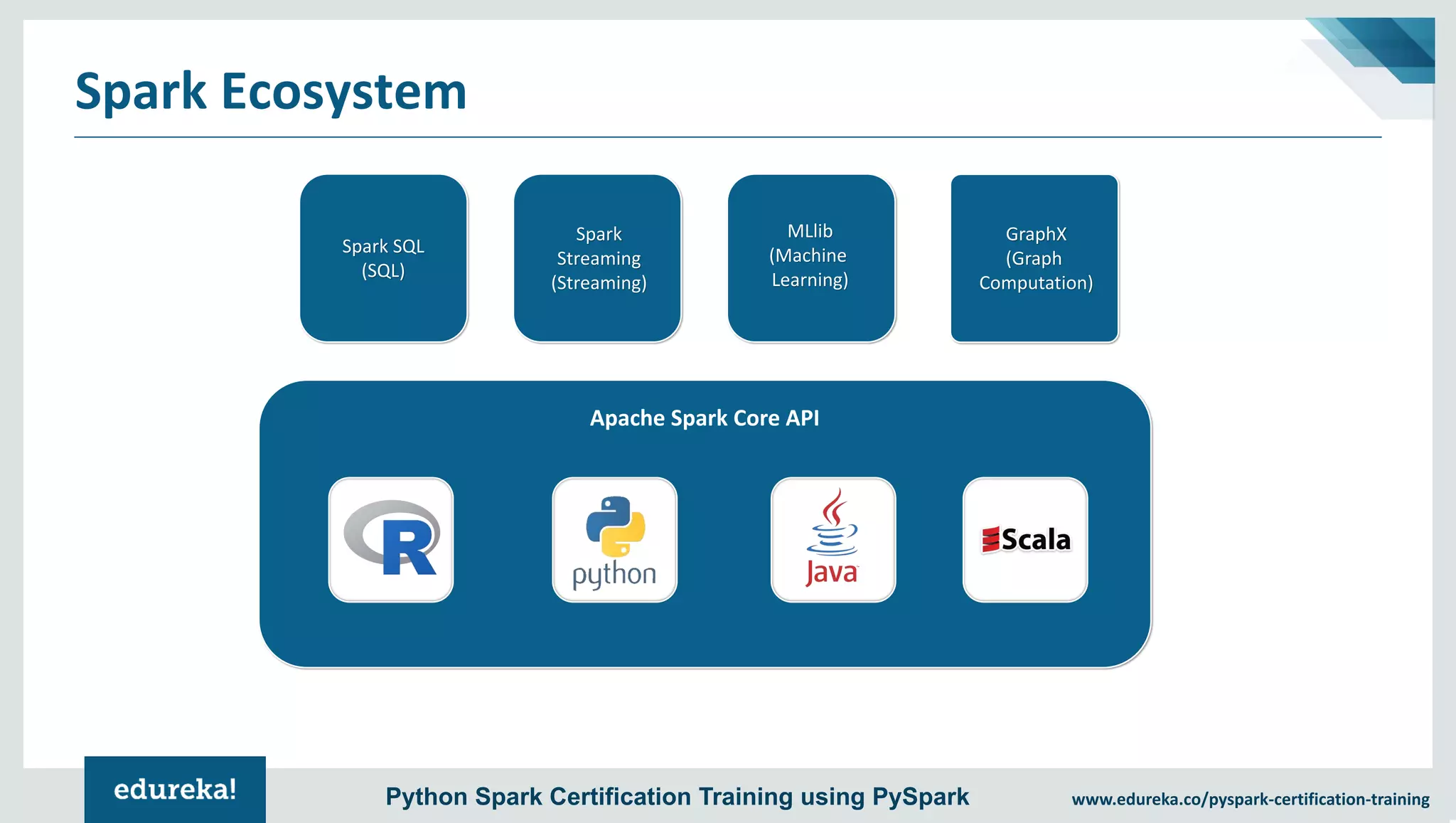

This self-paced guide is the "hello world" tutorial for Apache Spark using Databricks. In the following tutorial modules, you will learn the basics of creating Spark jobs, loading data, and working with data. PySpark is the Python API for Apache Spark. It enables you to perform real-time, large-scale data processing in a distributed environment using Python. It also provides a PySpark shell for interactively analyzing your data.

In this first video, we give you a beginner-friendly introduction to PySpark, the Python API for Apache Spark, which is widely used for big data processing, ETL, and analytics. In this tutorial, we'll explore PySpark with Databricks. PySpark lets Python developers use Spark's distributed computing to process large datasets efficiently across clusters. We'll walk through how Spark's architecture is designed, from the master-worker model and execution workflow to its memory management and fault-tolerance mechanisms. If you want to build fast, fault-tolerant, and efficient big data applications, you're in the right place!

Welcome to my repository, where I document my learning and hands-on practice with PySpark on Databricks. This journey covers everything from the basics to advanced data engineering and big data concepts. In essence, PySpark is a Python package that provides an API for Apache Spark. In other words, with PySpark you can use the Python language to write Spark applications and run them on a Spark cluster in a scalable way. This article walks through simple examples to illustrate the usage of PySpark. It assumes you understand fundamental Apache Spark concepts and are running commands in an Azure Databricks notebook connected to compute. PySpark, the Python interface to Apache Spark, enables developers to process massive datasets across distributed systems. Its architecture, built around the driver, executors, and cluster manager, forms the foundation of this capability.
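The division of labor between driver and executors can be sketched with a toy model in plain Python. This is not Spark's API: the function names are hypothetical, a thread pool stands in for the executors, and the role of the cluster manager (allocating executors on worker nodes) is only noted in a comment.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_partitions(data, num_partitions):
    """Driver-side step: divide the input into roughly equal chunks."""
    return [data[i::num_partitions] for i in range(num_partitions)]


def run_task(partition):
    """Executor-side step: one task processes one partition."""
    return sum(x * x for x in partition)


def run_job(data, num_executors=3):
    """Toy driver: plan the work, hand out tasks, combine the results."""
    partitions = split_into_partitions(data, num_executors)
    # The thread pool plays the role of the executors. In a real cluster,
    # a cluster manager would allocate executors on worker nodes instead.
    with ThreadPoolExecutor(max_workers=num_executors) as pool:
        partial_results = list(pool.map(run_task, partitions))
    # The driver merges the per-partition results into the final answer.
    return sum(partial_results)


total = run_job(list(range(1, 11)))
print(total)  # 385, the sum of squares from 1 to 10
```

The point of the sketch is only the shape of the workflow: data is partitioned, each partition is processed independently, and the driver assembles the final result.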

