Pseudocode For The Dynamic Programming Algorithm For Finding The

Dynamic Programming Techniques For Solving Algorithmic Problems Coin

This example shows an unusual use of dynamic programming: a different subproblem is used in the solution. We'll start by solving another problem: finding the largest suffix for each prefix.

We introduce a unified approach for calculating nonparametric shape-constrained regression. Enforcement of the shape constraint often accounts for the impact of a physical phenomenon or a specific …
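The text does not say what "largest" means here. Assuming it means the suffix with the largest sum, as in the classic maximum-subarray problem, the best suffix of each prefix follows directly from the best suffix of the previous prefix, and the answer to the original problem is the largest of those per-prefix values. The Python sketch below illustrates that reading; the function names are hypothetical.

```python
# Sketch under the "largest sum" assumption; function names are illustrative.

def best_suffix_sums(a):
    """best[i] = largest sum of a non-empty suffix of the prefix a[0..i]."""
    best = []
    for x in a:
        if not best:
            best.append(x)  # a one-element prefix has only one suffix
        else:
            # Either extend the best suffix of the previous prefix, or start over at x.
            best.append(max(best[-1] + x, x))
    return best


def max_subarray_sum(a):
    """Largest sum of a non-empty contiguous subarray: the best suffix over all prefixes."""
    if not a:
        raise ValueError("need at least one element")
    return max(best_suffix_sums(a))


print(max_subarray_sum([-2, 1, -3, 4, -1, 2, 1, -5, 4]))  # 6
```

Because each step only reads the previous value, the list can be replaced by one running variable, which gives Kadane's algorithm with O(1) extra space.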

Algorithm 04 Dynamic Programming

The Bellman-Ford algorithm takes a dynamic programming approach to single-source shortest paths and runs in O(V·E) time and O(V) space.

Negative-weight cycle: a negative-weight cycle is a cycle in a graph whose edge weights sum to a negative value, so every traversal of the cycle lowers the accumulated path weight. If a negative-weight cycle is reachable from the source, the shortest path to any vertex reachable through that cycle does not exist, because each additional traversal of the cycle makes the path shorter still.

Design a dynamic programming algorithm and indicate its time efficiency. (The algorithm may be useful for, say, finding the largest free square area on a computer screen or for selecting a construction site.)

The paradigm of dynamic programming: define a sequence of subproblems with the following properties:

Shortly we will examine some algorithms that efficiently determine whether a pattern string P occurs within a text string T. If you have ever used the "find" feature in a word processor to look for a word, then you have performed string matching.
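A minimal Python sketch of Bellman-Ford, assuming vertices numbered 0 to n - 1 and a hypothetical edge-list format of (u, v, w) triples. The dynamic programming subproblem is the shortest distance from the source using at most k edges: n - 1 rounds of relaxation suffice when no negative-weight cycle is reachable, and one extra pass detects such a cycle.

```python
import math

# Sketch; the function name and edge format are illustrative assumptions.

def bellman_ford(n, edges, source):
    """Shortest distances from source over vertices 0..n-1.

    edges: list of directed (u, v, w) triples.
    Raises ValueError if a negative-weight cycle is reachable from source.
    """
    dist = [math.inf] * n  # O(V) working space
    dist[source] = 0
    # After round k, dist[v] is at most the weight of the best path using <= k edges.
    for _ in range(n - 1):
        changed = False
        for u, v, w in edges:  # each round scans all E edges -> O(V*E) total
            if dist[u] + w < dist[v]:
                dist[v] = dist[u] + w
                changed = True
        if not changed:  # early exit: distances have converged
            break
    # Any further improvement means a reachable negative-weight cycle.
    for u, v, w in edges:
        if dist[u] + w < dist[v]:
            raise ValueError("negative-weight cycle reachable from source")
    return dist


print(bellman_ford(4, [(0, 1, 4), (0, 2, 5), (1, 2, -3), (2, 3, 2)], 0))  # [0, 4, 1, 3]
```

Unreachable vertices keep the distance `math.inf`, and a negative cycle that cannot be reached from the source is not reported, matching the "reachable" condition above.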

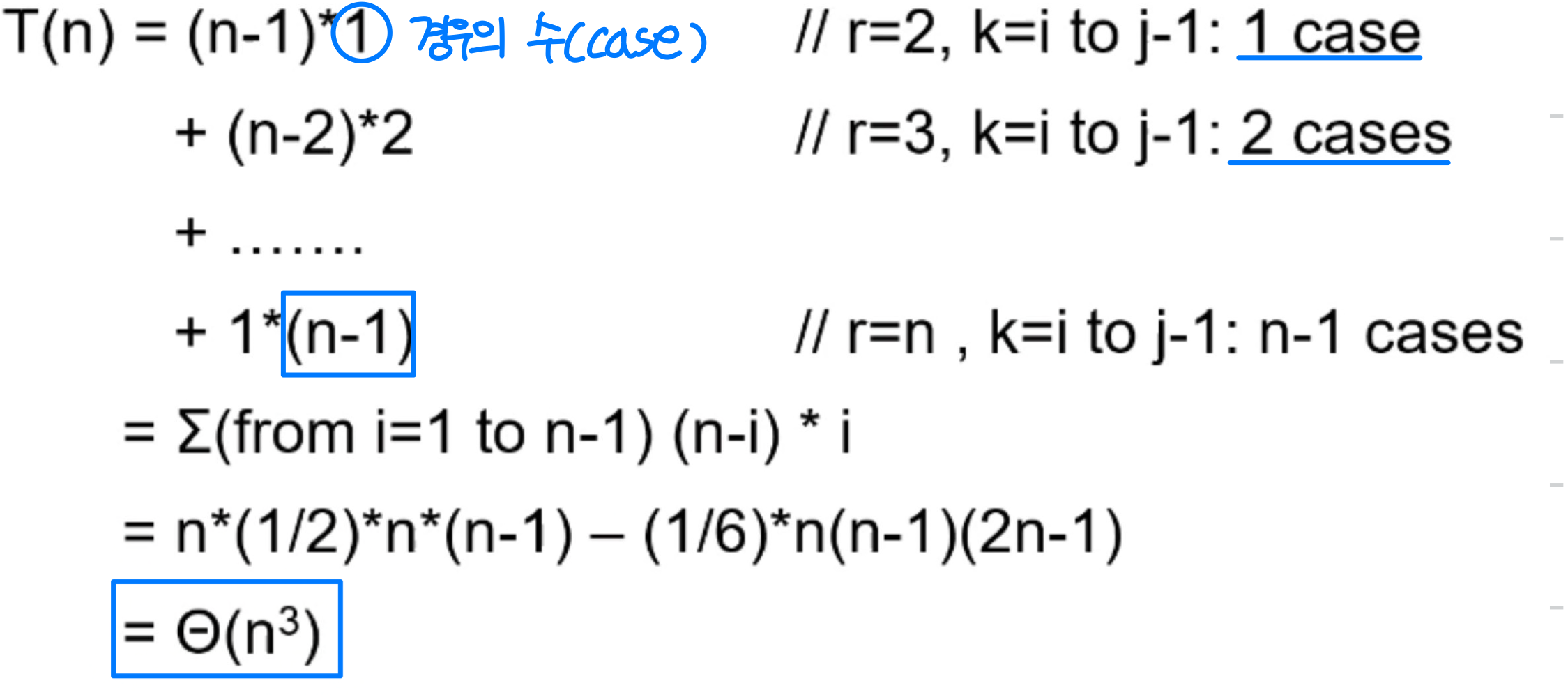
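The full statement of the "largest free square" exercise is missing from the text. Assuming it asks for the largest square of free cells (0s) in a boolean matrix, one standard recurrence records, for every cell, the side of the largest free square whose bottom-right corner is that cell. The sketch below (function name hypothetical) does constant work per cell, so it runs in Θ(n²) time on an n × n matrix.

```python
# Sketch under the stated assumption; 0 = free cell, 1 = occupied cell.

def largest_free_square(grid):
    """Side length of the largest all-zero square submatrix of grid."""
    if not grid or not grid[0]:
        return 0
    rows, cols = len(grid), len(grid[0])
    # side[i][j] = side of the largest free square with bottom-right corner (i, j)
    side = [[0] * cols for _ in range(rows)]
    best = 0
    for i in range(rows):
        for j in range(cols):
            if grid[i][j] == 0:
                if i == 0 or j == 0:
                    side[i][j] = 1  # first row/column: only a 1x1 square fits
                else:
                    # Limited by the squares ending above, to the left, and diagonally.
                    side[i][j] = 1 + min(side[i - 1][j], side[i][j - 1], side[i - 1][j - 1])
                best = max(best, side[i][j])
    return best


print(largest_free_square([[0, 0, 1], [0, 0, 0], [1, 0, 0]]))  # 2
```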

Dynamic Programming Algorithm Understanding With Example

This page offers several corrected exercises on dynamic programming and divide and conquer. The goal is to understand the difference between the divide-and-conquer paradigm and dynamic programming. Write pseudocode for an algorithm that finds the composition of an optimal subset from the table generated by the bottom-up dynamic programming algorithm for the knapsack problem.

The root of the entire tree is r[1, n], and the left and right subtrees can be found recursively using the values in r. Overall, the algorithm uses dynamic programming to find the optimal binary search tree by considering all possible subtrees and their costs.

For the longest increasing subsequence, our dynamic programming algorithm runs in O(n²) time, as expected. If necessary, we can reduce the space bound from O(n²) to O(n) by maintaining only the two most recent columns of the table, LISbigger[·, j] and LISbigger[·, j + 1].
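One way to approach the knapsack exercise, sketched in Python: assume the bottom-up table F[i][w] holds the best value achievable with the first i items and capacity w. Walking back from F[n][W], the traceback takes item i whenever F[i][w] differs from F[i-1][w], because the value could only have improved by taking that item; otherwise it skips the item. The table layout and function names are assumptions for illustration.

```python
# Sketch; table layout and function names are illustrative assumptions.

def knapsack_table(weights, values, capacity):
    """F[i][w] = best value using only items 1..i with capacity w."""
    n = len(weights)
    F = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        wi, vi = weights[i - 1], values[i - 1]
        for w in range(capacity + 1):
            F[i][w] = F[i - 1][w]  # option 1: skip item i
            if wi <= w:            # option 2: take item i if it fits
                F[i][w] = max(F[i][w], F[i - 1][w - wi] + vi)
    return F


def optimal_subset(F, weights):
    """Trace back through a filled table; returns 1-based indices of one optimal subset."""
    i, w = len(weights), len(F[0]) - 1
    chosen = []
    while i > 0:
        if F[i][w] != F[i - 1][w]:  # value changed, so item i was taken
            chosen.append(i)
            w -= weights[i - 1]
        i -= 1
    return sorted(chosen)


weights, values = [2, 1, 3, 2], [12, 10, 20, 15]
F = knapsack_table(weights, values, 5)
print(F[4][5], optimal_subset(F, weights))  # 37 [1, 2, 4]
```

The traceback visits each item once, so recovering the subset costs only O(n) on top of filling the table.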

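The optimal binary search tree reconstruction described for the root table r can be sketched in Python. This assumes a formulation with only successful-search probabilities (or integer frequencies) p for keys 1..n, a cost table C, and a root table R standing in for the text's r; all names are hypothetical. Filling the tables takes O(n³) time, and rebuilding the tree from R[1][n] takes O(n).

```python
# Sketch; p[k-1] is the search probability (or frequency) of key k. Names are illustrative.

def optimal_bst(p):
    """Return (C, R): C[i][j] = minimal weighted search cost for keys i..j,
    R[i][j] = root of an optimal tree for keys i..j (1-based)."""
    n = len(p)
    # Extra row/column so that empty ranges C[i][i-1] and C[j+1][j] read as 0.
    C = [[0] * (n + 2) for _ in range(n + 2)]
    R = [[0] * (n + 2) for _ in range(n + 2)]
    for i in range(1, n + 1):
        C[i][i] = p[i - 1]
        R[i][i] = i
    for d in range(1, n):  # d = j - i, so larger ranges build on smaller ones
        for i in range(1, n - d + 1):
            j = i + d
            weight = sum(p[i - 1:j])  # total probability of keys i..j
            # Try every key k in i..j as the root; keep the cheapest split.
            k = min(range(i, j + 1), key=lambda k: C[i][k - 1] + C[k + 1][j])
            C[i][j] = C[i][k - 1] + C[k + 1][j] + weight
            R[i][j] = k
    return C, R


def build_tree(R, i, j):
    """Rebuild the tree for keys i..j as nested (root, left, right) tuples."""
    if i > j:
        return None
    k = R[i][j]
    return (k, build_tree(R, i, k - 1), build_tree(R, k + 1, j))


C, R = optimal_bst([1, 2, 4, 3])
print(C[1][4], build_tree(R, 1, 4))  # 17 (3, (2, (1, None, None), None), (4, None, None))
```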

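Assuming LISbigger[i, j] denotes the length of the longest increasing subsequence of A[j..n] whose elements all exceed A[i], with a sentinel A[0] = -∞, column j of the table depends only on column j + 1. The Python sketch below keeps just those two columns, which gives the O(n²)-time, O(n)-space variant of the longest increasing subsequence algorithm; the function name and sentinel handling are illustrative assumptions.

```python
import math

# Sketch of the two-column LISbigger table; names are illustrative.

def lis_length(a):
    """Length of the longest strictly increasing subsequence of a."""
    n = len(a)
    A = [-math.inf] + list(a)  # 1-based, with sentinel A[0] = -inf
    # nxt[i] = LISbigger[i, j+1]; column n+1 is all zeros (empty suffix).
    nxt = [0] * (n + 1)
    for j in range(n, 0, -1):  # fill columns right to left
        cur = [0] * (n + 1)
        for i in range(j):  # only rows i < j are needed
            if A[i] >= A[j]:
                cur[i] = nxt[i]  # A[j] is too small: skip it
            else:
                # Either skip A[j], or take it and continue with values above A[j].
                cur[i] = max(nxt[i], 1 + nxt[j])
        nxt = cur  # discard column j+1, keeping O(n) space
    return nxt[0]  # LISbigger[0, 1]


print(lis_length([3, 1, 4, 1, 5, 9, 2, 6, 5, 3, 5]))  # 4
```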
