Prior Probability Definition Deepai

A prior is a probability distribution that expresses one's beliefs about an uncertain quantity before some evidence is taken into account. In statistical inference and Bayesian techniques, the prior plays an important role: it is combined with the likelihood of the observed data to produce the posterior. Prior probability is defined as the initial assessment of how likely an event or outcome is before any new data is considered. In simple words, it tells us what we know based on previous knowledge or experience.
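As a minimal sketch of how a prior combines with the likelihood, assume a hypothetical coin that is either "fair" (P(heads) = 0.5) or "biased" (P(heads) = 0.8), and a single observed head. The posterior for each hypothesis is prior times likelihood, rescaled so the values sum to 1. The function name `posterior` and all numbers are illustrative assumptions.

```python
def posterior(prior, likelihood):
    """Combine a discrete prior with the likelihood of one observed datum.

    prior: dict mapping hypothesis -> prior probability (values sum to 1)
    likelihood: dict mapping hypothesis -> P(datum | hypothesis)
    """
    # Unnormalized posterior: prior times likelihood for each hypothesis
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    # Normalizing constant: total probability of the datum
    evidence = sum(unnormalized.values())
    return {h: p / evidence for h, p in unnormalized.items()}


# Hypothetical coin: fair (P(heads) = 0.5) or biased (P(heads) = 0.8)?
likelihood_heads = {"fair": 0.5, "biased": 0.8}

# The same datum (one head) under two different hypothetical priors
for prior in ({"fair": 0.5, "biased": 0.5}, {"fair": 0.9, "biased": 0.1}):
    post = posterior(prior, likelihood_heads)
    print(prior, "->", {h: round(p, 3) for h, p in post.items()})
```

With the even prior, one head shifts belief toward "biased" (about 0.615); with the skeptical prior, "fair" stays well ahead (about 0.849). The data are identical, so the difference comes entirely from the prior.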

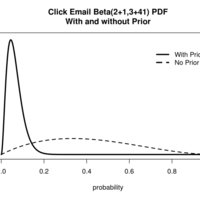

Bayes' theorem (alternatively Bayes' law or Bayes' rule), named after Thomas Bayes (/beɪz/), gives a mathematical rule for inverting conditional probabilities, allowing the probability of a cause to be found given its effect. For example, with Bayes' theorem, the probability that a patient has a disease given that they tested positive for that disease can be found using the probability that the test yields a positive result when the disease is present.

In this section, we will talk about constructing a prior for a proportion, although the discussion will extend to any single parameter. To use a concrete example, suppose I'm interested in the proportion p of students at my university who send or receive text messages while driving.

Prior probability refers to the probability of an outcome before any additional information is taken into account. In data mining, it is often estimated from the relative frequency of each outcome in the dataset, so the dataset must be representative of the population for the results to be accurate.

The posterior is the probability that takes into account both our prior knowledge about the disease and the new data (the test result). When Ben uses the information given, the posterior probability that you have the disease given that the test is positive is only 9%.
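The disease-test calculation can be sketched numerically. The function name and the inputs (1% prevalence, 99% sensitivity, 5% false-positive rate) are hypothetical assumptions, not the figures behind the 9% result, which are not given here. The sketch shows how a low prior keeps the posterior small even after a positive test.

```python
def positive_predictive_value(prevalence, sensitivity, false_positive_rate):
    """P(disease | positive test) via Bayes' theorem.

    prevalence: prior P(disease)
    sensitivity: P(positive | disease)
    false_positive_rate: P(positive | no disease)
    """
    # Total probability of a positive test, P(positive): the normalizer
    p_positive = (sensitivity * prevalence
                  + false_positive_rate * (1 - prevalence))
    # Bayes' theorem: P(disease | positive) = P(positive | disease) * P(disease) / P(positive)
    return sensitivity * prevalence / p_positive


# Hypothetical inputs, chosen only for illustration
ppv = positive_predictive_value(prevalence=0.01, sensitivity=0.99,
                                false_positive_rate=0.05)
print(f"P(disease | positive) = {ppv:.3f}")  # prints P(disease | positive) = 0.167
```

With these assumed inputs, only about one positive result in six comes from someone who has the disease, because healthy people vastly outnumber sick ones and a few of them also test positive.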

Prior probability is the probability of an event based on existing knowledge, before new data arrive. Bayes' theorem updates the prior probability with new data to produce a posterior probability. It combines the prior $P(\text{belief})$ with the likelihood $P(\text{data} \mid \text{belief})$ to give the posterior $P(\text{belief} \mid \text{data}) = \frac{P(\text{data} \mid \text{belief})\,P(\text{belief})}{P(\text{data})}$. The fourth part of Bayes' theorem, the probability of the data, $P(\text{data})$, is used to normalize the posterior so that it is a valid probability between 0 and 1. In practice we don't always need to compute $P(\text{data})$, so it is often left unnamed in introductory treatments, although it is commonly called the evidence or marginal likelihood.

In Bayesian statistical inference, a prior probability distribution, often simply called the prior, of an uncertain quantity is the probability distribution that would express one's beliefs about this quantity before some evidence is taken into account. A prior belief (or prior probability) is the initial belief about a parameter or hypothesis before seeing any new data. In Bayesian inference, this belief is represented mathematically as a prior distribution.
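For the texting-while-driving proportion p, one common way to represent the prior belief is a Beta distribution, which is conjugate to binomial data: a Beta(a, b) prior combined with s successes and f failures gives a Beta(a + s, b + f) posterior, so $P(\text{data})$ never has to be computed explicitly. This is a minimal sketch assuming a hypothetical Beta(3, 7) prior (prior mean 0.3) and a hypothetical survey of 40 students; the `Beta` class is illustrative, not a library API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Beta:
    """Hypothetical helper: a Beta(a, b) distribution over a proportion p in [0, 1]."""
    a: float
    b: float

    def mean(self) -> float:
        return self.a / (self.a + self.b)

    def update(self, successes: int, failures: int) -> "Beta":
        # Conjugate update: the binomial likelihood adds the observed
        # counts to the Beta parameters, so the normalizing constant
        # P(data) is handled implicitly.
        return Beta(self.a + successes, self.b + failures)


# Hypothetical prior belief: p is probably around 0.3
prior = Beta(3, 7)
# Hypothetical survey: 18 of 40 students report texting while driving
posterior = prior.update(successes=18, failures=22)

print(f"prior mean     = {prior.mean():.3f}")      # 0.300
print(f"posterior mean = {posterior.mean():.3f}")  # 0.420
```

The posterior mean (0.42) falls between the prior mean (0.30) and the sample proportion (0.45): the data pull the estimate upward, while the prior still carries weight equivalent to 10 earlier observations.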
