Principal Component Analysis (PCA) in Machine Learning

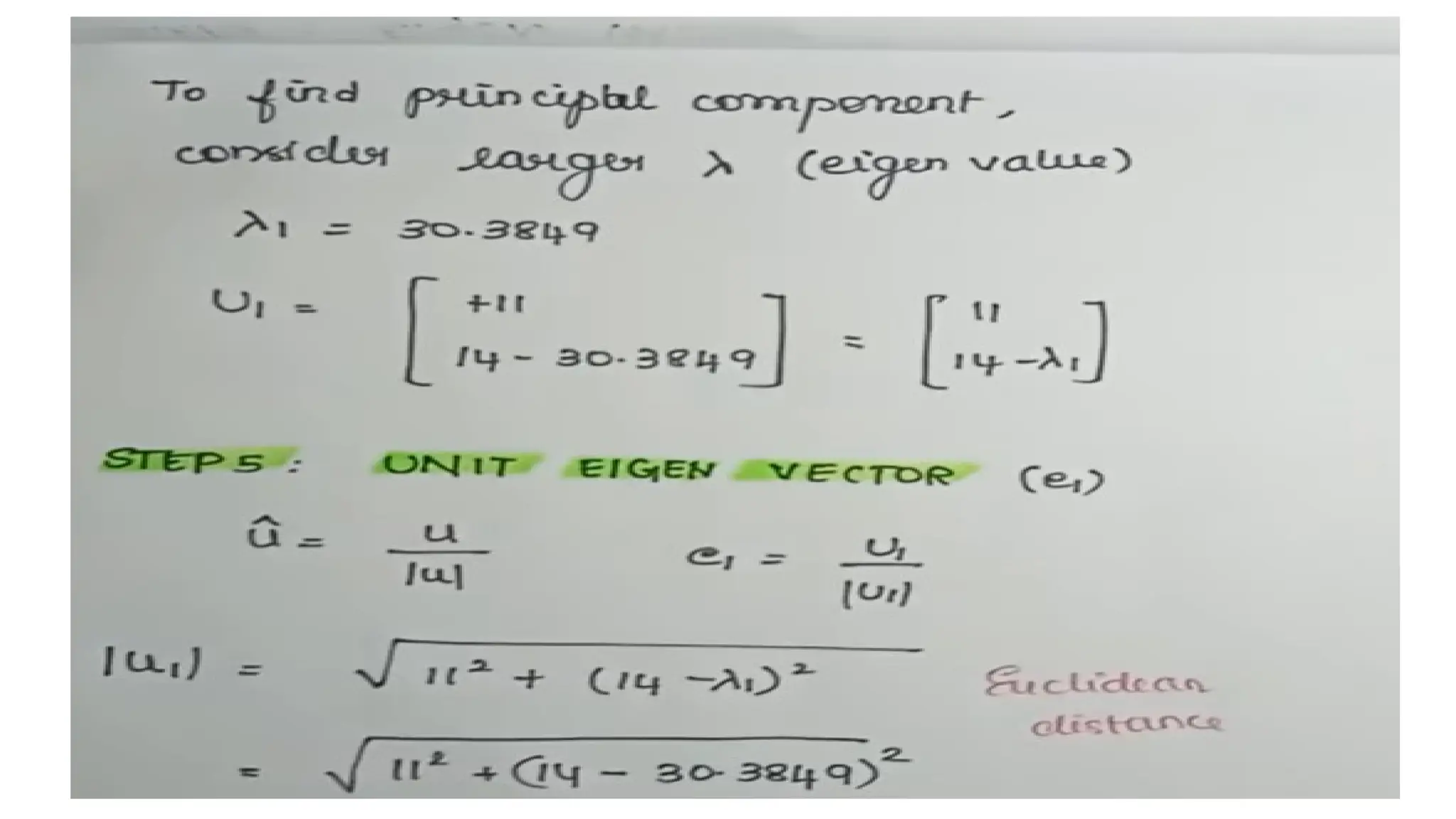

PCA builds the covariance matrix of the data and computes its eigenvalues and eigenvectors to identify the key components that explain the data's variability. The direction of largest variance is the eigenvector with the largest eigenvalue. The other large-variance directions are found in the same way, each orthogonal to all the others, from the eigendecomposition of the covariance matrix $\mathbf{S}$.
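The covariance-and-eigendecomposition step can be sketched in NumPy. This is a minimal illustration on synthetic data rather than anything taken from the slides: the data `X`, the mixing matrix `A`, the random seed, and the helper name `pca_eig` are all assumptions made for the example.

```python
import numpy as np

def pca_eig(X):
    """Eigenvalues (descending) and matching eigenvectors of the covariance of X.

    X has shape (n_samples, n_features). pca_eig is a hypothetical helper name.
    """
    Xc = X - X.mean(axis=0)                # center each feature
    S = np.cov(Xc, rowvar=False)           # sample covariance matrix S
    eigvals, eigvecs = np.linalg.eigh(S)   # S is symmetric; eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]      # reorder so the largest variance comes first
    return eigvals[order], eigvecs[:, order]

# Synthetic, correlated 3-D data (assumed purely for illustration)
rng = np.random.default_rng(0)
A = np.array([[3.0, 0.0, 0.0],
              [1.0, 1.0, 0.0],
              [0.5, 0.2, 0.1]])
X = rng.normal(size=(500, 3)) @ A.T

eigvals, eigvecs = pca_eig(X)
explained = eigvals / eigvals.sum()        # fraction of total variance per component
print(explained)
```

The columns of `eigvecs` are the principal directions, and `explained` shows how much of the total variance each one accounts for.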

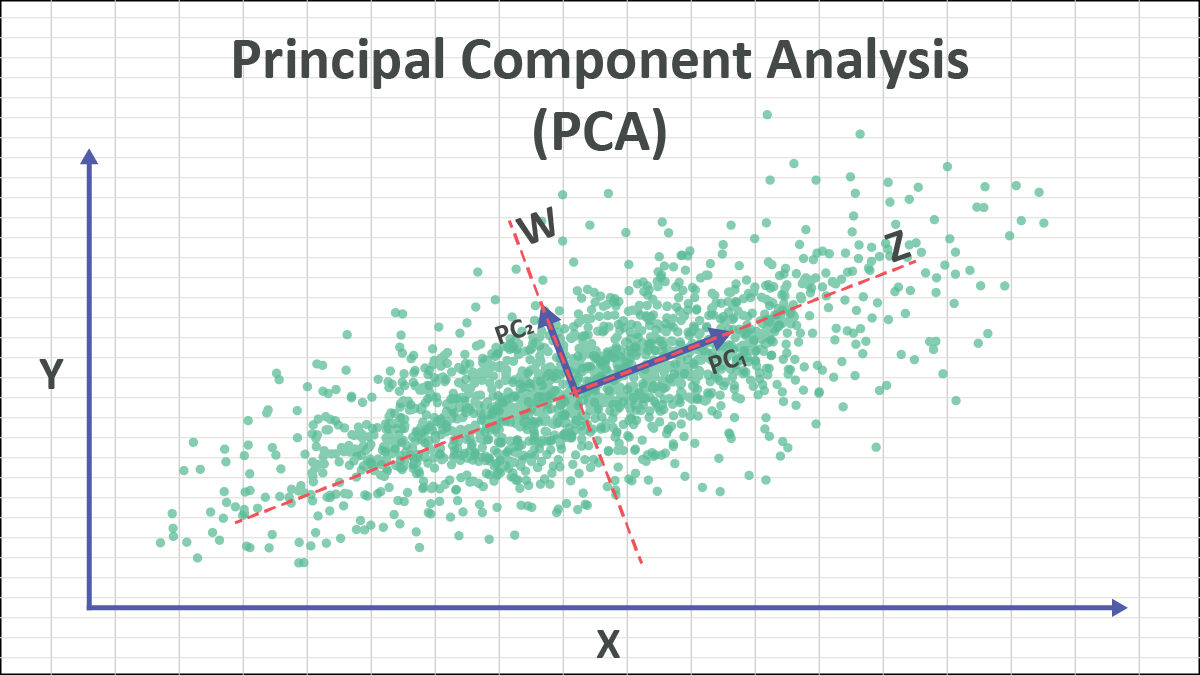

The first new axis is called the first principal component (PC1), and it points in the direction of greatest variance in the data. Each subsequent axis is constructed orthogonal to the previous ones, along the direction with the largest remaining variance.

Principal component analysis (PCA) is an exploratory technique used to reduce the number of dimensions in a data set, typically to 2D or 3D. More formally, PCA is a statistical procedure that uses an orthogonal transformation to convert a set of observations of possibly correlated variables into a set of values of linearly uncorrelated variables called principal components.
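The two properties stated here, that PC1 carries the largest variance and that the new variables are uncorrelated, can be checked numerically. The sketch below assumes synthetic data in which two variables are strongly correlated; the variable names and the seed are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(1)
# Two strongly correlated variables plus one independent one (synthetic, assumed)
t = rng.normal(size=1000)
X = np.column_stack([t + 0.1 * rng.normal(size=1000),
                     2.0 * t + 0.1 * rng.normal(size=1000),
                     rng.normal(size=1000)])

Xc = X - X.mean(axis=0)
eigvals, eigvecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

scores = Xc @ eigvecs                      # coordinates along PC1, PC2, PC3
score_cov = np.cov(scores, rowvar=False)   # covariance of the new variables

# Off-diagonal entries vanish up to rounding: the components are uncorrelated
off_diag = score_cov - np.diag(np.diag(score_cov))
print(np.abs(off_diag).max())

# Diagonal entries equal the eigenvalues, largest first: PC1 has the most variance
print(np.diag(score_cov), eigvals)
```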

PCA projects the data onto a subspace that maximizes the projected variance or, equivalently, minimizes the reconstruction error. The optimal subspace is spanned by the top eigenvectors of the empirical covariance matrix. In this way PCA transforms a large number of variables into a smaller number of uncorrelated variables called principal components (PCs), which are chosen to capture as much of the variation in the data as possible.

Each principal component is a linear combination of the variables from the original data, $U = [X_1, X_2, X_3]^T$, with coefficients taken from the $k$ eigenvectors:

$$Y_{k \times 1} = W_{k \times n}\, U_{n \times 1}$$

With $k = 2$ we have $Y = [Y_1, Y_2]^T$, and each $Y_j$ is a linear combination of $X_1$, $X_2$ and $X_3$. For example, $Y_1$ might look like

$$Y_1 = 0.3X_1 + 3.98X_2 + 3.21X_3$$

PCA is a linear transformation that maps the data to a new coordinate system in which the greatest variance lies along the first axis (the first principal component), the second greatest along the second axis, and so on. Geometrically, PCA fits an ellipsoid to the data, and the principal components are the axes of that ellipsoid.
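The projection $Y = WU$ with $n = 3$ and $k = 2$, and its link to reconstruction error, can be sketched as follows. This is an illustration under stated assumptions: the data are synthetic, each sample is centered before projection (the usual convention, not spelled out in the text), and `X_hat` and `mse` are names introduced for the example.

```python
import numpy as np

rng = np.random.default_rng(2)
# Synthetic 3-D data with unequal variance per direction (assumed for illustration)
X = rng.normal(size=(400, 3)) @ np.array([[2.0, 0.5, 0.0],
                                          [0.0, 1.0, 0.3],
                                          [0.0, 0.0, 0.2]])
mean = X.mean(axis=0)
Xc = X - mean

eigvals, eigvecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

k = 2
W = eigvecs[:, :k].T           # W is k x n: its rows are the top-k eigenvectors
Y = Xc @ W.T                   # each row is Y = W U for one centered sample U
X_hat = Y @ W + mean           # map the 2-D scores back into the original 3-D space

# Squared reconstruction error, normalized by n - 1 to match np.cov
mse = np.sum((X - X_hat) ** 2) / (len(X) - 1)
print(mse, eigvals[k:].sum())  # the error equals the variance of the discarded component
```

Keeping the top $k$ eigenvectors therefore discards exactly the variance carried by the remaining $n - k$ directions, which is why maximizing projected variance and minimizing reconstruction error select the same subspace.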

