Practical Post-Training Quantization of an ONNX Model

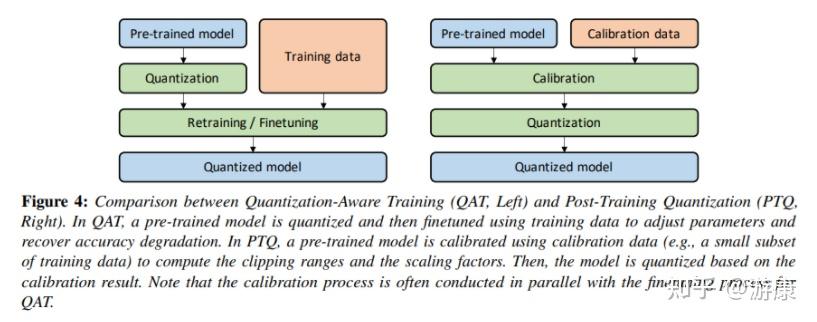

ONNX Runtime provides Python APIs for converting a 32-bit floating-point model to an 8-bit integer model, a.k.a. quantization. These APIs cover pre-processing, dynamic and static quantization, and debugging. In general, dynamic quantization is recommended for RNN and transformer-based models, and static quantization for CNN models. If neither post-training quantization method meets your accuracy goal, you can try quantization-aware training (QAT) to retrain the model.
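As a minimal sketch of the dynamic path, the helper below wraps `quantize_dynamic` from `onnxruntime.quantization`. The helper name `quantize_model_dynamic` and the file paths are hypothetical, and the choice of signed int8 weights is an assumption, not a recommendation from the text above.

```python
from pathlib import Path


def quantize_model_dynamic(fp32_path: str, int8_path: str) -> Path:
    """Quantize the weights of an ONNX model to int8 with dynamic quantization.

    Hypothetical helper: the name and arguments are illustrative only.
    """
    src = Path(fp32_path)
    if not src.is_file():
        raise FileNotFoundError(f"ONNX model not found: {src}")

    # Imported here so the helper can be defined without onnxruntime installed.
    from onnxruntime.quantization import QuantType, quantize_dynamic

    # Weights are quantized offline; activation ranges are computed at run time.
    quantize_dynamic(
        model_input=str(src),
        model_output=int8_path,
        weight_type=QuantType.QInt8,  # assumption: signed 8-bit weights
    )
    return Path(int8_path)
```

A call might look like `quantize_model_dynamic("model.onnx", "model.int8.onnx")`. Static quantization additionally needs calibration data, so it is not covered by this sketch.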

For transformer-based models, optimize the model with the Transformer Model Optimization Tool before quantizing it, so that the transformer-specific optimizations can be applied.

For deployment with TensorRT, there are toolkits aimed at developers who want to enhance performance, reduce model size, and accelerate inference without compromising the accuracy of their neural networks. Quantization is an effective model optimization technique that compresses your models.

ModelOpt includes an ONNX-based post-training quantization (PTQ) infrastructure. This pathway operates directly on ONNX graph representations and inserts quantize/dequantize (QDQ) nodes to produce quantized models compatible with TensorRT and ONNX Runtime.

One study presented seqto, a utility for tuning selective quantization of ONNX models, and demonstrated its effectiveness on four models deployed on CPU and GPU. It reduced accuracy loss by up to 54.14% while retaining up to 98.18% of the model size reduction achieved by full quantization.
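To make the QDQ idea concrete, here is a plain-Python sketch of what a quantize/dequantize pair computes for a single value, following the arithmetic of the ONNX `QuantizeLinear` and `DequantizeLinear` operators for signed 8-bit integers. The function names are illustrative and are not part of ModelOpt, TensorRT, or ONNX Runtime.

```python
def quantize_linear(x: float, scale: float, zero_point: int = 0,
                    qmin: int = -128, qmax: int = 127) -> int:
    """Map a real value to an integer code, saturating to [qmin, qmax]."""
    # Python's round() rounds halves to even, like ONNX QuantizeLinear.
    q = round(x / scale) + zero_point
    return max(qmin, min(qmax, q))


def dequantize_linear(q: int, scale: float, zero_point: int = 0) -> float:
    """Map an integer code back to an approximate real value."""
    return (q - zero_point) * scale


def fake_quantize(values, scale: float, zero_point: int = 0):
    """Apply a Q -> DQ round trip, as a QDQ node pair would in a graph."""
    return [
        dequantize_linear(quantize_linear(v, scale, zero_point), scale, zero_point)
        for v in values
    ]
```

The round trip exposes the two error sources of quantization: rounding to the nearest step of size `scale`, and saturation for values outside the representable range.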

🤗 Optimum provides an `optimum.onnxruntime` package that lets you apply quantization to many models hosted on the Hugging Face Hub using the ONNX Runtime quantization tool. The quantization process is abstracted via the `ORTConfig` and `ORTQuantizer` classes.

In this section we continue our human emotions detection project, focusing on practically quantizing our already trained model with onnxruntime.

Quantization is the process of converting a high-precision model (e.g. 32-bit floating point) into a lower-precision representation (e.g. INT8) to reduce the model size, improve inference speed, and lower memory usage.

This quick-start guide explains how to use the Model Compression Toolkit (MCT) to quantize a PyTorch model. We will load a pre-trained model and quantize it using the MCT.
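The conversion from 32-bit floats to 8-bit integers described above can be sketched in plain Python. This is a minimal illustration assuming an asymmetric unsigned 8-bit scheme with min/max calibration; the names `affine_params`, `calibrate`, and `quantize` are hypothetical and do not come from any of the libraries mentioned.

```python
def affine_params(rmin: float, rmax: float, qmin: int = 0, qmax: int = 255):
    """Derive (scale, zero_point) for an asymmetric integer range."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    rmin = min(rmin, 0.0)
    rmax = max(rmax, 0.0)
    if rmax == rmin:
        return 1.0, qmin  # degenerate case: all observed values are zero
    scale = (rmax - rmin) / (qmax - qmin)
    zero_point = round(qmin - rmin / scale)
    return scale, max(qmin, min(qmax, zero_point))


def calibrate(samples, qmin: int = 0, qmax: int = 255):
    """Min/max calibration: pick parameters from observed values."""
    return affine_params(min(samples), max(samples), qmin, qmax)


def quantize(values, scale: float, zero_point: int,
             qmin: int = 0, qmax: int = 255):
    """Quantize a list of floats to integer codes with saturation."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


activations = [-1.0, 0.0, 0.5, 3.0]
scale, zero_point = calibrate(activations)
codes = quantize(activations, scale, zero_point)
```

Each code occupies one byte instead of the four bytes of an FP32 value, which is where the size reduction comes from.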
