PPT Pattern Classification: Maximum Likelihood Parameter Estimation

This presentation covers the principles of maximum likelihood parameter estimation in pattern classification, compares it with Bayesian estimation, and explores the estimation of unknown parameters in a multivariate Gaussian distribution. In ML estimation (Pattern Classification, chapter 3), the parameters are assumed to be fixed but unknown.
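For reference, the standard textbook result for the multivariate Gaussian case can be written out as follows. The symbols are assumptions introduced here, not taken from the slides: $x_1, \dots, x_n$ are i.i.d. training samples, and $\hat{\mu}$, $\hat{\Sigma}$ are the maximum likelihood estimates of the mean vector and covariance matrix.

```latex
% Log-likelihood of n i.i.d. samples under N(\mu, \Sigma)
\ell(\mu, \Sigma) = \sum_{k=1}^{n} \ln p(x_k \mid \mu, \Sigma)

% Setting the gradients of \ell to zero gives the ML estimates
\hat{\mu} = \frac{1}{n} \sum_{k=1}^{n} x_k
\qquad
\hat{\Sigma} = \frac{1}{n} \sum_{k=1}^{n} (x_k - \hat{\mu})(x_k - \hat{\mu})^{T}
```

Note that $\hat{\Sigma}$ divides by $n$ rather than $n - 1$, so it is a biased estimate of the covariance.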

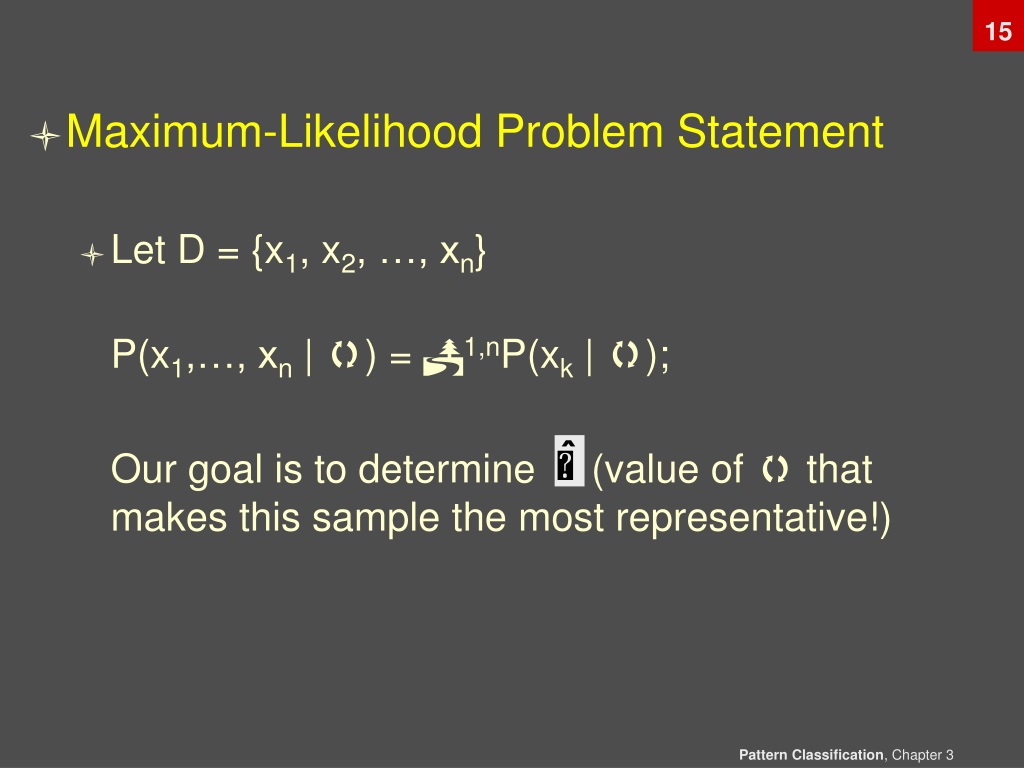

Three test procedures. To construct the basic test, we need an estimate of the likelihood value at the unrestricted point and at the restricted point, and we compare the two. There are three ways of deriving this. In the likelihood ratio test, we simply estimate the model twice, once unrestricted and once restricted, and compare the two.

Why we should care: maximum likelihood estimation is a very fundamental part of data analysis. "MLE for Gaussians" is training wheels for our future techniques, and learning Gaussians is more useful than you might guess…

Maximum likelihood estimation is a general method for estimating parameters in statistical models. It involves finding the parameter values that maximize the likelihood function, that is, the probability of obtaining the sample results given the parameters. In either approach, maximum likelihood or Bayesian estimation, we use the posterior P(ωᵢ | x) for our classification rule.
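As a minimal sketch of these ideas, assuming a one-dimensional Gaussian model and synthetic data, the snippet below computes the closed-form ML estimates and then a likelihood ratio statistic by fitting the model twice, once unrestricted and once with the mean restricted to a hypothesized value. The function names, the sample, and the hypothesized means are illustrative choices, not taken from the slides.

```python
import math
import random


def gaussian_log_likelihood(data, mu, var):
    """Log-likelihood of i.i.d. samples under N(mu, var)."""
    n = len(data)
    squared_error = sum((x - mu) ** 2 for x in data)
    return -0.5 * n * math.log(2 * math.pi * var) - squared_error / (2 * var)


def fit_gaussian_mle(data, fixed_mu=None):
    """Closed-form ML estimates of (mean, variance).

    If fixed_mu is given, the mean is restricted to that value and only
    the variance is estimated.
    """
    n = len(data)
    mu = sum(data) / n if fixed_mu is None else fixed_mu
    var = sum((x - mu) ** 2 for x in data) / n  # ML estimate divides by n
    return mu, var


def likelihood_ratio_statistic(data, mu0):
    """2 * (unrestricted log-likelihood - restricted log-likelihood)."""
    mu_u, var_u = fit_gaussian_mle(data)                 # unrestricted fit
    mu_r, var_r = fit_gaussian_mle(data, fixed_mu=mu0)   # restricted fit
    ll_u = gaussian_log_likelihood(data, mu_u, var_u)
    ll_r = gaussian_log_likelihood(data, mu_r, var_r)
    return 2.0 * (ll_u - ll_r)


# Synthetic sample (assumption): true mean 5.0, true standard deviation 2.0.
random.seed(0)
sample = [random.gauss(5.0, 2.0) for _ in range(200)]

mu_hat, var_hat = fit_gaussian_mle(sample)
lr_true = likelihood_ratio_statistic(sample, mu0=5.0)   # restriction holds
lr_false = likelihood_ratio_statistic(sample, mu0=0.0)  # restriction is wrong

# 5% critical value of the chi-square distribution with 1 degree of freedom.
CHI2_1DF_5PCT = 3.841

print(f"ML estimates: mean={mu_hat:.3f}, variance={var_hat:.3f}")
print(f"LR statistic for mu0=5.0: {lr_true:.3f}")
print(f"LR statistic for mu0=0.0: {lr_false:.3f} (reject if > {CHI2_1DF_5PCT})")
```

The statistic is compared with a chi-square critical value because, under the restriction, it is asymptotically chi-square distributed with as many degrees of freedom as there are restrictions (one here).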

All materials in these slides were taken from Pattern Classification (2nd ed.) by R. O. Duda, P. E. Hart and D. G. Stork, John Wiley & Sons, 2000, with the permission of the authors and the publisher.

Related topics: linear models for regression; parameter estimation methods (maximum likelihood and maximum a posteriori); regularization, ridge regression and lasso; bias-variance decomposition; Bayesian linear regression.

For example, variance (also known as μ′₂, the 2nd population central moment) has an unbiased estimator s² (the sample variance, which divides by n − 1) as well as a maximum likelihood estimator m′₂ (the 2nd sample central moment, which divides by n), and the two are different.

Since good predictions are better, a natural approach to parameter estimation is to choose the set of parameter values that yields the best predictions, that is, the parameter values that maximize the likelihood of the observed data.
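The difference between the two variance estimators can be checked on a small example. This is a minimal sketch using a made-up data set. The maximum likelihood estimator (under a Gaussian model) divides the sum of squared deviations by n, while the unbiased sample variance divides by n − 1.

```python
import statistics

# Made-up data for illustration (assumption, not from the source).
data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
n = len(data)

mean = statistics.fmean(data)
sum_sq_dev = sum((x - mean) ** 2 for x in data)

var_mle = sum_sq_dev / n             # 2nd sample central moment (ML estimate)
var_unbiased = sum_sq_dev / (n - 1)  # sample variance s^2 (unbiased)

print(var_mle)       # 4.0
print(var_unbiased)  # about 4.571

# The standard library exposes both conventions directly.
assert abs(var_mle - statistics.pvariance(data)) < 1e-12
assert abs(var_unbiased - statistics.variance(data)) < 1e-12
```

The two estimates differ by the factor (n − 1) / n, so the gap shrinks as the sample grows.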
