PPT: Maximum Likelihood (ML), Expectation Maximization (EM), Pieter Abbeel

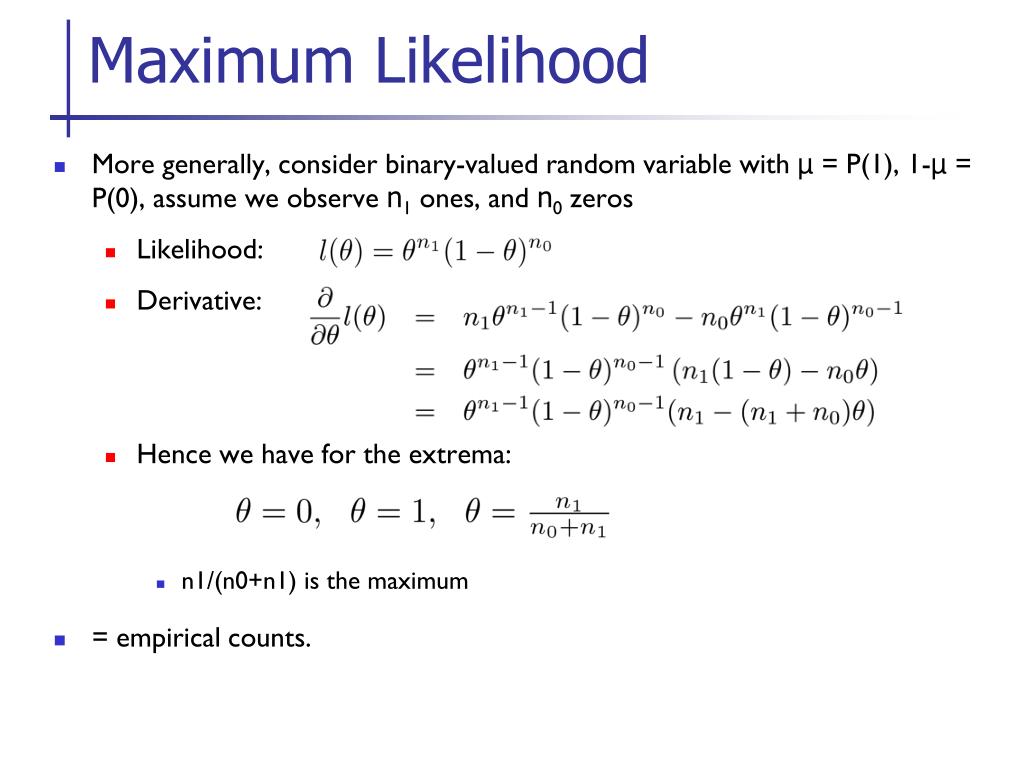

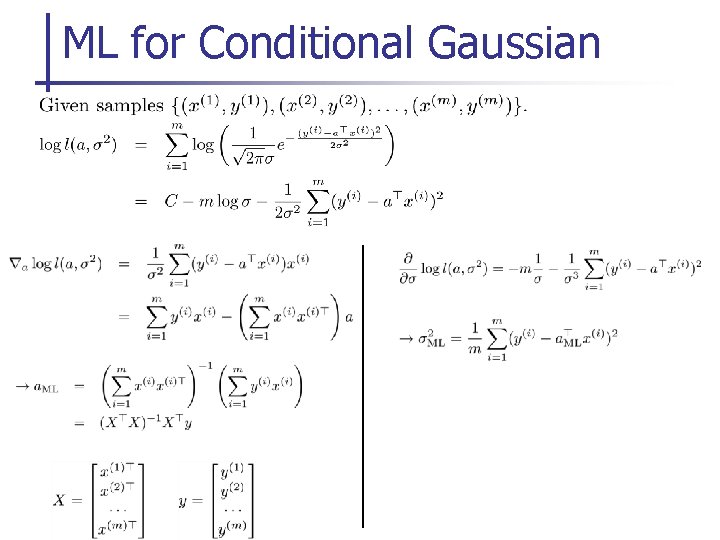

Learn about maximum likelihood estimation (MLE) and expectation maximization (EM) for parameter estimation in Bayesian networks and in general problems, covering concepts, examples, and comparisons with MAP estimation. Includes applications in gene expression regulation. Maximum Likelihood (ML), Expectation Maximization (EM). Pieter Abbeel, UC Berkeley EECS. Many slides adapted from Thrun, Burgard and Fox, Probabilistic Robotics.
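As a small illustration of the MLE versus MAP comparison mentioned above, here is a minimal sketch for a coin-flip (Bernoulli) model. The function names and the Beta(2, 2) prior are assumptions chosen for illustration; they do not come from the slides.

```python
import math


def bernoulli_log_likelihood(p, heads, flips):
    """Log-likelihood of observing `heads` successes in `flips` trials for 0 < p < 1."""
    return heads * math.log(p) + (flips - heads) * math.log(1.0 - p)


def bernoulli_mle(heads, flips):
    """Maximum likelihood estimate: the observed fraction of heads."""
    return heads / flips


def bernoulli_map(heads, flips, alpha=2.0, beta=2.0):
    """MAP estimate under an assumed Beta(alpha, beta) prior with alpha, beta > 1.

    This is the mode of the Beta(alpha + heads, beta + flips - heads) posterior.
    """
    return (heads + alpha - 1.0) / (flips + alpha + beta - 2.0)


if __name__ == "__main__":
    # With only three flips, all heads, MLE commits fully to p = 1,
    # while the prior pulls the MAP estimate back toward 0.5.
    print(bernoulli_mle(3, 3), bernoulli_map(3, 3))
```

With little data the two estimates differ noticeably; as the number of flips grows, the prior's influence shrinks and the MAP estimate approaches the MLE.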

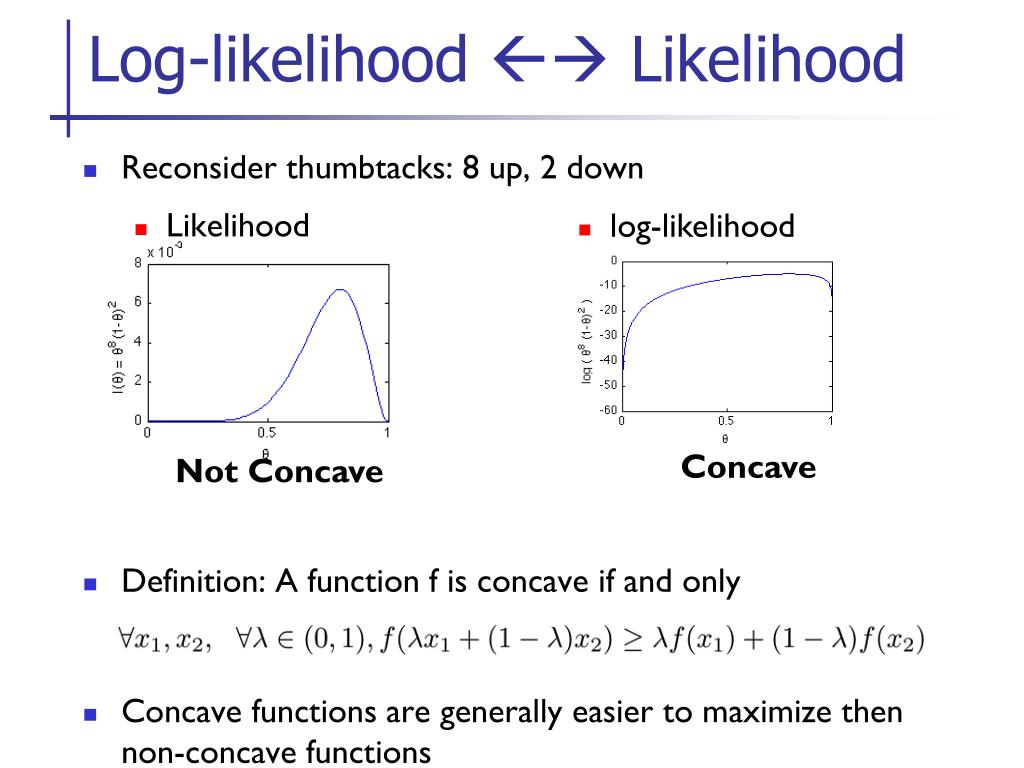

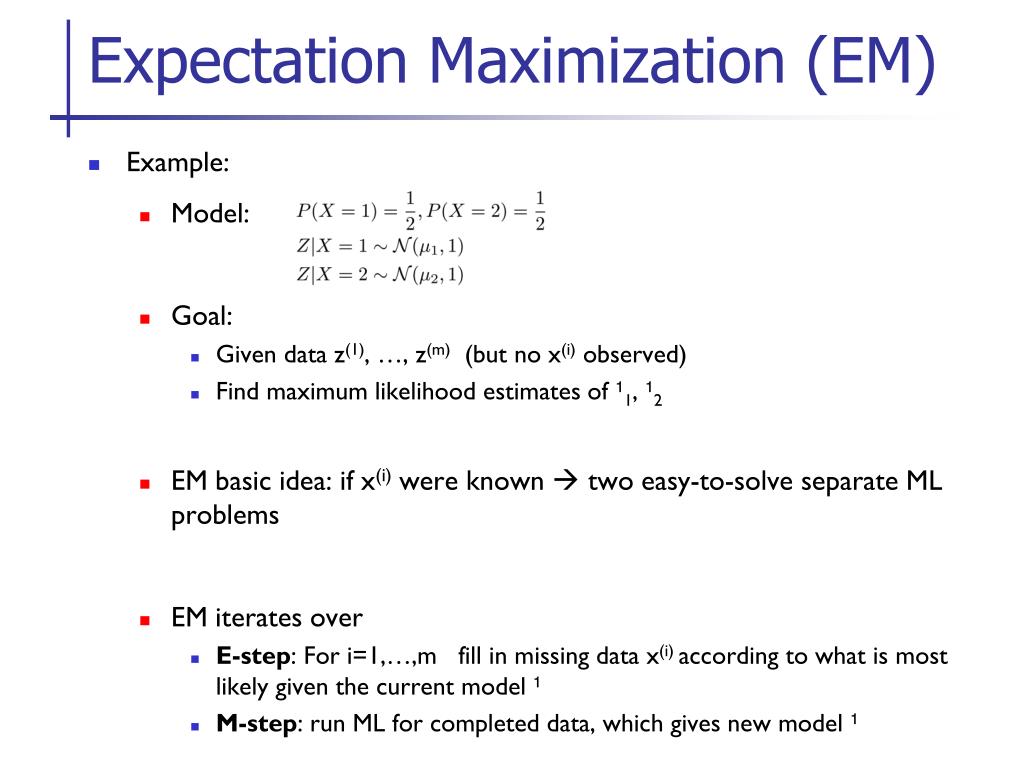

The document discusses maximum likelihood estimation. It begins by explaining that maximum likelihood chooses the parameter values that make the observed data most probable under a statistical model. EM issues: under mild assumptions, EM is guaranteed never to decrease the likelihood from one E-M iteration to the next, and hence will converge. But it may converge to a local, not global, maximum. The EM algorithm alternates between computing a lower bound on the log-likelihood for the current parameter values and maximizing this bound to obtain the new parameter values.
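The alternation between the two steps, and the fact that the log-likelihood does not go down between iterations, can be sketched on a one-dimensional Gaussian mixture. This is a minimal illustration, not the slides' own example: the mixture model, the synthetic data, the helper names (`make_data`, `em_gmm`) and the starting means are all assumptions.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of a 1-D Gaussian with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def mixture_log_likelihood(data, weights, means, variances):
    """Sum over data points of log sum_k w_k * N(x | mean_k, var_k)."""
    return sum(
        math.log(sum(w * normal_pdf(x, m, v)
                     for w, m, v in zip(weights, means, variances)))
        for x in data
    )


def make_data(seed, n_per_cluster=200):
    """Hypothetical synthetic data: two well-separated unit-variance clusters."""
    rng = random.Random(seed)
    return ([rng.gauss(-2.0, 1.0) for _ in range(n_per_cluster)]
            + [rng.gauss(3.0, 1.0) for _ in range(n_per_cluster)])


def em_gmm(data, means, n_iters=30):
    """Run EM from the given starting means; return parameters and the log-likelihood trace."""
    k = len(means)
    means = list(means)
    weights = [1.0 / k] * k
    variances = [1.0] * k
    trace = [mixture_log_likelihood(data, weights, means, variances)]
    for _ in range(n_iters):
        # E-step: posterior probability of each component for each point.
        resp = []
        for x in data:
            joint = [w * normal_pdf(x, m, v)
                     for w, m, v in zip(weights, means, variances)]
            total = sum(joint)
            resp.append([j / total for j in joint])
        # M-step: re-estimate weights, means and variances from the soft assignments.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / len(data)
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj
            variances[j] = max(var, 1e-6)  # small floor to avoid a collapsed component
        trace.append(mixture_log_likelihood(data, weights, means, variances))
    return weights, means, variances, trace


if __name__ == "__main__":
    weights, means, variances, trace = em_gmm(make_data(0), means=[-1.0, 1.0])
    print(means, trace[0], trace[-1])
```

Running this from different starting means is a simple way to see the local-maximum issue: the trace is non-decreasing from any start, but the final value it settles at can depend on the initialization.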

MLE with hidden (latent) variables: expectation maximization. The general problem involves data, parameters, and hidden variables z. For MLE we want to maximize the log-likelihood, but the sum over z inside the log gives a complicated expression for the ML solution. The expectation maximization (EM) algorithm is used in machine learning to estimate missing data and optimize parameters when dealing with latent variables. It consists of an expectation step (E-step), which estimates the missing values given the current parameters, and a maximization step (M-step), which updates the parameters; the two steps repeat until convergence. Perform a “line search” to find the setting that achieves the highest log-likelihood score.
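The difficulty with the sum over z inside the log, and the lower bound that EM works with instead, can be written out as follows. The notation is assumed here rather than taken from the slides: x is the observed data, θ the parameters, z the hidden variables, and q any distribution over z.

$$
\log p(x \mid \theta)
= \log \sum_{z} p(x, z \mid \theta)
= \log \sum_{z} q(z)\, \frac{p(x, z \mid \theta)}{q(z)}
\;\ge\; \sum_{z} q(z) \log \frac{p(x, z \mid \theta)}{q(z)}
$$

The inequality is Jensen's inequality applied to the concave logarithm. In this notation, the E-step sets $q(z) = p(z \mid x, \theta^{(t)})$, which makes the bound tight at the current parameters $\theta^{(t)}$, and the M-step sets $\theta^{(t+1)} = \arg\max_{\theta} \sum_{z} q(z) \log p(x, z \mid \theta)$. Because the bound touches the log-likelihood at $\theta^{(t)}$ and is then maximized, the log-likelihood cannot decrease from one iteration to the next.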

