Position Embedding

RoFormer Enhanced Transformer With Rotary Positional Embedding Llama 2

This blog post examines positional encoding techniques, emphasizing their importance in traditional transformers and their use with 2D data in vision transformers (ViT). Position embeddings tell the model where each word sits relative to the others, which helps it identify entity boundaries and the role each entity plays in context.

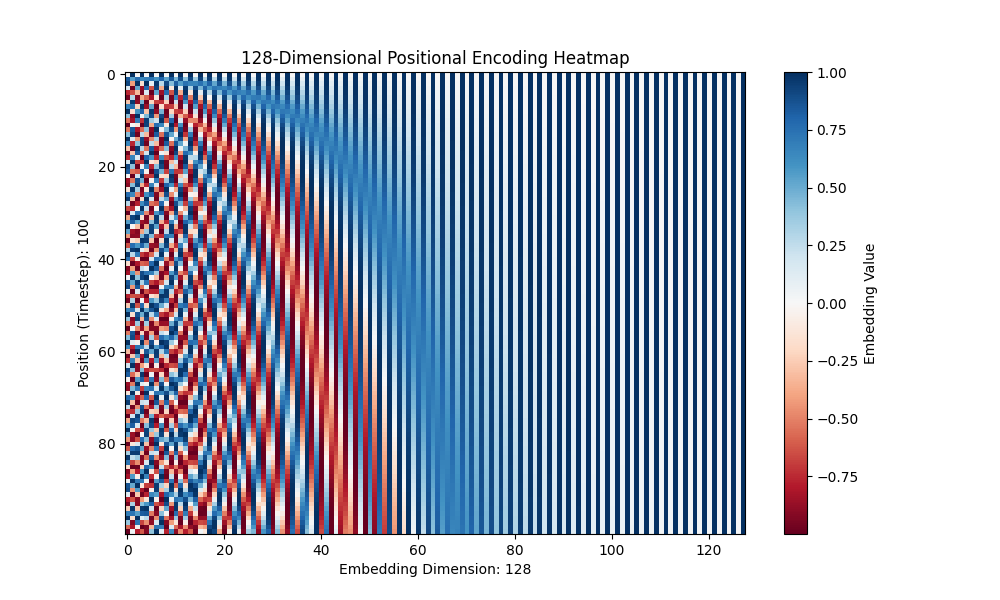

Understanding Transformer Sinusoidal Position Embedding, by Hiroaki

Transformer models use neither recurrence nor convolution, so they need positional encoding to recover token order. Positional encoding can be implemented and visualized with sine and cosine functions in NumPy. Plotting selected embedding dimensions across positions shows how positional information is encoded differently across the dimensions of the embedding space.
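As a minimal sketch of the sine and cosine encoding mentioned above, the following NumPy function builds one encoding vector per position. The function name, the `base` value of 10000, and the convention of placing sines in even columns and cosines in odd columns are assumptions chosen for illustration, not taken from the posts referenced here.

```python
import numpy as np


def sinusoidal_positional_encoding(num_positions: int, d_model: int,
                                   base: float = 10000.0) -> np.ndarray:
    """Return a (num_positions, d_model) array of sine/cosine encodings."""
    if d_model % 2 != 0:
        raise ValueError("this sketch assumes an even d_model")
    positions = np.arange(num_positions)[:, np.newaxis]       # (P, 1)
    # One frequency per sine/cosine pair: base ** (-2i / d_model).
    inv_freq = base ** (-np.arange(0, d_model, 2) / d_model)  # (d_model / 2,)
    angles = positions * inv_freq                             # (P, d_model / 2)
    pe = np.zeros((num_positions, d_model))
    pe[:, 0::2] = np.sin(angles)  # even columns hold sines
    pe[:, 1::2] = np.cos(angles)  # odd columns hold cosines
    return pe


pe = sinusoidal_positional_encoding(num_positions=50, d_model=16)
print(pe.shape)   # (50, 16)
print(pe[0, :4])  # position 0: sines are 0, cosines are 1
```

Low-index columns oscillate quickly with position and high-index columns slowly, which is the per-dimension difference a plot of selected columns of `pe` would show.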

There is a range of methods for incorporating position information into transformer models: position embeddings, manipulating attention matrices, or alternatives such as preprocessing the input with a recurrent neural network. Rotary positional embedding, usually abbreviated RoPE (rotary position embedding), is an approach that combines some benefits of both absolute and relative embeddings.

Two relative positional embedding schemes are the T5 bias (Raffel et al., "Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer", JMLR 2020) and ALiBi. Both are described as generalizing better to sequences of unseen lengths.

Positional embeddings let transformer models encode context and distance information in sequences; understanding them involves the design criteria, the trigonometric trick, and the underlying intuition. In computer vision tasks, positional embeddings (PE) encode the order of tokens in multi-head self-attention (MHSA), and the different PE methods can be studied through theory, code, and visualizations. This blog explores the mathematical concepts behind positional embeddings, focusing on sinusoidal positional embeddings, and works through a detailed example.
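To illustrate how rotary positional embedding mixes absolute and relative behavior, here is a hedged NumPy sketch. Each vector is rotated by an angle that depends on its absolute position, yet the dot product between a rotated query and a rotated key depends only on the offset between their positions. The function name `apply_rope`, the interleaved pairing of features, and the `base` of 10000 are assumptions for this example; real implementations may pair features differently.

```python
import numpy as np


def apply_rope(x: np.ndarray, positions: np.ndarray,
               base: float = 10000.0) -> np.ndarray:
    """Rotate consecutive feature pairs of x (seq_len, d) by position-dependent angles."""
    seq_len, d = x.shape
    if d % 2 != 0:
        raise ValueError("this sketch assumes an even feature dimension")
    inv_freq = base ** (-np.arange(0, d, 2) / d)     # (d / 2,)
    angles = positions[:, None] * inv_freq[None, :]  # (seq_len, d / 2)
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[:, 0::2], x[:, 1::2]
    out = np.empty_like(x, dtype=float)
    # Standard 2D rotation applied to each (even, odd) feature pair.
    out[:, 0::2] = x_even * cos - x_odd * sin
    out[:, 1::2] = x_even * sin + x_odd * cos
    return out


rng = np.random.default_rng(0)
q = rng.normal(size=(1, 8))  # one query vector
k = rng.normal(size=(1, 8))  # one key vector


def score(query_pos: int, key_pos: int) -> float:
    """Attention logit between q at query_pos and k at key_pos after rotation."""
    q_rot = apply_rope(q, np.array([query_pos]))
    k_rot = apply_rope(k, np.array([key_pos]))
    return float(np.sum(q_rot * k_rot))


# Same offset (2) at different absolute positions gives the same logit.
print(np.isclose(score(3, 1), score(10, 8)))
```

Because the rotation is orthogonal, vector norms are unchanged, so only the angle between query and key carries the positional signal.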

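The ALiBi scheme mentioned above can be sketched as a bias added to attention logits that grows linearly with the distance between query and key, with a different slope per head. The snippet below is an illustrative NumPy sketch, not a reference implementation: the function names are hypothetical, the slope formula assumes a power-of-two number of heads, and a causal (left-to-right) mask is assumed.

```python
import numpy as np


def alibi_slopes(num_heads: int) -> np.ndarray:
    """Geometric per-head slopes 2^(-8/n), 2^(-16/n), ..., 2^(-8)."""
    return 2.0 ** (-8.0 * np.arange(1, num_heads + 1) / num_heads)


def alibi_bias(seq_len: int, num_heads: int) -> np.ndarray:
    """Return a (num_heads, seq_len, seq_len) causal bias to add to attention logits."""
    pos = np.arange(seq_len)
    distance = pos[:, None] - pos[None, :]  # query index minus key index
    slopes = alibi_slopes(num_heads)[:, None, None]
    bias = -slopes * distance[None, :, :]   # farther keys are penalized more
    # Future keys (negative distance) are masked out entirely.
    return np.where(distance[None, :, :] >= 0, bias, -np.inf)


bias = alibi_bias(seq_len=4, num_heads=8)
print(bias.shape)  # (8, 4, 4)
print(bias[0])     # head 0 uses slope 0.5
```

Since the bias depends only on the distance between positions and has no learned per-position parameters, the same function can be evaluated for any `seq_len`, which is the property behind the claim about unseen sequence lengths.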

