Performance on x64: Cache Blocking and Matrix Blocking

Matrix Multiplication and Cache Blocking

In this video we'll start by talking about cache lines. After that, we look at a technique called blocking, where we split a large problem into smaller ones.

Overview

In this assignment, you’ll explore the effects on performance of writing “cache-friendly” code, that is, code that exhibits good spatial and temporal locality. The focus will be on implementing matrix multiplication.
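
To make "cache-friendly" concrete before introducing blocking, here is a minimal C sketch, assuming square n×n matrices of doubles stored row-major in flat arrays; the function names `matmul_ijk` and `matmul_ikj` are hypothetical. Both compute C = A·B and differ only in loop order. In the i-j-k order the innermost loop walks down a column of B, so consecutive reads usually land on different cache lines; in the i-k-j order the innermost loop walks along rows of B and C, using every element of each cache line it loads.

```c
#include <stddef.h>

/* Naive i-j-k order: the inner loop reads B[k][j] with stride n,
   so consecutive iterations usually touch different cache lines. */
void matmul_ijk(size_t n, const double *A, const double *B, double *C)
{
    for (size_t i = 0; i < n; i++)
        for (size_t j = 0; j < n; j++) {
            double sum = 0.0;
            for (size_t k = 0; k < n; k++)
                sum += A[i * n + k] * B[k * n + j];
            C[i * n + j] = sum;
        }
}

/* i-k-j order: the inner loop reads row k of B and updates row i of C
   with unit stride, so each loaded cache line is fully used. */
void matmul_ikj(size_t n, const double *A, const double *B, double *C)
{
    for (size_t i = 0; i < n * n; i++)
        C[i] = 0.0;
    for (size_t i = 0; i < n; i++)
        for (size_t k = 0; k < n; k++) {
            double a = A[i * n + k]; /* reused across the whole inner loop */
            for (size_t j = 0; j < n; j++)
                C[i * n + j] += a * B[k * n + j];
        }
}
```

Both versions perform the same number of multiplies and adds, so any timing difference between them comes from the memory access pattern alone.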

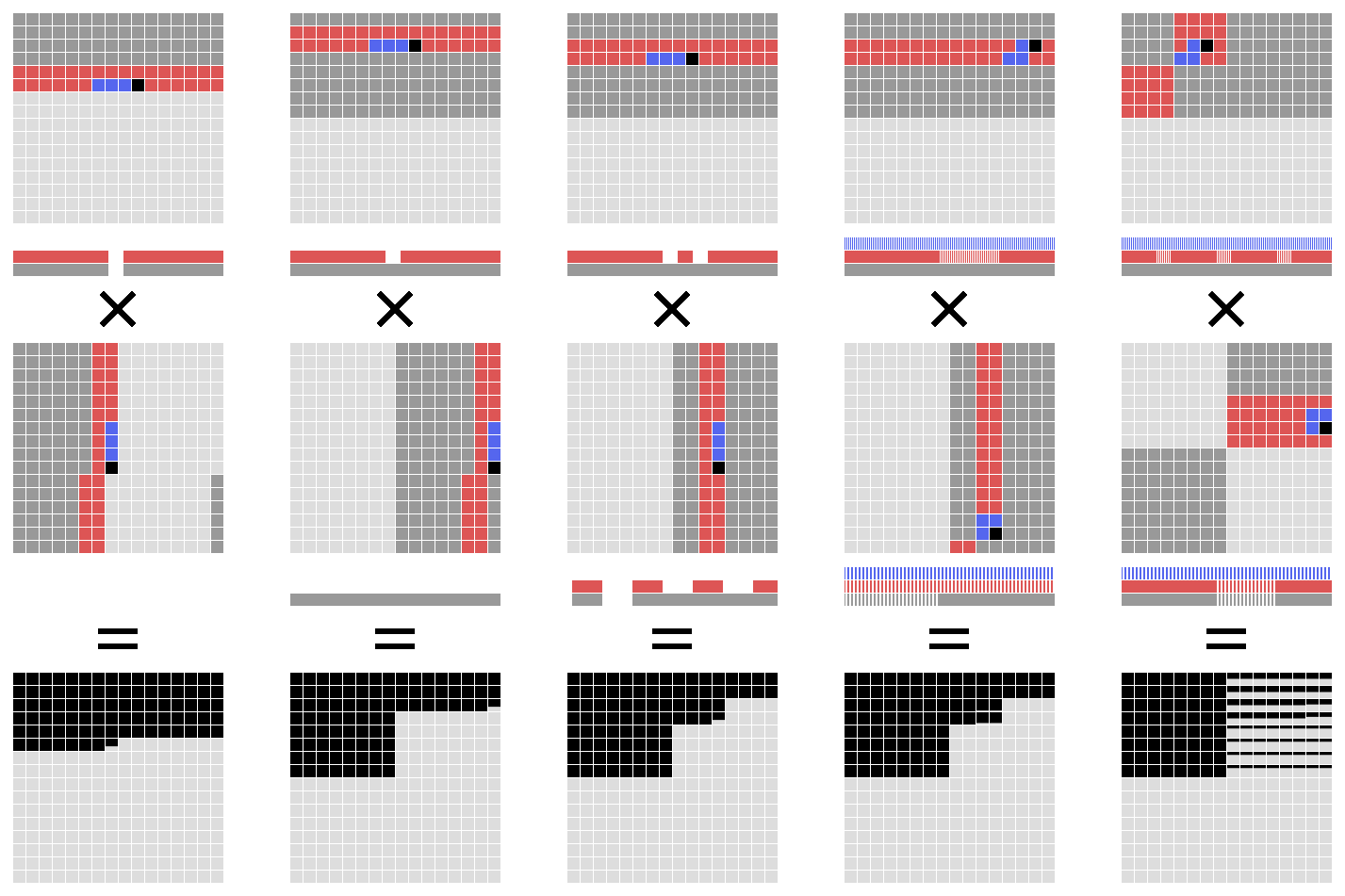

Non-Blocking vs. Blocking with Various Cache Configurations

In this talk, we will explore different optimization techniques for matrix multiplication, from naive implementations to highly tuned versions that leverage modern hardware features. We will cover key performance-enhancing strategies such as loop unrolling, cache blocking, SIMD vectorization, parallelization using threads, and more.

The program I used to benchmark has three functions: naive multiplication, in-place transpose of B, and in-place transpose of B with blocking. I ran it with n = 4000 and block sizes 1, 10, 20, 50, 100, and 200.

More formally, cache blocking is a technique that attempts to reduce the cache miss rate by improving the temporal and/or spatial locality of memory accesses. In the case of matrix transposition, we consider 2D blocking, which performs the transposition one submatrix at a time.

Array size vs. cache miss analysis (thrashing), a Ripes demonstration: a complete experiment showing how array (matrix) size interacts with cache capacity, why performance suddenly collapses when the working set exceeds the cache size, and how loop tiling restores locality.
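
As a hedged illustration of the transposed variants and of 2D blocking, here is a minimal C sketch, assuming a square n×n row-major matrix of doubles; `transpose_inplace_blocked`, `matmul_transposed`, and the tile-size parameter `bs` are hypothetical names, not the author's benchmark code. The transpose swaps elements across the diagonal one bs×bs tile pair at a time, so a tile and its mirror image are small enough to stay in cache while being swapped. Once B has been transposed, each dot product reads a row of A and a row of the transposed B, both with unit stride.

```c
#include <stddef.h>
#include <assert.h>

static size_t min_sz(size_t a, size_t b) { return a < b ? a : b; }

/* In-place transpose: swap M[i][j] and M[j][i] for every i < j,
   visiting the upper triangle in bs x bs tiles so that tile (ii, jj)
   and its mirror (jj, ii) are both small enough to stay in cache. */
void transpose_inplace_blocked(size_t n, double *M, size_t bs)
{
    assert(bs > 0);
    for (size_t ii = 0; ii < n; ii += bs)
        for (size_t jj = ii; jj < n; jj += bs) {
            size_t iend = min_sz(ii + bs, n);
            size_t jend = min_sz(jj + bs, n);
            for (size_t i = ii; i < iend; i++) {
                /* On a diagonal tile, start just past the diagonal so
                   each pair is swapped exactly once. */
                size_t jstart = (ii == jj) ? i + 1 : jj;
                for (size_t j = jstart; j < jend; j++) {
                    double tmp = M[i * n + j];
                    M[i * n + j] = M[j * n + i];
                    M[j * n + i] = tmp;
                }
            }
        }
}

/* C = A * B, given Bt = transpose of B: the inner loop streams
   row i of A and row j of Bt, both with unit stride. */
void matmul_transposed(size_t n, const double *A, const double *Bt, double *C)
{
    for (size_t i = 0; i < n; i++)
        for (size_t j = 0; j < n; j++) {
            double sum = 0.0;
            for (size_t k = 0; k < n; k++)
                sum += A[i * n + k] * Bt[j * n + k];
            C[i * n + j] = sum;
        }
}
```

In this sketch, `bs = 1` reduces to the plain element-by-element in-place transpose, so sweeping the tile size from 1 upward compares the unblocked and blocked cases with the same code.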

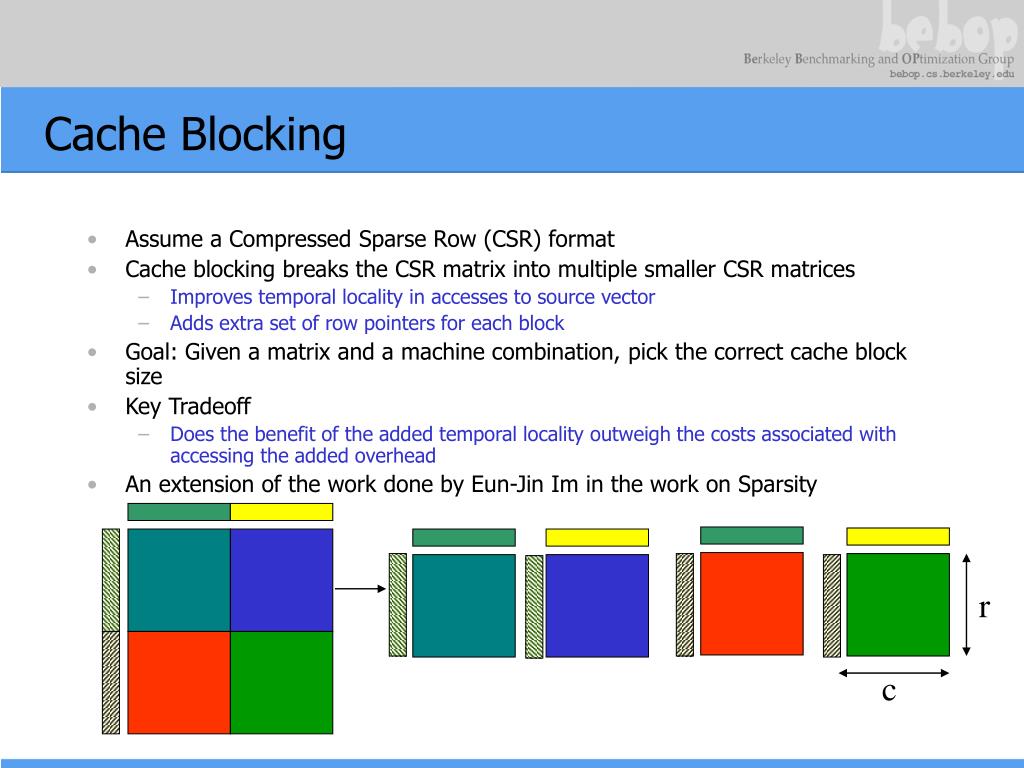

Cache Blocking Performance on Different Architectures as a Function of

This document explains cache blocking techniques for optimizing matrix-matrix multiplication (GEMM) operations. Cache blocking is a critical performance optimization that reduces the average cost of memory accesses by improving data locality, so that more accesses hit in cache.

What is the performance of this code? What do you expect? Are loads and stores affected by cache locality in the same way? What went wrong? Ask questions!

If we can restructure the product of two large matrices into products of smaller matrices, then we can tune the small matrix size so that things fit nicely in cache. In this post, we explore how low-level implementation details, like loop ordering and data layout, can dramatically change performance on real hardware, even when the algorithmic complexity remains the same.

This post is licensed under CC BY 4.0 by the author.
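
The restructuring into products of smaller matrices can be sketched in C as follows, assuming square n×n row-major matrices of doubles; `matmul_blocked` and its tile-size parameter `bs` are hypothetical names chosen for illustration. The three outer loops step over tiles, and the three inner loops run an ordinary small matrix multiply on one tile of A, one tile of B, and one tile of C, with `bs` as the knob that is tuned so those tiles fit in cache.

```c
#include <stddef.h>
#include <assert.h>

static size_t min_sz(size_t a, size_t b) { return a < b ? a : b; }

/* Blocked (tiled) C = A * B for n x n row-major matrices of doubles.
   The outer loops pick bs x bs tiles; the inner loops multiply one tile
   of A by one tile of B and accumulate into one tile of C, so roughly
   3 * bs * bs doubles are being reused at any one time. */
void matmul_blocked(size_t n, const double *A, const double *B,
                    double *C, size_t bs)
{
    assert(bs > 0);
    for (size_t i = 0; i < n * n; i++)
        C[i] = 0.0;
    for (size_t ii = 0; ii < n; ii += bs)
        for (size_t kk = 0; kk < n; kk += bs)
            for (size_t jj = 0; jj < n; jj += bs) {
                size_t iend = min_sz(ii + bs, n);
                size_t kend = min_sz(kk + bs, n);
                size_t jend = min_sz(jj + bs, n);
                /* Small tile product in i-k-j order, so the innermost
                   loop is unit-stride in both B and C. */
                for (size_t i = ii; i < iend; i++)
                    for (size_t k = kk; k < kend; k++) {
                        double a = A[i * n + k];
                        for (size_t j = jj; j < jend; j++)
                            C[i * n + j] += a * B[k * n + j];
                    }
            }
}
```

As a rough rule for picking `bs`: three bs×bs tiles of 8-byte doubles occupy 24·bs² bytes. Assuming a 32 KiB L1 data cache (an assumption; check the target CPU), 24·bs² ≤ 32768 gives bs ≤ 36, so bs = 32 (24 KiB of tiles) is a plausible starting point. In practice the best value is found empirically by sweeping several sizes.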


